I have trained Flux Dev on my SDXL dataset and merged in loras to correct censored anatomy and excessive bokeh/blurred backgrounds.

V12 - Merged with the SRPO model and some loras. It looks a bit cleaner than previous models; you might want to add a grain lora if you want that amateur look.

I think it is not as good at NSFW, so you might want to use my lora for that: https://civarchive.com/models/652791/jibs-flux-nipple-fix

Jib Mix Flux v8-Flash! SVDQuant-4bit

Update 06/04/2025 - NOW WITH CONTROLNET AND NATIVE LORA SUPPORT!

This new SVDQuant format currently requires ComfyUI and an Nvidia RTX 2000 series or newer GPU.

I recommend:

Guidance scale = 2.5-3.5

Sampler = dpmpp_2m.

Scheduler = sgm_uniform, beta or custom sigmas.
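If you script your generations, the recommended settings above can be kept in one place and checked before each run. This is a minimal Python sketch; the class and constant names are hypothetical, and "custom_sigmas" is only an illustrative label for a hand-built sigma schedule, not a ComfyUI scheduler name.

```python
from dataclasses import dataclass

# Recommended values from the notes above (v8-Flash! SVDQuant-4bit).
GUIDANCE_RANGE = (2.5, 3.5)
RECOMMENDED_SAMPLERS = {"dpmpp_2m"}
# "custom_sigmas" is a hypothetical label standing in for a custom sigma schedule.
RECOMMENDED_SCHEDULERS = {"sgm_uniform", "beta", "custom_sigmas"}


@dataclass
class GenSettings:
    """Hypothetical container for per-run sampling settings."""
    guidance: float
    sampler: str
    scheduler: str

    def warnings(self) -> list[str]:
        """List every setting that falls outside the recommendations."""
        issues = []
        low, high = GUIDANCE_RANGE
        if not low <= self.guidance <= high:
            issues.append(f"guidance {self.guidance} is outside {low}-{high}")
        if self.sampler not in RECOMMENDED_SAMPLERS:
            issues.append(f"sampler {self.sampler!r} is not the recommended one")
        if self.scheduler not in RECOMMENDED_SCHEDULERS:
            issues.append(f"scheduler {self.scheduler!r} is not recommended")
        return issues


if __name__ == "__main__":
    print(GenSettings(3.0, "dpmpp_2m", "beta").warnings())  # []
    print(GenSettings(5.0, "euler", "karras").warnings())   # three warnings
```

An empty list means the run matches the recommendations; anything else is just a reminder, since loras can shift the best values.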

This model uses a new format that is a little tricky to set up at first, but it is worth it because you can make Flux images super fast (at 10 steps: 5 seconds on a 3090, 2.5 seconds on a 4090 and 0.8 seconds on a 5090!).

To get it to work, you need to install the Nunchaku project (following the instructions there) and the Nunchaku ComfyUI custom nodes.

Download and unzip the archive from Civitai to: \comfyui.git\app\models\diffusion_models\jib-mix-svdq\
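The unzip step can also be scripted. This is a minimal Python sketch using the standard `zipfile` module, assuming ComfyUI lives in a `comfyui.git\app` folder as in the path above; the function name is hypothetical and the archive name is whatever you downloaded.

```python
import tempfile
import zipfile
from pathlib import Path


def install_svdq_archive(archive: Path, comfy_app: Path) -> Path:
    """Extract the downloaded archive into models/diffusion_models/jib-mix-svdq.

    `comfy_app` is the ComfyUI app folder (e.g. C:\\comfyui.git\\app); the
    target folder is created if it does not exist yet.
    """
    target = comfy_app / "models" / "diffusion_models" / "jib-mix-svdq"
    target.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(archive) as zf:
        zf.extractall(target)
    return target


if __name__ == "__main__":
    # Dry run against a throwaway archive in a temporary folder.
    with tempfile.TemporaryDirectory() as tmp:
        tmp_path = Path(tmp)
        demo = tmp_path / "demo.zip"
        with zipfile.ZipFile(demo, "w") as zf:
            zf.writestr("placeholder.txt", "not a real model file")
        print(install_svdq_archive(demo, tmp_path / "app"))
```

For a real install you would call something like `install_svdq_archive(Path("downloaded.zip"), Path(r"C:\comfyui.git\app"))`, where both paths are placeholders for your own.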

This is the Nunchaku workflow I am using: https://civarchive.com/models/617562

Thanks a lot to theunlikely for running the quantization, which takes 6 hours on an H100 GPU!

Unfortunately, the quantisation seems to reduce the NSFW capabilities, but they can be brought back with my NSFW lora. Use the Nunchaku lora loader from the node pack; it will auto-convert the lora the first time it is used (and the converted lora can optionally be saved).

There is currently a size limit: going above about 2 million pixels (1024 x 2048) causes a crash in ComfyUI, so if you hit that, lower your generation/upscale resolution. The developers say a fix will be released this week.
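If you script resolutions, a quick check against this limit avoids the crash. This is a minimal Python sketch that shrinks a resolution while keeping its aspect ratio. It assumes the ceiling is exactly 1024 x 2048 = 2,097,152 pixels (the note only says "above 2 million") and that sides should stay multiples of 16, a common choice for Flux (8x VAE downsampling times 2x2 latent patches); the function name is hypothetical.

```python
import math

# Assumption: treat 1024 x 2048 pixels as the largest safe size (inclusive).
MAX_PIXELS = 1024 * 2048


def clamp_resolution(width: int, height: int,
                     max_pixels: int = MAX_PIXELS,
                     multiple: int = 16) -> tuple[int, int]:
    """Scale (width, height) down, keeping aspect ratio, to fit max_pixels.

    Sizes already within the limit are returned unchanged. Otherwise both
    sides are scaled by the same factor and rounded down to a multiple of
    `multiple`, so the result never exceeds max_pixels.
    """
    if width * height <= max_pixels:
        return width, height
    scale = math.sqrt(max_pixels / (width * height))
    new_w = int(width * scale) // multiple * multiple
    new_h = int(height * scale) // multiple * multiple
    return new_w, new_h


if __name__ == "__main__":
    print(clamp_resolution(1536, 1024))  # (1536, 1024), already under the limit
    print(clamp_resolution(2048, 1536))  # (1664, 1248)
```

For example, 2048x1536 (about 3.1 MP) comes back as 1664x1248, about 2.08 MP, which stays under the assumed ceiling.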

Jib Mix Flux Version 8 AccentuEight

Much better skin texture than Jib Mix Flux V7, but without the bad "Flux lines" of Jib Mix V6.

NSFW anatomy is slightly lacking, so I have uploaded a separate NSFW model (with very slightly reduced detail and art styles), or loras can be used.

The V8 Pruned Model nf4 (6.33 GB) file is actually the Q4_0.gguf.

The V8 Pruned Model bf16 (11.84 GB) file is actually the Q8_0.gguf.
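The file sizes are a quick way to tell which quantization a download really is. This is a rough Python sketch that assumes about 12 billion weights in the Flux Dev transformer, that the listed sizes are GiB, and the standard GGUF block layouts (Q8_0: 32 int8 values plus one fp16 scale per block, 8.5 bits per weight; Q4_0: 32 4-bit values plus one fp16 scale, 4.5 bits per weight). Real GGUF files keep some small tensors at higher precision, so treat the numbers as estimates; the function names are hypothetical.

```python
# Approximate storage cost per weight, in bits.
# Q8_0: 32 x 8-bit values + one fp16 scale = 34 bytes per 32 weights.
# Q4_0: 32 x 4-bit values + one fp16 scale = 18 bytes per 32 weights.
BITS_PER_WEIGHT = {"bf16": 16.0, "fp8": 8.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Assumption: roughly 12 billion weights in the Flux Dev transformer.
FLUX_DEV_PARAMS = 12e9


def estimate_gib(fmt: str, params: float = FLUX_DEV_PARAMS) -> float:
    """Estimated file size in GiB if every weight used format `fmt`."""
    return params * BITS_PER_WEIGHT[fmt] / 8 / 2**30


def guess_format(size_gib: float, params: float = FLUX_DEV_PARAMS) -> str:
    """Return the format whose estimated size is closest to `size_gib`."""
    return min(BITS_PER_WEIGHT,
               key=lambda fmt: abs(estimate_gib(fmt, params) - size_gib))


if __name__ == "__main__":
    for fmt in BITS_PER_WEIGHT:
        print(f"{fmt:5s} ~{estimate_gib(fmt):.2f} GiB")
    print(guess_format(6.33), guess_format(11.84))  # Q4_0 Q8_0
```

The estimates come out near 6.3 GiB for Q4_0 and 11.9 GiB for Q8_0, which line up with the 6.33 GB and 11.84 GB files, while a plain fp8 file would be about 11.2 GiB.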

15/04/2025 - The new fp16 version of V8 gives very slightly better detail, anatomy and image consistency, if you have the VRAM for it.

Jib Mix Flux Version 8 AccentuEight NSFW

Better female anatomy with a slight loss in skin details.

The V8 NSFW Pruned Model nf4 (6.33 GB) file is actually a Q4_0.gguf.

Jib Mix Flux Version 7.8 Clear Text Focus

This version focuses on having more readable text generation.

The skin texture may generally not be as realistic as in Jib Mix v6 or v7.

It has less nipple flashing through clothes.

The 2000s Analog Core (at 0.6 weight) removes some of the plastic "Fluxness" of this merge without hurting the text.

Jib Mix Flux Version 7.2 Pixel Heaven

7/7.2 mainly fixes the "Flux lines" caused by merging some affected loras. This has caused some drop in photorealism but an increase in drawn/concept ability and general detail.

It also tones down excessive amounts of freckles (especially red freckles).

The V7.2 Pruned Model nf4 (6.33 GB) file is actually the Q4_0.gguf.

The V7.2 Pruned Model bf16 (11.84 GB) file is actually the Q8_0.gguf.

Jib Mix Flux version 7 PixelHeaven - beta

The main change is that it removes the "Flux lines" that plagued V6 and, to some extent, the original Flux Dev.

It may be overdoing the freckles a lot, but I wanted to see what people think of it, hence the Beta name.

I really recommend using the Movie Portrait lora at quite a high weight for a less plastic look, but I couldn't merge it into the model because, in testing, it was one of the loras that can cause "Flux lines".

Jib Mix Flux Version 6.1 Real Pix Fixed

6.1 mainly tries to fix small stubby hands or massively distorted hands/arms.

(If you still have problems with hands and you are using a low step count of around 8, increasing the step count usually fixes it. Alternatively, applying the Hyper Flux lora at a low weight (< 0.10) pretty much always fixes hands, although you may find it lowers detail.)

V6.1 is more realistic when using CFG, with more natural faces.

I think it actually does art/cartoon styles a bit better than the original v6 as well.

The V6.1 Pruned Model nf4 (6.33 GB) file is actually the Q4_0.gguf.

The V6.1 Pruned Model bf16 (11.84 GB) file is actually the Q8_0.gguf.

V6 still has the most detailed backgrounds in my testing.

The V6 Pruned Model nf4 (11.84 GB) file is actually a Q8_0.gguf.

The V6 Pruned Model bf16 (6.33 GB) file is actually a Q4_0.gguf.

Jib Mix Flux Version 5 - It's Alive:

Improved photorealism. (Less likely to default to painting styles)

Fixed issues with wonky text.

More detailed backgrounds

Reconfigured NSFW slightly

fp8 V4 Canvas Galore:

Better fine details, much better artistic styles, and improved NSFW capabilities.

fp8 V3.0 V3.1 - Clarity Key

I initially uploaded the wrong model file on 21/10/2024. It was very similar, but the new file (uploaded 22/10/2024) has slightly better contrast and was used for the sample images.

This version improves detail levels and has a more cinematic feel, like the original Flux Dev.

Reduced the "Flux chin".

Settings - I use a Flux Guidance of 2.9

Sampler = dpmpp_2m.

Scheduler = beta or custom sigmas.

FP8 V2 - Electric Boogaloo: Better NSFW and skin/image quality.

Settings:

I find the best settings are a guidance of 2.5 and a CFG of 2.8 (although CFG does slow down generation).

When using loras, these values will likely need to change.

Version: mx5 GGUF 7GB v1

This is a quantized version of my Flux model to run on lower-end graphics cards.

Thanks to https://civarchive.com/user/chrisgoringe243 for quantizing this; it is really good quality for such a small model.

Larger-sized GGUF versions for mid-range graphics cards are available here: https://huggingface.co/ChrisGoringe/MixedQuantFlux/tree/main

Version 2 - fp16:

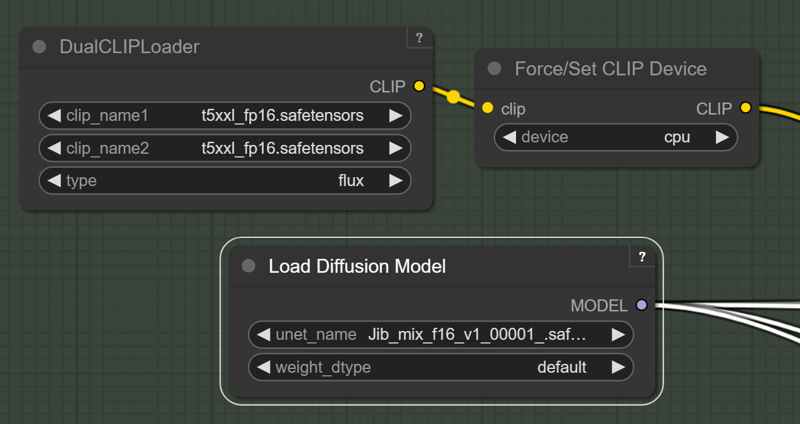

For those with high-VRAM cards who want maximum quality, I have created this merge with the full fp16 Flux model. If you "only have 24GB of VRAM", you will need to force the T5 text encoder onto the CPU/system RAM with the force node from this pack: https://github.com/city96/ComfyUI_ExtraModels

For those waiting for a smaller quantized model: I am still looking into it.

Version 2:

Merged in the 8-step Hyper lora and some others.

Settings:

I like a Guidance of 2 and 8-14 steps.

Resolution: I like around 1280x1344.

Version 1: brings some of the benefits and look of SDXL together with the massive prompt-adherence benefits of Flux.

Settings:

I like a Guidance of 2 and 20-40 steps.

Description

Pulled this version back slightly from the hyper-detailed artistic mess to help with consistency of hands etc.

Comments (68)

Nice, what's new with 8.5?

It should be a bit more consistent with hands (https://civitai.com/posts/16595427), it has a little more contrast, and faces are more natural looking and less "Fluxy".

Downsides: it can add red freckles to people, and it has slightly less fine detail (but also less messy detail).

thanks!

It's a pity, but I have little VRAM :( Are you planning to make an FP8 version of this model?

Yeah, sure. I will do that now and get it uploading.

@J1B Great news!!!! I'm really looking forward to it!

@J1B Already downloading! I will test it, thanks a lot for your work!

Even the RTX 5090 seems to have issues keeping the new v8.5 loaded at all. I haven't had issues with fp16 versions of other models; this one seems strangely demanding.

Hmm, strange. I think it is a pretty standard fp16 checkpoint like my others.

You can try offloading the T5 and the CLIP text encoders to the CPU; it only slows things down a bit on the first prompt change in a batch.

Or I have uploaded an fp8 now: https://civitai.com/api/download/models/1755367?type=Model&format=SafeTensor&size=pruned&fp=fp8

@J1B I'll try some of those things. But yeah I've had pixelwave and other things running fine at FP16. Flux support is never pristine, alas.

@J1B Same here on a 5090: model loading takes longer than with other fp16 models. Using Forge, loading gets stuck for a while at "Calculating sha256 for" (path to the checkpoint).

@Greywolf666 Interesting, it could be an issue with a lora I mixed in. I don't have a 5090 to do any testing on, yet....

I have a feeling I know the answer, but are you going to try to make an INT4 SVDQ of 8.5? I would buzz that very much. I have a feeling you like it as much as I do... but I know it's a bit costly, so it is what it is. But I do hope you do!

I'm not sure it is a big enough change to warrant its own SVDQuant, but it might help with stability of hands etc. So I will think about it.

8.5 - Super!!! I was very impressed by your new model. The poses are more natural, the quality of detail is very good, you can't say everything here :) Thank you for the great model!

I am using Forge UI. Which schedule type and sampling method should I use with v8.5 to get images of similar quality to the sample photos?

I use dpmpp_2m with Beta or sgm_uniform at 20 steps, and then I do a 1.8x Ultimate SD Upscale. I use ComfyUI, but I think you can do that in Forge too.

@J1B What resolution do you suggest for the base image (txt2img, before upscaling)? Usually I use 1536x1024 or 2048x1024, with fine results.

@kapec512 Yeah I normally use 1536x1024 and then do a 1.8x - 2.5x upscale after.

I just tested 2048x1024 and that works fine with no artefacts as well.

@J1B Ok Thanks, I will give it a try

@J1B I successfully installed The Ultimate SD Upscaler in Forge and tested it, and it appears to be functioning well. I would greatly appreciate it if you could share the settings you typically use.

The model is really good, with great skin texture, and quite fast; it seems to work well with Forge too. The only thing I noticed (but I don't know if it's Forge's problem) is that the hands are sometimes wrong.

For the FP16 version of 8.5, does that include a VAE and CLIP?

No, I do not include those extra parts, as it is a waste of storage space to have several GB extra in each model (and I have 500+ SDXL and 50+ Flux models on my PC at any one time).

@J1B I agree: if you already have the CLIPs and VAE on your SSD, you don't need them baked in.

@wolfdd87 I know this, but usually when people offer a "full" version of a model, it comes with CLIP and VAE baked in, and the pruned versions are the ones where these are excluded so that users can use their own CLIPs and VAEs.

@J1B I agree, the models are big enough already. Plus, sometimes I like to use different CLIP and T5 models for different effects.

Hi,

Which scheduler and sampler would you recommend for v8.5?

I keep getting blurry images and broken faces with "dpmpp_2m" and "sgm_uniform".

thanks 🙏🏻

You could try the beta scheduler. How many steps are you doing? I recommend 20 for quality. Flux does have "islands of stability", so it might be worth tweaking the step count a bit if 20 is still no good.

I'm trying with 45 steps... seems to be way too much

@jbrichez I use 8 steps and the quality is great: 3060 12GB card, 45-second generation.

@soyv4 8 steps only ? I have to test that !

I'm getting a lot of body-horror deformities with this model. I just took a random sample from my results and 2/3 of them have physical deformities or are so soft and blurry you can't use them. The remaining 1/3 are quite nice. (Jib Mix 8.5) (No loras)

At what resolution?

Great work! Hope to see a Hi-Dream fine-tune from you!

Can this be used commercially? Permission says yes but license says no.

I believe image outputs from Flux Dev models can be sold commercially, but you cannot host the model and charge for its use.

Hey, can you upload an FP4 model if possible? This looks like an INT4 model, and it crashes ComfyUI on RTX 50 series cards. As per the Nunchaku FAQ, users with RTX 50 series cards need the FP4 model, and other users need the INT4 model.

Thanks for the service!

Same issue; at first I did not even know why Comfy kept crashing.

Do you mean the v8-Flash! SVDQuant-4bit version (https://civitai.com/api/download/models/1595633?type=Model&format=Diffusers&size=full&fp=nf4)?

SVDQuants are much better quality than FP4. They show it working on a 5090 in 0.8 seconds in this demo: https://www.youtube.com/watch?v=aJ2Mw_aoQFc

You need to install the Nunchaku project (following the instructions there) and the Nunchaku ComfyUI custom nodes to get it to work.

Have you done that?

@J1B I know I got Nunchaku installed, but it tells me I need to use FP4, not INT4, on a Blackwell GPU.

Which probably should say NVFP4...

See here, for example: https://huggingface.co/mit-han-lab/nunchaku-flux.1-dev/tree/main

The INT4 is for everything non Blackwell.

The FP4 is for Blackwell.

The FP4 works fine and the INT4 does not run on my 5080.
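The rule in this thread (INT4 for pre-Blackwell cards, FP4 for Blackwell/RTX 50 series, with RTX 2000 series as the minimum from the notes above) can be sketched as a lookup on the GPU's CUDA compute capability. This is a hypothetical Python helper, not part of Nunchaku. It assumes Turing (RTX 2000 series) is compute capability 7.5 and treats every capability with major version 10 or higher as Blackwell (RTX 50 series cards report 12.0). With PyTorch installed, `torch.cuda.get_device_capability()` returns the (major, minor) tuple to pass in.

```python
def svdquant_variant(capability: tuple[int, int]) -> str:
    """Pick the SVDQuant build for a GPU's (major, minor) compute capability.

    Assumptions: Turing (7.5) is the oldest supported architecture, and any
    major version >= 10 is treated as Blackwell, which needs the FP4 build.
    """
    if capability < (7, 5):
        return "unsupported"
    if capability[0] >= 10:
        return "fp4"
    return "int4"


if __name__ == "__main__":
    # Compute capabilities of RTX 2080, 3090, 4090 and 5080 class cards.
    for cc in [(7, 5), (8, 6), (8, 9), (12, 0)]:
        print(cc, svdquant_variant(cc))
```

Under these assumptions an RTX 5080 or 5060 (12.0) maps to "fp4", which is consistent with the INT4 file failing on those cards.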

@J1B Yours, which is not a safetensors file, just makes Comfy crash; Creart Ultimate, for example, at least gives you an error like this: https://ibb.co/ynSWf3vM

@TiwazM Oh yeah, I think I did know about this, but I had forgotten. It costs about $30 in H100 time to convert a checkpoint to Nunchaku SVDQuant, so I will probably only be converting my checkpoint when I get my 5090, hopefully next month.

I prefer to wait longer for the quality of full fp16 Flux models, or to use SDXL for quick images, at the moment anyway.

@J1B Hey, thanks for your work. I can contribute the checkpoint conversion fee if it's less than 50 USD. I can't do the conversion myself; I have an RTX 5080 16GB. But if you can do this, please let me know. For the good of the community; I am just a hobbyist too.

Is it possible to use v8.5 with Forge? I see Comfy mentioned all over, so I'm not sure.

Yes, of course. I use Forge myself, and I will say that I like this model; fantasy and realism both work well.

For Forge, you will need to download the T5 text encoder, Clip_L and VAE separately, as they are not included (to save 5GB+ per model).

Use these instructions if using Forge: https://civitai.com/articles/7828/simple-setting-forge-ui-for-runing-flux-no-include-vae-and-clip

@J1B ok cool, no problem! Thanks :)

I have an already-made AI model and I want to keep her body features. Her breast size, buttock size, all her body features and, of course, her face have to stay the same. How can I use this to, for example, take a picture of my model dressed in underwear and then just ask it to generate the same picture but entirely naked? Please help.

You would have to train a lora on your existing character images and use that. How many dataset images do you have already? A single image will make a very inflexible lora, although I have tested single-image loras for Flux: https://civitai.com/models/1047517/jibs-synthwave-glow

@J1B I was about to train my lora. I have many images from different angles, with different facial expressions, etc., but do I need to train the lora without any naked pics? Or do I need to train my model with explicit pics using this model? I want consistency on the naked body; I don't want areolas or genitals to differ from one pic to another.

At some point, are you going to release a specific inpainting version of the model?

Will there be a Flux Kontext model from you?

I was wondering the same, but old Flux models do not mix very well with the new Flux Kontext; everything needs to be retrained from this new base model. Also, existing Flux loras do not work well with Flux Kontext out of the box, so new loras will appear that are trained on the Flux Kontext base models. I tried a simple model merge between Flux Dev and Flux Dev Kontext, and it produced an .sft file that just output very bad results.

Yeah, I hope to make some Flux Kontext loras soon, once I figure it out, and then get the new Kontext model to have better skin and be more uncensored, like my models.

Someone stole your model and put it on Tungsten. https://tungsten.run/model/How9H2zCHo/jib-mix---consisteight-v85

Thanks, it is pretty rampant and hard to stop.

Someone has uploaded loads of my models and images to SeaArt as well; this isn't my account: https://www.seaart.ai/user/J1B

Hey, just a heads up, I am running the "Jib Mix Flux v8-Flash! SVDQuant-4bit" version, and nunchaku gave me the following warning:

Loading models from a folder will be deprecated in v0.4. Please download the latest safetensors model, or use one of the following tools to merge your model into a single file: the CLI utility python -m nunchaku.merge_safetensors or the ComfyUI workflow merge_safetensors.json.

This workflow doesn't work for me either. I wish there were a simpler way to use the Nunchaku quant of this model; fp4 would be nice.

Thanks. Nunchaku on my machine is very broken right now; I will have to fix it and then do the merge steps. I was planning to update the Nunchaku model with my next release as well.

Just to be clear, the generation is working, although I am using a different workflow. I will try merging it manually on my side and see how it goes. If my system can take the effort... (:

Following up on this one: I just ran the Nunchaku merge workflow. It took about 4 minutes to merge on a 2060 Super, and memory, VRAM, CPU and GPU usage all stayed low. I think the process is mostly I/O bound.

fp4 version pls

I think I will make an fp4 Nunchaku version for 5000 series cards when I create version 10 of Jib Mix Flux soon; the trouble is I will not be able to test it locally without a 5090.

@J1B I can test it if you need help 🫡 just without nsfw stuff as that's not my angle

Thanks for the SVD version. I love you, man!!

I exchanged my RTX 3060 for a 5060 and can no longer run the SVDQ version. Is it INT4? The description mentions an RTX 5090.

No, I don't have an fp4 version for 5000 series cards yet, I plan to make one soon.
