kegant

workflows (comfyui):

✨ v4 (NAI) ✨ https://civarchive.com/models/1856037?modelVersionId=2100596

✨ v4 (CHROMA) ✨ https://civarchive.com/models/2300447?modelVersionId=2588510

✨ v1-v3: ✨ https://civarchive.com/models/861472?modelVersionId=963859

if you aren't using comfyui, GL!

V4 CHROMA UPDATE: By popular request, I have trained Chroma on the v4 dataset. I don't know this model as well as SDXL, but I figured I'd release it for public consumption either way. It is drastically different from the previous versions of kegant: it is not an AIO (all-in-one) checkpoint but a raw UNET, which means you'll need to load the clips (text encoders) associated with flux/chroma separately. The locations of the clips I'm using can be found in the workflow posted above.

V4 NAI UPDATE: V4 reuses most of the V3 dataset (some images were pruned), but most importantly it switches the base model from pony to noobai-vpred. As such, follow the conventions noobai was trained with and use proper danbooru tags. For help, look at some of my published images for the tagging styles I usually put at the start of my prompts.

The main reason for using comfyui is to tone down the saturation and contrast of vpred. While the showcase images are all at full denoise, it is highly recommended to turn the main ksampler down to .8 denoise, because denoising behaves very differently on vpred models than on eps (epsilon) models.

This version is not perfect and will likely need a revision, as some tags like blur and depth of field are still a bit of an issue. If they come through too strongly for your taste, put 'blur' or 'depth of field' in the negatives and it should fix it up for you. If you're stuck using the on-site generator and can't make manual sampler changes like the ones in the attached comfyui workflow for V4, putting things like 'red_theme' AND 'blue_theme' in the negatives can help. Honestly, though, if you're using this checkpoint, you should probably just use the attached workflow and see how I am using it.

With that said, none of the showcased images were photoshopped or edited after comfy, nor i2i'd, but I AM using a face detailer. This checkpoint struggles more than the previous pony ones with faces in far-away shots, such as 'full_body' or 'wide_angle', so a face detailer is highly recommended for this version. There is one attached to the v4 workflow. It's really not hard to set up, it runs faster than a full latent pass anyway, and it greatly improves faces seen from far away. I'm using this guy's guide (and it works great):

https://www.youtube.com/watch?v=gDBeKIa4sHA

V3 UPDATE: V3 is mostly a monsters update with a few cameos, but more importantly it offers finer-grained control over artistic elements. I did some obscene things for this version, such as manually editing a lot of the source images by hand in gimp to remove as many jpeg artifacts as humanly possible. Watermarks are nonexistent and don't need to be negatively tagged, the issue with stray plants and flowers has been fixed, and hopefully males are easier to generate since I added a lot of them. The full tag list of the images included in this update is under 'about the version'. Many images in v3 have been tagged with very powerful monikers, namely 'film grain, halftone effect, dark fantasy, muted colors, sepia'. You may see me use them frequently because the source images my prompts pull from contained those elements. If you don't want any of those and prefer standard anime, put them in negatives; the style is so strong in some cases that it may bleed through even when it isn't prompted. Some weapons were added, namely swords, Guts's 'massive sword', and katana work (from Cis). Generating images with weapons is always going to be painful no matter how good you are at prompting, due to SDXL's limitations, but hopefully the added images of katanas and swords will help guide the model toward more accurate poses with them.

V2 UPDATE: V2 is the first version I have trained and influenced manually myself. It is still mostly the same stack as V1, but some weights have been lowered, and the images I added and trained on fixed a few issues V1 was having. V2 focuses more on desert-style lighting and effects, and it shifts the art style slightly toward smaller eyes and smaller lips. The lighting has only gotten more ridiculous in this version, and I feel that if I push the lighting much further the whole thing will collapse. I guess we can call this the kegant dune update.

kegant PDXL is a pony-based model that mutates pony toward a more retro and gritty appearance, with a heavy focus on lighting effects.

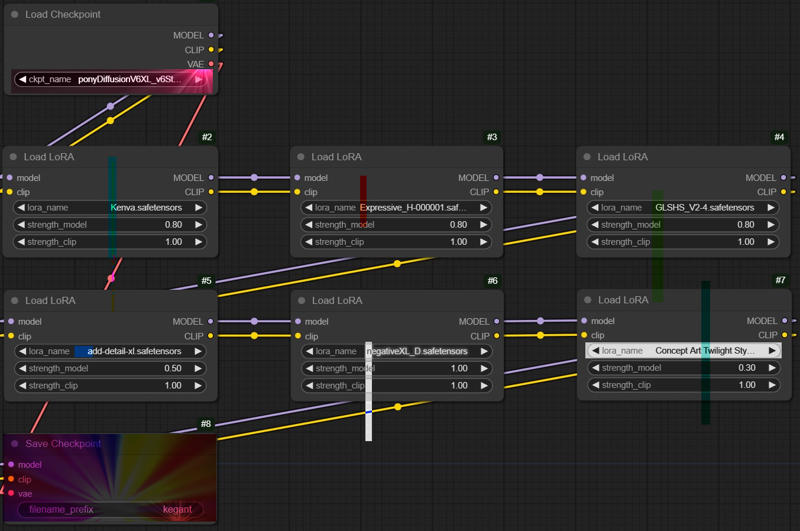

It is primarily the ponyv6 model with 5 separate loras and 1 embedding baked in. These models are:

https://civarchive.com/models/366990/pony-custom-styles?modelVersionId=454703

https://civarchive.com/models/341353/expressiveh-hentai-lora-style?modelVersionId=382152

https://civarchive.com/models/550871/bss-styles-for-pony?modelVersionId=669776

https://civarchive.com/models/122359/detail-tweaker-xl?modelVersionId=135867

https://civarchive.com/models/118418/negativexl?modelVersionId=134583

If you can't see the image, the following settings were used during the bake:

Kenva: .8

ExpressiveH: .8

GLSHS: .8

add_detail: .5

negativeXL_D: 1

Concept Art Twilight: .3
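For readers new to baking: in the standard formulation, merging a LoRA into a checkpoint adds each LoRA's low-rank update, scaled by its strength, directly onto the base weights, so nothing extra is loaded at generation time. The plain-Python sketch below shows that arithmetic for one tiny weight matrix. The matrices, `alpha`, and helper names are hypothetical, and this is an illustration of the general technique, not the tool used for this bake.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product a @ b."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def bake_lora(base: Matrix, up: Matrix, down: Matrix,
              alpha: float, strength: float) -> Matrix:
    """Return base + strength * (alpha / rank) * (up @ down).

    up is (out x rank) and down is (rank x in), so rank = len(down).
    A new matrix is returned; base is left untouched.
    """
    rank = len(down)
    scale = strength * alpha / rank
    delta = matmul(up, down)
    return [
        [base[i][j] + scale * delta[i][j] for j in range(len(base[0]))]
        for i in range(len(base))
    ]


# Hypothetical 2x2 layer and rank-1 LoRA, baked at strength .8 (like Kenva above)
base = [[1.0, 0.0], [0.0, 1.0]]
up = [[1.0], [2.0]]    # out x rank
down = [[0.5, 0.5]]    # rank x in
baked = bake_lora(base, up, down, alpha=1.0, strength=0.8)
print(baked)
```

Baking a whole stack repeats this for every LoRA and every layer it touches, each with its own strength (.8, .5, .3 and so on). Textual-inversion embeddings such as negativeXL act on the text-encoder side and are not covered by this sketch.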

Please note that this model is biased toward generating females and prefers to keep subjects at a middle distance, neither too far away nor too close. Producing full body shots may be a little difficult, but if you specify things like 'shoes', 'boots' or 'feet'/'toes', it will be much more inclined to give you the full body results you want. Remember: this is a pony-based checkpoint, so it much prefers danbooru-style tagging to plain english. Sometimes less is more; overloading a prompt with too many tags makes it harder for the model to understand what to do. If full body is important to you, put that tag at the start of your prompt, since the earlier a tag appears in the prompt, the more importance the model gives it. You can also weight it manually, which helps further. I leave all my prompts open on this checkpoint if you are looking for guidance on how to use it.
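As a small illustration of the ordering and weighting advice, here is a hypothetical helper that puts the tags you care most about first and optionally wraps some of them in manual weights. The function name, the tag choices, and the 1.2 weight are made up for the example; only the `(tag:weight)` emphasis syntax itself is the standard one understood by A1111/Forge and comfyui's text encode node.

```python
def build_prompt(priority, rest, weights=None):
    """Join danbooru-style tags with the priority tags first.

    Tags listed in `weights` are wrapped in the (tag:weight) emphasis syntax.
    """
    weights = weights or {}

    def fmt(tag):
        w = weights.get(tag)
        return f"({tag}:{w})" if w is not None else tag

    return ", ".join(fmt(t) for t in list(priority) + list(rest))


# 'boots' is included because footwear tags nudge the model toward full body shots
prompt = build_prompt(
    priority=["full_body", "1girl", "solo"],
    rest=["boots", "standing", "desert", "sunset"],
    weights={"full_body": 1.2},
)
print(prompt)
# (full_body:1.2), 1girl, solo, boots, standing, desert, sunset
```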

With that said, this checkpoint isn't as flexible as the goat (aka v6), but what you give up in flexibility you gain back in lighting, art style, and generation speed. Generating with all of the associated loras baked in is about 3 times as fast as running v6 with the entire stack loaded separately, and that speedup was the primary focus of this checkpoint.

✨ Please Share Your Cool Creations Below! ✨

Thank you all so much for trying out my first checkpoint.

Please refer to Pony V6 model page for more detailed prompting guidelines.

☄️ Generation Recommendations

* All preview images were generated with no LoRAs except for the final two, Haruko Haruhara and Lain, since pony has no concept of these characters and they are highly stylized characters that would be very difficult to prompt alone. No other resources were used: just pure text-to-image with a second pass of latent upscaling only (no pixel upscaling was involved).

Most sample images were generated with ancestral samplers of these types for the initial pass:

Sampler: Euler A / DPM++A

Schedule Type: Karras

Steps: 20 - 30

CFG: 2 - 6

Clip Skip: 2

Denoise: 1
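"Schedule Type: Karras" refers to the Karras noise schedule, which k-diffusion implements as `get_sigmas_karras`. The plain-Python sketch below follows the same formula so you can see which noise levels a 20 to 30 step pass walks through. The `sigma_min`/`sigma_max` defaults are values commonly used for SDXL eps models and `rho = 7` is k-diffusion's default; treat all three as assumptions, not settings taken from this page.

```python
def karras_sigmas(n, sigma_min=0.0292, sigma_max=14.6146, rho=7.0):
    """Karras noise schedule: n noise levels from sigma_max down to sigma_min,
    spaced evenly in sigma**(1/rho), with a final 0.0 appended."""
    ramp = [i / (n - 1) for i in range(n)] if n > 1 else [0.0]
    max_inv = sigma_max ** (1 / rho)
    min_inv = sigma_min ** (1 / rho)
    sigmas = [(max_inv + r * (min_inv - max_inv)) ** rho for r in ramp]
    return sigmas + [0.0]


sig = karras_sigmas(25)  # a 25-step first pass, inside the 20 - 30 range
print(len(sig))  # 26: one noise level per step boundary
print([round(s, 3) for s in sig[:3]], [round(s, 3) for s in sig[-4:]])
```

With `rho = 7` the levels bunch up near `sigma_min`, so more of the steps are spent at low noise, refining detail, than a linear spacing would give.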

The latent upscale pass used very similar settings, usually opting for a Euler variant since those are typically faster.

Sampler: Euler A / DPM++A

Schedule Type: Karras

Steps: 15

CFG: 2 - 6

Denoise: 0.5

Upscale By: 1.5-2.0
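The "Upscale By" factor is applied to the latent, which for SDXL is 1/8 of the pixel resolution. The sketch below works through the arithmetic for a hypothetical 832x1216 first pass (that resolution is an assumption; this page doesn't state one), including how many times more pixels the second pass has to handle. Exact rounding may differ slightly between UIs.

```python
def latent_upscale(width, height, scale_by, vae_factor=8):
    """Return (latent_w, latent_h) and (pixel_w, pixel_h) after a latent upscale.

    An SDXL latent is 1/8 of the pixel resolution (4 channels), so the
    upscale happens on that smaller grid and is rounded to whole cells.
    """
    lw, lh = width // vae_factor, height // vae_factor
    new_lw, new_lh = round(lw * scale_by), round(lh * scale_by)
    return (new_lw, new_lh), (new_lw * vae_factor, new_lh * vae_factor)


first_pass = (832, 1216)  # hypothetical SDXL portrait resolution
for scale in (1.5, 2.0):  # the 'Upscale By' range above
    latent, pixels = latent_upscale(*first_pass, scale)
    area_ratio = (pixels[0] * pixels[1]) / (first_pass[0] * first_pass[1])
    print(scale, latent, pixels, round(area_ratio, 2))
# 1.5 (156, 228) (1248, 1824) 2.25
# 2.0 (208, 304) (1664, 2432) 4.0
```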

Some tips on generation: the lower your CFG (and steps) on the latent upscaler, the more 'painted' the look, with softer, less defined features, which creates the 'mist' look in some of the images. Conversely, the more CFG you add, the more 'baked' and shiny it looks. A CFG of 3.0 is perhaps the best middle ground for bringing out each of the loras. For the attached Harley Quinn image, I went nuts with a CFG of 10 to show what that looks like as well; the result is highly abstract.
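For context on why CFG has such a visible effect: in the usual classifier-free guidance formulation, each step combines the model's prediction for the negative (or empty) prompt with its prediction for the positive prompt. A scale of 1 returns the positive prediction unchanged, and larger scales extrapolate further along the direction from negative to positive, which is one way to think about why very high values like 10 drift into abstraction. The numbers below are hypothetical and only show the arithmetic; this is the general technique, not anything specific to kegant.

```python
def cfg_combine(uncond, cond, scale):
    """Classifier-free guidance, element-wise: uncond + scale * (cond - uncond)."""
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


uncond = [0.10, -0.20, 0.05]  # hypothetical prediction for the negative prompt
cond = [0.30, -0.10, 0.00]    # hypothetical prediction for the positive prompt
for scale in (1.0, 3.0, 10.0):
    print(scale, [round(x, 3) for x in cfg_combine(uncond, cond, scale)])
```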

PLEASE look at my attached workflow, as it details how you can manipulate kegant to the fullest, whether you prefer the sleek glossy design or the softer, extreme retro vibes with film grain effects.

One last note: this checkpoint tends to add 'jpeg artifacts' and various flora such as 'plants' and 'flowers'. It also tends to add 'cyberpunk' elements. Add those to the negative prompt if you'd rather not see them, and it should remove them fairly well. For male subjects, putting '1girl' in the negative prompt helps a lot, although, as mentioned previously, the checkpoint strongly prefers females.

Comments (40)

Congratulations on the new version, I'm glad you went with Noobai V-pred. I feel like it does better with this kinda style vs illustrious.

- omae wa mou shindeiru

- nani ?!

- *kegant V4 release*

Any info on trigger words or what kind of data you trained on? Looking good

sorry, forgot to do that. i went and pulled it from kohya and posted it in 'about this version' for v4 (apologies, it's not sorted). the others all had it too, minus v1, which was a simple merge with no training involved.

there are only three (i believe) tags that aren't danbooru. all of the rest are.

one is forehead_cross: i did some editing of an old image, putting a satanic cross on her forehead. danbooru has a tag for it with like 5 images, but i don't think it's really meant to be embedded into the skull directly. i included the 'biomechanical' tag with it because idk how else to describe a phenomenon like that, and since it stood out pretty significantly, it needed to have some tag associated with it.

the other is 'mecha_headgear', trained specifically from this image: https://civitai.com/images/59666810

i could probably have gotten around that with either 'biomechanical' or 'dragoon' or something similar, but her helmet dominates the image so hard that i went ahead and gave it a unique name. funny enough, that image was initially generated in midjourney, then trained onto pony, and with that output, trained onto nai in this version.

the last tag is 'jubei_kibagami'. he is the main character of ninja scroll and danbooru has nothing on him. there is this though: https://danbooru.donmai.us/wiki_pages/juubee_ninpuuchou

but 2 images isn't much to go on, so i trained him in. surprisingly, he works better than meier_link, who is actually in danbooru but who i can't get very good gens of in this version for some reason.

Do V-Pred models not need anything special anymore in ComfyUI? Because your workflow looks very normal.

When I started using V-Pred, it needed special nodes and even then sometimes the results would look like SD1.0 in high resolution...

At some point, people realized they could embed v-pred metadata as a part of the model weights (more technically, it's a zero-element tensor with the key of vpred and zsnr)

So, Comfy and A1111 + derivatives can just detect v-pred models automagically these days
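To see what that detection amounts to, here is a sketch that reads only the header of a `.safetensors` file (an 8-byte little-endian length followed by a JSON map of tensor names) and looks for the marker tensors. The comment above calls them "vpred and zsnr"; to my knowledge comfyui's SDXL detection checks for keys named `v_pred` and `ztsnr`, so those names are an assumption here. The demo writes two tiny hypothetical files instead of touching a real checkpoint.

```python
import json
import os
import struct
import tempfile


def read_safetensors_header(path):
    """Return the JSON header of a .safetensors file (tensor name -> info)."""
    with open(path, "rb") as f:
        (header_len,) = struct.unpack("<Q", f.read(8))  # little-endian u64
        return json.loads(f.read(header_len))


def detect_prediction_type(path):
    """Guess eps vs v-prediction from marker tensor names in the header.

    ASSUMPTION: the markers are named 'v_pred' and 'ztsnr'.
    """
    keys = set(read_safetensors_header(path)) - {"__metadata__"}
    if "v_pred" in keys:
        return "v_prediction+ztsnr" if "ztsnr" in keys else "v_prediction"
    return "eps"


def write_marker_file(path, keys):
    """Write a tiny demo .safetensors file holding only zero-element tensors."""
    header = {k: {"dtype": "F32", "shape": [0], "data_offsets": [0, 0]} for k in keys}
    blob = json.dumps(header).encode("utf-8")
    with open(path, "wb") as f:
        f.write(struct.pack("<Q", len(blob)))
        f.write(blob)


with tempfile.TemporaryDirectory() as tmp:
    vpred_path = os.path.join(tmp, "vpred_demo.safetensors")
    eps_path = os.path.join(tmp, "eps_demo.safetensors")
    write_marker_file(vpred_path, ["v_pred", "ztsnr"])
    write_marker_file(eps_path, [])
    vpred_result = detect_prediction_type(vpred_path)
    eps_result = detect_prediction_type(eps_path)

print(vpred_result, eps_result)  # v_prediction+ztsnr eps
```

Only the header is read, so pointing `detect_prediction_type` at a real multi-gigabyte checkpoint is still quick.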

@velvet_toroyashi ahh cool thanks for the info!

P.S. nice model and style, but as soon as you zoom out a little, the face is just a blob. :D

Khazar123 the workflow linked is super old. from v1 of the checkpoint.

i recommend using a face detailer on v4 if you are using it. it helps tremendously. if using comfy, impact pack -> facedetailer node.

skyger I meant the workflow of the images that you posted here, I can just drop your images into ComfyUI and use it. That's why I said I don't see any extra V-Pred stuff, even though it used V-Pred models. ;)

Of course I use a FaceDetailer(even an EyeDetailer), almost every zoomed out image needs it, but with your model even the FaceDetailer couldn't really work with what was given. It gave me stick figure faces. xD

I would need to denoise and completely refactor the face basically.

But it's nothing tragic, every model has its strengths and weaknesses, so don't mind me too much, I'm just more of a zoomed out guy, but many others aren't. :)

Khazar123 you can also try lowering the bbox threshold, the sam mask threshold, and the sam threshold. doing this will make it easier for the model to find the face in the image, although if the subject is very very tiny, it may fail. a few examples i've posted have hit the face even when it's very tiny, but if it's a landscape image, that's gonna be rough no matter what you do.
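To picture what lowering the bbox threshold does: the face detector returns boxes with confidence scores, and only boxes at or above the threshold get detailed. The sketch below uses made-up detections and a hypothetical 512 px target size for the redrawn crop; it is not Impact Pack code. It also hints at why a tiny face stays rough even once it is found: its crop has to be enlarged dozens of times, so the detailer has very little to work with. Going too low also lets in more false positives, which can make the detailer repaint things that aren't faces.

```python
def filter_detections(detections, bbox_threshold):
    """Keep detections whose confidence is at or above the threshold."""
    return [d for d in detections if d["score"] >= bbox_threshold]


def crop_scale(box, target=512):
    """How much a face crop must be enlarged to reach `target` px on its long side."""
    x1, y1, x2, y2 = box
    return target / max(x2 - x1, y2 - y1)


# Hypothetical detector output: a close-up face and a tiny, distant one
dets = [
    {"label": "face", "score": 0.91, "box": (410, 120, 520, 250)},
    {"label": "face", "score": 0.38, "box": (60, 700, 72, 714)},
]

print(len(filter_detections(dets, 0.5)))  # 1: the distant face is dropped
print(len(filter_detections(dets, 0.3)))  # 2: a lower threshold keeps it
for d in dets:
    print(d["score"], round(crop_scale(d["box"]), 1))
```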

loving the colors and details, sadly the backgrounds usually get all blue, red or black somehow, and yes, even with adetailer the eyes are problematic. will post examples, but can't wait for more of the model, it's pretty awesome already

yes, i definitely recommend a face detailer for v4.

@skyger damn, thanks for the buuuz, hope you can fix the red/blue background bug. for a first version it's crazy already

alternative_Universe check my other comment about my testing of this version. it describes in detail how to tone things down.

skyger hey there🫡, yeah I read it, I didn't understand a single word if I'm honest lol, but I saw your new examples with different samplers, and I'm still excited about a new version because this model is quite different from the general aesthetic

also for background help, putting things like 'scenery', 'landscape', 'buildings' or even the sky details higher up in the prompt goes a LONG way. putting your character first in the prompt prioritizes it heavily under any sdxl model.

loras also go a long way. i've been testing with this one from TijuanaSlumlord and the industrial / post apocalyptic stuff it helps guide the model with is pretty amazing.

https://civitai.com/models/1265827?modelVersionId=1427616

love the style.

so a few thing i'm finding with v4 from testing (and NAI in general):

if you drop the main ksampler down from full denoise to 85% or so, your colors are going to get muted just a little. it desaturates the image and also keeps so much 'blueness', or even darkness itself, from permeating and dominating the images.

if you further go lower on the main ksampler, to say, 0.75, you will start to see a bit of color fade on the top and the bottom of the images, giving a film grainy/mist look.

i think the color palette on nai is just extremely biased toward very, very saturated colors with very high contrast. my recommendation is to experiment mostly with the main ksampler settings, especially the denoise. if you're chaining it into another latent upscale (which i highly recommend), that makes a second pass over the colors and darkens them further, even if the initial pass skipped some of the denoising.

i know this sounds complicated, but I'll try to give a few examples.

this image was set to .8 (or 80%) denoise on the main ksampler. notice the soft white light at the bottom and top of the image: https://civitai.com/images/90022696

this image is another set to .7 (or 70%) denoise on the main ksampler: https://civitai.com/images/89879249

the only issue i have with condoning this practice is that what the ksampler actually decides to denoise can vary if it's not set to 100%. in the second image i linked, it completely ignored her midsection, which is honestly fine: a lot of the berserk / vampire hunter d images in the kegant training data actually tend to block those out conveniently, because the original mangas were in black and white anyway.

TLDR: try lowering the denoise of the main ksampler. i'm finding that somewhere between 85% and as low as 70% is a very good sweet spot for this checkpoint, depending, of course, on your goal for the composition. it will inadvertently brighten your images and also tone down the saturation.
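Why would a lower denoise on the first ksampler change the colors? One plausible explanation, sketched here as a reading of the behavior rather than anything confirmed above: in comfyui, a denoise below 1.0 does not simply run fewer steps. As far as I know, the KSampler builds a longer schedule of `int(steps / denoise)` steps and runs only its last `steps`, so sampling starts from a lower noise level than a full-denoise run, and the model never gets the highest-noise steps where overall color and contrast are largely decided. The sketch reuses a Karras schedule with assumed SDXL eps sigma values; noobai-vpred's actual (zsnr) range differs, so only the relative behavior is meaningful.

```python
def karras_sigmas(n, sigma_min=0.0292, sigma_max=14.6146, rho=7.0):
    """Karras noise schedule with a trailing 0.0 (assumed SDXL eps defaults)."""
    ramp = [i / (n - 1) for i in range(n)] if n > 1 else [0.0]
    max_inv, min_inv = sigma_max ** (1 / rho), sigma_min ** (1 / rho)
    return [(max_inv + r * (min_inv - max_inv)) ** rho for r in ramp] + [0.0]


def sigmas_for_denoise(steps, denoise):
    """Noise levels actually sampled for a given ksampler denoise.

    Mirrors comfyui's handling as I understand it: below 1.0, build a longer
    schedule of int(steps / denoise) steps and keep only its last `steps`.
    """
    if denoise > 0.9999:
        return karras_sigmas(steps)
    total = int(steps / denoise)
    return karras_sigmas(total)[-(steps + 1):]


full = sigmas_for_denoise(25, 1.0)
for d in (1.0, 0.85, 0.8, 0.7):
    s = sigmas_for_denoise(25, d)
    # same number of steps, but sampling starts from a lower noise level
    print(d, len(s) - 1, round(s[0], 2))
```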

I'll have to see how it pairs myself, but I know Volnovik has worked on some LoRAs meant to tame some of the color casts and ridiculous contrast biases of NoobAI V-Pred: https://civitai.com/models/1555532?modelVersionId=1893263

Kaligo731 yup, do give it a try. i tested the lora there, and while it does seem to brighten images a bit, the blue tint is definitely firm. try running the main sampler at less than 100%; i believe the steps it skips are what make noobai so prone to high saturation/contrast.

I love this model a lot, but I can't seem to get anywhere close in quality with Forge. Did anyone manage to find the right settings?

i'll try setting up forge or reforge today. i have no idea what i'm doing in it, but years ago i did use a1111, so hopefully i remember a thing or two from it and can get a workflow working for you similar to the fuckery i'm doing in comfyui. if anyone else knows, feel free to comment, but this is a new model and i'm still learning stuff with it even in comfy.

here you go. i tried to annotate it as best i could. i'm not a reforge user, but i did what i could as fast as i could. https://civitai.com/images/91441455

if someone more knowledgeable on the subject wants to add additional information by all means go ahead, but i am a comfy user trying to help those who aren't.

For anyone else trying the img2img strategy and wanting a hi-res fix equivalent, hi-res fix is just upscale + img2img. You can do this by

- Sending your img to the Extras tab to do an upscale with the upscaler of your choice, and then sending back to img2img. Hi-res fix in txt2img is literally an automated version of this when using non-latent upscalers.

- Doing the upscale from within the img2img tab itself. You'll need to configure an A1111/Forge setting to allow selecting a custom upscaler, though; check out the beginning of this guide to see how: https://civitai.com/articles/4560/upscaling-images-using-multidiffusion (you don't have to follow the rest of it if you don't care about multidiffusion)

- Installing an extension like this one to basically add a hi-res fix section to img2img that automates all of the above. I've heard this extension doesn't play well with Adetailer though, so keep that in mind.

Note that the above is strictly for pixel space upscale. Latent upscale in img2img is a little trickier--the UI does have a "Just Resize (latent)" option, but it doesn't let you choose which latent upscale technique to use like hi-res fix does. I believe this extension adds the ability to choose, but it hasn't been updated in a while and I've not tried it myself.
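To make the pixel-space versus latent distinction concrete, the sketch below just lists the stages each route goes through and the tensor shapes involved, for a hypothetical 832x1216 image upscaled 1.5x. The stage names are descriptive labels chosen for this example, not A1111/Forge or comfyui identifiers.

```python
def hires_fix_stages(width, height, scale, mode):
    """List the stages of a hi-res fix pass and the tensor shape each produces.

    Shapes are (channels, height, width); SDXL latents have 4 channels at 1/8 size.
    """
    w, h = round(width * scale), round(height * scale)
    latent = (4, h // 8, w // 8)
    if mode == "pixel":
        return [
            ("vae_decode", (3, height, width)),
            ("upscale_image", (3, h, w)),   # any pixel upscaler model or resize
            ("vae_encode", latent),
            ("img2img_sample", latent),
            ("vae_decode", (3, h, w)),
        ]
    if mode == "latent":
        return [
            ("upscale_latent", latent),     # interpolate the latent grid directly
            ("img2img_sample", latent),
            ("vae_decode", (3, h, w)),
        ]
    raise ValueError(f"unknown mode: {mode!r}")


for mode in ("pixel", "latent"):
    print(mode, [name for name, _ in hires_fix_stages(832, 1216, 1.5, mode)])
```

The pixel route is where you get to pick an upscaler model; the latent route skips the decode/encode round trip but resizes the compressed latent directly, which is why latent upscales usually need a fairly high denoise to clean up the interpolation.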

skyger thank you for the effort of doing that, I'll experiment a bit more

Very cool model, it creates compositions far more creative than anything else on offer in the illustrious/Noob ai library. That in itself is quite the achievement. That being said, anatomy isn't great, and female characters look very masculine, with strong jawlines and sharp chins (very Kawajiri-esque, ninja scroll style). Despite this, it's a fantastic checkpoint that deserves a lot of love. Great job, and I look forward to its continued development.

agreed

rocklaw Hey, what's good bro, lets see what we can cook up with this dope model ^_^

anatomy is difficult. it was trained on kawajiri heavily, as well as amano, miura, other minor influences from kawamoto, nightow, and sadamoto. i was tired of seeing the same cute chubby anime faces plastered all over civitai and wanted specifically strong jaw lines, longer bodies (in general) and more adult looking anime. thank you for trying out the checkpoint and your honest review and i hope you like it.

skyger Appreciate your work on this and your ethos of directing efforts towards Seinen styles.

syndon haha what's up brotha, yup yup, working on it rn! can't wait to see what you make!

skyger I appreciate the hard work, thanks for bringing this to the scene. Can't wait for what you make next!

i have attached a decently working workflow for comfyui users, tailored to v4, linked and separated from the v1-v3 workflow. it includes links to the bbox and sam models from huggingface that i use for the face detector stuff, and all the other nodes that aren't in vanilla comfy should be easy to find in comfy manager, as they're fairly popular nodes.

Looks awesome. Is it available for on-site generation? If not, is it going to be in the future?

@ShishigamiDono this model requires some additional wrestling to really get good images out of it. it is not an eps model. it is a vpred model. Civit does not natively give you the tools to really utilize the checkpoint out of the box the way it is intended, with things like direct sampler control you can do within comfyui.

However, if you want to bid on the model, it's there. @ecaj did so about a month ago and it enabled on-site generation. Many thanks to @ecaj, as I love seeing what others can create with a model I've put blood, sweat and tears into.

I love your checkpoint! Why do I suddenly have a nostalgia for an anime that doesn't exist!?

This takes me back to my childhood and the wide-eyed look I had seeing my first anime! I can't say enough good things about it.

Keep up the good work! ❤️🙏

for those genning on v4 looking for inspiration instead of the generic '1girl' or '1boy' prompt, this is a great resource for what characters it knows about:

https://www.downloadmost.com/NoobAI-XL/danbooru-character/

also if you're more interested in furry, this section is there too!

https://www.downloadmost.com/NoobAI-XL/e621-character/

remember also you're not limited to just these chars. you can throw two of these chars into the prompt and add a 'solo' tag and see what the ai tries to conjure up mixing two of them together.

cool

Is this finetuned to 90s anime?
