Note: the latest version of the workflow has been greatly simplified to use the ComfyUI sub-workflow system. As a result, it has fewer features than the previous versions, but it is more stable. I will try to bring these features back over time.

Introduction

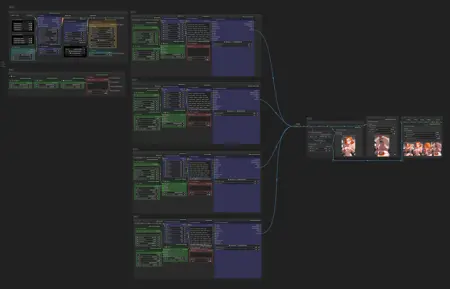

Here's my Scene Composer workflow for ComfyUI.

The main goal is to create short 5-panel stories in just one queue. To do that, it randomly chooses the parts of the prompt used for generation, such as:

Character (e.g. hair, eyes, attitude)

Clothes & Underwear

Sexual position and action

To stay consistent, it also keeps certain parts of the prompt and injects them across all scenes (like the environment, the main character and their clothes). You can read my Overview & Usecases article for more explanations!

If you're looking for a simpler workflow, check my Main ComfyUI Workflow. I also suggest having a look at my Prompt Notebook to better understand how I structure tags.

If you have any comments or requests, please feel free to share!
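The idea above can be sketched in a few lines of Python. This is only an illustration under assumptions, not the workflow's actual implementation: the `POOLS` tag lists, the `SHARED` tuple and the `compose_story` function are all hypothetical names. The shared parts are drawn once and reused in every panel, while the action is drawn again for each scene.

```python
import random

# Hypothetical tag pools; the real workflow's lists are not shown in this article.
POOLS = {
    "character": ["long hair, blue eyes", "short hair, green eyes", "ponytail, red eyes"],
    "clothes": ["school uniform", "summer dress", "swimsuit"],
    "environment": ["beach, daytime", "classroom, sunset", "forest, night"],
    "action": ["standing", "sitting", "walking", "lying down"],
}
SHARED = ("character", "clothes", "environment")  # kept identical in every panel


def compose_story(seed, panels=5):
    rng = random.Random(seed)  # one seed makes the whole story reproducible
    shared = [rng.choice(POOLS[name]) for name in SHARED]
    prompts = []
    for _ in range(panels):
        action = rng.choice(POOLS["action"])  # only this part varies per panel
        prompts.append(", ".join(shared + [action]))
    return prompts


story = compose_story(seed=42)
for i, prompt in enumerate(story, 1):
    print(f"Scene {i}: {prompt}")
```

Running it twice with the same seed gives the same five prompts, and a different seed gives a different story.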

Features

Note: features in gray are in progress and need to migrate to v1.x

Random procedural generation of prompts

Main character (e.g. body, hair, eyes, tattoos, piercing, horns, tail, …)

Attire (e.g. clothes, swimsuit, underwear, uniform, accessories, …)

Environment (e.g. place, daytime, nighttime, weather, …)

Action in scene (e.g. starting scene, sexual encounter, ending scene, …)

Predefined personas (demon, goblin, furry, slime, etc.)

One place to control all scene parameters

Seed, steps, CFG, image size, etc.

HighRes-Fix (2nd Pass)

LoRA stacker

Keep control over scenes

Re-generate one or many elements by changing their seeds

Re-generate one or many scenes by changing their seeds

Overwrite and compose the final prompt with variables
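One way to picture seed-based re-generation is to give each element its own seed, so that changing one seed only re-rolls that element. The sketch below is an assumption about the principle, not the workflow's code; `OPTIONS` and `roll` are hypothetical names.

```python
import random

# Hypothetical option lists, for illustration only.
OPTIONS = {
    "hair": ["long hair", "short hair", "ponytail", "twintails"],
    "eyes": ["blue eyes", "green eyes", "red eyes"],
    "place": ["beach", "classroom", "forest", "bedroom"],
}


def roll(seeds):
    """Pick one option per element, each from its own seeded generator."""
    return {
        name: random.Random(seed).choice(OPTIONS[name])
        for name, seed in seeds.items()
    }


seeds = {"hair": 1, "eyes": 2, "place": 3}
first = roll(seeds)

# Change only the "place" seed: hair and eyes are guaranteed to stay the same.
second = roll({**seeds, "place": 4})
print(first)
print(second)
```

Note that a new seed does not guarantee a different pick: with a small list, the re-rolled element can land on the same value again.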

Scenes consistency

Tags update dynamically according to the scene (e.g. wet is added if there's rain)

Attire state persists across scenes (if the character loses clothes, they stay lost)

If clothes are torn, they stay torn

Bondage ropes stay on character, with clothes
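The consistency rules above amount to carrying a small state from one scene to the next. Here is a minimal sketch, assuming a hypothetical `StoryState` structure and `scene_tags` function. Only the `wet` tag comes from the list above; the `torn clothes` tag and the clothing names are made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class StoryState:
    """State carried from one scene to the next (hypothetical structure)."""
    clothes: set = field(default_factory=lambda: {"shirt", "skirt"})
    torn: bool = False


def scene_tags(state, weather, removed=(), tear=False):
    # Persistent changes: once clothes are lost or torn, later scenes keep that.
    state.clothes -= set(removed)
    state.torn = state.torn or tear

    tags = sorted(state.clothes)
    if state.torn and state.clothes:
        tags.append("torn clothes")
    # Dynamic tag: derived from the current scene only.
    if weather == "rain":
        tags += ["rain", "wet"]
    return tags


state = StoryState()
scene1 = scene_tags(state, weather="rain")
scene2 = scene_tags(state, weather="sunny", removed=["shirt"], tear=True)
scene3 = scene_tags(state, weather="sunny")
print(scene1, scene2, scene3, sep="\n")
```

The weather tag disappears as soon as the rain stops, while the lost shirt and the torn state carry over to the third scene without being requested again.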

Output

Upscaling, pre-processing

Scene images merged into one

CivitAI metadata & workflow embedded

Setup

Simply import the workflow.json file attached to this article in ComfyUI. You can also drag-and-drop the workflow image directly in the interface.

I personally don't have a very powerful computer. If you're in the same situation, check out my environment in my Main ComfyUI Workflow article, where I explain how I rent and set up remote machines.

Models

In theory, the workflow can work with any model that uses Danbooru-like tags. I personally use Illustrious/NoobAI/Pony-based models mixed with some anime-oriented LoRAs. Have a look at the metadata of my latest images if you're curious!

Custom nodes

To build this workflow, I developed the comfyui-scene-composer extension. You can also use it standalone in your own workflows. If you have trouble setting things up, check the repository.

Description

Scene Composer v1.0 is out! The workflow uses the Scene Composer custom nodes, made especially for it. It has fewer features than the old versions for now, but it's far less messy! The custom nodes will allow for more improvements in the future.

Comments (15)

so awesome! Quick question, I can't seem to understand why the HighRes-Fix script in 1.0 isn't running? I have to bypass it for it to go

Right click > Fix node. Make sure you have an upscaler and a ControlNet model (even if you set use_control_net to false). Set use_same_seed to false and set seed to a positive value.

Let me know if it's still not working :)

I had to fiddle a bit with ComfyUI to get it to work, but man, your 1.0 is amazing. I have to ask, though: I only see four scene composers instead of five. Is the fifth omitted for a reason, or did I miss something?

Though I did just drag the example image to comfy

Thanks, glad you like it! What trouble did you have before getting it to work, and how did you fix it?

I need to tweak the workflow a bit to make a fifth scene with the new custom nodes. I'm also working on a way to create more scenes with different "stages" available :)

@taches The Output group just had a hiccup with naming files I think, Fix node was enough to get it working again.

I'm looking forward to future updates, but I'm new to Comfy so I'll also be experimenting on my own.

Like the workflow but had to make some modifications. The Scene node seems to insert random category inputs when a string input is missing? Caused some confusion when I was doing some modifications.

Indeed, the Scene node is supposed to generate a scene on its own. The given inputs are only there to "overwrite" a component. But I understand it can be confusing. I'll think of a way to make it more intuitive. Thank you for the feedback!

Marking the inputs as optional would help hint at it. Possibly a minimal quick fix
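For reference, ComfyUI custom nodes can declare inputs under an "optional" key in `INPUT_TYPES`, which is what the suggestion above refers to. The class below is a hypothetical sketch, not the actual comfyui-scene-composer code: `SceneSketch`, its input names and its tag lists are assumptions made for illustration.

```python
import random


class SceneSketch:
    """Hypothetical node, not the actual comfyui-scene-composer code."""

    @classmethod
    def INPUT_TYPES(cls):
        return {
            "required": {"seed": ("INT", {"default": 0, "min": 0})},
            # Inputs listed here may be left unconnected in the graph.
            "optional": {
                "character": ("STRING", {"forceInput": True}),
                "environment": ("STRING", {"forceInput": True}),
            },
        }

    RETURN_TYPES = ("STRING",)
    FUNCTION = "build"
    CATEGORY = "sketch"

    def build(self, seed, character=None, environment=None):
        rng = random.Random(seed)
        # Always draw both defaults so overriding one does not shift the other.
        random_character = rng.choice(["1girl, long hair", "1girl, short hair"])
        random_environment = rng.choice(["beach", "forest"])
        # A connected input overwrites the component; otherwise it is randomized.
        return (f"{character or random_character}, {environment or random_environment}",)


node = SceneSketch()
print(node.build(seed=1)[0])
print(node.build(seed=1, character="my character")[0])
```

With this layout, a missing string input visibly falls back to a random component, and a connected one replaces only that component.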

Missing nodes:

Random Line

Prompt Parser

Multi Text Merge

Any Converter

For v0.4

Not available to install through Comfy Manager, any idea where I can get these from? I tried searching for them and can't find any GitHub repositories.

I tracked these missing nodes to this GitHub: https://github.com/tudal/Hakkun-ComfyUI-nodes but I am unable to git pull or install them via the instructions they've provided (putting the .py file in custom nodes). They don't show up in Comfy Manager either. Do you have this node package available?

Update: Files from this link resolved the missing Hakkun nodes: https://civitai.com/models/118863/comfyui-custom-node-prompt-parser

Hey! Glad you were able to debug this. Last time I built v0.4, a simple "Update missing custom nodes" worked just fine. Maybe the new version of ComfyUI makes things a bit more difficult.

@taches Must be! They were nowhere to be seen in the Comfy Manager!

Hi. I am having issues generating anything other than a woman holding a camera, each generation seems to be the same and no randomness is being added. I am a bit lost in how to use the workflow properly, and I've looked at your article about the workflow.

Also, how do I add my own character to the scenes? I've tried the overwrite and the variables, and opened the character node and put in my own info. The output prompts never seem to change.

Thank you.

Hi. Which version of the workflow are you using? Did you change the seeds?