HUNYUAN | AllInOne

No need to buzz me. Feedback is much more appreciated. | Last update: 06/03/2025

⬇️OFFICIAL Image To Video V2 Model is out!⬇️ COMFYUI UPDATE IS REQUIRED !

Get files here:

Link 1: paste in \models\clip_vision

Link 2 or Link 3: paste in \models\diffusion_models (pick the one that works best for you)

⚠️ I2V model got an update on 07/03/2025 ⚠️

These workflows have evolved over time through various tests and refinements,

thanks also to the huge contributions of this community.

Requirements, special thanks and credits are listed below.

Before commenting, please keep in mind:

The Advanced and Ultra workflows are intended for more experienced ComfyUI users.

If you choose to install unfamiliar nodes, you take full responsibility.

I make these workflows for fun, randomly in my free time.

Most issues you might encounter have probably already been widely discussed and solved on Discord, Reddit and GitHub, and are addressed in the description of the workflow you're using, so please read carefully

and consider doing some searches before commenting.

I started this alone, but now there's a small group of people contributing their passion, experiments and cool findings. Credits below.

Thanks to their contributions this small project continues to grow and improve

for everyone's benefit.

- Fast Lora may work best when combined with other Loras, allowing you to reduce the number of steps.

- Wave Speed can significantly reduce inference time but may introduce artifacts.

- Achieving good results requires testing different settings. Default configurations may not always work, especially when using LORAs, so experiment to find the settings that fit best. THERE ARE NO UNIVERSAL SETTINGS THAT WORK FOR EVERY CASE.

- You can also try switching to a different sampler/scheduler and see which works best for your case: try UniPC simple, LCM simple, DDPM, DPMPP_2M beta, Euler normal/simple/beta, or the new "Gradient_estimation"

(Samplers/schedulers need to be set for each stage and mode; they are not settings found in the console)

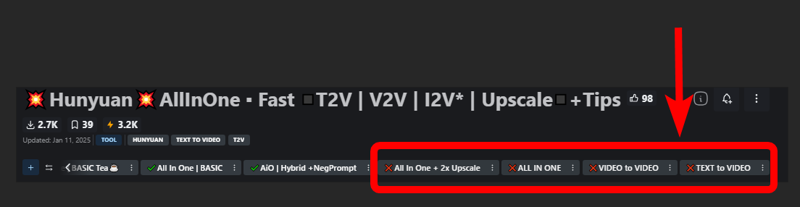

Legend to help you choose the right workflow:

✔️ Green check = UP TO DATE version for its category.

Includes the latest settings, tricks, updated nodes and samplers; works on the latest ComfyUI.

🟩🟧🟪 Colors = Basic / Advanced / Ultra

❌ = Based on deprecated nodes; you'll have to fix it yourself if you really want to use it

Quick Tips:

Low Vram? Try this:

and/or try using the GGUF models available here.

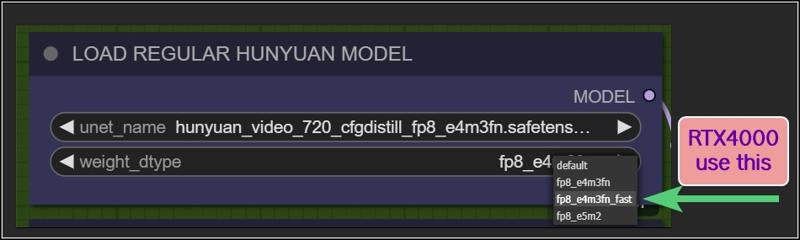

Rtx4000? use this:

Want more tips?

Check my article: https://civarchive.com/articles/9584

All workflows available on this page are designed to prioritize efficiency, delivering high-quality results as quickly as possible.

However, users can easily customize settings through intuitive, fast-access controls.

For those seeking ultra-high-quality videos and the best output this model can achieve, adjustments may be necessary, such as increasing steps, modifying resolutions, reducing the TeaCache / WaveSpeed influence, or disabling Fast LoRA entirely.

Personally, I aim for an optimal balance between quality and speed. All example videos I share follow this approach, utilizing the default settings provided in these workflows. While I may make minor adjustments to aspect ratio, resolution, or step count depending on the scene, these settings generally offer the best all-around performance.

WORKFLOWS DESCRIPTION:

🟩"I2V OFFICIAL"

Requires:

llava_llama3_vision: ➡️Link paste in: \models\clip_vision

Model: ➡️Link or ➡️Link (pick the one that works best for you)

paste in: \models\diffusion_models

https://github.com/pollockjj/ComfyUI-MultiGPU

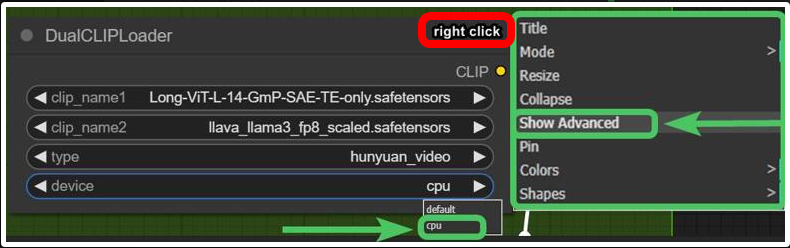

The following node is for SAGE ATTENTION. If you don't have it installed, just bypass it:

🟩"BASIC All In One"

Uses native Comfy nodes and has 3 modes of operation:

T2V

I2V (sort of): an image is repeated for x frames and sent to latent, with a denoise level balanced to preserve the structure, composition, and colors of the original image. I find this approach highly useful as it saves inference time and allows for better guidance toward the desired result. Obviously this comes at the expense of general motion, as lowering the denoise level too much makes the final result static, with minimal movement. The denoise threshold is up to you to decide based on your needs.

There are other methods to achieve a more accurate image-to-video process, but they are slow. I didn’t even include a negative prompt in the workflow because it doubles the waiting time.

V2V same concept as I2V above
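The "I2V (sort of)" mode described above can be pictured with a toy sketch. It is not the workflow's actual node graph: `image_to_clip` and `add_noise` are hypothetical helper names, pixels are plain floats, and the linear blend is only a simplifying assumption about how a denoise value trades the original image against noise.

```python
import random


def image_to_clip(image, num_frames):
    """Repeat one still image num_frames times to form a static clip."""
    if num_frames < 1:
        raise ValueError("num_frames must be at least 1")
    return [list(image) for _ in range(num_frames)]


def add_noise(frame, denoise, rng):
    """Blend a frame with uniform noise (toy model, not the real sampler).

    denoise=0.0 keeps the frame untouched, denoise=1.0 replaces it with noise.
    """
    if not 0.0 <= denoise <= 1.0:
        raise ValueError("denoise must be between 0 and 1")
    return [(1.0 - denoise) * px + denoise * rng.random() for px in frame]


rng = random.Random(0)
clip = image_to_clip([0.2, 0.8, 0.5], num_frames=4)
noisy_clip = [add_noise(frame, denoise=0.6, rng=rng) for frame in clip]
print(len(noisy_clip), "frames, each starting from the same image")
```

With a low denoise value every frame stays close to the source image, which matches the static results mentioned above; a higher value leaves the sampler more room to invent motion.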

Requires:

https://github.com/chengzeyi/Comfy-WaveSpeed

https://github.com/pollockjj/ComfyUI-MultiGPU

🟧 "ADVANCED All In One TEA ☕"

An improved version of the BASIC All In One TEA ☕, with additional methods to upscale faster, plus a lightweight captioning system for I2V and V2V that consumes only an additional 100 MB of VRAM.

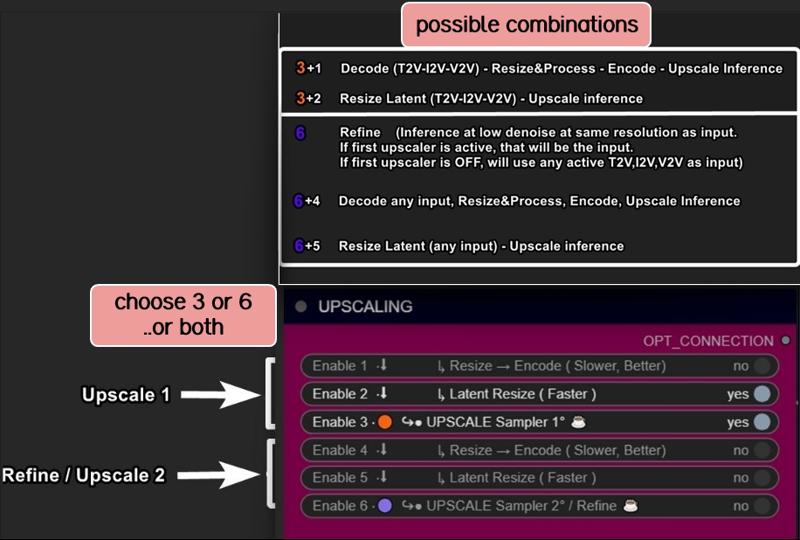

Upscaling can be done in three ways:

Upscaling using the model. Best quality. Slower (Refine is optional).

Upscale Classic + Refine. It uses a special video upscaling model that I selected after testing a crazy number of video upscaling models. It is one of the fastest and gives results with good contrast and well-defined lines. It’s certainly not the optimal choice when used alone, but when combined with the REFINE step it produces well-defined videos. This option is a middle ground in terms of timing between the first and third methods.

Latent upscale + Refine. This is my favorite. Fastest. Decent.

This method is nothing more than the first one, which is basically V2V, but at slightly lower steps and denoise.

Three different methods, more choices based on preferences.

Requirements:

-ClipVitLargePatch14

download model.safetensors

rename it to clip-vit-large-patch14_OPENAI.safetensors

paste it in \models\clip

paste it in \models\ESRGAN\

-LongCLIP-SAE-ViT-L-14

-https://github.com/pollockjj/ComfyUI-MultiGPU

-https://github.com/chengzeyi/Comfy-WaveSpeed
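The ClipVitLargePatch14 steps above (download, rename, paste) can also be scripted. This is a minimal sketch, assuming the file was downloaded as `model.safetensors` and that `comfy_root` points at your ComfyUI folder; `install_clip` is a hypothetical helper name, not part of ComfyUI.

```python
from pathlib import Path
import shutil

# Target name taken from the requirement list above.
TARGET_NAME = "clip-vit-large-patch14_OPENAI.safetensors"


def install_clip(downloaded, comfy_root):
    """Copy the downloaded file into <comfy_root>/models/clip under the new name."""
    target_dir = Path(comfy_root) / "models" / "clip"
    target_dir.mkdir(parents=True, exist_ok=True)  # create the folder if missing
    target = target_dir / TARGET_NAME
    shutil.copy2(downloaded, target)  # copy, so the original download is kept
    return target


# Example call (both paths are placeholders for your own setup):
# install_clip("Downloads/model.safetensors", "ComfyUI")
```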

Update Changelogs:

|1.1|

Faster upscaling

Better settings

|1.2|

removed redundancies, better logic

fixed some errors

added extra box for the ability to load a video and directly upscale it

|1.3|

New prompting system.

Now you can copy and paste any prompt you find online, and this will automatically modify the words you don't like and/or add additional random words.

Fixed some latent auto-switch bugs (these gave me serious headaches)

Fixed seed issue: locking the seed now locks sampling

Some UI cleanup
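The idea behind the 1.3 prompting system (swap words you don't like, add random extra words) can be sketched in a few lines. This is only an illustration, not the nodes the workflow uses: `rewrite_prompt`, its parameters, and the example word lists are all hypothetical.

```python
import random
import re


def rewrite_prompt(prompt, swaps, extras, n_extra=1, seed=None):
    """Swap unwanted words, then append a few random extra words.

    swaps maps lower-case words to replacements ("" censors a word).
    """
    if swaps:
        pattern = re.compile(
            r"\b(" + "|".join(re.escape(word) for word in swaps) + r")\b",
            re.IGNORECASE,
        )
        prompt = pattern.sub(lambda m: swaps[m.group(0).lower()], prompt)
        prompt = re.sub(r"\s+", " ", prompt).strip()  # tidy gaps left by censoring
    rng = random.Random(seed)
    picked = rng.sample(extras, min(n_extra, len(extras)))
    return ", ".join([prompt] + picked)


print(rewrite_prompt("A red car in heavy rain",
                     {"red": "blue", "heavy": ""},
                     ["cinematic", "35mm"], seed=1))
```

Passing a fixed `seed` makes the random extra words repeatable, which is handy when comparing two runs of the same prompt.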

|1.4|

Batch Video Processing – Huge Time Saver!

You can now generate videos at the bare minimum quality and later queue them all for upscaling, refining, or interpolating in a single step.

Just point it to the folder where the videos are saved, and the process will be done automatically.
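Before queueing a batch, it can help to know how many videos sit in that folder, since (as noted in the comments further down) the queue number in Comfy should match the number of videos to process. A small sketch, assuming a flat folder and a guessed list of video extensions; `list_videos` and `queue_count` are hypothetical helper names.

```python
from pathlib import Path

# Assumed list of extensions; adjust to whatever your video node saves.
VIDEO_EXTENSIONS = {".mp4", ".webm", ".mov", ".mkv"}


def list_videos(folder):
    """Return the video files in folder, sorted by name."""
    return sorted(
        path
        for path in Path(folder).iterdir()
        if path.is_file() and path.suffix.lower() in VIDEO_EXTENSIONS
    )


def queue_count(folder):
    """Number of queue runs needed to process every video once."""
    return len(list_videos(folder))


# Example call (the folder name is a placeholder):
# print(queue_count("ComfyUI/output/lowres"))
```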

Added Seed Picker for Each Stage (Upscale/Refine)

You can now, for example, lock the seed during the initial generation, then randomize the seed for the upscale or refine stage.

More Room for Video Previews

No more overlapping nodes when generating tall videos (don't exaggerate with the ratio, obviously)

Expanded Space for Sampler Previews

Enable preview methods in the manager to watch the generation progress in real time.

This allows you to interrupt the process if you don't like where it's going.

(I usually keep previews off, as enabling them takes slightly longer, but they can be helpful in some cases.)

Improved UI

Cleaned up some connections (noodles), removed redundancies, and enhanced overall efficiency.

All essential nodes are highlighted in blue and emphasized right below each corresponding video node, while everything else (backend) like switches, logic, mathematics, and things you shouldn't touch have been moved further down. You can now change settings or replace nodes with those you prefer way more easily.

Notifications

All nodes related to the browser notifications sent when each step is completed, which some people find annoying, have been moved to the very bottom and highlighted in gray. So, if they bother you, you can quickly find them, select them, and delete them

|1.5|

general improvements, some bug fixes

NB:

These two warnings in the console are completely fine. Just ignore them.

WARNING: DreamBigImageSwitch.IS_CHANGED() got an unexpected keyword argument 'select'

WARNING: SystemNotification.IS_CHANGED() missing 1 required positional argument: 'self'

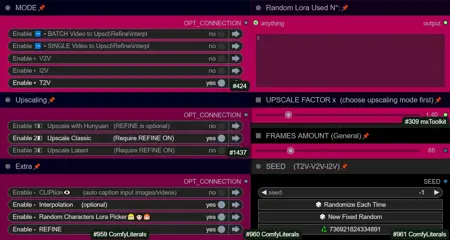

🟪 "AIO | ULTRA "

Embrace This Beast of Mass Video Production!

This version is for the truly brave professionals and unlocks a lot of possibilities.

Plus, it includes settings for higher quality, sharper videos, and even faster speed, all while being nearly glitch-free.

All older workflows have also been updated to minimize glitches, as explained in my previous article.

From Concept to Creation in Record Time!

We are achieving world-record speed here, but at the cost of some complexity. These workflows are becoming increasingly intimidating despite efforts to keep them clean and hide all automations in the back-end as much as possible.

That's why I call this workflow ULTRA: a powerhouse for tenacious Hunyuan users who want to achieve the best results in the shortest time possible, with all tools at their fingertips

Key Features and Improvements:

Handy Console: Includes buttons to activate stages with no need to connect cables or navigate elsewhere. Everything is centralized in one place (Control Room), and functions can be accessed with ease.

T2V, I2V*,V2V, T2I, I2I Support: Seamless transitions between different workflows.

*I2V: an image is repeated for x frames and sent to latent. Official I2V model is not out yet. There's a temporary trick to do I2V here which requires Kijai's nodes.

Wildcards + Custom Prompting Options: Switch between Classic prompting with wildcards or add random words in a dedicated box, with automatic customizable word swapping or censoring.

Video Loading: Load videos directly into upscalers/refiners and skip the initial inference stage.

Batch Video Processing: Upscale or Refine multiple videos in sequence by loading them from a custom folder.

Interpolation: Smooth frame transitions for enhanced video quality.

Random Character LoRA Picker: Includes 9 LoRA nodes in addition to fixed LoRA loaders.

Upscaling Options: Supports upscaling, double upscaling, and downscaling processes.

Notifications: Receive notifications for each completed stage, organized in a separate section for easy removal if necessary.

Lightweight Captioning: Enables captioning for I2V and V2V with minimal additional VRAM usage (only 100MB).

Virtual Vram support.

Use the GGUF model with Virtual VRAM to create longer videos or increase resolution.

Hunyuan/Skyreel (T2V) quick merges slider

Switch from Regular Model to Virtual Vram / GGUF with a slider

Latent preview to cut down upscaling process.

A dedicated LoRA line exclusively for upscalers, toggled via a dedicated button.

RF edit loom

Upscale using Multiplier or "set to longest size" target

A button to toggle Wave Speed and FastLoRA as needed for upscaling only.

UI improvements based on user feedback

- Sequential Upscale Under 1x / Double Upscaling

You can now downscale using the upscale process and then re-upscale with the refiner, or customize upscaler multipliers to upscale 2 times.

New Functionality:

The upscale value range now includes values as low as 0.5.

Two sliders are available: one for the initial upscale and another for the refiner (essentially another sampler, always V2V).

Applications:

Upscale, Refine or combine the two

Upscale fast (latent resize + sampler) or accurate (resize + sampler)

Refine (works the same as upscale, can be used alone or as an auxiliary upscaler)

Double upscaling: Start small and upscale significantly in the final stage.

Downscale and re-upscale: Deconstruct at lower resolution and reconstruct at higher quality.

Combos: Upscale & Refine / Downscale & Upscale
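To see what the two sliders do to the frame size, here is a rough sketch that chains an upscale multiplier and a refiner multiplier. The function name `stage_sizes` is hypothetical, and applying the 0.5 lower bound to both sliders is an assumption; the text above only states it for the upscale value.

```python
def stage_sizes(width, height, upscale, refine, minimum=0.5):
    """Return the frame size after generation, after upscale, and after refine."""
    sizes = [(width, height)]
    for factor in (upscale, refine):
        if factor < minimum:
            raise ValueError(f"multiplier {factor} is below the assumed minimum {minimum}")
        w, h = sizes[-1]
        sizes.append((round(w * factor), round(h * factor)))
    return sizes


# Downscale and re-upscale: deconstruct at half size, then rebuild larger.
print(stage_sizes(480, 320, upscale=0.5, refine=3.0))
# Double upscaling: start small and grow in two steps.
print(stage_sizes(480, 320, upscale=1.5, refine=1.5))
```

The real nodes may snap sizes to values the model accepts, so treat the numbers as estimates.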

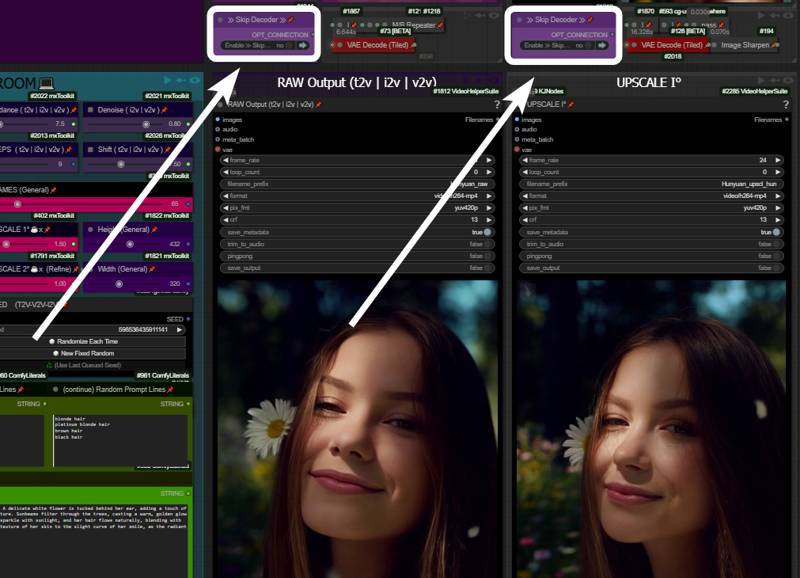

- Skip Decoders/Encoders Option

Save significant time by skipping raw decoding for each desired stage and going directly to the final result.

How It Works: If your prompt is likely to produce a good output and the preview method ("latent2RGB") is active in the manager, you can monitor the process in real-time. Skip encoding/decoding by working exclusively in the latent space, generating and sending latent data directly to the upscaler until the process completes.

Example:

A typical medium/high-quality generation might involve:

Resolution: ~ 432x320

Frames: 65

One Upscale: 1.5x (to 640x480)

Total Time: 162 seconds

In this example case, by activating the preview in the manager and skipping the first decoder (the preview before upscaling), you can save ~30 seconds. The process now takes 133 seconds instead of 162.

Bypassing additional decoders (e.g., for further upscaling or refinement) can save even more time.
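The numbers in this example can be checked with a little arithmetic. Note that 432 x 1.5 is 648, not 640; the quoted 640x480 is consistent with snapping each side down to a multiple of 16, which is an assumption made here and not something the workflow documents. `scaled_size` and `time_saved` are hypothetical helper names.

```python
def scaled_size(width, height, factor, multiple=16):
    """Scale both sides, then snap each down to a multiple (assumed: 16)."""
    def snap(value):
        return max(multiple, int(value * factor) // multiple * multiple)
    return snap(width), snap(height)


def time_saved(before_s, after_s):
    """Return the saving in seconds and as a percentage of the original time."""
    saved = before_s - after_s
    return saved, 100 * saved / before_s


print(scaled_size(432, 320, 1.5))   # (640, 480)
seconds, percent = time_saved(162, 133)
print(f"{seconds} s saved, about {percent:.0f}% of the run")
```

So skipping the first decoder saves 29 seconds here, a bit under a fifth of the total run.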

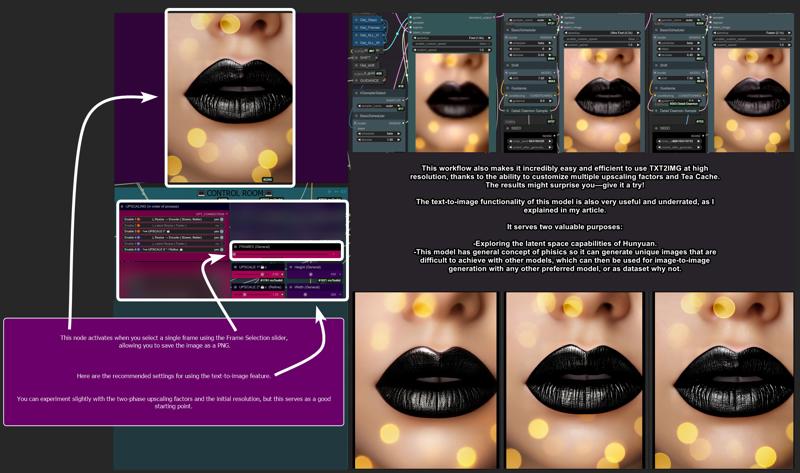

- Image Generation (T2I and I2I)

Explore the HUN latent space with these image generation capabilities.

When the number of frames is set to 1, the image node activates automatically, allowing the image to be saved as a PNG.

Use the settings shown here for the best results:

T2I Example Gallery: Hunyuan Showcase

- Structural Changes / Additional Features

Motion Guider for I2V

This feature enhances motion for image-to-video workflows, lowering the chance of getting a static video as a result.

9 Random Character Loras Loader: Previously limited to 5, now expanded to 9.

Random Character Lora Lock On/Off:

By default, each seed corresponds to a random Lora

(e.g., seed n° 667 = Lora n° 7).

Now you can unlock this "character Lora lock on seed" and regenerate the same video with a different random Lora while keeping the main seed.
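The lock behavior can be illustrated with a short sketch. The actual seed-to-Lora mapping used by the workflow is not documented here, so this does not reproduce the "667 = 7" example; `pick_lora` and its parameters are hypothetical and only show the difference between locked and unlocked picking.

```python
import random


def pick_lora(main_seed, num_loras=9, locked=True, lora_seed=None):
    """Pick a Lora slot (1..num_loras).

    locked=True:  the pick depends only on main_seed, so it never changes.
    locked=False: the pick uses lora_seed (or fresh randomness if None),
                  while main_seed stays free to drive the video itself.
    """
    seed = main_seed if locked else lora_seed
    return random.Random(seed).randrange(1, num_loras + 1)


print(pick_lora(667))                             # same slot every time
print(pick_lora(667, locked=False))               # may differ between runs
print(pick_lora(667, locked=False, lora_seed=3))  # repeatable, independent of 667
```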

Clarifications:

Let’s call things by their real names:

"Refine" and "Upscale" are both samplers here, each optimized for specific stages:

Upscale: Higher steps/denoise, fast results, balanced quality.

Refine: Lower steps/denoise, focused on fixing issues and enhancing details.

Refine can work alone, without upscaling, to address small issues or improve fine details.

UI Simplification:

The "classic upscale" is now replaced by a faster and better-performing resize + sharpness operation and hidden in the back-end to save space.

Frame Limit Issue (101+ Frames):

Generating more than 101 frames with latent upscale can cause problems. To address this, I added an option to upscale videos before switching to latent processing.

- Bug Fixes

Latent Upscale Change:

Latent upscaling now uses bicubic interpolation instead of nearest-exact, which performs better based on testing.

"Cliption" Bug Fixed

201-Frame Fix:

Generating 201-frame perfect loops caused artifacts with latent upscale. Switching to "resize" via the pink console buttons now resolves this issue.

- Performance and other infos:

Once you master it, you won’t want to go back. This workflow is designed to meet every need and handle every case, minimizing the need to move around the board too much. Everything is controlled from a central "Control Room."

Traditionally, managing these functions would require connecting/disconnecting cables or loading various workflows. Here, however, everything is automated and executed with just a few button presses.

Default settings (e.g., denoise, steps, resolution) are optimized for simplicity, but advanced users can easily adjust them to suit their needs.

-Limitations:

No Audio Integration:

While I have an audio-capable workflow, it doesn’t make sense here. Audio should be processed separately for professional results.

No Post-Production Effects:

Effects like color correction, filmic grain, and other post-production enhancements are left to dedicated editing software or workflows. This workflow focuses on delivering a pure video product.

Interpolation Considerations:

Interpolation is included here. I set up the fastest one I could find, not necessarily the best one. For best results, I typically use Topaz for both extra upscaling and interpolation after processing, but it is up to the user to choose their favourite interpolation method or final upscaling if needed.

Requirements:

ULTRA 1.2:

-Tea cache

ULTRA 1.3:

-UPDATE TO LATEST COMFY IS NEEDED!

-Wave Speed

-ClipVitLargePatch14

ULTRA 1.4 / 1.5:

-UPDATE TO LATEST COMFY IS NEEDED!

https://github.com/pollockjj/ComfyUI-MultiGPU

https://github.com/chengzeyi/Comfy-WaveSpeed

https://github.com/city96/ComfyUI-GGUF

https://github.com/logtd/ComfyUI-HunyuanLoom

https://github.com/kijai/ComfyUI-VideoNoiseWarp

NB:

The following warnings in the console are completely fine. Just ignore them:

WARNING: DreamBigImageSwitch.IS_CHANGED() got an unexpected keyword argument 'select'

WARNING: SystemNotification.IS_CHANGED() missing 1 required positional argument: 'self'

Update Changelogs:

|1.1|

Better color scheme to easily understand how the upscaling stages work

Check images to understand

|1.2|

Wildcards.

You can now switch from the Classic Prompting system (with wildcards allowed)

to the fancy one previously available

|1.3|

An extra wavespeed boost kicks in for upscalers.

Changed samplers to native Comfy: no more TTP, no more interrupt error messages

Tea cache is now a separate node.

Fixed a notification timing error and text again.

Replaced a node that was causing errors for some users: "if any" now swaps with "eden_comfy_pipelines."

Added SPICE, an extra-fast LoRA toggle that activates only in upscalers to speed up inference at lower steps and reduce noise.

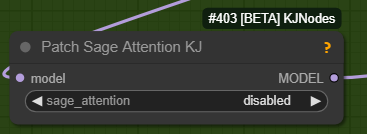

Added Block Cache and Sage to the setup. Users who have them working can enable them.

Changed the default sampler from Euler Beta to the new "gradient_estimation" sampler introduced in the latest Comfy update.

Added a video info box for each stage (size, duration).

Removed "random lines."

Adjusted default values for general use.

Upscale 1 can now function as a refiner as well.

When pressing "Latent Resize" or "Resize," it will automatically activate the correct sampler.

A single-frame image is now displayed in other stages as well (when active).

Thanks to all the users who contributed on Discord to this workflow's improvements!

|1.4|

Virtual Vram support

Hunyuan/Skyreel quick merges slider

Toggle to switch from Regular Model to Virtual Vram / GGUF

Longer vids / Higher Res / extreme upscaling now possible

Default res changed to 480x320, which looks like a balanced middle ground for low-res quick vids; most users should be OK with that.

Latent preview for skip preview mode

Switch toggle to enable/disable Exclusive LoRA for upscalers

RF edit loom

V2V loading time improved

Upscale to longest size target

Fixed slider upscale mismatch

info node moved

clean up and fixes

better settings for general use

upscale one can now use the optional "resize to longest size" slider

added extra wave speed toggle for upscalers

added exclusive loras line for upscalers

general fixes

UI improvements based on user feedback

fixed fast lora string issue on bypass in upscalers

more cleaning

changed exclusive loras for upscalers again: the main fast lora is NOT going to pass through that line, since it already has a separate toggle (upscale with extra fast lora), previously called SPICE FOR UPSCALING.

fixed output node size for videos

moved resize by "longest size" toggle in extra menu

added extra wave speed toggle

control room is finished... for now. I don't want to stress Aidoctor further. He already did a great job

lower fast lora default value now to 0.4

fixed VIDEO BATCH LOADING

|1.5|

general improvements, UI improvements, some bug fixes

leap fusion support

Go With The Flow support

Bonus TIPS:

Here is an article with all the tips and tricks I'm writing down as I test this model:

https://civarchive.com/articles/9584

If you struggle to use my workflows for any reason, you can at least refer to the article above. You will get a lot of precious quality-of-life tips to build and improve your Hunyuan experience.

All the workflows labeled with an ❌ are OLD and highly experimental; they rely on Kijai nodes that were released at a very early stage of development.

If you want to explore them you need to fix them yourself, which should be pretty easy.

CREDITS

Everything I do, I do in my free time for personal enjoyment.

But if you want to contribute,

there are people who deserve WAY more support than I do,

like Kijai.

I’ll leave his link,

if you’re feeling generous go support him.

Thanks!

Last but not least:

Thanks to this community, especially those who have given me advice and experimented with my workflows, helping improve them for everyone.

Special thanks to:

https://civarchive.com/user/galaxytimemachine

for their peculiar and precise method of operation in finding the best settings and for all the tests conducted.

https://civarchive.com/user/TheAIDoctor

for his brilliance and for dedicating his time to create and modify special nodes for this workflow madness! Such an incredible person.

and

https://github.com/pollockjj/ComfyUI-MultiGPU

Also special thanks to:

Tr1dae

for creating HunyClip, a handy tool for quick video trimming. If you work with heavy editing software like DaVinci Resolve or Premiere, you'll find this tool incredibly useful for fast operations without the need to open resource-intensive programs.

Check it out here: [link]

Have fun

Comments (80)

Honestly, I don't even remember which settings I used when I wrote that in the guide, but for sure, it was lower steps and resolutions compared to the settings you’ll find when loading the latest workflow I’m sharing here. I’ve changed the settings a lot over time, and the latest settings I’m leaving in the workflow are more generally oriented for a 'wider audience.' The ones you're talking about were probably even Kijai nodes, not the native ones. I don't have much time in the next few days, but I'll see if I can provide an advanced workflow with Kijai nodes instead of the native ones that everyone seems to be using now. Damn, making and adjusting workflows is so time-consuming that I’ve done nothing except connect noodles in the last few days. I need a break 🤣

I’ll start by saying that I really appreciate the way you work and what you’re offering FOR FREE to everyone. However, I’d like you to take this message as friendly feedback from someone who uses ComfyUI in a very "plug and play" way, so to speak...

That said, while version 1.4 is well-structured and efficient, I must admit that I currently prefer and use version 1.2. Despite being more "basic," it’s the one I feel most comfortable with, precisely because it’s cleaner.

I also want to emphasize that this is just feedback, not criticism, especially because version 1.4 is really cool. However, in my opinion, it’s a bit too packed with "non-essential" elements and features, which might intimidate the average ComfyUI user.

Anyway, great job and thank you! 😉

yeah i guess that is why the basic version is there XD

You're absolutely right @BobDF . In fact, I was thinking of giving these latest versions different names instead of always calling them "Advanced" with sequential numbers.

Each version, after all, adds something extra, and I’ve reached a point where it’s no longer worth going further on the intimidating side, as you said. Exactly.

Thanks for the comment.

At this point, I think I’ll work backwards to improve the previous versions

since some of them contain small bugs.

This way, you’ll also be able to benefit from the 1.2 you’re comfortable with 💪🤍

You can now set the length by replacing the LATENT SIZE (T2V) node with an EmptyHunyuanLatentVideo node. However, Get_Frames will no longer be able to connect. Will this cause any problems? (Will I2V become unusable?)

@sand8029728 Generally speaking, if you replace the sampler (in this case, I used the tea cache) with another sampler of your choice, and you need the number of frames to connect to "get frames,"

just right-click on the sampler and select "Convert widget to input."

So lets say your sampler or whatever node has a bounded "frames" , you can set it to receive a value from the "Get_frames"

I got past the errors, using default settings i2v, but the video output for me is just a blur. Too low resolution output to even tell what it is. What am I doing wrong? Btw, thank you for your work on this amazing workflow; can't wait to get it going!

the default settings work here. what is the final res you are getting?

maybe try changing the resolution? i don't know your settings, loras, prompts, length.. tell me more

@LatentDream just want to say thanks for all your detailed documentations LD.I have had blurry outputs also but will start a new thread on that as i think i might have solved it.

@vorpuser how you solved it? i just got the same problem

@madisonross i dont know if i have solved it but i posted another clean thread this morning here in the discussion that described what im trying and whats working

@vorpuser were is this thread?

This is me running everything default. Is this how it's supposed to look? https://imgur.com/wKWAClY

@Cyph3r are you sure you are using the FAST model and not the default one?

@LatentDream I had same issue on another workflow and changed it to euler and got clear videos. although hit or miss cartoony or real.

@LatentDream i believe so. here's my settings. they are default except my models are organized in subfolders only. atm i'm just trying to do default settings on t2v: https://imgur.com/tKDZPSJ and Latent Size node set to: 544x960. I'm sure your workflow is perfect. Problem is probably on my side.

Is this about what the quality should look like at that resolution (544x960)? https://imgur.com/9tFYEsl

@Cyph3r exactly the same for me. Have you tried flipping the ratio to 16x9? Also are you on windows or LInux ?

@Cyph3r please try to flip your ratio to 16x9 from the default of 9x16. You do this by looking for the width and height in the latent node. It’s not a fix obviously as 9x16 should work like it clearly does for others. But for me at least i could move forward with sharp outputs.

@vorpuser will try. i'm on windows. any idea what would cause it to work horizontally and not vertically?

@Cyph3r not yet. but i suspect it might be the order i installed some of the requirements in the virtual Env. Or a specific mismatch of versions between the requirements.

yes @bvcx1984 cartoony or real is normal from hunyuan and really depends on prompting, it is not about this workflow. however it should work with euler beta, simple and normal, which are the 3 i use most

@Cyph3r is this happening with other workflows too?

@LatentDream yes it is. So I tried on another PC (a file copy paste), re-downloaded all models, reinstalled TeaCache node, reloaded workflow fresh, and it's working! No idea which thing fixed it, just glad it's working. It was never an issue with your workflow, though. So now the first raw video output is great, but the refinement introduces ghosting and artifacts. Setting?

Could you please make a low-VRAM version of i2v with Lora support?

(using for example fast-hunyuan-video-t2v-720p-Q4_K_M.gguf)

just swap the model loader and the sampler, is pretty easy

Thank you for the quick reply. I'll try to do it per your advice as soon as I figure out how to unlock the nodes.

@LatentDream what do you mean by sampler? Isn't euler good by default?

@brunotumtay210 no, i mean the sampler from the native nodes instead of the TEA cache sampler, since tea cache may eat more vram

This is amazing and super comprehensive - a YouTube tutorial walking through installation of the models and common errors would be amazing (Think Jboog's or Enigmatic's vid2vid workflow from a while back)

ty

i chatted this to one of your users thinking they created it. they credited you for the work. reposting:

i finally got the t2v section working to recreate your video. i've only been using comfyui about a week now because it supports hunyuan. i previously used webui based image generators like auto1111, forge and easy diffusion. moving to comfyui has been painful and addicting. as someone in a reddit forum said, 'it's like crack for the brain...'. thx for attaching your workflow to the video. it's going to take me a while to figure it all out and install all the goodies, but it's an awesome start. i can't imagine how many hours you spent building it. a labor of love. it generates some very nice videos. a true work of art...

thanks! i suggest you start with the basic workflows, it is the same thing, just fewer options and clearer to understand. If you need anything else just ask, no problem

@LatentDream yes, i'm using some simpler flows, but i enjoy a good tech challenge. i'm new to comfyui so it's good to see what kind of creative things can be done with it.

Thanks LD. Your thoughts and evaluations and workflows are priceless. I had a Blurry outputs issue with my Ubuntu 24.04 install that might be worth discussing. I carefully installed a fresh stem of CUI. And it ran without errors but the outputs were always blurry. Out of focus. The eyes would move the same way as i could see in your demo but was out of focus. I changed the ratio from 9x16 to 16x9 and it worked much better. What do you think is causing that ? I also saw a quality shift if i changed the scheduler from BETA to NORMAL.

here are my install specifics. i read somewhere that numpy should be 1.26.4. Is there a better place to discuss this stuff ? Discord ? thx again man.

Python version: 3.12.8

pytorch version: 2.5.1

Set vram state to: NORMAL_VRAM

Device: cuda:0 NVIDIA GeForce RTX 3090 : cudaMallocAsync

Using pytorch attention

CUDA Version: 12.4

SageAttention 2.0.1

pytorch pytorch::pytorch-2.5.1-py3.12_cuda12.~

torchvision pytorch::torchvision-0.20.1-py312_cu1~

numpy 2.0.1-py312hc5e2394_1

numpy-base 2.0.1-py312h0da6c21_1

pytorch-mutex 1.0-cuda

torchaudio 2.5.1-py312_cu124

@vorpuser send a discord invite so i can see some examples and we can discuss

Could you help me to create a video with a good upscale using this workflow?

The workflow has multiple upscale methods built directly into it. Turning them on only takes one click and then having it save them takes a second click.

General use question: I've messed with seed and guidance and I still always get almost the exact same generation. Even tried to adjust the prompt for clearer instruction. Is there some other cfg equivalent I should be adjusting here?

Great workflow.

If you didn't change the workflow and used the same models, the parameters should "just work" and the seed -1 should change the output pretty dramatically between runs. I would double check you are using the original workflow and its models.

hard to tell, maybe you messed with settings, no idea. as @nojojos2234 said, try the "default" workflow and see if it works.

I have zero clue what I'm doing wrong, but all I'm getting are either black videos or kaleidoscope-looking latent mess.

Played around with VAE's, clips, loras, nothing... Any suggestion?

Updating Pytorch to 2.5.1 and downgrading Numpy to 2.0 did not seem to help, either.

I had the same issue with the 1.2 version and spent hours changing things around. I had assumed it was a mismatched version of something as well. Will give 1.4 a try here in a bit and see if I figure anything out.

@GhostOfToshiba Thank you, I'll greatly appreciate if you'll find a moment to let me know if you solved it. Meanwhile I'll try non-tea version when I'm home and we'll see if that helps.

Double check you have the right llava llama, I had a couple that were wrong but it seems like this is the guy you need.

https://huggingface.co/calcuis/hunyuan-gguf/blob/main/llava_llama3_fp8_scaled.safetensors

@GhostOfToshiba I have not done anything with regards to llava llama, using the one that the Manager downloaded... I'll have a go, will update. Thank you!

@GhostOfToshiba That was precisely it, thank you so, so much!

Is there a Discord to discuss issues, tweaks and fixes? I can't get the batch method to work in the advanced tea version; it only does one from a batch, then finishes.

You need to set the queue count in ComfyUI to the number of videos you want to process.

here : https://i.vgy.me/xkqHsN.jpg

@LatentDream thanks, will try that.

@LatentDream I can't unlock the server because I can't react to the welcome post when using Discord in Firefox.

HELP!

I updated timm to the latest version to try to resolve an issue I was having with another workflow, and it seriously broke your workflow. All the control options are gone. Can someone tell me what version of timm they are using for this workflow? Thanks in advance.

timm? Is that Timm image reward? These workflows do not use it. Am I missing something? Maybe it's a dependency I'm not aware of.
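For comparing package versions between installs, a minimal Python sketch like the one below can help. The package list is only an illustration taken from names mentioned in this thread, and the script should be run with the same Python interpreter that ComfyUI uses (for a portable install, that is assumed to be the embedded one).

```python
from importlib import metadata


def report_versions(packages):
    """Return {package: installed version, or None if it is not installed}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    # Illustrative list only; adjust it to the packages you are debugging.
    wanted = ["timm", "numpy", "torch", "torchaudio"]
    for name, version in report_versions(wanted).items():
        print(f"{name}: {version or 'not installed'}")
```

Posting this output together with the error message makes it easier for others to spot a mismatched dependency.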

after updating when i load the workflow it crashes with:

Loading aborted due to error reloading workflow data

TypeError: word.trim is not a function
    at http://127.0.0.1:8188/extensions/EG_GN_NODES/wbksh.js:49:38
    at Array.filter (<anonymous>)
    at ComfyNode.outSet (http://127.0.0.1:8188/extensions/EG_GN_NODES/wbksh.js:49:16)
    at nodeType.onConfigure (http://127.0.0.1:8188/extensions/EG_GN_NODES/wbksh.js:69:18)
    at ComfyNode.onConfigure (http://127.0.0.1:8188/assets/index-QvfM__ze.js:185030:18)
    at ComfyNode.configure (http://127.0.0.1:8188/assets/index-QvfM__ze.js:54547:23)
    at ComfyNode.configure (http://127.0.0.1:8188/assets/index-QvfM__ze.js:193420:15)
    at LGraph2.configure (http://127.0.0.1:8188/assets/index-QvfM__ze.js:64402:17)
    at LGraph$1.configure (http://127.0.0.1:8188/assets/index-QvfM__ze.js:201005:26)
    at LGraph.configure (http://127.0.0.1:8188/extensions/ComfyUI-Custom-Scripts/js/reroutePrimitive.js:14:29)

This may be due to the following script:

/extensions/EG_GN_NODES/wbksh.js

Ngl thanks for the work but 16 custom nodes (with one obscure deforum one) for a workflow is a tad too much

Which is the obscure one? Get one of the basic workflows if you think this is too much for you ;)

The very dumb deforum node that has not been updated in 7 months, that needs an ancient numpy version that is not compatible with any other node and breaks comfyui installs, maybe? Don't be pissed about it mate, this is not a skill issue, this is a "my core workflow is based on a funky node that has not been updated in 7 months" issue.

@darias234377 no problem 😁 I still do not understand which one it is. I want to see if I can swap it with something else. Can you please point me to this node?

Error

Failed to validate prompt for output 82:

* UNETLoader 12:

- Value not in list: unet_name: 'hunyuan_video_FastVideo_720_fp8_e4m3fn.safetensors' not in []

* HunyuanVideoLoraLoader 253:

- Value not in list: lora_name: 'lora Hun\hyvideo_FastVideo_LoRA-fp8.safetensors' not in []

---

what exactly should I download or do?

Check the setup section at the top left, and make sure you have loaded the correct model and lora.
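A "Value not in list ... not in []" error with an empty list usually means the loader found no files at all in the folder it scans. A rough Python sketch for checking this before queueing is shown below. The `find_missing` helper, the `ComfyUI/models` root, and the `loras` subfolder are assumptions for illustration (`diffusion_models` is the folder named in the setup notes), and the lora in the error above sits in a personal subfolder (`lora Hun`) that will differ on your machine.

```python
from pathlib import Path


def find_missing(models_root, required):
    """Return 'subfolder/filename' entries that do not exist under models_root.

    required maps a subfolder name to the list of filenames expected inside it.
    """
    root = Path(models_root)
    missing = []
    for subfolder, names in required.items():
        for name in names:
            if not (root / subfolder / name).is_file():
                missing.append(f"{subfolder}/{name}")
    return missing


if __name__ == "__main__":
    # Hypothetical install location and layout; adjust to your own setup.
    required = {
        "diffusion_models": ["hunyuan_video_FastVideo_720_fp8_e4m3fn.safetensors"],
        "loras": ["hyvideo_FastVideo_LoRA-fp8.safetensors"],
    }
    for entry in find_missing("ComfyUI/models", required):
        print("missing:", entry)
```

If a file exists but sits in a different subfolder than the one saved in the workflow, re-selecting it in the loader node should be enough.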

I want to add two loras. Where do I enter their names? Do I have to create a node? Where are they connected? Thanks.

Top left, there's the lora section.

Thanks. Do I have to "reload node" to activate them?

@gandolfi2004987 they are bypassed.

Select a node and press CTRL+B to activate it; repeat for as many nodes as you want to use.

@LatentDream thanks. CTRL+B seems to be the same as "reload node". Your workflow is complete and complex, with a lot of possibilities.

Another 'work'flow that doesn't work. Just generates kaleidoscope noise.

Edited: We are all in this new technology together and it can definitely cause hair pulling and all the stress. I have been helped by so many amazing creators and I appreciate when others ask questions because then I learn something new too. Thank you all on every side of the technology for the help and hard work!

This workflow works exceptionally well for me. Might've needed to change some models here and there, but all in all it's great.

Thanks guys. I do my best to share my experience and knowledge in the hope of being helpful, so we can grow all together and everyone benefits. I don't do it for buzz or money.

@Minase460 I already read somewhere about someone else having kaleido issues. Just curious to know if a basic workflow works for you, even the native comfy one. Double check the setup files. If you solve it, please tell us what you did.

@LatentDream I'm sorry for the passive-aggressive comment; I was quite frustrated at the time after trying 6 different workflows, none of which worked. I was able to fix the issue. It seems to be related to the model/VAE. The default settings did not work. I'm not exactly sure what I did to fix it. The workflow is very well laid out and professional. I used the Tea 'basic' workflow. These are my experimentation results (text to video only):

I currently have it working with hunyuan_video_fastvideo_720fp8 as the model, weight type default.

Official FP8 model generates only kaleidoscope noise. Official BF16 model gives results, but I can tell no difference in quality whatsoever and the generation time is much, much (insanely moreso) longer.

clip_1 for clip 1, llava_llama3_fp8_scaled for clip2.

hunyaunvideosafetensors_VAEFP32 as VAE. If I alter VAE to BF16, it just generates a blank black result.

Altering any of these settings seems to break it. If I change the clip at all, such as the one you recommended in the description of the workflow, " Long-Vit-L-14-GmP..." it just generates errors.

All lora nodes working as intended.

I have also noticed that you cannot alter the total pixel size of the LatentVideo node. You can only swap the 480 and 320 values between width and height; using any other size will break it and generate an error.

But yeah, other than changing those models and settings, and restarting comfy loads of times, I'm not sure precisely what was done to correct the issue. The workflow with the right settings and the fastvideo model is generating very fast on a 4090, with about 90 seconds from input to result, and the upscaling and interpolation looks great, in most cases. I am going to try some other workflows for a second time but this is the first one I've actually gotten to work and the results seem to be very fast and look good. I saw mention somewhere on another workflow of a new node that just came out that has a big effect on quality with little to no impact on performance, but I can't remember at the moment what that was called.

I'm also unsure how to implement the model mentioned in this section of your description, as it seems unavailable for download anywhere.

"💥 INCREDIBLE MODEL JUST CAME OUT 💥 I'm speechless."

@Minase460 I apologize as well. I have updated my initial comment.

I have found these workflows work well only with the fp8 fast model or the fp8 distilled model. When using the fast model I use the fast lora and I turn it off for the fp8 distilled model. I have really not found any benefit to running it with the BF16 model, I have tried over and over again and I think it just wasn't built for it. This was initially meant (I believe) by the creator to be more of a balance between fast and good. But it wasn't really meant to be a 24gb vram or more workflow for people with more powerful cards like you have.

Clip name 1 you mentioned should be the 324mb file from Hugging Face. I know you mentioned you tried it but just wanted to make sure we are talking about the same one, because that is what works for me and was the one recommended in setup.

https://huggingface.co/zer0int/LongCLIP-SAE-ViT-L-14/blob/main/Long-ViT-L-14-GmP-SAE-TE-only.safetensors

Clip name 2 is the llava_llama3_fp8_scaled.safetensors file. I have tried the fp16 one as well but it doesn't seem to change anything.

https://huggingface.co/calcuis/hunyuan-gguf/blob/main/llava_llama3_fp8_scaled.safetensors

For the video size node, I have pushed it up to 720w x 1088h on a 4070ti super 16gb vram and it only takes about 2 minutes longer, but produces a much higher quality video the first time. This may be a node update issue? I know that this stuff just keeps coming out so quickly and I have gotten used to updating my nodes/comfyui every day.

I don't know if any of that will help but LatentDream has been helpful to me with some of my questions so they may be able to help more directly if needed.

And the INCREDIBLE MODEL JUST CAME OUT was there from when the Hunyuan model was initially released. It just takes you to the information page about the people who created it. But it just is talking about the Hunyuan model so you aren't missing anything there, it just made more sense 2 months ago.

This thread also was having trouble with the Kaleidoscope problem and they seemed to have found a solution.

https://civitai.com/models/1007385?dialog=commentThread&commentId=670910

@Cyphermodx Thanks for your reply and that information. Do you have any insight as to how to fix this error generated when altering the video node pixel size? The error is generated once it reaches the upscaling step of the process.

TeaCacheHunyuanVideoSampler

Sampling failed: shape '[1, 12, 32, 44, 16, 1, 2, 2]' is invalid for input of size 1105920

ComfyUI\custom_nodes\Comfyui_TTP_Toolset-main\TTP_toolsets.py", line 1063, in sample

raise RuntimeError(f"Sampling failed: {str(e)}")

RuntimeError: Sampling failed: shape '[1, 12, 33, 46, 16, 1, 2, 2]' is invalid for input of size 1227264

etc

On the kaleidoscope thing, I did read that comment thread, but the clip he is suggesting to use is the one I am already using, so that isn't the problem
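The "shape ... is invalid for input of size ..." message above can at least be checked with simple arithmetic: the tensor reaching the sampler holds more elements than the target shape can take. The sketch below does that check in plain Python. The `snap_down` helper and its multiple of 16 are an assumption (an 8x spatial VAE downscale combined with the 2x2 patch visible in the shape), not something confirmed in this thread, and since the error appears at the upscaling step, it is the size produced at that stage that would need to line up.

```python
from math import prod


def reshape_gap(shape, input_size):
    """Elements in the tensor minus elements the target shape can hold."""
    return input_size - prod(shape)


def snap_down(value, multiple=16):
    """Round a pixel dimension down to a multiple (assumed here to be 16)."""
    return max(multiple, (value // multiple) * multiple)


# Numbers copied from the first error message above.
shape = [1, 12, 32, 44, 16, 1, 2, 2]
print(prod(shape))                      # 1081344
print(reshape_gap(shape, 1105920))      # 24576

# Example of snapping a width/height pair before sampling.
print(snap_down(716), snap_down(1088))  # 704 1088
```

The gap of 24576 equals 32 x 768, where 768 = 12 x 16 x 1 x 2 x 2 is the number of elements per patch position, so the tensor appears to carry one more line of patches than the shape expects. That fits a size mismatch between stages, but it does not by itself say which node produces it.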

@Minase460 Are you using text 2 video and then upscaling or one of the other methods? Just need to know at what point the problem is triggering.

And that is crazy on the kaleidoscope thing. I honestly am just guessing at what that means, but I assume super distorted colors and no "real" video output, just kind of random noise? That's crazy that it keeps happening. I would tag @LatentDream , they are busy and only get on a little while each day but they have helped me a ton.

@Cyphermodx It happens when using text 2 video with the "basic tea" workflow. Some sizes seem to work, some generate the error. I have tried using an aspect ratio calculator to maintain the same aspect ratio while only increasing the number of pixels, but this does not seem to matter. I did try the 720w x 1088h you suggested and my gen time increased a crazy amount and it still generated the error.

As for the kaleidoscope thing, it is even mentioned on the main page for the model:

https://civitai.com/models/1018217/hunyuan-video-safetensors-now-offical-fp8

"Even on a 8GB card I can render in FP8 at a decent speed (5-30 Seconds Per IT) however like many of you I am getting rainbow static. I am not sure if this is do to bad attention such as SAGE (An old Build) or a OOM issue that is not prompting the OOM error message"

@Minase460 I definitely believe you on the kaleidoscope thing, I just have been extremely lucky I guess and not actually experienced it yet.

I'll see if I can find anything but unfortunately that's what stinks about being early adopters, not a lot of help places to go for easy information. Hopefully someone else will be able to chime in with what fixed it for them!