Over 60% faster rendering with enable_sequential_cpu_offload set to false

For >=24 GB VRAM

You can now prepare your image with my Outpainting FLUX/SDXL for CogVideo

Animate from a still image using CogVideoX-5b-I2V

Make sure all 3 parts of the .safetensors model are downloaded to models/CogVideo/CogVideoX-5b-I2V/transformer:

diffusion_pytorch_model-00001-of-00003 4.64 GB

diffusion_pytorch_model-00002-of-00003 4.64 GB

diffusion_pytorch_model-00003-of-00003 1.18 GB

https://huggingface.co/THUDM/CogVideoX-5b-I2V/tree/main/transformer
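A quick way to confirm the download finished is to check the folder for the three shard files. The sketch below is a minimal check, assuming the files carry a `.safetensors` extension and that the `models/CogVideo/...` path is relative to your ComfyUI folder. `missing_shards` is a hypothetical helper name, not part of ComfyUI or the wrapper.

```python
from pathlib import Path

# Shard names as listed above; the .safetensors extension is an assumption.
EXPECTED_SHARDS = [
    f"diffusion_pytorch_model-{i:05d}-of-00003.safetensors" for i in range(1, 4)
]


def missing_shards(transformer_dir):
    """Return the expected shard names that are absent or empty in transformer_dir."""
    folder = Path(transformer_dir)
    missing = []
    for name in EXPECTED_SHARDS:
        path = folder / name
        # A zero-byte file usually means an interrupted download.
        if not path.is_file() or path.stat().st_size == 0:
            missing.append(name)
    return missing


if __name__ == "__main__":
    # Assumed to be run from the ComfyUI root; adjust to your install.
    folder = "models/CogVideo/CogVideoX-5b-I2V/transformer"
    missing = missing_shards(folder)
    if missing:
        print("Missing or empty:", ", ".join(missing))
    else:
        print("All 3 transformer shards found.")
```

This only checks that the files exist and are not empty. It does not verify the sizes listed above or the file contents, so a partially downloaded shard can still pass.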

I found this on the GitHub page; it looks like the error some people are having:

https://github.com/kijai/ComfyUI-CogVideoXWrapper/issues/55

If rendering takes a long time: in the CogVideo Sampler node, try lowering "steps" from 50 to something like 20 or 25. You may get very little motion, but it might work.

It looks like the sampler only accepts 49 for "num_frames".
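The sampler limits mentioned here, together with the ones in the validation error quoted in the comments, can be checked before queueing a run. This is a rough sketch, not the wrapper's own validation: `check_sampler_settings` is a hypothetical helper, the 49-frame rule only reflects the observation above, and the scheduler list and the `denoise_strength` maximum of 1.0 are copied from that error message.

```python
# Scheduler names copied from the validation error quoted in the comments.
ALLOWED_SCHEDULERS = [
    "DPM++", "Euler", "Euler A", "PNDM", "DDIM", "CogVideoXDDIM",
    "CogVideoXDPMScheduler", "SASolverScheduler", "UniPCMultistepScheduler",
    "HeunDiscreteScheduler", "DEISMultistepScheduler", "LCMScheduler",
]


def check_sampler_settings(steps, num_frames, denoise_strength, scheduler):
    """Return a list of human-readable problems; an empty list means no issue found."""
    problems = []
    if steps < 1:
        # Assumption: the sampler needs at least one step.
        problems.append(f"steps must be at least 1, got {steps}")
    if num_frames != 49:
        # Based on the observation that only 49 seems to be accepted.
        problems.append(f"num_frames should be 49, got {num_frames}")
    if denoise_strength > 1.0:
        problems.append(f"denoise_strength {denoise_strength} is bigger than max of 1.0")
    if scheduler not in ALLOWED_SCHEDULERS:
        problems.append(f"scheduler '{scheduler}' is not in the allowed list")
    return problems


if __name__ == "__main__":
    # The values from the reported error: denoise_strength 16.0 and scheduler 'DPM'.
    for problem in check_sampler_settings(25, 49, 16.0, "DPM"):
        print(problem)
```

Values like a `denoise_strength` of 16.0 or a scheduler named 'DPM' may mean the workflow was saved with an older version of the node, so reselecting the values in the CogVideo Sampler is worth trying first.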

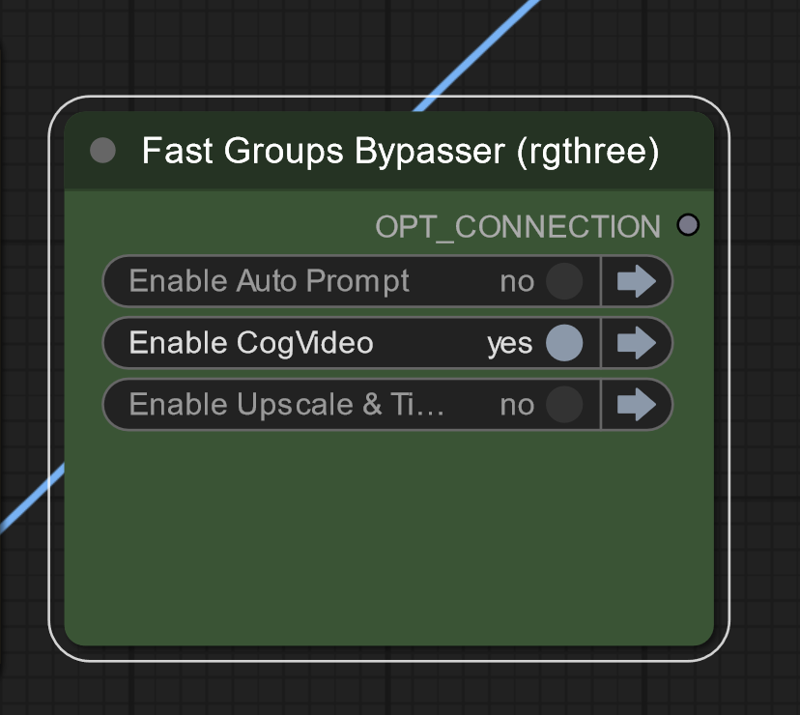

On lower-VRAM systems, run the groups separately


Description

Runs slower than 3.0

Update broke old workflow

This also renders faster


Comments (9)

Oh there's a version 3 now! I'll have to give it a shot.

Have you found new movement prompts for animating img2vid differently? I'm guessing all types of camera movements would work?

I am still trying to figure that out; changing the seed affects it a lot.

Prompt outputs failed validation CogVideoSampler: - Value 16.0 bigger than max of 1.0: denoise_strength - Value not in list: scheduler: 'DPM' not in ['DPM++', 'Euler', 'Euler A', 'PNDM', 'DDIM', 'CogVideoXDDIM', 'CogVideoXDPMScheduler', 'SASolverScheduler', 'UniPCMultistepScheduler', 'HeunDiscreteScheduler', 'DEISMultistepScheduler', 'LCMScheduler']

Hi! I got an error... Could someone help?

DownloadAndLoadCogVideoModel

Torch not compiled with CUDA enabled

I am not getting any animation? Where do you set the frames?

Seems to be some kind of connection issue. Took a screenshot of the CogVideo Sampler and CogVideo Decode nodes (https://ibb.co/VN8pqgy).

I get the error:

Failed to validate prompt for output 833:

* CogVideoDecode 839:

- Exception when validating inner node: tuple index out of range

If I disconnect the vae input from the Decode node, I can't connect the top sample output again. Not sure what's going on there.

Hello there, did you fix your issue?

@HyperFire I ended up moving to Hunyuan so I'm using very different workflows now. Thank you for checking in.

I seem to have all the models ready, but I keep getting the same error from CogVideoDecode: Exception when validating inner node: tuple index out of range