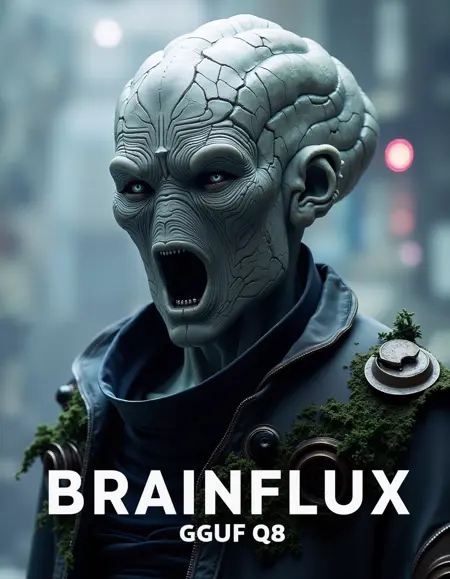

This model is an actual fine-tune of Flux dev (not a merged LoRA), tailored toward generating science fiction content with a cinematic feel. I have tried to improve anatomy; I wouldn't say I've succeeded, but I think there are some improvements over the base model.

Unlike the base Flux model, which in my experience tends to have a green tint, this Brainflux version leans toward a blue hue. It also tends to produce much darker results in shadowed or low-light scenes, while brighter scenes remain unaffected.

Brainflux is the result of multiple failed attempts at fine-tuning the model, and I have spent too much time and too many resources not to share the results with the community... so here it is. I have learned a lot along the way, and I'm hopeful that whenever time allows for a V2, there will be significant improvements over V1.

THIS IS JUST THE UNET. Put the .safetensors file in your ComfyUI/models/unet directory and use it like any other UNet.
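If you prefer to script the install, here is a minimal Python sketch that copies the file into place. The file name and ComfyUI location in the usage line are hypothetical placeholders, and accepting `.gguf` files as well is an assumption based on the ComfyUI-GGUF loader node also reading models from `models/unet`.

```python
from __future__ import annotations

import shutil
from pathlib import Path


def install_unet(model_file: str | Path, comfyui_root: str | Path) -> Path:
    """Copy a UNet checkpoint into <comfyui_root>/models/unet and return its new path."""
    src = Path(model_file).expanduser()
    # Assumption: only full-precision .safetensors or quantized .gguf files are expected here.
    if src.suffix not in {".safetensors", ".gguf"}:
        raise ValueError(f"unexpected model file type: {src.suffix!r}")
    unet_dir = Path(comfyui_root).expanduser() / "models" / "unet"
    unet_dir.mkdir(parents=True, exist_ok=True)  # create the folder if it is missing
    dest = unet_dir / src.name
    shutil.copy2(src, dest)  # copy2 also preserves file timestamps
    return dest


if __name__ == "__main__":
    # Hypothetical file name and install path; adjust to your setup.
    print(install_unet("brainflux_v10.safetensors", "~/ComfyUI"))
```

Copying rather than moving keeps your downloaded original intact; swap `shutil.copy2` for `shutil.move` if disk space is tight.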

This model isn't necessarily an improvement over the original Flux; rather, it's an alternative that offers different outputs. It is far from perfect, and users should consider it a starting point, something to experiment with and build upon. Use this model at your own discretion, knowing it might not fit every project or preference, but it could be interesting for those exploring darker, more sci-fi-oriented content.

Training Details
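Two settings in the config that follows, `timestep_sampling` and `discrete_flow_shift`, decide which noise levels training focuses on. In flow-matching trainers, `sigmoid` sampling commonly means drawing t = sigmoid(s·z) with z ~ N(0, 1), and a flow shift s is commonly applied SD3-style as t' = s·t / (1 + (s - 1)·t). The minimal sketch below assumes both conventions; `sigmoid_scale` is an assumed parameter, and whether Kohya actually combines `discrete_flow_shift` with `sigmoid` sampling depends on the trainer version, so treat the shift as illustrative.

```python
from __future__ import annotations

import math
import random


def sample_sigmoid_timestep(rng: random.Random, sigmoid_scale: float = 1.0) -> float:
    """Draw t in (0, 1) as sigmoid(scale * z) with z ~ N(0, 1) (logit-normal sampling)."""
    z = rng.gauss(0.0, 1.0)
    return 1.0 / (1.0 + math.exp(-sigmoid_scale * z))


def apply_flow_shift(t: float, shift: float) -> float:
    """SD3-style shift. Assuming t = 1 is pure noise, shift > 1 moves samples toward noisier t."""
    return shift * t / (1.0 + (shift - 1.0) * t)


if __name__ == "__main__":
    rng = random.Random(0)
    for _ in range(5):
        t = sample_sigmoid_timestep(rng)
        print(f"t = {t:.3f} -> shifted (s=3): {apply_flow_shift(t, 3.0):.3f}")
```

Sigmoid sampling concentrates training around mid-range noise levels; a shift above 1 then biases those samples toward the noisier end.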

```
{
  ...,
  "discrete_flow_shift": 3,
  "double_blocks_to_swap": 6,
  "flux_fused_backward_pass": false,
  "fp8_base": true,
  "fused_backward_pass": false,
  "fused_optimizer_groups": 0,
  "guidance_scale": 1,
  "learning_rate": 1e-05,
  "learning_rate_te": 0,
  "model_prediction_type": "raw",
  "optimizer": "Adafactor",
  "optimizer_args": "relative_step=False scale_parameter=False warmup_init=False",
  "timestep_sampling": "sigmoid",
  "train_text_encoder": false,
  ...
}
```

Description

GGUF quantized to Q8_0
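Q8_0 is one of the simpler GGUF formats. As I understand the ggml layout, weights are split into blocks of 32, and each block stores one fp16 scale (the block's largest absolute value divided by 127) plus 32 signed 8-bit integers, i.e. 34 bytes per 32 weights, or 8.5 bits per weight. A minimal pure-Python sketch of that scheme follows; it is an illustration, not ggml's code, and the function names are my own.

```python
from __future__ import annotations

import math
import struct

BLOCK_SIZE = 32  # Q8_0 quantizes weights in blocks of 32


def to_fp16(x: float) -> float:
    """Round a float to the nearest IEEE half-precision value (how the scale is stored)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def round_half_away(x: float) -> int:
    """Round to nearest, ties away from zero (like C's roundf)."""
    return int(math.copysign(math.floor(abs(x) + 0.5), x))


def quantize_q8_0(weights: list[float]) -> list[tuple[float, list[int]]]:
    """Return one (fp16 scale, 32 int8 values) pair per block."""
    if len(weights) % BLOCK_SIZE:
        raise ValueError("length must be a multiple of 32")
    blocks = []
    for start in range(0, len(weights), BLOCK_SIZE):
        block = weights[start:start + BLOCK_SIZE]
        scale = max(abs(w) for w in block) / 127.0
        inv = 1.0 / scale if scale else 0.0  # an all-zero block quantizes to zeros
        qs = [round_half_away(w * inv) for w in block]  # values land in [-127, 127]
        blocks.append((to_fp16(scale), qs))
    return blocks


def dequantize_q8_0(blocks: list[tuple[float, list[int]]]) -> list[float]:
    """Reconstruct approximate weights as scale * q."""
    return [scale * q for scale, qs in blocks for q in qs]


def q8_0_size_bytes(n_weights: int) -> int:
    """Storage for n_weights: 2-byte scale + 32 one-byte values per block of 32."""
    return (n_weights // BLOCK_SIZE) * (2 + BLOCK_SIZE)
```

For a model of roughly 12 billion parameters such as Flux dev, `q8_0_size_bytes(12_000_000_000)` gives 12,750,000,000 bytes (about 12.75 GB). Treat that as a ballpark only, since some tensors are typically left unquantized.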

Comments (16)

Would you be willing to share which program you used to fine-tune the full fp16/fp8 Flux model? Your work looks particularly great!

Thank you @FishEyeG! I used Kohya for fine-tuning. The most important settings are shared at the bottom of the model description. Would that be sufficient, or would you like more of a step-by-step walkthrough?

@braintacles No, no, what you shared is very good and detailed. Thank you again!

Already tried it, absolutely fantastic model!

Thank you for the feedback @RizhanZaradi! Feel free to leave a review so other people give it a try too!

Having a problem with the Q8 GGUF:

mat1 and mat2 shapes cannot be multiplied (4096x64 and 256x768)

I'm using ComfyUI, and Flux dev Q8 was running smoothly.

I have received a similar comment from another user. My friends have been using the model without any issue so I would recommend:

1- Updating ComfyUI

2- Using the Comfy-recommended GGUF custom node (https://github.com/city96/ComfyUI-GGUF)

3- Making sure you are using the proper CLIP models, just in case.
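For context on the error itself: the message only states the rule a matrix product must satisfy, namely that mat1's column count must equal mat2's row count, and here 64 ≠ 256. It says nothing about the prompt or settings, which fits the fix reported below (updating the loader node). A minimal stdlib sketch of that shape check, mimicking the message format rather than reproducing PyTorch's code:

```python
from __future__ import annotations


def matmul_shape(a: tuple[int, int], b: tuple[int, int]) -> tuple[int, int]:
    """Return the shape of a @ b, or raise a PyTorch-style error when inner dims differ."""
    (m, k1), (k2, n) = a, b
    if k1 != k2:  # the inner dimensions must agree
        raise RuntimeError(
            f"mat1 and mat2 shapes cannot be multiplied ({m}x{k1} and {k2}x{n})"
        )
    return (m, n)


if __name__ == "__main__":
    print(matmul_shape((4096, 64), (64, 3072)))  # compatible shapes
    matmul_shape((4096, 64), (256, 768))  # raises, reproducing the reported message
```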

@braintacles Updating the custom node solved the problem, thanks!

@Mumu1188 glad to hear it! @sargantanas you might want to do the same!

Any chance of an NF4 version @braintacles ?

@Mirabilis the consensus seems to be that GGUF is better... but I'm not sure. Have you tried it?

I'm more than happy to offer something for those low on VRAM, but I'd rather offer either NF4 or a Q4_K_S GGUF, not both, for the sake of simplicity. I'm open to suggestions and discussion!

@braintacles Unfortunately, I can't get the GGUF version to run in Forge, which is why I'm asking. I get this mat1/mat2 error every time, and Forge is fully up to date.

RuntimeError: mat1 and mat2 shapes cannot be multiplied (4032x64 and 256x768)

NF4 runs without problems in Forge. I'm on an 8 GB 3070 Ti, so that might factor into things.

Still, no biggie. I just saw your aliens, thought about my recent Mode Magazine post, and figured it would have been quite cool to try out the model and see how the outputs came out.

I'd vote for the Q4_K_S version for people on low VRAM.

I have added a Q4_K_S quant of this model to Hugging Face; I hope that's OK with you. https://huggingface.co/skunkworx/brainflux_v10-Q4_K_S

Thank you, and I appreciate you letting me know! Totally fine by me, and thanks for the link back to this page! If you don't mind adding a link to my Hugging Face profile as well, that'd be awesome! https://huggingface.co/braintacles

@braintacles link added.