Introduction

Here's my compact ComfyUI workflow. I try to keep it as intuitive as possible. As with prompting, less is more: it should be straightforward and simple. I built it with a left-to-right logical flow in mind, so it should be easy to read and understand.

Features

Prompt Composer

Img2img with mask

Integrated Painting editor

Unified ControlNet

IP-Adapter

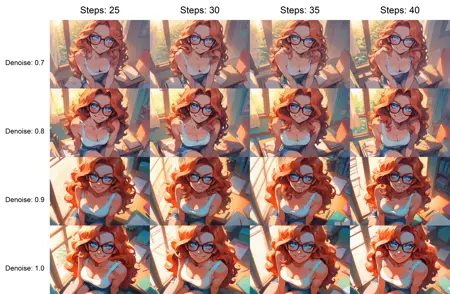

XY Plots

HighRes-Fix (2nd Pass)

Upscaling

Image Comparer

CivitAI metadata output

Nodes

Description

Cleaned up the workflow and fixed some details, such as the metadata

FAQ

Comments (21)

Do you have any idea how to add OpenPose?

You should be able to use it via ControlNet. With the right model, the preprocessor can extract an OpenPose skeleton from the input image. If you want to use an OpenPose image directly, you can bypass the preprocessor.

Excellent workflow, it has all the important parts to get started generating in ComfyUI. Very clean and easy to adapt, with a guide on how to use it. Also, Taches is a very nice and helpful person.

Thanks Svarttjern! :-)

Hi, the flow won't run because the HighRes-Fix script isn't working :(

Hello! Try right click > Fix the node, then put a positive value in the seed field.

@taches @Kerisable I ran into the same issue. You just have to enable the "controlnet" option in the Hi Res Panel and change the ControlNet model to any one you have installed so it updates the file path. I guess Comfy thinks it's enabled. Maybe setting that option to None will fix it in future releases.

I have this error:

HighRes-Fix Script 1432:

- Value not in list: pixel_upscaler: '4x_NMKD-Siax_200k.pth'

But I can't find any "pixel_upscaler".

Hello! Try right click > Fix the node, then put a positive value in the seed field.

I ran into the same issue. You just have to enable the "controlnet" option in the Hi Res Panel and change the ControlNet model to any one you have installed so it updates the file path. I guess Comfy thinks it's enabled. The same goes for the upscaler on the far right. Just try to update every field you can find that takes a model.

I wonder if there's a way to add either asagi4's promptcomposer nodes or Shinsplat's nodes to support the BREAK syntax that is present in A1111 where it separates the tags before and after BREAK into different token chunks. If you have any ideas let me know, I'm really liking this workflow.

Never mind, I figured it out.

I didn't know there were nodes that include BREAK syntax. I'll look into it, thanks
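For readers unfamiliar with the BREAK syntax mentioned above, here is a minimal Python sketch of the splitting step only. It assumes BREAK is an uppercase, standalone keyword and simply cuts the prompt string at each occurrence. The function name `split_on_break` is hypothetical, and this is not the code used by A1111 or by either of the custom node packs named above; tokenizing and encoding each chunk separately is left out.

```python
import re


def split_on_break(prompt: str) -> list[str]:
    """Split a prompt into chunks at each standalone, uppercase BREAK keyword.

    Hypothetical helper for illustration only: it works on plain strings,
    not on tokens, so it shows where the chunks begin and end and nothing more.
    """
    # \b keeps words such as "BREAKFAST" from being treated as the keyword.
    parts = re.split(r"\s*\bBREAK\b\s*", prompt)
    # Trim commas and whitespace left at the chunk edges, then drop empty chunks.
    chunks = [part.strip(" ,\n\t") for part in parts]
    return [chunk for chunk in chunks if chunk]


if __name__ == "__main__":
    example = "1girl, red dress BREAK city street, night, neon lights"
    for index, chunk in enumerate(split_on_break(example)):
        print(index, chunk)
```

Each returned chunk would then be encoded on its own, which is what keeps the tags before and after BREAK from sharing a token chunk.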

Hi, I have issues with the HighRes-Fix node here (not the same as the seed one).

So I downloaded your workflow and set it up by downloading the missing nodes. I downloaded a checkpoint and an upscaler because I didn't have any.

I had the HighRes-Fix ControlNet/seed issue, which I think I managed to fix.

But I still have this:

"

Failed to validate prompt for output 1220:

* HighRes-Fix Script 1490:

- Value not in list: pixel_upscaler: 'None' not in ['RealESRGAN_x2.pth', 'RealESRGAN_x4.pth']

Output will be ignored

Failed to validate prompt for output 1434:

Output will be ignored

Failed to validate prompt for output 618:

Output will be ignored

"

From what I understand, it has to do with paths or something, but ComfyUI and Stable Diffusion in general are mostly wizardry to me, so I have no clue how to troubleshoot that.

I've used some other workflows in the past, but it wouldn't hurt to have a short tutorial on this page for setting everything up and understanding the specifics.

Thanks for your work!

Hi! I responded in another thread, but I'll post it again here. Try the following:

- Right click > Fix the node

- Disable "use_same_seed"

- Put a positive value in "seed"

- Load a ControlNet and upscaler model, even if you disable "use_controlnet"

I will put a little "disclaimer" directly in the workflow for the next update

@taches Thank you. As I told you, I've already tried this. Edit: Following the errors, I downloaded the exact models that were missing so I wouldn't have to look into the details.

@Pandebilus It seems to be a general issue, from what I saw in the node author's repo. I'll see what I can do on my side to avoid this setup step and add documentation.
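The "Value not in list" errors quoted in this thread all follow one pattern: the workflow file stores a model filename (or 'None'), and that value is not among the files installed locally. The Python sketch below reproduces that check under stated assumptions. It is not ComfyUI's actual validation code, the function name `find_missing_choices` is hypothetical, and the two dictionaries are filled with the values from the error messages above.

```python
def find_missing_choices(
    selected: dict[str, str],
    installed: dict[str, list[str]],
) -> list[str]:
    """Report every selected value that is not among the installed options.

    Hypothetical helper: `selected` maps a field name to the value saved in
    the workflow, `installed` maps the same field name to the files found locally.
    """
    errors = []
    for field, value in selected.items():
        options = installed.get(field, [])
        if value not in options:
            errors.append(
                f"Value not in list: {field}: {value!r} not in {options!r}"
            )
    return errors


if __name__ == "__main__":
    # Value saved in the shared workflow (taken from the error quoted above).
    selected = {"pixel_upscaler": "4x_NMKD-Siax_200k.pth"}
    # Files actually present on the user's machine (also from the thread).
    installed = {"pixel_upscaler": ["RealESRGAN_x2.pth", "RealESRGAN_x4.pth"]}
    for line in find_missing_choices(selected, installed):
        print(line)
```

This is why re-selecting a model you actually have in each field, or downloading the exact file named in the error, makes the message go away: the saved value then appears in the installed list.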

It works very slowly compared to the others. I turned off all the additional features, but it still takes 5 minutes to draw one picture. The output is very bad. There is clearly something wrong with the resolution, as if the upscaler is running anyway. It's also very bad that there is only one field for your LoRA.

I don't have any speed issues. 5 minutes for one picture is really long; I usually wait only a few seconds.

What are you using as hardware and checkpoint models? Speed and output quality can be directly linked to those. Keep in mind that the resolutions are the standard ones for SDXL models.

And I don't see how the LoRA Stacker is bad practice. Can you elaborate?

@taches Forget it, there may be a lot of nuances. If it works fine for most people, then the problem is on my side. I just changed the Workflow to another one. I am currently at the stage of searching for the perfect Workflow for me and going through different options))

@_Jarvis_ Sorry it didn't work out for you. Let me know if I can help or if you find a workaround, it would still be interesting to understand the source of the problem!