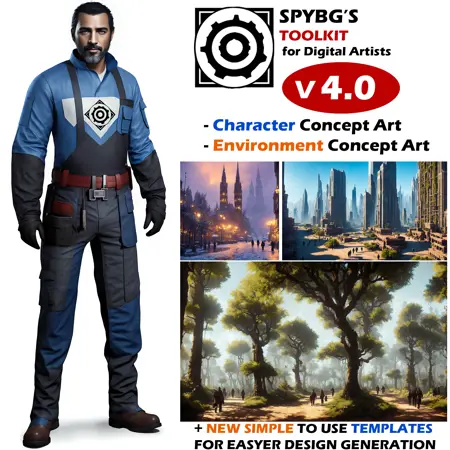

SPYBG's ToolKit for Digital Artists

Official YouTube Channel: [CLICK HERE]

Patreon: [CLICK HERE]


Hello everyone, my name is Valentin from the Bulgarian AI Art Community, but people know me as SPYBG. I'm a 3D Character Artist by profession and have been doing this for many years now. For everyone curious about what I do professionally, you can find my ArtStation here: https://www.artstation.com/spybg

I was experimenting with AI like many of you when it first came out, and I wanted to create something that would help my creativity on my personal projects. Eventually I saw the potential for artists to use the thing I was making in a professional environment, so for the last 2 months I've been creating custom datasets for characters. After a request from a close friend of mine, who is a Technical Lead at a studio that makes environments, I decided to make an environment dataset for my custom model as well.

Since I know a lot of artists got upset about "people using their art", I went in a different direction. All of my datasets (the training images I created for this) are made by me, and it took a lot of time to make them. But I was smart and used AI tools to create what I needed, so all of my datasets (for characters and environments) are AI generated, and no artists' input was used in the making of this model except my own.

I trained my model on 100 steps, with 1,926 images; the model was trained for 194,000 steps in total. (Yeah, I know it's a lot, but the results speak for themselves.)

Character Dataset: 766 images, custom made by me.

Environment Dataset: 1,160 images, custom made by me.

Special thanks to Suspirior! He helped me with some tips, tricks, and ideas, and he was the first one to beta-test my model, so big thanks, buddy! I'll include some of his tests here as well.

Tips for using my model:

I would recommend using these settings; they provide the best results, at least for me. But feel free to experiment.

Sampler: DPM++ 2M Karras

Steps: 150 (lower step counts also work, but for this training data 150 works best based on my testing)

Recommended Resolution: 768x768. (The model I used as the base for training is a custom modified base of Protogen 3.4 merged with older versions of my toolkit (v2.0), and on top of that I trained my model with 768x768 datasets, so I recommend using 768x768, 768x1280, or higher resolutions.)

Note: For version 4.0 and above I used the basic 1-5-pruned model and finetuned it properly.

CFG Scale: 5 ~ 7 works best

Trigger words: tk-char (for characters), tk-env (for environments). Why tk? It stands for Toolkit.
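For anyone scripting generations instead of clicking through the UI, the settings above could be gathered in one place, roughly like this. It is only a sketch: `build_txt2img_payload`, `RECOMMENDED`, and `TRIGGERS` are made-up names, the key names are an assumption modelled on the fields of the AUTOMATIC1111 txt2img API (check them against your own install), and the CFG value of 6 is simply the middle of the recommended 5 ~ 7 range.

```python
# Recommended settings from this page, gathered into one dictionary.
# Assumption: key names mirror the AUTOMATIC1111 txt2img API fields.
RECOMMENDED = {
    "sampler_name": "DPM++ 2M Karras",
    "steps": 150,
    "cfg_scale": 6,  # middle of the recommended 5 ~ 7 range
    "width": 768,
    "height": 768,
}

# Trigger words for the two trained datasets.
TRIGGERS = {"character": "tk-char", "environment": "tk-env"}


def build_txt2img_payload(prompt, kind, negative_prompt="", **overrides):
    """Return a settings dict and make sure the right trigger word is in the prompt."""
    trigger = TRIGGERS[kind]
    if trigger not in prompt:
        # Prepend the trigger word when the caller forgot it.
        prompt = f"{trigger}, {prompt}"
    payload = dict(RECOMMENDED, prompt=prompt, negative_prompt=negative_prompt)
    payload.update(overrides)  # e.g. steps=30 or height=1280
    return payload
```

Calling `build_txt2img_payload("ancient Persian city", "environment", height=1280)` would give a 768x1280 settings dict whose prompt starts with `tk-env`.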

IMPORTANT: For the best results when creating characters, use my model in img2img with the images I provided in the templates directory; this gives much cleaner and more professional-looking images. txt2img is great for environments, but for characters it is sometimes heavily unpredictable, and when making character concept art we want consistency. So I personally recommend using my template images or any of your own. That's why I've provided different character sheets made by me.

Example prompts:

CHARACTER examples:

"photograph of (((male))) tk-char warrior, highly detailed, award winning image, 16k"

or

"photograph of (((male))) tk-char style warrior, highly detailed, award winning image, 16k"

"photograph of (((female))) tk-char warrior, highly detailed, award winning image, 16k"

or

"photograph of (((female))) tk-char style warrior, highly detailed, award winning image, 16k"

While you can use tk-char by itself as a trigger, you can also use tk-char style. Try them both and see what results you get.

Note: Include (((male))) or (((female))) in front of tk-char to specify what character you want when creating the prompt. After that, use whatever you want to define the prompt better. Also keep your prompts short; while longer prompts can be cool, check out some of the templates from my images and you'll see how you can get decent results with very little.

Also, here is a link to some of my "demo" images. Use those as templates in img2img, or use any of your own images, but mine will give you good results if you're making character concept art. (There are two versions available: a basic full body with different proportions and silhouettes at a 1:1 aspect ratio, and a closeup with head variations at a 2:1 aspect ratio.)
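The prompt pattern in the examples above (emphasized gender, then the trigger word, then a short subject and a few quality tags) can be put together with a small helper. This is just an illustrative sketch; `character_prompt` and its parameters are hypothetical names, not part of the model or of any tool.

```python
def character_prompt(gender, subject, extras=(), use_style_suffix=False):
    """Build a short character prompt in the same shape as the examples above."""
    if gender not in ("male", "female"):
        raise ValueError("gender must be 'male' or 'female'")
    # Both trigger forms are worth trying: "tk-char" and "tk-char style".
    trigger = "tk-char style" if use_style_suffix else "tk-char"
    # The gender goes in front of the trigger word, wrapped in triple parentheses.
    parts = [f"photograph of ((({gender}))) {trigger} {subject}"]
    parts.extend(extras)  # keep this list short
    return ", ".join(parts)


tags = ["highly detailed", "award winning image", "16k"]
print(character_prompt("male", "warrior", tags))
print(character_prompt("female", "warrior", tags, use_style_suffix=True))
```

The two printed lines reproduce the first and last character example prompts listed above.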

Link to template images: [DOWNLOAD]

Environment examples:

"photograph of tk-env ancient environment style, Persian city, with people walking in it, in ancient Persia, with palm trees in the city, and flowers everywhere, award winning image, highly detailed"

Just include tk-env in your prompt to activate the trained data.

I recommend adding negative prompts for best results. Any will work, but here is the one I use.

NEGATIVE PROMPT: (((signature))), (((text))), (((watermarks))), deformed eyes, close up, ((disfigured)), ((bad art)), ((deformed)), ((extra limbs)), (((duplicate))), ((morbid)), ((mutilated)), out of frame, extra fingers, mutated hands, poorly drawn eyes, ((poorly drawn hands)), ((poorly drawn face)), (((mutation))), ((ugly)), blurry, ((bad anatomy)), (((bad proportions))), cloned face, body out of frame, out of frame, bad anatomy, gross proportions, (malformed limbs), ((missing arms)), ((missing legs)), (((extra arms))), (((extra legs))), (fused fingers), (too many fingers), (((long neck))), tiling, poorly drawn, mutated, cross-eye, canvas frame, frame, cartoon, 3d, weird colors, blurry

Note: With the latest release of my model (v4.5) you don't need to use any negative prompts (yes, you heard me correctly), but if you still want to use some, those are a good starting point.
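A long negative prompt like the one above tends to collect repeated terms over time (for example, "blurry" and "out of frame" each appear twice). If you keep your own list, a small cleanup helper along these lines may be handy. It is only a sketch: `dedupe_terms` is a hypothetical name, and treating `((term))` and `term` as the same entry is a design choice made here, not something the model requires.

```python
def dedupe_terms(prompt):
    """Drop repeated comma-separated terms, keeping the first occurrence."""
    seen = set()
    kept = []
    for term in prompt.split(","):
        term = term.strip()
        # Compare terms without their emphasis parentheses and ignoring case.
        key = term.strip("()").lower()
        if term and key not in seen:
            seen.add(key)
            kept.append(term)
    return ", ".join(kept)


sample = "(((text))), blurry, ((bad anatomy)), out of frame, bad anatomy, out of frame, blurry"
print(dedupe_terms(sample))
# prints: (((text))), blurry, ((bad anatomy)), out of frame
```

Because the first occurrence wins, a term keeps whatever emphasis it had the first time it was listed.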

____________________________________________________________________________

VAE: I recommend using the base SD 1.5 VAE from Stable Diffusion for better results.

____________________________________________________________________________

SD UPSCALE & Ultimate SD Upscale: If you want to upscale a generated image, I recommend using the automatic1111 SD Upscale with a value of 0.35 (noise strength) and a scale of 2, upscaled with R-ESRGAN General 4xV3. For me this gives the best results.
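To see what these upscale settings produce at the recommended resolutions, here is a tiny sketch. The names `SD_UPSCALE` and `upscaled_size` are hypothetical, and the dictionary keys are just labels for the values quoted above (the "noise strength" is assumed to correspond to the denoising strength slider in the web UI).

```python
# Upscale settings quoted above, collected under illustrative key names.
SD_UPSCALE = {
    "denoising_strength": 0.35,  # the "noise strength" value
    "scale": 2,
    "upscaler": "R-ESRGAN General 4xV3",
}


def upscaled_size(width, height, scale=SD_UPSCALE["scale"]):
    """Return the output resolution after upscaling by the given factor."""
    return width * scale, height * scale


print(upscaled_size(768, 768))   # prints: (1536, 1536)
print(upscaled_size(768, 1280))  # prints: (1536, 2560)
```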

____________________________________________________________________________

Since my model is based on 1.5, all embeddings made with the 1.5 model will work fine with my custom model. I'll include some of the great ones with links below and update the list as I go.

EMBEDDINGS:

[SPYBGTK-C-Enh] - My own tool, designed to improve your character creations even more when used in combination with my model.

Note: Lower the strength of the LORA embedding so that it fixes some things on your models without taking over the design you're going after.

[CharTurner] - Great for generating character concepts with front, side, and back views. (Use it in combination with the Front_Side_Back templates from my template images for even better results!)

Note: My model now supports multiple views of the same character when creating an image in txt2img, but still check out this add-on, it's great!

___________________________________________________________________________

Feel free to use/merge and experiment with my model for anything you want.

If you want to credit me for using it, feel free, but it's all right if you don't. All I want is for people and artists to have something they can use in a production pipeline, or just experiment with for fun.

This is the closest I got to making it a possibility.

And yes, you can train this model on your own images of yourself or anything you want, but I would recommend making TI embeddings of your own images for optimal results.

P.S. Share your results, I would love to see what you guys make!

Cheers!

Your friendly neighborhood 3D Character Artist

Valentin

Description

To show my gratitude to everybody who is using my model, this is for you: https://www.youtube.com/watch?v=HM5aWE-KuR8

I'll be making tutorials soon, discussing my workflows and sharing some tips and tricks from an artist's point of view. Hopefully those will help you with your creations.

Version 4.0 Patch notes:

changed trigger word from tk_char to tk-char

changed trigger word from tk_env to tk-env

improved overall image generation even without using trigger words (by finetuning the crap out of this model... but for best results, depending on whether you are making a character or an environment, I highly recommend using the proper tag).

Better character generation in the txt2img tab (I've finetuned the model from scratch to be able to produce more concept-art-like character poses and stuff, but for consistency I would still recommend using img2img with my templates). Speaking of...

New templates to use in img2img. Also updated the link in the Model information page.

Improved environment training dataset (I've created 200 additional highly detailed images for finetuning, which improved overall image quality).

Improved character training dataset (created 200 additional highly detailed images to teach the model to create front and back views of the same character more effectively, which also improved character creation in the txt2img tab).

Temporarily removed the .safetensors file. Reason: some users experienced difficulties while using it, so I've removed it until I find a solution to the problem.

Fixed an issue that caused embeddings not to work properly with the model. (I've shifted back some weights from the original 1-5-pruned.ckpt; you should now get proper results when using embeddings from Civitai or your own, if they were trained on the base 1-5-pruned model.)

Transitioned from the 1-5vae VAE file to vae-ft-mse-840000-ema-pruned. (It simply gives better results, so I highly recommend downloading that one; you can find it via Google.)

Created a YouTube channel. After many of your requests asking how I get so much detail in my images, I've decided to start making videos explaining my processes and showing you some tips and tricks while we're at it, so feel free to subscribe if you're interested in that kind of content.

Started plotting world domination for our A.I. overlords (just kidding).


Comments (27)

Someone's gotta explain to me why OP's suggestion says to generate using SDE and 150 steps.

Like, the SDE samplers are designed to work slowly, but work in a range of 5-20 steps, not 150.

Hi @kl001a :) I'll explain it in a video soon, actually. A lot of people have similar questions, so I'll try to explain everything on YouTube :) The reason I use 150 steps is to get a little bit more out of the 768x768 resolution, and it's not a problem since I run a 3090 at home. But yes, 5-20 steps work; use at least 30 steps if you want good results, though.

Hi, I've been getting black squares after seeing characters being generated at some point in the preview. I have added the yaml file in the same file as the model.

I've also added the argument --disable-nan-check when the console asked me to.

What VAE do you use, what setup do you have, and have you updated your a1111 recently? And are you using the ckpt version or the safetensors version of the file?

Sorry I reply so late, the Civitai website was down all day.

@R6SPY

@ursuperawesome008 yes?

Try these things: remove --disable-nan-check and add only --xformers to the arguments, then try again. If the issue persists, try adding --no-half; that may or may not help (I don't use it, and you shouldn't need it either). Also, in the settings, select the ft-mse-840000-ema-pruned VAE, which should work better. I'm not sure what the problem is, but those are the settings for my setup at home. Also make sure your a1111 is up to date. And finally, if you're having problems with the .ckpt file, download the .safetensors file from the download button. Let me know whether any of these things helped.

@R6SPY Hi, I've updated my auto1111 and have been using a safetensors file. I have been using the Anything v3 and SD 2.1. I've been under the impression that VAEs are model-specific. Are they not?

Thanks and looking forward to enjoying your great contributions!

@R6SPY Same here, added to command args but receive error msg. When added to line call webui.bat --xformers --medvram --no-half --disable-nan, the render results in a black image.

@R6SPY I removed --disable-nan-check and now the console is giving me this: modules.devices.NansException: A tensor with all NaNs was produced in Unet. Use --disable-nan-check commandline argument to disable this check.

@R6SPY same issue here. I added some more details for your inspection:

1) it always renders black output image for any txt2img and img2img when "--disable-nan-check" is used, otherwise, it failed to render and throw the exception of "modules.devices.NansException: A tensor with all NaNs was produced in Unet. ..."

2) this issue only happen on v4.0 safetensors version for me, v4.0 ckpt version works fine

3) tested with latest version of auto1111 (last commit: c81b52f), use vae-ft-mse-840000-ema-pruned.ckpt as vae, tested with --xformers only and with --no-half as well, same issue happened

Confirming that NAN error using .safetensors but using the .ckpt works.

@starcoder this works!

The problem comes from the safetensors file. I'll investigate tonight after work and get back to you guys once I have a solution to the "issue", but we'll fix it.

Good news everyone, I've found the problem and I'm working on resolving it (it's an issue with the .safetensors file). I'll re-upload the model once I have resolved it, and I'll leave a comment here again so you'll know it's been updated.

There is some general problem with the safetensors file, which even happens on my machine. For the time being I've deleted it so it won't cause confusion for people who want to use my model, and I'll upload a newer safetensors file as soon as it's working, but this may take a day or two. Meanwhile, try downloading the .ckpt version and use that one. It's not yet scanned by Civitai, but you have nothing to worry about; if you have any concerns, you can wait until they scan it. Apologies for the inconvenience.

Any chance to get the safer safetensors format and, if possible, the pruned version of this incredible model ?

I'll see what I can do tonight, my friend. I had some issues with the safetensors file; I'll try to figure it out.

Also, you can work with the pickletensor file for the moment (it was finally scanned by Civitai, so people can feel safe downloading the ckpt file :)

@R6SPY OK, thanks !

need safetensor no ckpt

I had issues with the safetensors file, which is why I removed it for the moment. You can still download the pickletensor; it's scanned and safe, and 4K+ people have already downloaded it, so you're safe. I'll figure out what the problem with the safetensors file is when I have the time, but since I work full time I'm really busy. I'll try to figure out why it gives errors when you generate stuff, and once I fix it, I'll re-upload it.

Hi, I can't seem to get this to produce a lot of anime characters even with hard prompting. Any tips?

The model is mostly oriented towards character concepts for production, with the quality and everything meant to look "usable" by artists. It's not specifically designed for anime, but let me check and I'll leave you a comment here on how to get some good results.

Try a prompt like this: a photo of (((anime))) male japanize warrior, tk-char, anime style (it's just a test, but you'll get the idea).

also you can try "a manga photo of (((anime))) male japanize warrior, tk-char, anime style, manga"

Details

Files

spybgsToolkitFor_v40YoutubeChannel.yaml
