StyleJourney with NSFW

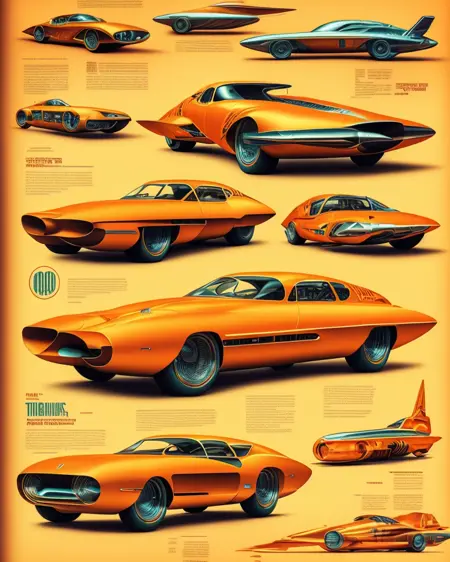

As someone who has gained tremendously from the Stable Diffusion (SD) community, I am thrilled to have the opportunity to give back by sharing my work. I've fine-tuned an SD model on over 15,000 Midjourney v4 and v5 images, resulting in a powerful tool that captures the unique essence of Midjourney and is also capable of generating NSFW content.

You can run this model on https://randomseed.co/model/43 with GPU acceleration.

By supporting me on Ko-fi (https://ko-fi.com/michelangelofussion), you're not only empowering me to maintain and improve the current model, but also enabling me to expand my research and train even more advanced models on 100k and 1M images. Your valuable contributions will help cover server costs and propel us further on this incredible journey.

My model, trained with offset noise, effectively grasps the Midjourney aesthetic, especially when it comes to generating art-like images. Prompting for photorealistic content is possible, but it takes more effort. The model can generate images in various sizes, but 512x512 has proven to produce the fewest artifacts.

This model is freely available for use under any circumstances, except when utilized as part of merges offered on paid platforms.


Comments (32)

Good and well trained model.

If possible, can you add the smaller 2 GB pruned fp16 version?

Sure, will do soon.

@krodek502 Thanks !

@ritcher1 The bad news is that the model is already in fp16. I trained it with kohya using experimental full fp16 training, and it seems to take up as much space as possible. Sorry for the inconvenience.

It can be pruned by anyone using this extension https://github.com/arenasys/stable-diffusion-webui-model-toolkit

It'll remove any of what it calls "junk data". Image quality should be the same.

@Freshl1te I already tried two tools, but I'll check this one too.

@krodek502 If this can be of any help (no pressure intended, of course):

- https://github.com/Akegarasu/sd-webui-model-converter

"convert to precisions: fp32, fp16, bf16 - pruning model: no-ema, ema-only - checkpoint ext convert: ckpt, safetensors"

- https://github.com/arenatemp/stable-diffusion-webui-model-toolkit

"Cleaning/pruning models - Converting to/from safetensors - Extracting/replacing model components"

And this is a suggestion from another user, posted in another model page:

If you're using automatic1111 webui, you can do the following:

Go into checkpoint merger

Input this model as Model A (nothing else)

Name it (Something like "ModelMerge_Pruned_fp16")

Select the "No interpolation" option

Select the "safetensors" checkbox

Tick the "save as float16" checkbox

Merge

What you'll get is a .safetensors file, which is more secure than .ckpt, is about 2 GB in size, and generates exactly the same images as the .ckpt file.
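As a rough sanity check on the 2 GB figure, file size is approximately parameter count times bytes per weight. The sketch below assumes an SD 1.x architecture (the page does not state this) and uses commonly cited approximate parameter counts; the numbers and the function name `estimate_size_gb` are assumptions for illustration, not measurements of this model.

```python
# Approximate parameter counts for a Stable Diffusion 1.x checkpoint.
# These are round, commonly cited figures and are assumptions here.
APPROX_PARAMS = {
    "unet": 860_000_000,
    "text_encoder": 123_000_000,
    "vae": 84_000_000,
}

# Storage cost of one weight at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2}


def estimate_size_gb(precision: str, with_ema: bool = False) -> float:
    """Rough checkpoint size in GB (10**9 bytes) at the given precision."""
    params = sum(APPROX_PARAMS.values())
    if with_ema:
        # An EMA copy stores a second set of UNet weights.
        params += APPROX_PARAMS["unet"]
    return params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for precision in ("fp32", "fp16"):
        for with_ema in (False, True):
            label = "with EMA" if with_ema else "no EMA"
            size = estimate_size_gb(precision, with_ema)
            print(f"{precision} {label}: {size:.2f} GB")
```

Under these assumptions, fp16 without EMA lands a little above 2 GB and fp32 without EMA a little above 4 GB, so the estimate alone cannot say why a given file is larger than expected.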

@ritcher1 I already use the flow you describe with automatic1111. I still get a 4 GB file.

@krodek502 Ok, thank you for your efforts. Some (few) models are so rich in information that they are not compressible very much. Maybe this is one of them. Thanks anyway.
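One way to see what is actually taking up space, assuming the file is in .safetensors format, is to read its header and total the bytes per key prefix and dtype. This is a minimal sketch using only the standard library; the function name `bytes_by_group` is made up for illustration, and the prefixes it reports depend on how the checkpoint was saved.

```python
import json
import struct
from collections import Counter


def read_header(path):
    """Read the JSON header of a .safetensors file.

    The file starts with an 8-byte little-endian length, followed by
    that many bytes of JSON describing each tensor.
    """
    with open(path, "rb") as f:
        (header_len,) = struct.unpack("<Q", f.read(8))
        return json.loads(f.read(header_len))


def bytes_by_group(path):
    """Total tensor bytes keyed by (top-level key prefix, dtype)."""
    totals = Counter()
    for name, info in read_header(path).items():
        if name == "__metadata__":
            continue  # free-form metadata, not a tensor
        start, end = info["data_offsets"]
        prefix = name.split(".", 1)[0]
        totals[(prefix, info["dtype"])] += end - start
    return dict(totals)


if __name__ == "__main__":
    import sys

    # Usage: python inspect_header.py path/to/model.safetensors
    for (prefix, dtype), size in sorted(bytes_by_group(sys.argv[1]).items()):
        print(f"{prefix:24s} {dtype:5s} {size / 1e9:.3f} GB")
```

If most of the bytes show up as `F32`, or a second copy of the weights appears under a separate prefix, that would point to something other than the model's content as the reason for the size.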

No sales. Thanks for sharing, but I wish Civitai allowed us to remove no sales models from search.

It's the default setting. I didn't think much about the actual licensing.

I'm kinda fine with ppl making money with this model. I just don't like it when someone merges models and sells them as a new thing without doing any actual work.

who tf cares? this shit is delusional. the base stable diffusion model was trained on billions of stolen images

@freaky I would also like to put forth another argument. Artists at art school are trained on images they are shown by professors and by a lifetime's worth of experience that includes many unauthorized images. Imagine telling an art student (or anyone) that they are not allowed to get inspired by a piece of art they see in the world, or worse trying to make it illegal for them to even see it. I think a case could be made that it should be legal for AI to train on all images and the judgment about infringement should instead be made on how derivative the resulting work is, similar to how artists are actually judged in the world today. I have hope this will all get worked out as people start to understand this technology.

@krodek502 Thanks!

@ejfmhw568 I go to an art university for my bachelor's in Visual Effects, 3D modeling, and Animation. I also love to draw and paint. The way I see it, the world is changing, and the rest of the artists can either sit back and cry or figure out HOW they can use this as a tool in their workflow so that they still have a job or a decent living at the end of the day. To be honest, I would still take a beautiful hand-painted canvas to hang up on the wall over something that was just pumped out of the AI, but I guess the other artists don't see it that way and actually think that this is the end of art. I also think that AI presents a good chance to generate inspiration for those who need something new to go paint. The cat is already out of the bag, and I think that it can be a great tool once all of the legal stuff is out of the way.

@rtf85 he's not saying "don't use this to make money", he's saying "don't use this to host on your platform and charge people to generate images using my model, keep it free for everyone to use to create."

So you can still use it for whatever you want, prints, video production, etc. Unless you're starting a business hosting an AI platform using models you didn't build, you can put down the pitchfork lol.

I get the following error when trying to load this checkpoint: RuntimeError: Expected all tensors to be on the same device, but found at least two devices, cpu and cuda:0! (when checking argument for argument index in method wrapper__index_select). All other checkpoints load fine. Any idea why?

You don't have problems with any other models? It seems like a problem with your environment. What UI are you using?

@krodek502 just this one, latest version of automatic1111, model downloaded into the models folder so no idea

@krodek502 Now I feel stupid. I just deleted it and downloaded it directly into the folder without using the helper extension, and it worked first time. Thanks for taking the time to respond. I should have tried that initially, as the likelihood of it not working for only me is pretty low, and I should have suspected a corrupted download first.

@Hbait2 glad it worked!
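For anyone hitting something similar, a corrupted download can be ruled out by comparing the file's SHA-256 with a hash published alongside the download, if one is available. This is a minimal sketch; the function names are illustrative, and it assumes the published value is a SHA-256 hex string or a leading prefix of one.

```python
import hashlib


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Hash the file in chunks so multi-GB checkpoints fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path, published_hash):
    """True if the file's SHA-256 starts with the published hash.

    Prefix matching allows for sites that display a shortened hash.
    """
    expected = published_hash.strip().lower()
    if not expected:
        raise ValueError("published_hash must not be empty")
    return sha256_of_file(path).startswith(expected)
```

A mismatch means the local file differs from the one that was hashed, in which case deleting it and downloading again is the simplest fix.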

Can you make one that is compatible with InvokeAI?

Uploaded .ckpt version

@krodek502 I don't know why but the .ckpt one is just generating random images in Invoke AI 2.3.3

@diggydre same

@diggydre @dillfrescott It's working with Automatic 1111

Are you able to check it with A1111?

@krodek502 Yes, I'm sorry. I got it running in Automatic 1111 last night. For some things, I prefer InvokeAI because their inpainting and outpainting setup is just so simple. I found out that InvokeAI is moving over to diffusers. I didn't try to convert the model to the diffusers format because I didn't know if it would break anything with the offset noise.

someone please make a google colab webui of this

I have a Google Colab that works with all models (you just paste in the link for the model), but Google Colab has banned image generation models. It was still allowed maybe 2 days ago.

you can run them on randomseed DOT co for free

Please make it available on HuggingFace as well. If it's not there of course.

It is super soapy, blurry, and fuzzy: typical Midjourney-like images. Even with a "sharp focus" prompt, it produces insane bokeh.

If a person's head is slightly rotated, one eye is in focus and the other is blurred.
