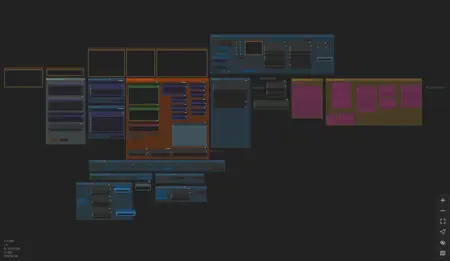

I started writing an article about how best to use older Wan 2.1 LoRAs in the new Wan 2.2 workflows, but every time I wrote anything, it got so complicated that the article was nearly unreadable. In the end, I decided it was much simpler to just SHOW rather than EXPLAIN, so I made this workflow. The rough draft of this workflow has been my daily driver for Wan creation for a little while now, so it's been proven to work.

1.2 UPDATE: Version 1.2 is released. It's a very minor bugfix version that replaces a LogicUtils node from the old workflow. A few users have mentioned that they are not able to install the LogicUtils custom nodes package for some reason, so I swapped out that node for a similar one. This update should solve that installation problem.

UPDATE: I've published version 1.1 of this workflow. It's essentially the same but adds some extra guidance in notes for Wan 2.2 video creation and one new optional feature for long video generation. See the version notes on the right side of this page for more details.

This workflow is the result of a great deal of experimentation with running Wan 2.1 LoRAs inside a Wan 2.2 workflow for maximum effect and accuracy. Older LoRAs work best in the Low Noise model of Wan 2.2, which is the closest to the older Wan 2.1 model. However, the High Noise model is required to give Wan 2.2 videos the enhanced motions, camera control, and prompt adherence that are so much improved over Wan 2.1. This workflow is a compromise between those two competing interests and combines the best of both worlds. It provides all those Wan 2.2 advantages but also preserves the look and feel of Wan 2.1 LoRAs, particularly character and clothing models, with high accuracy.

This workflow is customized to work very well with the Wan 2.1 models created by darkroast175696 on Civitai but should also work for any other Wan 2.1 models.

If your video doesn't use any LoRAs at all or is only using Wan 2.2 LoRAs, I recommend a 3-stage workflow with a 2-step introduction that doesn't use any LoRAs or acceleration at all, followed by 6 to 10 steps of Lightning enhanced High and Low Noise stages (3 to 5 steps of each).
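To make that stage layout concrete, here is a minimal Python sketch that turns the step counts into consecutive step ranges. The function name `three_stage_plan` and the stage labels are made up for illustration, and it assumes the three stages simply share one running step counter; it does not read or write any workflow file.

```python
def three_stage_plan(intro_steps=2, high_steps=4, low_steps=4):
    """Return (stage, start_step, end_step) tuples for a 3-stage run.

    Hypothetical helper for illustration only; not part of any workflow
    or library. Assumes each stage continues where the previous one ended.
    """
    if min(intro_steps, high_steps, low_steps) < 0:
        raise ValueError("step counts must not be negative")

    stages = [
        ("intro (no LoRAs, no acceleration)", intro_steps),
        ("high noise + Lightning", high_steps),
        ("low noise + Lightning", low_steps),
    ]

    plan = []
    start = 0
    for name, count in stages:
        # Each stage covers the half-open range [start, start + count).
        plan.append((name, start, start + count))
        start += count
    return plan


if __name__ == "__main__":
    for name, start, end in three_stage_plan():
        print(f"{name}: steps {start} to {end}")
```

Changing `high_steps` and `low_steps` anywhere between 3 and 5 covers the 6 to 10 Lightning steps suggested above.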

The workflow includes recommended settings for all of the Wan models I've published. Those settings may not always be the best for whatever video you're making, but they will make a good starting point and you can adjust from there. Some other features that are supported and optional:

* smoothing/frame interpolation

* dynamic prompts for wildcards and random generation

* caption text overlay on final video output

* watermark image added to final video output

I hope you can make good use of this workflow and make tons of awesome videos to share with the rest of us.

Comments (5)

I haven't tried this yet. But I am curious what minimum system requirements are and if you set it up for running quants or other files. Also, making this comment so I can return and see if I have done anything with it - marking it like a damned dog!

The requirements are the same as for any standard wan 2.2 workflow, which means it depends on what high and low models you use. I have a 16gb video card, so I use q6 gguf models and I normally run around 14gb used by my card. I would recommend picking the biggest gguf model that your card can handle without using shared memory. I don't have any experience setting up workflows with block swapping, so I haven't set that up here.

If this workflow uses too much ram for you, then you can start with a workflow that runs on your system and make a couple of small changes:

* force the high noise ksampler to only use 2 or 3 steps, no matter what

* the high noise LoRA stack should NOT use any lightx2v or causvid or whatever, just plain wan

* the low noise uses lightx2v and only about 6 steps (between 5 and 7 is normally fine)

Aside from that, just use the strength recommendations from this workflow and you're all set. Most workflows automatically divide your chosen number of steps in half, splitting steps between high and low. The main unusual thing about this workflow is that the steps are uneven and most of the work (and most of the LoRAs) is done by the low noise stage.
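The uneven split described above can be sketched in a few lines of Python. This is only an illustration: `split_steps` is a made-up helper, and the `start_at_step` / `end_at_step` keys mirror the step-range inputs on ComfyUI's KSampler (Advanced) node on the assumption that your workflow chains two of those samplers.

```python
def split_steps(high_steps=2, low_steps=6):
    """Build step ranges for an uneven high/low noise split.

    Hypothetical helper for illustration. The defaults follow the
    2 steps high / 6 steps low setup mentioned in this thread.
    """
    if high_steps < 1 or low_steps < 1:
        raise ValueError("each stage needs at least one step")

    total = high_steps + low_steps
    return {
        "total_steps": total,
        # High noise stage: plain Wan, no acceleration LoRA.
        "high": {"start_at_step": 0, "end_at_step": high_steps},
        # Low noise stage: picks up where the high noise stage stopped.
        "low": {"start_at_step": high_steps, "end_at_step": total},
    }


if __name__ == "__main__":
    plan = split_steps()
    even = plan["total_steps"] // 2
    print(f"typical even split: {even} high / {plan['total_steps'] - even} low")
    print(f"this split: high {plan['high']}, low {plan['low']}")
```

With the defaults, the low noise stage handles 6 of the 8 steps, which is what "most of the work is done by the low noise stage" means in practice.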

@darkroast175696 Thanks for that info. I am running another workflow with Q8 ggufs. Without block swapping I would have to run the q6. We aren't much different in rigs - 16 GB gpu and 64 GB onboard ram. I might be giving this a shot - always looking to improve. I appreciate you responding!

@hdean Those are pretty much my specs as well. I am using this workflow with a Q6 gguf, with sageattention enabled in the comfyui startup, and 2 steps / 6 steps for high/low. For a 640x1136 video with 3x frame interpolation I can make a video in 440 seconds.

@darkroast175696 Very nice. I can't get sage to work, though I haven't tried too hard. When I get the time, I am gonna check your WF. Thanks again for being responsive.