Goodies taem v3!

One more set of ChatGPT's goodies!

This time it's a "latent" image sort script!

It's a Python script that sorts the images in a folder by content!

It's not particularly smart, as you can imagine, it's more about values and colors. Still, it's a massive help for assorted datasets!

Warning!

Use this one at your own risk!

The sorting is accomplished by adding a numeric prefix followed by a dash '-' to each filename. Associated .txt files are renamed as well, in pairs with their images.

DO make a backup of your dataset before trying.

(you can also show the script to ChatGPT and ask it to clarify)
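For a rough idea of what the renaming amounts to, here is a minimal sketch. It is not the script from the zip: the function names, the 5-digit prefix width and the way caption files are matched are all my assumptions. It only builds a rename plan, it doesn't touch any files.

```python
import re
from pathlib import Path

# Assumed prefix shape: digits followed by a single dash, e.g. "00012-".
PREFIX_RE = re.compile(r"^\d+-")


def add_prefixes(ordered_images, folder_files, width=5):
    """Return {old_name: new_name} for images (already in sorted order)
    and for any same-named .txt caption that exists in the folder."""
    existing = set(folder_files)
    plan = {}
    for index, name in enumerate(ordered_images):
        prefix = f"{index:0{width}d}-"
        plan[name] = prefix + name
        caption = Path(name).with_suffix(".txt").name
        if caption in existing:
            # Keep the image/caption pair together under the same prefix.
            plan[caption] = prefix + caption
    return plan


def strip_prefix(name):
    """Undo the prefix; names without one are returned unchanged."""
    return PREFIX_RE.sub("", name, count=1)


plan = add_prefixes(["b.png", "a.jpg"], ["a.jpg", "a.txt", "b.png"])
```

Note that a regex like this would also strip digits from a file that was originally named something like '2023-photo.png', which is one more reason to keep that backup.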

***

As before, I'm adding it to the same .zip file posing as training data.

There're 3 scripts total.

'imagesort' - sorts images based on content, but does so by looking for the "next closest match". This creates chains of similar images. BUT depending on the dataset, it can make clusters of smaller gradient chains (from light to dark, and then to light again and such), which is not always preferable.

'imagesort-gamma' - sorting algorithm based on above but with an adjustable 'gamma' value. This will not only sort images based on similarity chains, but will also sort entire dataset based on average value. These two methods are conflicting, so you kinda have to pick the best compromise 'gamma' value to your liking.

'prefix-remover' - a script that removes the sorting prefixes created by the two scripts above, if needed.

(open script files in text editor, and change target 'image_folder' and 'gamma' as needed)
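To illustrate the "next closest match" idea and the 'gamma' trade-off, here is a small sketch. It is my own guess at the general shape, not the code in the zip: I'm assuming each image is reduced to a mean (r, g, b) color, and that 'gamma' weights a penalty for skipping ahead of darker images. The actual scripts may use different features and a different formula.

```python
import math


def chain_order(features, gamma=0.0):
    """Greedy ordering of images by their (assumed) mean RGB features.

    gamma = 0 gives a pure "next closest match" chain.
    Larger gamma pulls the order towards a global dark-to-light sort.
    Returns a list of indices into `features`.
    """
    if not features:
        return []
    brightness = [sum(f) / len(f) for f in features]
    remaining = set(range(len(features)))
    # Start from the darkest image (ties broken by index).
    current = min(remaining, key=lambda i: (brightness[i], i))
    order = [current]
    remaining.remove(current)
    while remaining:
        floor = min(brightness[i] for i in remaining)

        def cost(i):
            similarity = math.dist(features[current], features[i])
            lag = brightness[i] - floor  # 0 for the darkest image left
            return (similarity + gamma * lag, i)

        current = min(remaining, key=cost)
        order.append(current)
        remaining.remove(current)
    return order


feats = [(0, 0, 0), (255, 0, 0), (0, 0, 100), (250, 10, 0)]
pure_chain = chain_order(feats, gamma=0.0)
value_sorted = chain_order(feats, gamma=1000.0)
```

With gamma at 0 the two reds stay chained by similarity; with a very large gamma the result is simply the dark-to-light order. Anything in between is the compromise you have to tune by eye.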

The promised day has finally come!

I did some extra work on Sigmatron-Base!

While generating previews, I really grew to like the model. It was quite challenging not to ruin it too much.

Originally, I intended to do "single tag finetune" to add more noise to every image and make the model more splashy and closer to Omnitron and Neotron-Unbound. But that did not quite work out. I won't go into details, let's just say, I made some dubious decisions assembling training data, and finetune ended up just converging even more into what Sigmatron-Base already is.

What did I do next? Well, I started a fire and went stirring the good old merging cauldron.

Omnitron already had what I needed, meaning I just had to find the right balance between Sigmatron-Base and Omnitron-Extra.

What I ended up with, is not just a straight cheesy merge between the two - it has a bit of Neotron, and a bit of that "extra converged" finetune. But in essence, it's Sigmatron-Base and Omnitron-Extra folded on top of each other several times, trying to find the balance.

We'll call it Sigmatron-Hyper!

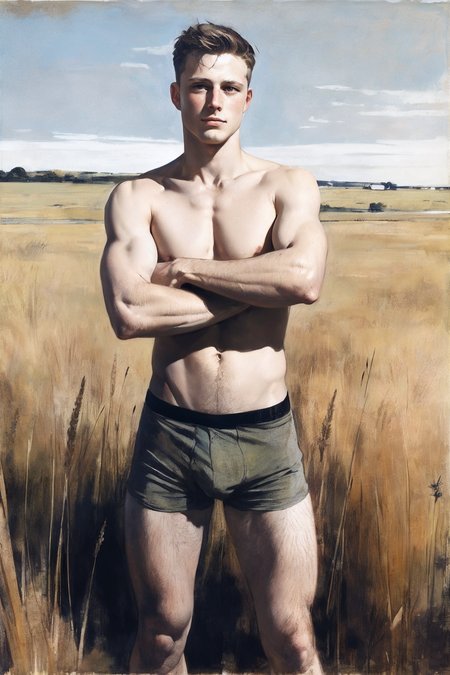

It's extremely similar-looking to Sigmatron-Base, but I wouldn't upload it if I didn't think it was interesting. So, what's different?

Pros

- Deeper colors (Base was a little muted).

- Splashier output. Cooler abstract word-salad prompts (that I like so much).

- 'body hair', 'chest hair' can be considered fixed.

- Unfortunate 'pov' experiment shows up a lot less, if at all.

- Leaning heavier towards 'chad' output. Accidental 17ish looking males don't show up as much anymore.

- Inherits some of Omnitron's deeper style diversity (retired tags like 'artificial art, niji art, himbo art' can be evoked again, but to a much lesser effect).

- A lot of output is still EXTREMELY similar to Sigmatron-Base, so much so, that it looks like a different seed of the same model.

- Upscales "look" sharper. I'm not 100% on that, but I'm leaning to believe this version upscales better.

Cons

- More averaged faces - unavoidable when merging. Which is why 'body hair' could be fixed. But 'guy's still work.

- Previews will be near identical to Sigmatron-Base. Both a good thing and a bad thing.

Notes

- 'dramatic lighting' is pretty volatile. It can work, but I basically used 'dramatic lighting' to tag the images whose lighting I hated.

If some other notes turn up - I'll update this. Until then...

Good luck and have fun!

PSA

If you've ever looked at my prompts, you probably noticed - I use one negative prompt a lot:

"low quality, worst quality, low quality, worst quality, AnimeSupport-neg, Anti3dReality, bad_prompt_version2-neg, By bad artist -neg, EasyNegativeV2, FastNegativeV2, NGH, NIV-neg, SimpleNegativeV3, verybadimagenegative_v1.3"

And if you tried it, it probably didn't work the same for you. The reason is that I don't actually have all the negative embeddings from that prompt xD From this list, the ones I have in my embeddings folder are marked '+' below ('-' means missing):

+ AnimeSupport-neg

- Anti3dReality

- bad_prompt_version2-neg (this one is actually called 'bad_prompt_version2.pt', I guess it doesn't load)

- By bad artist -neg

+ EasyNegativeV2

+ FastNegativeV2

+ NGH

- NIV-neg

- SimpleNegativeV3

+ verybadimagenegative_v1.3

Goodies taem v2!

Today I bring you Chat-GPT's "coloradjust" script!

You know how when you finetune, you end up with a yellow model? Well, I'm here to solve all of that!

Finetune color-lean accumulates based on average color of your dataset. If most of your dataset is warm tinted - you get a yellow model! If most of your dataset is monochrome - you get muted colors! And so on...

You can partially counteract that by hardcore tagging color schemes and tints - but that's strenuous hard work and it will still leak overall bias.

Instead, you can just adjust color of your entire dataset to make it lean towards a different average! When it comes to diffusion model training, individual images barely matter - average is what counts.

You can now find another python script posing as training data. This script will go through your selected folder and batch adjust RGB channels and brightness of every image in there!

It's straightforward and non-destructive: it creates a separate folder where it saves the adjusted files.

Save format is .png, so be ready for a file size spike.
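As a rough picture of what "adjusting RGB channels and brightness" does to the dataset average, here is a tiny sketch working on plain pixel tuples. The function names and the gain values are made up for illustration; the actual script works on image files and its parameters may be named and applied differently.

```python
def adjust_pixel(pixel, gains=(1.0, 1.0, 1.0), brightness=1.0):
    """Scale each RGB channel by its gain and by overall brightness,
    then clamp to the valid 0..255 range."""
    return tuple(
        max(0, min(255, round(channel * gain * brightness)))
        for channel, gain in zip(pixel, gains)
    )


def mean_color(pixels):
    """Average (r, g, b) over a list of pixels."""
    count = len(pixels)
    return tuple(sum(p[c] for p in pixels) / count for c in range(3))


# Two warm-tinted pixels standing in for a "yellow" dataset.
warm = [(200, 180, 120), (220, 200, 140)]
# Hypothetical gains: pull red down a little, push blue up.
cooled = [adjust_pixel(p, gains=(0.95, 1.0, 1.2)) for p in warm]
before, after = mean_color(warm), mean_color(cooled)
```

The point is the shift in the average, not any single image: here the blue mean goes up and the red mean goes down, which is the direction you want for a warm-leaning dataset.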

(I can't add duplicate "training data", so I packaged it into the same file as previous script)

Goodies taem!

Chat-GPT's "what's my tag?".py script!

In the extra files section you can now find a zip with a ChatGPT-made Python script. It lets you analyze any number of folders containing images and get the top X tags that best match the average content of the images in each folder!

Open the script in a text editor and adjust the 'CONFIG' settings as needed:

BASE_DIR = "." # Current folder containing subfolders of images

LABELS_FILE = "tags.txt" # Candidate labels (names, styles, etc.)

OUTPUT_FILE = "results.txt" # Where results will be saved

TOP_K = 3 # Number of top picks per folder

Few notes:

- You NEED to have a .txt file containing candidate "tags". Those can be anything you want, newline separated. They are what the image content gets compared to.

Example: You have a painterly style image folder, but you don't know what it will train better on 'art' or 'painting'. Make tags.txt containing those two tags and run this script on that image folder!

- This is a batch script, so it runs in the current folder and accesses every subfolder inside it.

- This script picks tags exclusively! If several folders have similar content, the picked tags will not repeat between them.
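To make the "exclusive" part more concrete, here is one way such picking could work. This is a sketch under my own assumptions, not the script's actual logic: I'm assuming every folder already has a similarity score per candidate tag, and that the best-scoring folder/tag pairs get first pick. The folder names and scores below are invented.

```python
def pick_exclusive(scores, top_k=3):
    """scores: {folder: {tag: score}}. Returns {folder: [tags]} where
    each tag is handed to at most one folder, best scores first."""
    pairs = sorted(
        (
            (score, folder, tag)
            for folder, tags in scores.items()
            for tag, score in tags.items()
        ),
        key=lambda item: (-item[0], item[1], item[2]),
    )
    picks = {folder: [] for folder in scores}
    used = set()
    for score, folder, tag in pairs:
        # Skip tags already taken and folders that are already full.
        if tag in used or len(picks[folder]) >= top_k:
            continue
        picks[folder].append(tag)
        used.add(tag)
    return picks


scores = {
    "oil": {"painting": 0.9, "art": 0.8, "sketch": 0.1},
    "ink": {"painting": 0.7, "art": 0.85, "sketch": 0.6},
}
result = pick_exclusive(scores, top_k=1)
```

Here both folders score well on 'painting' and 'art', but each tag goes to the folder that matches it best, so the picks don't repeat.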

"In loving memory of ChatGPT-4.

Good night sweet prince."

So, here it is! The model Omnitron and Neotron wanted to be!

I won't go into training detail, otherwise this would be a full article.

But I will give a few notes and pointers:

- It's a "base" model so it's a little stiff. I will make a single-tag finetune later, to add more noise and make it more creative.

- DO use negative prompts.

- This has animals and mythical creatures in it (to a lesser extent) in a non-sexual context. They can be cute and 'whimsical', but this is still a NSFW model.

- It's a single gender model, mainly because SD1.5 blends shared tags and I couldn't be bothered coming up with two sets of pose tags (or maybe because I don't have any female datasets). It can probably still generate women (from whatever is left from Ultron underneath), but they have to be explicitly prompted and don't expect female nudity.

- Went back to 'art' tags from Omnitron, aka 'traditional art', 'illustration art' and so on. Because they train better than 'style'.

- It's Text Encoder trained, so '1boy' might come out a bit literal, I suggest avoiding it. Also, I botched the 'body hair', 'chest hair' tags - they work, but are destructive on the face (I'll try to fix it in tag finetune). Instead, use the 'hairy' tag.

- Model was trained on my own tagging scheme. And even though I won't yet provide you with a full list of tags, there's really only one thing to know - it's a "dictionary word" tagging scheme minus 'a, an, the, own'. Word salad prompts do work. Tag ex: 'flexing biceps', 'one hand on hip', 'adult man', 'midair', 'on one leg', 'against wall', 'on floor' and so on. I will be using tags in my example prompts - take a peek, if you care enough.

- A few important tags:

'front view', 'left/right three quarter view', 'left/right side view', 'left/right back view' (left/right distinction was made, but I became convinced it doesn't matter in latent space, output ends up 50/50 random)

'eye-level shot', 'low-angle shot', 'high-angle shot', 'tilted left/right shot' ('worm's eye shot' and 'overhead shot' were included, output might not be as expected)

'close-up', 'medium close-up', 'medium shot', 'medium full shot', 'full shot' (these will depend on prompt focus and might be muted; 'long shot' and 'extreme close-up' were also in, but in few instances)

'young man', 'adult man', 'mature man', 'old man' (male subject tags - 'young' doesn't go below 18-20ish; as mentioned, 'boy' collaterally finetuned very young through Text Encoder alignment)

'skinny', 'athletic', 'muscular', 'hyper muscle', 'narrow waist', 'muscle chub', 'fat' (there was also 'average', but you might need negative prompt 'abs', 'muscle' to activate)

'clean shaved' -> 'unshaved face' -> 'stubble' -> 'facial hair' -> 'beard' -> 'long beard'

'laughing', 'smile', 'light smile', 'frown', 'furious' and many more (a bunch of facial expression training)

for hand/arm/leg poses single limb distinction was made as in 'one arm behind head'/'arms behind head', but multi-configuration is not guaranteed, as there just wasn't enough data to facilitate all variants.

fantasy concepts were in, but they should get stronger in single tag finetune. As of right now 'angel/demon/fairy/butterfly wings', 'ram/small/thick horns', 'elf/orc/goblin/dwarf/cyborg/robot/...' - most common stuff should work.

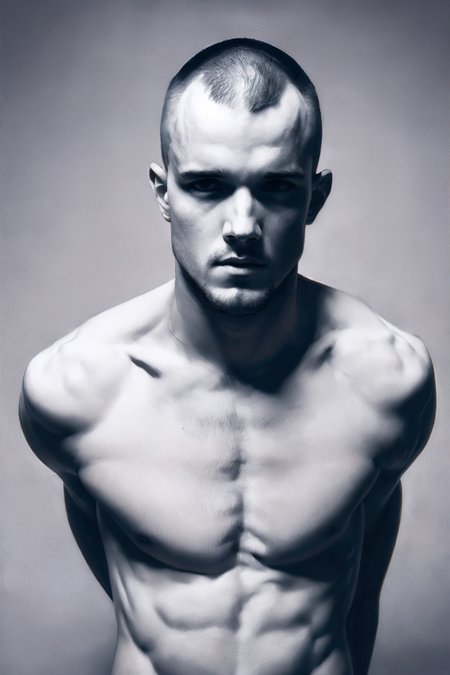

Honorary mention: 'short hair' - actual crew cut, which is usually hard to evoke in SD1.5 models.

Tags are too many to list. On top of that, large batches were subdivided into synonymous tags (something like 'adult man' was trained through ~10 various tags). But try to use tags expressed in normal dictionary words and you might hit what you're looking for. At some point, I might upload a full curated list of what got trained, but that's a lot of testing.

- Overall model came out a little too good, compared to what I normally come out of the woodworks (HA!) with. All thanks to ChatGPT-4, for spilling all the finetuning juice.

- Datasets were entirely erotic in nature, no actual sexual acts were included. Outside of Text Encoder randomly aligning onto some half-arsed imagery, I doubt any sexual prompts will work.

- Training commenced on 15k images at 640x640 resolution.

- As per usual, if you have someone who buys stuff from you that's available for free - have a go at it.

Description

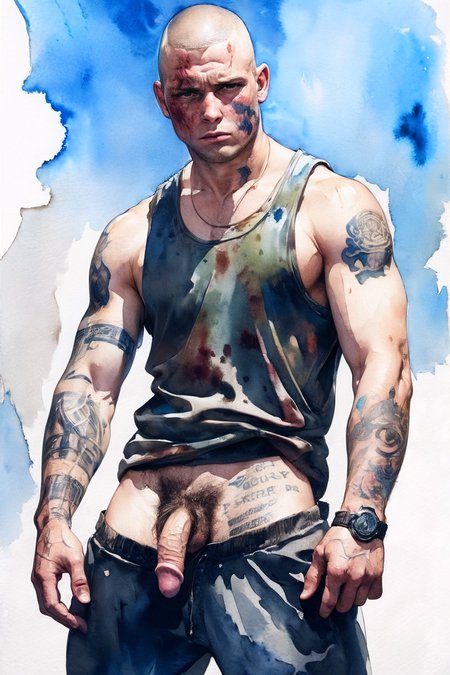

photography, render art, digital art, traditional art, illustration art, pencil art, classical art, chargen art, bara art, yaoi art, concept

flaccid penis, cut penis, soft penis, erect penis, manbutt

('flaccid' is a bit overwhelmed by other types, so do put 'penis' in negative prompt)