The compressed package contains two ComfyUI workflows:

1. Wan 2.1 T2V: wan2-t2v-upscale-v1.json

2. Wan 2.1 I2V: wan2-i2v-upscale-v1.json

Reference output:

On my RTX 4060 (8 GB VRAM) with 32 GB RAM, I2V: Prompt executed in 2807.42 seconds

On my RTX 5080 laptop (16 GB VRAM) with 32 GB RAM, I2V: Prompt executed in 1401.00 seconds
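To put the two v1 timings side by side, here is a small Python sketch that converts them to minutes and computes the ratio. The dictionary keys are just labels for the two machines, and `minutes` is a hypothetical helper, not part of the workflows.

```python
# Reference I2V timings from the v1 notes, in seconds ("Prompt executed in N seconds").
TIMINGS_S = {
    "RTX 4060 (8 GB VRAM)": 2807.42,
    "RTX 5080 laptop (16 GB VRAM)": 1401.00,
}


def minutes(seconds: float) -> float:
    """Convert a reported execution time from seconds to minutes."""
    return seconds / 60.0


# How many times faster the laptop 5080 finished the same I2V run.
speedup = TIMINGS_S["RTX 4060 (8 GB VRAM)"] / TIMINGS_S["RTX 5080 laptop (16 GB VRAM)"]

if __name__ == "__main__":
    for gpu, secs in TIMINGS_S.items():
        print(f"{gpu}: {minutes(secs):.1f} min")
    print(f"RTX 5080 laptop vs RTX 4060: {speedup:.2f}x faster")
```

On these numbers the run drops from roughly 47 minutes to roughly 23 minutes, about a 2x speedup.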

Requirements:

Models:

- wan2.1-t2v-14b-Q3_K_M.gguf (T2V) Put in: ComfyUI\models\unet

https://huggingface.co/city96/Wan2.1-T2V-14B-gguf/resolve/main/wan2.1-t2v-14b-Q3_K_M.gguf

- wan2.1-i2v-14b-480p-Q3_K_M.gguf (I2V) Put in: ComfyUI\models\unet

https://huggingface.co/city96/Wan2.1-I2V-14B-480P-gguf/resolve/main/wan2.1-i2v-14b-480p-Q3_K_M.gguf

- wan2.1_t2v_1.3B_fp16.safetensors (T2V model, used in the "v2v" workflow) Put in: ComfyUI\models\diffusion_models

- umt5-xxl-encoder-Q4_K_M.gguf (CLIP) Put in: ComfyUI\models\text_encoders

https://huggingface.co/city96/umt5-xxl-encoder-gguf/resolve/main/umt5-xxl-encoder-Q4_K_M.gguf

- umt5_xxl_fp8_e4m3fn_scaled.safetensors (CLIP; you can use the GGUF encoder above instead if you modify the "v2v" workflow) Put in: ComfyUI\models\text_encoders

- wan_2.1_vae.safetensors (VAE) Put in: ComfyUI\models\vae

- clip_vision_h.safetensors (CLIP Vision) Put in: ComfyUI\models\clip_vision

- RealESRGAN_x2plus.pth (Upscale Model) Put in: ComfyUI\models\upscale_models

https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.1/RealESRGAN_x2plus.pth
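Before loading the workflows, it can help to confirm that every file landed in the right folder. The following is a minimal Python sketch, assuming a standard ComfyUI layout with `models` directly under the ComfyUI root; `missing_models`, `V1_MODELS` and the script usage are hypothetical names for illustration, not part of the package. Trim the dictionary to the workflows you actually use.

```python
from pathlib import Path

# v1 model files and the ComfyUI subfolder each one belongs in (from the list above).
V1_MODELS = {
    "wan2.1-t2v-14b-Q3_K_M.gguf": "models/unet",
    "wan2.1-i2v-14b-480p-Q3_K_M.gguf": "models/unet",
    "wan2.1_t2v_1.3B_fp16.safetensors": "models/diffusion_models",
    "umt5-xxl-encoder-Q4_K_M.gguf": "models/text_encoders",
    "umt5_xxl_fp8_e4m3fn_scaled.safetensors": "models/text_encoders",
    "wan_2.1_vae.safetensors": "models/vae",
    "clip_vision_h.safetensors": "models/clip_vision",
    "RealESRGAN_x2plus.pth": "models/upscale_models",
}


def missing_models(comfy_root: str, required: dict[str, str]) -> list[str]:
    """Return 'folder/file' for every required model not found under comfy_root."""
    root = Path(comfy_root)
    return [
        f"{folder}/{name}"
        for name, folder in required.items()
        if not (root / folder / name).is_file()
    ]


if __name__ == "__main__":
    import sys

    # Hypothetical usage: python check_models.py C:\path\to\ComfyUI
    root = sys.argv[1] if len(sys.argv) > 1 else "ComfyUI"
    missing = missing_models(root, V1_MODELS)
    for item in missing:
        print("missing:", item)
    if not missing:
        print("all v1 models found")
```

`pathlib` accepts the forward-slash subfolder strings on Windows as well, so the same table works with the `ComfyUI\models\...` paths listed above.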

ComfyUI Nodes:

- rgthree-comfy

- ComfyUI-KJNodes

- ComfyUI-VideoHelperSuite

- ComfyUI-Frame-Interpolation

- Comfyui-Memory_Cleanup (Not required if you modify the workflow)

If you have more capable hardware, you can choose higher-bit quantizations (for example Q5_K_M or Q8_0 instead of Q3_K_M).

Description

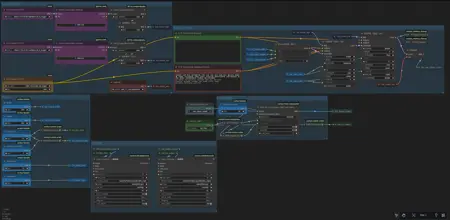

I completely refactored the workflow.

The compressed file contains three ComfyUI workflows:

1. wan2.2-i2v-gguf-v2.json

2. wan2.2-i2v-v2.json

3. wan2.2-t2v-gguf-v2.json

Reference output:

RTX 4060 (8 GB VRAM) + 32 GB RAM, I2V (GGUF Q5_K_M; 81 frames, 512×784): Prompt executed in 00:10:14

RTX 4070 (16 GB VRAM) + 32 GB RAM, I2V (Remix; 81 frames, 576×864): Prompt executed in 325.84 seconds

RTX 5080 laptop (16 GB VRAM) + 64 GB RAM, I2V (Remix; 81 frames, 576×960): Prompt executed in 377.07 seconds
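The v2 timings are reported in two formats (HH:MM:SS and plain seconds) and at different resolutions, so they are not directly comparable. A rough Python sketch that normalizes them to seconds per frame and megapixels per second; `to_seconds`, `summarize` and `RUNS` are hypothetical names. This ignores differences in model, quantization and RAM between the runs, so treat the result only as a ballpark.

```python
def to_seconds(value: str) -> float:
    """Parse a reported time that is either 'HH:MM:SS' or plain seconds."""
    if ":" in value:
        hours, mins, secs = (float(part) for part in value.split(":"))
        return hours * 3600 + mins * 60 + secs
    return float(value)


# (label, frames, dim_a, dim_b, reported time) from the v2 reference output.
RUNS = [
    ("RTX 4060 8 GB, GGUF Q5_K_M", 81, 512, 784, "00:10:14"),
    ("RTX 4070 16 GB, Remix", 81, 576, 864, "325.84"),
    ("RTX 5080 laptop 16 GB, Remix", 81, 576, 960, "377.07"),
]


def summarize(runs):
    """Yield (label, total seconds, seconds per frame, megapixels per second)."""
    for label, frames, dim_a, dim_b, reported in runs:
        total = to_seconds(reported)
        # Width/height order does not matter for the pixel count.
        megapixels = frames * dim_a * dim_b / 1e6
        yield label, total, total / frames, megapixels / total


if __name__ == "__main__":
    for label, total, per_frame, mp_per_s in summarize(RUNS):
        print(f"{label}: {total:.0f} s, {per_frame:.2f} s/frame, {mp_per_s:.3f} MP/s")
```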

Requirements:

Models:

Main Process:

- Wan 2.2 (GGUF) Put in: ComfyUI\models\unet. With 16 GB VRAM you can use Q8_0; with 8 GB VRAM, Q5_K_M.

- Wan2.2-T2V.

- Wan2.2-I2V.

- Wan 2.2 (safetensors) Put in: ComfyUI\models\diffusion_models

- Remix 2.0 NSFW Version, I2V.

- umt5-xxl-encoder-Q8_0.gguf (CLIP) Put in: ComfyUI\models\text_encoders

https://huggingface.co/city96/umt5-xxl-encoder-gguf/resolve/main/umt5-xxl-encoder-Q8_0.gguf

Alternative text encoder: NSFW-API/NSFW-Wan-UMT5-XXL

- wan_2.1_vae.safetensors (VAE) Put in: ComfyUI\models\vae

- Optional speed-up LoRAs (LightX2V) Put in: ComfyUI\models\loras\LightX2V

Page link: https://huggingface.co/lightx2v/Wan2.2-Distill-Loras/tree/main
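The VRAM guidance for the Wan 2.2 GGUF models can be written as a tiny lookup. A Python sketch only: the 16 GB → Q8_0 and 8 GB → Q5_K_M pairs come from the list above, while treating them as lower bounds for in-between cards (e.g. 12 GB) is an assumption, and `suggest_wan22_gguf_quant` is a hypothetical name.

```python
def suggest_wan22_gguf_quant(vram_gb: float) -> str:
    """Suggest a Wan 2.2 GGUF quant for a given amount of VRAM.

    16 GB -> Q8_0 and 8 GB -> Q5_K_M follow the notes; using them as
    thresholds for values in between is an assumption.
    """
    if vram_gb >= 16:
        return "Q8_0"
    if vram_gb >= 8:
        return "Q5_K_M"
    # The notes give no recommendation below 8 GB VRAM.
    raise ValueError("no quant recommendation given for less than 8 GB VRAM")


if __name__ == "__main__":
    for vram in (8, 12, 16):
        print(f"{vram} GB VRAM -> {suggest_wan22_gguf_quant(vram)}")
```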

ComfyUI Nodes:

- rgthree-comfy

- ComfyUI-KJNodes

- ComfyUI-VideoHelperSuite

- ComfyUI-Frame-Interpolation

- Comfyui-Memory_Cleanup

- ComfyUI-GGUF

- comfyui-custom-scripts
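A quick way to spot a missing node pack before opening the v2 workflows is to look inside `custom_nodes`. A minimal Python sketch, assuming each pack was installed into a folder named after the pack; folder names can differ depending on how a pack was installed, so a "missing" result is a hint to check, not proof. The comparison is case-insensitive because the list mixes capitalizations. `missing_node_packs` and `V2_NODE_PACKS` are hypothetical names.

```python
from pathlib import Path

# Custom node packs listed for the v2 workflows.
V2_NODE_PACKS = [
    "rgthree-comfy",
    "ComfyUI-KJNodes",
    "ComfyUI-VideoHelperSuite",
    "ComfyUI-Frame-Interpolation",
    "Comfyui-Memory_Cleanup",
    "ComfyUI-GGUF",
    "comfyui-custom-scripts",
]


def missing_node_packs(comfy_root: str, packs: list[str]) -> list[str]:
    """Return the packs with no matching folder in custom_nodes (case-insensitive)."""
    custom_nodes = Path(comfy_root) / "custom_nodes"
    installed: set[str] = set()
    if custom_nodes.is_dir():
        installed = {p.name.lower() for p in custom_nodes.iterdir() if p.is_dir()}
    return [pack for pack in packs if pack.lower() not in installed]


if __name__ == "__main__":
    import sys

    root = sys.argv[1] if len(sys.argv) > 1 else "ComfyUI"
    for pack in missing_node_packs(root, V2_NODE_PACKS):
        print("not found in custom_nodes:", pack)
```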

If you have more capable hardware, you can choose higher-bit quantizations (for example Q8_0 instead of Q5_K_M).

FAQ


Q: Hi, I have a question. I'm using the I2V workflow. After opening and configuring it, I found that the CLIP input of the LoRA loader wasn't connected, so I connected the CLIP output of the CLIP loader to it. The workflow then ran normally, but the output video was clear for the first second and became progressively blurrier; by the second or third second it was completely unrecognizable.

A: The LoRA's CLIP input doesn't need to be connected; the LoRA still takes effect during sampling.

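The blurriness is caused by too few sampling steps. The 6, 2 steps in the workflow are meant for either the official base model / GGUF version paired with the lightx2v speed-up LoRA, or a model with LightX2V acceleration built in (such as Remix). The recommended steps for accelerated sampling are 8, 4 (CFG: 1.0, 1.0); in my tests 6, 2 (CFG: 2.0, 1.0) also gave good results, so that is what the workflow uses.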

If you are not using an accelerated model, you need at least 10, 4 (CFG: 3.0, 2.5), as in the T2V workflow, and then adjust the steps and CFG based on the results.
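The step and CFG guidance in this answer can be kept as a small checklist. This Python sketch only records the values given above and compares the two step values element by element, in the order the answer lists them; it does not interpret which sampler stage each number controls. `SamplingPreset`, `check_steps` and the preset names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SamplingPreset:
    steps: tuple[int, int]    # the two step values, in the order the answer gives them
    cfg: tuple[float, float]  # the matching two CFG values


# Values quoted in the answer.
ACCELERATED_RECOMMENDED = SamplingPreset((8, 4), (1.0, 1.0))
ACCELERATED_WORKFLOW_DEFAULT = SamplingPreset((6, 2), (2.0, 1.0))
NON_ACCELERATED_MINIMUM = SamplingPreset((10, 4), (3.0, 2.5))


def check_steps(preset: SamplingPreset, accelerated: bool) -> list[str]:
    """Warn when steps fall below the suggested minimum for a non-accelerated setup."""
    warnings = []
    if not accelerated:
        low = NON_ACCELERATED_MINIMUM.steps
        if preset.steps[0] < low[0] or preset.steps[1] < low[1]:
            warnings.append(
                f"steps {preset.steps} are below {low} without a speed-up "
                "LoRA/model; expect blurry output"
            )
    return warnings


if __name__ == "__main__":
    # The situation in the question: workflow default steps, no speed-up LoRA/model.
    print(check_steps(ACCELERATED_WORKFLOW_DEFAULT, accelerated=False))
```

Run against the workflow defaults without a speed-up LoRA or model, the check flags the step count, which matches the blurriness described in the question.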

In my tests so far the Remix model gives good results; you can download it, or the Wan2.2-Lightx2v LoRA, from Hugging Face.