Latent Chimera is an Illustrious-based model with an emphasis on semi-realism.

Switching to Forge improved memory management significantly, making SDXL models more practical. After exploring various models, I decided to merge my favorites to experiment with new possibilities. Latent Chimera has non-Illustrious SDXL models mixed back in to boost creativity and reduce overfitting.

This model combines multiple sources using the SuperMerger extension for A1111. Using methods such as MBW (merge block weighted), cosine interpolation, and static weight blending, I aimed to produce a composition distinct enough to warrant sharing. It is the product of ongoing experimentation with merge strategies.
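As a rough illustration of two of the methods named above, here is a minimal sketch of static weight blending and a block-weighted variant. It is not SuperMerger's code: real checkpoints hold tensors rather than lists of floats, the key prefixes and function names are hypothetical, and cosine interpolation is not shown.

```python
def weighted_sum(a, b, alpha):
    """Static blend: (1 - alpha) * a + alpha * b for every key."""
    return {
        key: [(1 - alpha) * x + alpha * y for x, y in zip(a[key], b[key])]
        for key in a
    }


def block_weighted_sum(a, b, block_alphas, default_alpha):
    """Block-weighted blend: alpha is chosen per key by matching a prefix.

    block_alphas maps a (hypothetical) key prefix to the alpha used for
    every key starting with that prefix; other keys use default_alpha.
    """
    merged = {}
    for key in a:
        alpha = next(
            (w for prefix, w in block_alphas.items() if key.startswith(prefix)),
            default_alpha,
        )
        merged[key] = [(1 - alpha) * x + alpha * y for x, y in zip(a[key], b[key])]
    return merged


# Toy "checkpoints" with made-up keys and values.
model_a = {"input_blocks.0.weight": [1.0, 2.0], "output_blocks.0.weight": [0.0, 4.0]}
model_b = {"input_blocks.0.weight": [3.0, 4.0], "output_blocks.0.weight": [2.0, 0.0]}

even_mix = weighted_sum(model_a, model_b, 0.5)
per_block = block_weighted_sum(
    model_a, model_b, {"input_blocks.": 0.0, "output_blocks.": 1.0}, 0.5
)
```

In this toy case `per_block` keeps model A's input block and takes model B's output block, which is the basic idea behind giving each block its own weight.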

All source model licenses were reviewed prior to merging, and the most permissive terms available under the inherited usage conditions were applied. Merge recipes are included in metadata. VAE has been baked in.

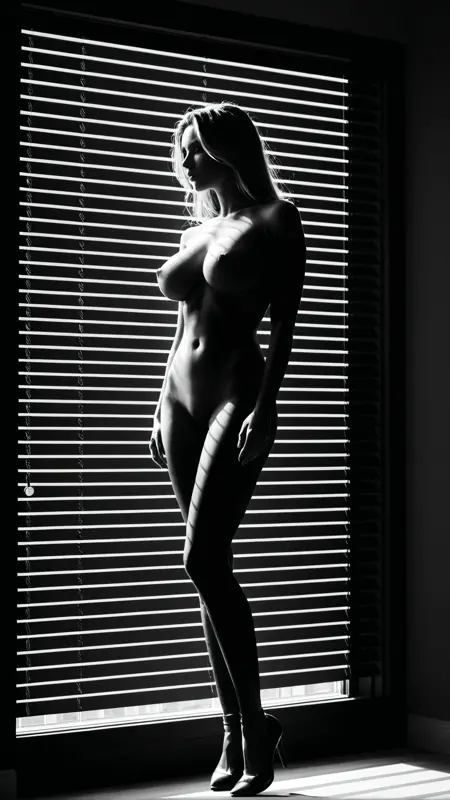

Reality Check

This isn’t intended as a flagship model. My aim here isn’t to drop a “top-tier” release, but to explore merge behavior, stylistic bias, and scene composition in a controlled way. I avoid rushing merges right after donor model releases, both out of respect for the creators and to keep the focus on understanding mechanics—not chasing hype. Most of my excessively long prompts are reused across tests so I can compare bias and behavior between models; while I still make occasional adjustments, I generally post results as they come—flaws, quirks, and all.

Description

MBW with Latent Chimera v1.3, cyberillustrious_v50, and beautifulPeople_v10

Comments (12)

since you mentioned supermerger, i have a couple of questions because I have searched far and wide for answers and I just cant find any.

preface: i have a 16gb 4060 ti oc. i also have a 12gb 3060. both rigs have the same cpu and same ram.

on both, with all the latest versions of the main branch of supermerger, loading even 2 models, doing something as simple as a 3x3 alpha beta 0.25-0.75 grid takes over an hour.

im forced to use comfyui and i cant do alpha beta grids as well on it, comfyui sucks

have you encountered this?

nb, gonna try your model, your samples have sold it!

if you’re using the gpu for merging (the "use cuda" box), it can actually slow things down if you're running out of vram. once you run out of vram it starts swapping over pci to system RAM, and that kills the speed. i only use cpu for merges since my 64gb of system ram can cache multiple models at once for three model merging, and the merge is usually done in a few minutes. add on some more time for the image gen and then move on to the next recipe in the grid.

if you want to check, run nvidia-smi -l 1 while merging. if the gpu memory maxes out and your gpu isn’t working hard, you’re probably hitting that bottleneck. try turning off "use cuda" in supermerger and do the merge on cpu instead, then just use your gpu for image gen. also, only save the model when you actually want to keep it and you can save the merge recipe to generated images.

i think GPU-Z also can show what is throttling your GPU with a bit more detail but usually i just run nvidia-smi if its starting to get wonky.

good luck with your testing, im mostly just here to tinker and share the results.
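The nvidia-smi check described in the reply above can be scripted. The sketch below takes one sample through nvidia-smi's CSV query output instead of the looping `-l 1` view, and flags the "memory maxed out, GPU not working hard" pattern. The 95% memory and 30% utilization thresholds, and the function names, are arbitrary assumptions for illustration.

```python
import subprocess

# Fields requested from nvidia-smi; memory values come back in MiB.
QUERY = "memory.used,memory.total,utilization.gpu"


def parse_gpu_line(line):
    """Parse one CSV line such as '16200, 16380, 12' into three floats."""
    used, total, util = (float(part.strip()) for part in line.split(","))
    return used, total, util


def looks_vram_bound(used_mib, total_mib, util_pct,
                     mem_threshold=0.95, util_threshold=30.0):
    """True when memory is nearly full while the GPU is mostly idle.

    Both thresholds are guesses, not values from any tool's documentation.
    """
    return used_mib / total_mib >= mem_threshold and util_pct < util_threshold


def sample_gpus():
    """Run nvidia-smi once and return one (used, total, util) tuple per GPU."""
    out = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [parse_gpu_line(line) for line in out.strip().splitlines()]
```

Calling `sample_gpus()` in a loop with a one-second sleep while a merge runs, and passing each tuple to `looks_vram_bound`, would approximate watching `nvidia-smi -l 1` by eye.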

lotophage01134 thats the thing, i do use the "use cuda" checkbox. i can also merge 3 checkpoints easily with supermerger if I do a one-off run. it only starts lagging when I do the xyz, especially since I like to compare alpha beta. for what its worth, I can also use the standard merge checkpoints tab, 2 and 3 checkpoints is no problem. but that means I have to merge 9 checkpoints (if I do 1x9), and then do an xyz grid in txt2img. 9x 7gb is ~63gb

this nvidia-smi -l 1 thing is something I never tried. I will monitor this

also, thank you for your in-depth answer. this is incredibly appreciated, time is precious, and you have given me a small bit of it. thank you.
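To make the numbers in this exchange concrete, here is a small sketch that enumerates a 3x3 alpha/beta grid over 0.25-0.75 and estimates the disk space needed if every cell were saved as its own checkpoint. The 7 GB per-checkpoint size is the figure quoted in the comment; the helper name is hypothetical.

```python
from itertools import product


def grid_values(start, stop, steps):
    """Evenly spaced values from start to stop, both ends included."""
    if steps == 1:
        return [start]
    step = (stop - start) / (steps - 1)
    return [round(start + i * step, 4) for i in range(steps)]


alphas = grid_values(0.25, 0.75, 3)
betas = grid_values(0.25, 0.75, 3)

# Every (alpha, beta) pair is one merge recipe, i.e. one cell of the grid.
cells = list(product(alphas, betas))

CHECKPOINT_GB = 7  # per-checkpoint size quoted in the comment
disk_gb = len(cells) * CHECKPOINT_GB

print(len(cells), "merges,", disk_gb, "GB if every result is saved")
```

Merging in memory and keeping only the recipe, as suggested earlier in the thread, avoids that storage cost.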

A_rdatyaksh_I "i do use the "use cuda" checkbox"

just to be clear, i recommend not using that unless you have more VRAM than system RAM. if you do want to use CUDA, you might benefit from using some of the memory optimizations that come with command line options like --medvram-sdxl which i believe attempts to manage memory usage better at the cost of swapping models (instead of layers etc like you might be doing currently).

lotophage01134 I'll try this as well. Its just a strange thing, I suspect maybe a cuda toolkit incompatibility since I reinstalled a newer version that was recommended for comfy and video generation. not the end of the world, just nice to know how other users experience the tools we have to utilize

@lotophage01134 tested the nvidia smi monitor.

A1111 checkpoint merger and comfyui - loading 3x models, doing a merge, generating: ~2-3 minutes

I never used more than 90% of my VRAM - fluctuates between 11 and 15gb out of 16, and between 7 and 11 of system ram (makes sense since im offloading the 3rd model), and thats with normal weighted, sum twice and 3x sum - when I just merge and gen. this is using 2 or 3 models. models load, models merge and generate example. when I dont "use cuda", the merging takes longer, not extremely longer, but longer. same as the other tools.

the problem is solely the XYZ merge within supermerger. 2 models, 3 models, makes no difference. it just takes forever, also freezing the system intermittently.

even did a 10 grid checkpoint comparison in txt2img XYZ, it goes faster to complete the entire grid than waiting for the first image of a 9x grid does in supermerger. guess im just stuck without having the ability to fine tune alphas and betas. which was great in the SD1.5 and pony days.

(fwiw im loading checkpoints from a nvme ssd).

@A_rdatyaksh_I "also freezing the system intermittently"

okay that part is definitely different from my experience since im usually watching youtube with zero issues. i have no issues with system freezing and im wondering if youre having any other system issues at the same time. but you mentioned this happened on two PCs so that seems unlikely. i do remember having some kind of issue with the CUDA toolkit and ended up getting rid of it when they added sysmem fallback policy to the nvidia driver around october 2023.

i used the same process for SD 1.5 merging, the only difference now is that after merging and settling on a recipe/final model, i use Forge for bulk image generation. my process is similar although i use second SSD and not my main system drive. im not sure if that could be related to your system freezing.

im not sure what other advice to give you other than try and see what is happening when those system freezes are going on with generic system diagnosis steps.

@lotophage01134 Its definitely a strange situation. oddly enough, when I was using my secondary ssd for pony and 1.5, on the 3060, even with 12gb, 3 7gb models merging was never a problem, once i even did a 4 into 2 merge on comfy. worked.

before I migrated to the nvme, I used the same ssd for illustrious and noob (eps, cos A1111 cant merge vpred as much as it cant gen) and it did the same stuttering. i thought migrating to the nvme would mitigate it, making a cleaner link between sys ram, vram and nvme through pci, but it never worked. thinking more vram would help, I tried with my 4060 machine. same problem. both have the same cuda and drivers - i dont update often. if I find something works, I keep it unless I really need to update.

so far, both have the same cpu and ram, but gpus and ssd's are different. so the only thing thats common is cuda and drivers.

you may have solved the problem. sysmem fallback is forcing a sort of oom which isnt throwing errors because there is enough ram and vram. so it just freezes thinking what to do until it finds a place to put the weights, which is usually vram.

@A_rdatyaksh_I use a disk monitor and see if your system is not caching the models in RAM before sending to VRAM. if its hitting your NVME for each load i can see why that would make it stutter a bit. still doesn't seem like normal behaviour but at least if you can get it to cache the 3 models in system RAM it might mitigate the issue. once i "archive" models im testing to a spinning disk RAID array, my load times go through the roof and takes 1+ min just to load a model. with 64GB of system RAM, even if i load all 3 models loading off the disk, i dont hit slowdowns once they are all cached in memory. double check that AV isn't doing anything first before A1111 gets access to the files.

@lotophage01134 its worth me digging into this further. im motivated to find out where the bottleneck is/was, since i have some free time now that work is done. possibly worth rolling back some versions of cuda just to test.

but i think your previous comment highlighted the problem. ✨sysmem fall back policy✨

I decided to go into nvidia and cuda settings using Orbmu2k's NVIDIA Profile Inspector. i did increase cache size from 8gb to 10gb, and forced sysmem fallback policy off. i also changed force p2 state to off (so cuda workloads arent dropped into the lower P2 performance state).

i managed to do a 3 model 3x1 xyz in around 230 seconds(loading+merging). which works out to the same speed I get out of comfyui + ~30-45 seconds for generating 960x1440 samples.

this is the speed i know my system can do.

@A_rdatyaksh_I eyyyyyyy thats good to hear! progress! might be worth posting about somewhere so others can learn. the sysmem fallback policy basically lets the driver spill into system RAM when VRAM fills up instead of throwing an out-of-memory error, which means your PCI bus gets hammered as data swaps between the two. the only follow-up i can think of is make sure youre using the full bus width for your GPU. for example, on my system i have a 3060 and a 1070. my 3060 gets full x16 bandwidth but my 1070 gets x8. this is something GPU-Z shows under the Bus Interface section (the part after the @).
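For anyone without GPU-Z, the same bus-width check can be sketched with nvidia-smi's query fields for the current and maximum PCIe link width. The helper names below are hypothetical. The reported maximum reflects what nvidia-smi sees for that GPU and system, so a card in an electrically x8 slot may not show up as "reduced" here.

```python
import subprocess

LINK_QUERY = "name,pcie.link.width.current,pcie.link.width.max"


def parse_link_line(line):
    """Parse a CSV line such as 'NVIDIA GeForce RTX 3060, 16, 16'."""
    # Split from the right so a comma in the GPU name would not break parsing.
    name, current, maximum = (part.strip() for part in line.rsplit(",", 2))
    return name, int(current), int(maximum)


def is_reduced_width(current, maximum):
    """True when the link is running on fewer lanes than the reported maximum."""
    return current < maximum


def read_links():
    """Run nvidia-smi once and return (name, current, max) for each GPU."""
    out = subprocess.run(
        ["nvidia-smi", f"--query-gpu={LINK_QUERY}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [parse_link_line(line) for line in out.strip().splitlines()]
```

A result like `("NVIDIA GeForce GTX 1070", 8, 16)` from `read_links()` would correspond to the x8 case described in the comment.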

@lotophage01134 yes thats a good idea. maybe reddit is a good place.

i just learnt something new AGAIN! thanks. i must check the lanes. i assumed x16 physical means it utilizes x16 lanes

im sure both my cards on both separate machines are underutilized, or at least one is, somehow for some reason