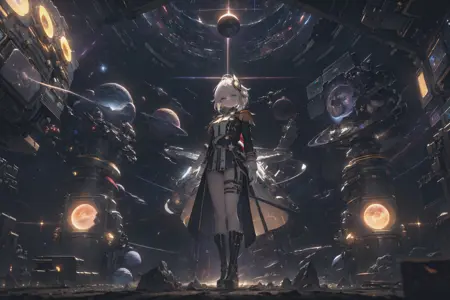

饺子皮是工场做的可是我答应质量很好。比手工还好吃!

Description:

A stylized anime model. This model is the result of attempts to get the textures from MouseyMix, a model that I'm very fond of, on characters with more realistic body proportions. The name 'Gyoza' part of 'Store Bought Gyoza' comes from a shortening of "Gyokai" + "Zankuro", two of the artists MouseyMix is trained on. The Store Bought comes from the fact that the 'bones' of this model, i.e. the primary compositional element is SD-Silicon, or to be more specific Silicon-29. SD-Silicon is made using autoMergeBlockWeight, an automated block merger, hence the dumpling is "made by machines". In other words, 工厂做的,商店买的。

The strengths of this model is that you get a very soft illustrative style model with bright texturing but with very powerful scenery and landscapes. V3 and V3.2 are best for generating generating anime girls. VV(5) is the most powerful but it's also the most wild. Use it if you really want some cool and unique stuff.

Methodology:

Everything is on this HuggingFace repository, along with the recipes. I go over every step and include links to the things used if they're publicly available. If you have anything to ask, don't be hesitant to leave a comment. I believe in an open-source approach to Stable Diffusion model mixing so I'll answer any questions you have on the process. It uses Block Weight Merging pretty extensively, so I would recommend you read up on UNet Blocks as a starting point.

But if you want a general rule of thumb, basically what we're doing is splitting the model mixing process into two parts: a picture composition/landscape arrangement component and a textural component. The former we can make by reverse-cosine Block Weight Merging any sort of highly detailed anime model against Silicon-29. I like to use DetailedProject variants. Then, after we tune up the textual component to get the exact sort of texture we want, we cosine Block Weight Merge the textual component against our compositional component. After that, finetune for taste and we're ready.

Additionally, experimentally in 3.5D and culminating in VV, there is a use of a new block weight formula for transferring the anatomy-sense of model and the style/texture-sense of models used during the mid-mix formula. This allows us to do stuff like move the textural oilpaint-like feeling of various CSRB-based mixes onto the body composition of a more pedestrian anime-mix. See this HuggingFace repository for more details and proof-of-concept mixes of some popular models.

Lastly if you're truly interested in my dumb checkpoint, I store all my work in progress + failed versions here. This is a private repository, you need to join LMFRS to access it.

皮子是买的可是馅子是新鲜的。

Usage:

Basic universal SD1.5 rules apply. Other than that, these are my personal recommendations:

Any sort of Negative prompt should work more or less fine. I like using the AuroraNegative (and KHFB) because this is a very light but powerful negative-embedding that does not distort the image a lot. However, there are a fair bit of 'bad thing' tokens inherited from the mix components and natural negative prompts should get you where you want in most cases.

Clip skip matters a lot. Clip Skip 2 will generally get you your standard 'novelai' like generation, or the closest thing to it, but with later variations and especially with VV Clip skip 1 will make the checkpoint do some truly dynamic and interesting stuff. I recommend trying out Clip Skip 1 if you're going for a wide aspect angle, maybe it will surprise you.

Version 3.2, 3.5D, and V(5) have baked VAEs. Earlier versions uh... I don't really remember if they do, to be honest. V(5) is recommended be used with its VAE, the lighting effect is extremely strong otherwise and you might get an output that's too bright.

Final Notes:

Please don't strip the metadata if you generate with this model, or at least specify that you're using GyozaMix. I don't really care what you do with this model- in any case I couldn't even stop you if I did, but I really enjoy seeing the pretty pictures that people generate with GyozaMix. That's it. Do whatever with this now that I've put it into the world.

Description

Made it just in time for Thanksgiving. Happy Holidays, everyone!

This version is dedicated to UnknownNo3, who makes the best generations with my stuff and also asked for a GyozaMix update. 我做了个新的饺子Mix就是因为你要求。希望你用的很曼怡。

FAQ

Comments (6)

倍感荣幸!💖💖💖

Here we gooooooo~

winter edi is so hard to use but so good for every small detail. 老大还有未来版本吗

Thanks. 可能不会吧。每次调整Quality,Usability好像老往下走。SDXL现在也开始好了。

https://civitai.com/models/216159

我用了这个,行在比SD1.5好了。还是所追有用的是加Token Count。SD1.5要学行的东西就要忘记东西。

反正我写了这么多就是所可以再做一个可是不会全部改进。不知道要是人家会觉得何必。

Thanks. 可能不会吧。每次调整Quality,Usability好像老往下走。SDXL现在也开始好了。

https://civitai.com/models/216159

我用了这个,行在比SD1.5好了。还是所追有用的是加Token Count。SD1.5要学行的东西就要忘记东西。

反正我写了这么多就是所可以再做一个可是不会全部改进。不知道要是人家会觉得何必。

@Jemnite Thanks for the reply.理解大佬的幸苦。我不懂如何调教模型,但单从使用感上这个模型版本确实对很多单词反应太敏锐,会有严重的单一感。如果想出一张想象中的图需要描述很多,描述多了图的质量又下来了然后在skip1.2.3之间反复。但大多数时候出的图就是其他模型比不上的细节度,比很多第一段留下noise然后在第二段的Ksample追加prompt的还要养眼,而且我喜欢这个art style。非常感谢。

为什么我没有加任何背对我的关键词,但是生成的图总是背对我的