Update: v3.0 is out. Same copy pasta as the v2 vs v1 notes further down, just read it as v3 vs v2.

I recommend CFG 2-4.

CFG 2 is more realistic, but also vibrant.

CFG 3 gets pretty interesting with glowing and effects.

CFG 3.5 goes a bit crazy.

CFG 4.5 gets its crap back together and starts acting normal.

(if you cannot zoom in on this image, open it in a new tab.)

I have been using around 10-20 steps; 15 should be more than enough.

If you use Hires Fix, I would recommend starting at 5 steps and working your way up from there, but generally 6 and above feels "overexposed".
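The v3.0 numbers above can be written down as a small sanity check. This is only a sketch: `V3Settings` and `check_v3` are made-up names for illustration and are not tied to any particular UI or API; the ranges are copied from the notes above.

```python
from dataclasses import dataclass


@dataclass
class V3Settings:
    # Hypothetical container; field names are illustrative only.
    cfg: float = 2.0
    steps: int = 15
    hires_steps: int = 5  # only matters when Hires Fix is enabled


def check_v3(settings: V3Settings) -> list[str]:
    """Return a warning for every value outside the ranges suggested for v3.0."""
    warnings = []
    if not 2.0 <= settings.cfg <= 4.0:
        warnings.append(f"CFG {settings.cfg} is outside the suggested 2-4 range")
    if not 10 <= settings.steps <= 20:
        warnings.append(f"{settings.steps} steps is outside the 10-20 range")
    if settings.hires_steps >= 6:
        warnings.append("Hires Fix at 6+ steps may look overexposed")
    return warnings


if __name__ == "__main__":
    print(check_v3(V3Settings()))  # defaults sit inside every range
    print(check_v3(V3Settings(cfg=5.0, steps=30, hires_steps=8)))
```

The warnings are advisory; values outside the ranges still run, they just may not look the way the samples do.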

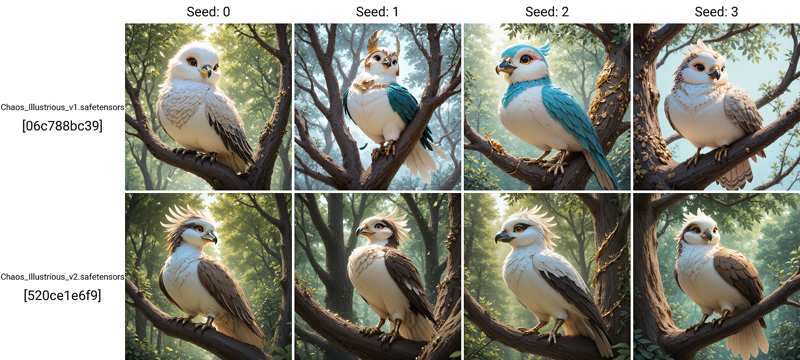

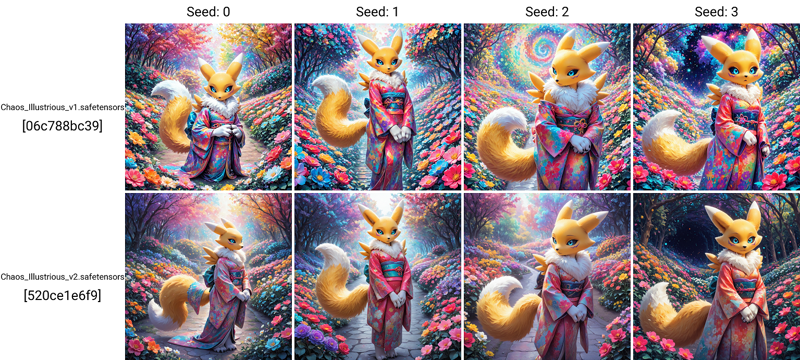

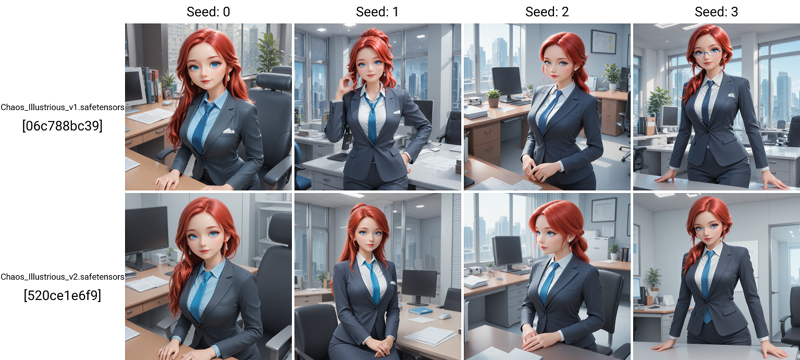

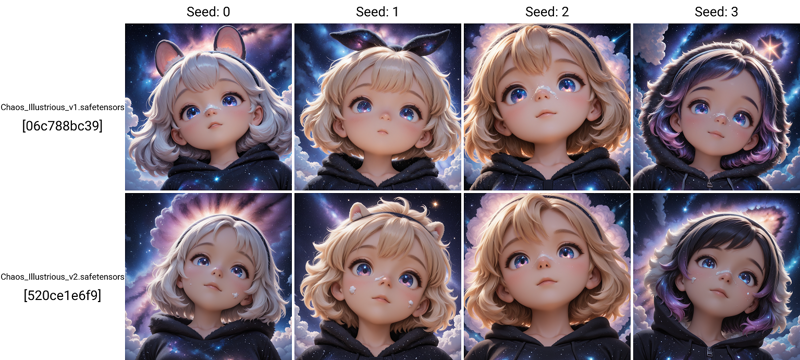

Image comparison between the versions.

Best sampler combos

Euler with Karras

Kohaku_LoNyu_Yog with Karras, Normal, Karras Dynamic, or Align Your Steps GITS

Seeds 2 DP with Karras, React Cosinusoidal DynSF, DDIM, Beta, PHI, Laplace, Align Your Steps GITS

Seeds 3 DP with Karras, Simple, DDIM, Align Your Steps, Beta, Turbo

HeunPP2 with Karras, Align Your Steps GITS

IPNDM_V with Karras

DPM++ 2M SDE Turbo (does not actually use a scheduler, so any will work)
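If it helps to keep the combos above in a script, they fit in a plain lookup table. A rough sketch: the sampler and scheduler strings are copied as written in the list, and the identifiers your UI uses internally may differ, so treat the names (and the `is_recommended` helper) as assumptions.

```python
# Sampler -> schedulers listed as good pairings above.
RECOMMENDED = {
    "Euler": {"Karras"},
    "Kohaku_LoNyu_Yog": {"Karras", "Normal", "Karras Dynamic", "Align Your Steps GITS"},
    "Seeds 2 DP": {
        "Karras", "React Cosinusoidal DynSF", "DDIM", "Beta",
        "PHI", "Laplace", "Align Your Steps GITS",
    },
    "Seeds 3 DP": {"Karras", "Simple", "DDIM", "Align Your Steps", "Beta", "Turbo"},
    "HeunPP2": {"Karras", "Align Your Steps GITS"},
    "IPNDM_V": {"Karras"},
}

# Samplers noted as ignoring the scheduler choice entirely.
ANY_SCHEDULER = {"DPM++ 2M SDE Turbo"}


def is_recommended(sampler: str, scheduler: str) -> bool:
    """True if the pairing appears in the list above."""
    if sampler in ANY_SCHEDULER:
        return True
    return scheduler in RECOMMENDED.get(sampler, set())


if __name__ == "__main__":
    print(is_recommended("Euler", "Karras"))
    print(is_recommended("HeunPP2", "Beta"))
```

A pairing missing from the table is not necessarily bad; it just was not one of the listed combos.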

Sampler / Scheduler samples

(if you cannot zoom in on this image, open it in a new tab.)

Update v2.0 is out. It took me 6 months to complete and boy was it a pain. Do keep in mind that I was not able to get a de-facto 100% better version than v1.0 so I would recommend trying both till you figure out which one you like more.

Version 2.0 focuses more on character concepts, characters, and solidifying the style. I think users will find this version more cohesive with LoRAs and other tools. The pictures posted are generally basic and not processed to the model's full ability. It drives me crazy when people add a billion LoRAs and fancy processing steps to display images, as I feel it's not an accurate representation of the model.

17/20 images are using old / slightly modified prompts from v1.0, and MANY of these "enhancing terms" are no longer needed.

Version 1.0

Chaos Models merged from other checkpoints and LoRAs to focus on magical effects and rings, in an attempt to fill a gap in what is currently available.

I normally release how this was made, but I'll be honest, idk anymore. Somewhere between the merge blocks and hyper tuning I lost my document, up to the 90% mark.

This model is based on pseudo-realistic items with a focus on mythical creatures and furry stuff.

NOTES: some feedback from private channels noted that some LoRAs based on Illustrious v0.1 may be overpowered, and you may need to run them at lower strength.

Checkpoint is based on Illustrious v1.0 and may be overly sensitive to some keywords or tokens. I recommend starting simple and working up from there.

Recommend CFG 3.0-6.0.

3.0 will be softer than 6.0, but going above 7 may result in overprocessed-looking pictures.

Resolution 1024 to 1536 tested and working fine.

Steps 20 for decent images, 30 is the sweet spot.

Should be fine with most samplers and schedulers. Didn't notice any major issues with random sample testing.
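To compare the v1.0 settings above side by side, one option is to build a small test grid. A minimal sketch, assuming square images and whole-number CFG stops; the `sweep` helper and its dictionary keys are hypothetical and would need mapping onto whatever tool you generate with.

```python
from itertools import product

# Values taken from the v1.0 notes above; the in-between CFG stops are an assumption.
CFG_VALUES = [3.0, 4.0, 5.0, 6.0]
STEP_VALUES = [20, 30]
SIZES = [1024, 1536]  # treated as the edge length of a square image


def sweep() -> list[dict]:
    """Every CFG / steps / size combination, as plain dictionaries."""
    return [
        {"cfg": cfg, "steps": steps, "size": size}
        for cfg, steps, size in product(CFG_VALUES, STEP_VALUES, SIZES)
    ]


if __name__ == "__main__":
    for job in sweep():
        print(job)
```

Keeping the seed and prompt fixed across the grid makes the CFG and step differences easier to see.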

Prompting:

Some issues with the token "eyes" or "eye" were noticed in testing. If eye quality is diminished, use "contacts" instead. I have also noticed that this somehow improves hand quality as well. Idk either.

Example: green eyes -> green contacts
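The swap above can be automated for existing prompts. A small sketch using only the standard library; `swap_eyes_for_contacts` is a made-up helper name, and it only touches the standalone words "eye" and "eyes".

```python
import re

# Matches "eye" or "eyes" as whole words, so "eyeshadow" is left alone.
_EYE_WORD = re.compile(r"\beyes?\b", flags=re.IGNORECASE)


def swap_eyes_for_contacts(prompt: str) -> str:
    """Replace the standalone token "eye"/"eyes" with "contacts"."""
    return _EYE_WORD.sub("contacts", prompt)


if __name__ == "__main__":
    print(swap_eyes_for_contacts("1girl, green eyes, smile"))
```

Only apply it if eye quality is actually a problem in your results; the swap is a workaround, not a requirement.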

If using "ugly" in the negative, you may have issues generating some races and ethnicities or older / mature looking characters. I would recommend removing this from your negative if you are aiming for mature or realistic characters. I'm not sure why this is. I didn't train that.
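If your negative prompt is a reused template, the tag can be stripped with a few lines. A minimal sketch assuming a plain comma-separated negative prompt; `drop_negative_tag` is a hypothetical helper, and weighted forms such as `(ugly:1.2)` are not handled.

```python
def drop_negative_tag(negative: str, tag: str = "ugly") -> str:
    """Remove one tag (case-insensitive, exact match) from a comma-separated prompt."""
    tags = [part.strip() for part in negative.split(",")]
    kept = [t for t in tags if t and t.lower() != tag.lower()]
    return ", ".join(kept)


if __name__ == "__main__":
    print(drop_negative_tag("lowres, ugly, bad hands"))
```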

Description

Added a lot of new training material

Comments (11)

It has come to my attention that v2 may require you to prompt for clothing. Do what you want with that info.....

Some images are filter locked, so be sure you are checking everything if you are interested.

This checkpoint has ComfyUI metadata on it. If anybody wants to tweak the model, they absolutely can.

Yea, that's the point. I'm not trying to keep things secret from the community.

@Delsigina No need to act defensive with your comment, sir. I never knew checkpoints could store Comfy metadata.

@rommix0 Yea, I could have worded that better. I thought about deleting it and trying again, but that may have come off even more passive aggressive. Anyways, yea. I leave the metadata on all of my models for people to check if they want. I'm a fan of "using community resources to make a checkpoint is a community resource."

@Delsigina No worries. Anyways, that's pretty cool.

@rommix0 yeee, I mean, nothing in this checkpoint is "mine", as I can't train my own due to a bad GPU. I can run local, but that's as far as I can get with a 12GB 2060. If you know of ways I can train checkpoints on a 12GB card (preferably using OneTrainer), I'd be happy to make my own, but for now I'm stuck with merging others' stuff. Otherwise I can train my own LoRAs, which I have been doing. I'm using those to merge in and I have a v3 in the works, but I'm still tweaking it.

@Delsigina I'll keep you posted if I can figure out a way to train stuff. I've done a lot of model mixing in the past as well. It's a lot of fun. That's why I was excited when metadata could be recovered from checkpoints. A recipe I thought I lost I was able to recover from the metadata alone.

I have a 24GB 3090 for my local setup, so I could potentially easily train a new checkpoint or LoRA with it. I just haven't gotten around to it yet as I've been dealing with other hobbies. I'm juggling between this hobby of creating AI images and doing old school text to speech stuff.

@rommix0 Makes sense. I posted some pictures of the new checkpoint I'm working on. Feel free to check it out.

@Delsigina For sure man.
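For anyone curious about the metadata mentioned in the comments: if the checkpoint is a `.safetensors` file (an assumption, the thread does not say), the file starts with an 8-byte little-endian length followed by a JSON header, and free-form metadata lives under the `__metadata__` key. A rough sketch with a made-up helper name; which keys show up in there depends on the tool that saved the file.

```python
import json
import struct


def read_safetensors_metadata(path: str) -> dict:
    """Return the __metadata__ block from a .safetensors header, or {} if absent."""
    with open(path, "rb") as f:
        # First 8 bytes: unsigned 64-bit little-endian size of the JSON header.
        (header_len,) = struct.unpack("<Q", f.read(8))
        header = json.loads(f.read(header_len).decode("utf-8"))
    return header.get("__metadata__", {})


if __name__ == "__main__":
    import sys

    # Usage: python read_meta.py path/to/model.safetensors
    for key, value in read_safetensors_metadata(sys.argv[1]).items():
        print(key, "=>", str(value)[:200])
```

Only the header is read, so this stays fast even on multi-gigabyte checkpoints.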
