📂Files :

Recommendation :

>24 GB VRAM: base or Q8_0

16 GB VRAM: Q5_K_S

<12 GB VRAM: Q4_K_S
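
The recommendation above can be read as a simple lookup. The sketch below is only an illustration: the function name `recommend_variant` is hypothetical, and because the list does not cover exactly 24 GB or the 12 to 24 GB gaps, the thresholds are treated as lower bounds and the 12 to 16 GB range falls back to the smallest quant. Both choices are assumptions, not the author's guidance.

```python
def recommend_variant(vram_gb: float) -> str:
    """Map available VRAM (in GB) to the variant suggested in the list above."""
    # Assumption: 24 GB itself is counted in the top tier.
    if vram_gb >= 24:
        return "base or Q8_0"
    if vram_gb >= 16:
        return "Q5_K_S"
    # Assumption: the uncovered 12-16 GB range uses the smallest quant.
    return "Q4_K_S"


for gb in (8, 16, 24):
    print(f"{gb} GB -> {recommend_variant(gb)}")
```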

For base version

VACE Model: wan2.1_vace_14B_fp8_e4m3fn.safetensors or wan2.1_vace_1.3B_fp16.safetensors

in models/diffusion_models

CLIP: umt5_xxl_fp8_e4m3fn_scaled.safetensors

in models/clip

For GGUF version

VACE Quant Model: Wan2.1-VACE-14B-QX_0.gguf

in models/diffusion_models

Quant CLIP: umt5-xxl-encoder-QX.gguf

in models/clip

VAE: wan_2.1_vae.safetensors

in models/vae

Any upscale model (deprecated):

Realistic : RealESRGAN_x4plus.pth

Anime : RealESRGAN_x4plus_anime_6B.pth

in models/upscale_models
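
Since several files must land in specific folders, a quick check can catch a misplaced download. The following is a minimal sketch that assumes the base version with the 14B model; the helper `missing_files` and the `ComfyUI` root path are hypothetical, and for the GGUF version the mapping would need the actual quant file names you downloaded.

```python
from pathlib import Path

# Base-version file names and folders, copied from the list above.
BASE_FILES = {
    "models/diffusion_models": ["wan2.1_vace_14B_fp8_e4m3fn.safetensors"],
    "models/clip": ["umt5_xxl_fp8_e4m3fn_scaled.safetensors"],
    "models/vae": ["wan_2.1_vae.safetensors"],
}


def missing_files(comfy_root, expected):
    """Return relative paths of expected files that are absent under comfy_root."""
    root = Path(comfy_root)
    missing = []
    for folder, names in expected.items():
        for name in names:
            if not (root / folder / name).is_file():
                missing.append(f"{folder}/{name}")
    return missing


if __name__ == "__main__":
    # "ComfyUI" is a hypothetical install location; point it at your own folder.
    for path in missing_files("ComfyUI", BASE_FILES):
        print("missing:", path)
```

An empty result means every listed file was found where the list above says it belongs.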

📦Custom Nodes :

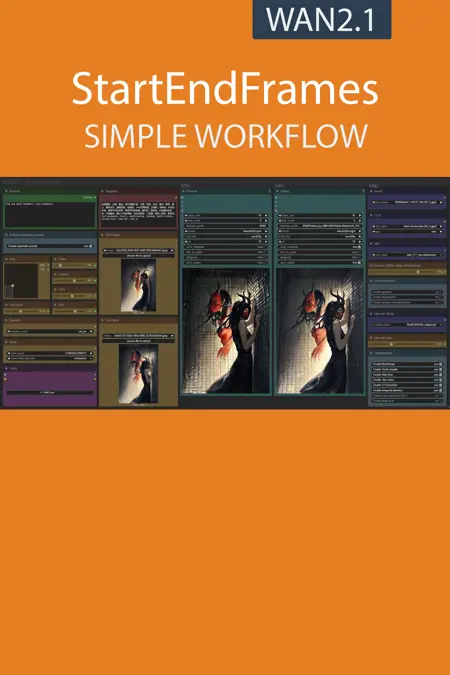

Description

A completely redone version built on VACE, with the optimizations from my new workflow.


Comments (9)

Thanks for this, but how do you install MagCache? It can't be downloaded from the Manager and it's not in your nodes auto-installer.

I have the same problem.

Does this only work with VACE, or can it work with the basic Wan 2.1 too?

v3.0 uses VACE; older versions use the normal model but give worse results.

The base version threw up strange errors about not recognizing the model, and I tried them all. I don't understand that one. But the GGUF version is working. FFLF is awesome, perfect for bridging the gap between frames of clips that are not close enough for the usual editing tricks. Looking forward to playing around with this some more. The workflow does have a ton of nodes collapsed that really shouldn't be. Pretty for the wf image, I suppose. There be much expanding to do when you start.

Is it possible to add a background remover?

Have been having an odd issue with this one. The preview shown will have the full completed clip but the saved output will have the first second or so cut off.

Actually, never mind: they all skip the first 0.5-1 seconds, with just the first frame in there.

I have this error:

SamplerCustomAdvanced

mat1 and mat2 shapes cannot be multiplied (154x768 and 4096x5120)

What can I do?
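
A note on reading the shapes in this error, offered as a sketch rather than a confirmed diagnosis: a matrix product needs the column count of the first matrix to equal the row count of the second, and here 768 does not equal 4096. The helper `matmul_shape` below is hypothetical and only reproduces that rule with plain integers. As an assumption not confirmed by the author, 4096 matches the hidden size of the UMT5-XXL encoder listed under Files, while 768 is typical of CLIP-L-style text encoders, so one plausible cause is that a different text encoder was loaded.

```python
def matmul_shape(a, b):
    """Return the result shape of a 2-D matrix product, or raise on a mismatch."""
    (a_rows, a_cols), (b_rows, b_cols) = a, b
    # The inner dimensions must agree for the product to be defined.
    if a_cols != b_rows:
        raise ValueError(
            "mat1 and mat2 shapes cannot be multiplied "
            f"({a_rows}x{a_cols} and {b_rows}x{b_cols})"
        )
    return (a_rows, b_cols)


try:
    # Shapes taken from the error message above.
    matmul_shape((154, 768), (4096, 5120))
except ValueError as err:
    print(err)

# With a 4096-wide embedding the same product is well defined.
print(matmul_shape((154, 4096), (4096, 5120)))
```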