📂Files :

Recommendation :

>24 GB VRAM: base or Q8_0

16 GB VRAM: Q5_K_S

<12 GB VRAM: Q4_K_S

For base version

VACE Model: wan2.1_vace_14B_fp8_e4m3fn.safetensors or wan2.1_vace_1.3B_fp16.safetensors

In models/diffusion_models

CLIP: umt5_xxl_fp8_e4m3fn_scaled.safetensors

in models/clip

For GGUF version

VACE Quant Model: Wan2.1-VACE-14B-QX_0.gguf

In models/diffusion_models

Quant CLIP: umt5-xxl-encoder-QX.gguf

in models/clip

VAE: wan_2.1_vae.safetensors

in models/vae

Any upscale model (deprecated):

Realistic : RealESRGAN_x4plus.pth

Anime : RealESRGAN_x4plus_anime_6B.pth

in models/upscale_models
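
A quick way to confirm the files landed in the right folders is a small check script. This is only a sketch for the base (non-GGUF) setup with the 14B model: the `find_missing` helper and the `ComfyUI` root path are assumptions for illustration, so adjust the file names if you use the 1.3B or GGUF variants.

```python
from pathlib import Path

# Expected locations for the base setup, taken from the file list above.
EXPECTED_FILES = {
    "models/diffusion_models": ["wan2.1_vace_14B_fp8_e4m3fn.safetensors"],
    "models/clip": ["umt5_xxl_fp8_e4m3fn_scaled.safetensors"],
    "models/vae": ["wan_2.1_vae.safetensors"],
}


def find_missing(comfy_root, expected=EXPECTED_FILES):
    """Return the expected model paths that do not exist under comfy_root."""
    root = Path(comfy_root)
    missing = []
    for folder, names in expected.items():
        for name in names:
            path = root / folder / name
            if not path.is_file():
                missing.append(path)
    return missing


if __name__ == "__main__":
    # "ComfyUI" is a placeholder; point it at your own install directory.
    for path in find_missing("ComfyUI"):
        print("missing:", path)
```

If the script prints nothing, every listed file was found where the workflow expects it.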

📦Custom Nodes :

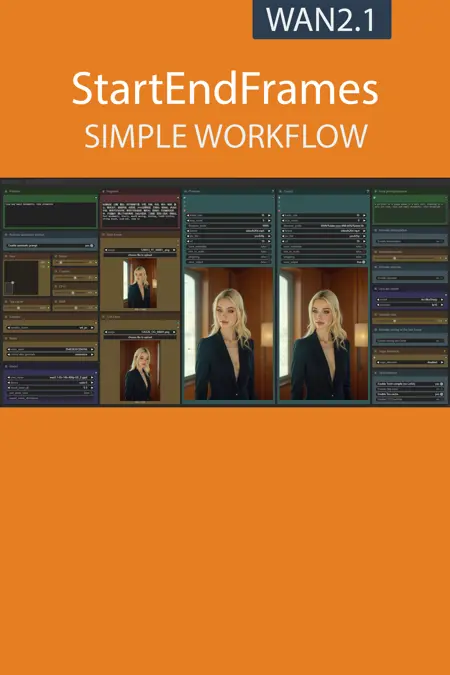

Description

What's new? :

ComfyUI 0.3.29 positive node fix,

LoRA fix with torch compile.


Comments (14)

What am I doing wrong?

When loading the graph, the following node types were not found

UpscalerTensorrt

LoadUpscalerTensorrtModel

CFGZeroStarAndInit seems to have disappeared?

I keep getting this error whenever I change the default frames value: Node ID: 374 - Node Type: WanImageToVideo_F2 - Exception Type: RuntimeError - Exception Message: shape '[1, 16, 4, 128, 90]' is invalid for input of size 771840

It seems the WanImageToVideo node's 'length' parameter has to match the value in the Frames node exactly. They are supposed to be the same, but something is making them differ. I set my frames to whatever I want (e.g. 120), then disconnect the link on the WanImageToVideo node and set 'length' to the same value (120). For some reason it rounds to 121, so I right-click the Frames node, set the frames value to 121 as well, and then it works.
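
The 120 to 121 rounding is consistent with the node only accepting lengths of the form 4n + 1 (1, 5, ..., 81, ..., 121). That is an assumption based on the behavior described above, not something confirmed on this page. Under that assumption, a small hypothetical helper shows which value to enter in the Frames node so both sides agree:

```python
def snap_to_wan_length(frames: int) -> int:
    """Round a requested frame count up to the next value of the form 4n + 1.

    Assumption: the node only accepts lengths 1, 5, 9, ..., which would
    explain why 120 becomes 121 in the comment above.
    """
    if frames < 1:
        raise ValueError("frames must be at least 1")
    n = (frames + 2) // 4  # integer ceiling of (frames - 1) / 4
    return 4 * n + 1


if __name__ == "__main__":
    print(snap_to_wan_length(120))
```

Entering the snapped value in the Frames node up front avoids the mismatch between the two settings.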

The WanImageToVideo_F2 node is missing, even though I installed everything correctly.

The missing node comes from this package:

https://github.com/Flow-two/ComfyUI-WanStartEndFramesNative

You can also find it in the Manager. My guess is that they changed something after this workflow was made.

Is it possible to combine this one with the regular I2V workflow? For example, so that one could simply bypass the end frame node and the workflow would act as I2V instead of start-to-end frame?

Would be convenient to have it as one workflow.

Of course you can. Just copy the groups you need into your own workflow. From the I2V workflow, use Get/Set nodes to pass the sequence after the decoder into the Loop process.

Is it normal for this to take much longer than the standard I2V workflow, all settings being equal?

Does anyone know how to fix the flashing issue at the end of the generated videos?

same problem