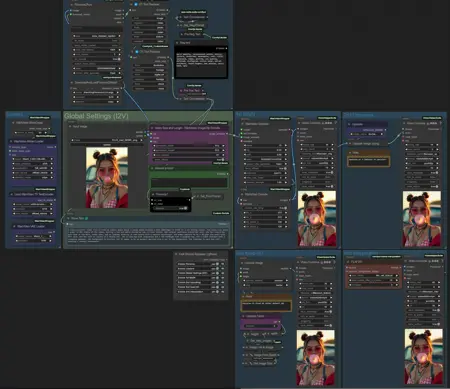

For v1.0

For v2.0

1) I2V Quant Model -> models/diffusion_models

2) CLIP VISION -> models/clip_vision

3) VAE -> models/vae
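A quick way to confirm the files landed in the right place is a small check script. This is only a sketch: the three subfolders are taken from the list above, while the function name `find_empty_model_dirs` and the idea of passing the ComfyUI root as an argument are illustrative assumptions, not part of the workflow.

```python
from pathlib import Path

# Subfolders named in the list above, relative to the ComfyUI root folder.
MODEL_DIRS = {
    "I2V quant model": "models/diffusion_models",
    "CLIP Vision": "models/clip_vision",
    "VAE": "models/vae",
}


def find_empty_model_dirs(comfy_root):
    """Return the labels whose folder is missing or contains no files.

    Hypothetical helper: it only checks that each folder holds at least
    one file; it does not verify that the file is the correct model.
    """
    root = Path(comfy_root)
    empty = []
    for label, rel_path in MODEL_DIRS.items():
        folder = root / rel_path
        has_file = folder.is_dir() and any(p.is_file() for p in folder.iterdir())
        if not has_file:
            empty.append(label)
    return empty
```

Calling `find_empty_model_dirs("ComfyUI")` from the folder that contains your ComfyUI install returns the labels that still need a model file; an empty list means all three folders have something in them.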

The prompt can be written by Florence2 (the workflow has a toggle between the Florence2 caption and a manual user prompt).

Tested on 4080 16GB

v2.0 dev is in development. If you have problems with the AnyPython node, switch the ComfyUI-Manager channel to recent and/or lower security_level (to normal- or weak) in ComfyUI/custom_nodes/ComfyUI-Manager/config.ini
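If you prefer to script the security_level change, here is a rough sketch. It assumes the ComfyUI-Manager config is an INI-style file with a `[default]` section holding a `security_level` key, and that the accepted levels are strong, normal, normal- and weak; check your installed file before relying on this. The function name `set_security_level` is made up for illustration. Note that rewriting the file this way drops any comments in it, so keep a backup.

```python
import configparser

# Assumed set of levels; verify against your ComfyUI-Manager version.
ALLOWED_LEVELS = {"strong", "normal", "normal-", "weak"}


def set_security_level(config_path, level="normal-"):
    """Set security_level in an INI-style config file (hypothetical helper)."""
    if level not in ALLOWED_LEVELS:
        raise ValueError(f"unknown security level: {level!r}")

    # interpolation=None keeps any '%' characters in other values untouched.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read(config_path)

    # Assumption: the settings live in a section named [default].
    if "default" not in parser:
        parser["default"] = {}
    parser["default"]["security_level"] = level

    with open(config_path, "w") as f:
        parser.write(f)
```

Restart ComfyUI after changing the file so the new level is picked up.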

Comments (6)

Loving it! Do you think Triton Compile and Teacache could get in on the action? Or, instead of using just an upscaler, could you use Flux somehow to upscale, or would that be too much? I'm going to try it with your workflow, ha, and thank you so much for this!

Oh, and I also changed the auto prompt a bit. After Florence2 gets the caption and modifies it, I send it over to Ollama Advance, and with a small LLM I tell it to "Revise this video prompt to describe the elements of the video, focusing on the motions or actions of the subject in the short clip, as well as the type of camera shot or what the camera is doing. Provide the new prompt in a single paragraph only." That way it's more video focused and not image focused. For example, I had a photo of a dog, and Florence would always say it's in mid air, which would always turn into just a dog jumping around to be in mid air. After my Ollama add-in it mostly describes the dog as running. I also used the pre-prompt to describe what I wanted as basically as possible, "a dog running thru a valley", and it comes out good: "A dog running through an open field during golden hour, captured on a sharp 16mm video that highlights its joyful agility and wild energy. The vibrant hues of orange, yellow, and blue add to the dynamic scene, emphasizing both the dog's natural hunting instincts and the natural beauty of the setting."

Wan video came out literally just now, so everything is still being tested. I think within a couple of weeks the community will have both Triton and Teacache connected. As for upscaling with Flux, Wan video is eating up all 16GB, so I'm not sure about that.

Any reason we can't already pass the final frame to another gen?

Nice, thanks for sharing!