Unlock xxx images using flux, Qwen or Wan 2.2.

ATTENTION

Second version for WAN 2.2 T2V works with the usual models BUT for T2V I recommend some different models and LoRas:

https://huggingface.co/lightx2v/Wan2.2-Lightning/blob/main/Wan2.2-T2V-A14B-4steps-250928-dyno/Wan2.2-T2V-A14B-4steps-250928-dyno-high-lightx2v.safetensors instead of wan 2.2 high

https://huggingface.co/eddy1111111/WAN22.XX_Palingenesis/blob/main/WAN22.XX_Palingenesis_low_t2v.safetensors instead of wan 2.2 low (removed this recommendation)

NO speed/low step loras on high noise phase

https://huggingface.co/alibaba-pai/Wan2.2-Fun-Reward-LoRAs/blob/main/Wan2.2-Fun-A14B-InP-low-noise-HPS2.1.safetensors with STR:1.0 on low noise phase

https://huggingface.co/lightx2v/Wan2.2-Lightning/blob/main/Wan2.2-T2V-A14B-4steps-lora-250928/low_noise_model.safetensors with STR:1.0 on low noise phase

???

Profit!

All my videos have workflow embedded, the workflow is just a suggestion. But it works, and works very well.

First version for KREA.

Start using: 20 steps, res_2s, bong_tangent, STR 0.8

After that you can experiment with other arguments. It is not very good and deforms hands and feet very badly.

ALL PICTURES ARE PNG WITH WORKFLOW INCLUDED

It is not perfect, sometimes it creates monsters, detached limbs and strange things, just try another seed or adjust your prompt. Eventually it gets it right.

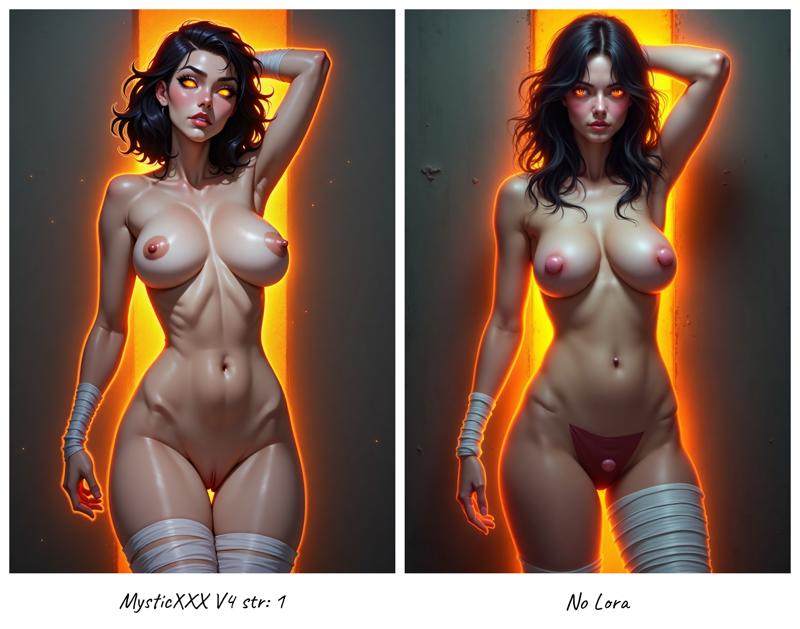

Examples:

Recommended Usage:

Checkpoint: flux1-dev

Strength: 1 (adjust depending on the image)

Sampling Method: DPMPP_2M

Scheduler Type: BETA

CFG Scale: 4

Steps: 35

Feel free to share your creations and feedback so I can improve this model and continue contributing to the creative community!

Description

First version

Comments (106)

Loaded the workflow from your example videos to see how to use the models you recommend, but it's loading as a generic wan2.2 workflow. Do you have one with the recommended models loaded?

That recommendation is for t2v, I'll add some text clarification later. For i2v I'm using the basic models and a lora. The example images show how I create all pictures.

Your suggested model file (https://huggingface.co/lightx2v/Wan2.2-Lightning/blob/main/Wan2.2-T2V-A14B-4steps-250928-dyno/Wan2.2-T2V-A14B-4steps-250928-dyno-high-lightx2v.safetensors instead of wan 2.2 high) does not work for I2V as it is T2V.

Yeah, you are absolutely right. This suggestion is for the wan t2v. I didn't update the text and I'm on my phone, tomorrow I will adjust it. I'm sorry.

Funny thing, I just wanted to test all the speed-up loras for low noise I've got, sorted them by "light", started testing the wf and went to sleep. In the morning I was like "wtf, Wan2.2-T2V-A14B-4steps-250928-dyno-high-lightx2v.safetensors crashed comfy after 20 minutes!". And yes, it was not a lora: I saw the 20+gb size and remembered where I got it -_-.

@forfreelsd368 yes, not to mention that they gave the whole model the same naming convention as the loras, which threw me off.

@FemBro and (just don't tell anybody) almost all loras show the same results (and the two or three that show different results show the same within their group). It's like there are only two or three distinct loras (tested wan2.1 and wan2.2 lightning and lightx2v) among all of them that are not just a renaming or alignment -_-

What is the function of this lora?

Which one? For all non wan to create images, for wan videos and images. If you are asking the last one, it helps on the common motions that we saw in this kind of video. Try it with and without you will see the difference.

@alcaitiff What is the function of the wan 2.2 i2v v1 version? I will try them

@xiaobai9009 It helps on the common motions that we saw in this kind of videos. Try it with and without you will see the difference.

Short version: better motion movement....

https://huggingface.co/QuantStack/Wan2.2_T2V_A14B_4steps_25-09-28_Dyno_High_lightx2v-GGUF

Edit:[Here is the GGUF versions of your suggestions. I found them on accident after looking all over so I came back to share as this is the only way I can do most things and I'm guessing many others are in the same boat.]

Hey this is pretty impressive. I have tried these other universal deals and still they want the trigger words which I have grown to despise, but this actually had my images doing naughty things with no lora and natural language and that is tight so kudos. What even brought me here was seeing some videos in the feed out there that were so unique that I was asking how did they pull that weirdness off and your lora was all they used. =P

Thanks

Absolutely amazing, your lora does what most lora’s can’t, provide the intended action without wildly morphing up the female in the prompt.

How do you get good looking penises in Wan version? It's generating mangled, deformed penises even if there is one in image.

i fixed adding "penislora" at 0.45

Would u mind telling me how u caption ur data? I'm trying to make a lora like yours for learning purposes.

For the Qwen Ver.

I have an article that explains the method used for the base. After that, everything is done manually.

Thank U! So it's like using the tool u made, and manually fixing it for better performance? By changing the verbs and nouns into the same words?

@BaShanWu there are details that models always get wrong, position names, etc. So I adjust it.

@alcaitiff Ya, qwen doesn't seem to understand left and right well.

Dear friend,

I have a question

That if I can extract mutiple concepts from one image?

For example, A pic of a nude girl.

I discreibe it as, The girl in the image who's name is "123" (So This can be used in a character lora)

She has ”Type-1“Body Shape.(and this can be use to creat a similar body shape)

She has ”Type-A“ body characteristics。(this is to creat a exact body include tattoo, big or small nipples.hairy pussy or even scars)

She has XXH breasts.

She Has hairless Pussy.

She has super long legs.

By doing this ,i assume with this 1 lora,I can use it as a character lora,and a body shape lora,and body characteristics lora ,and boobs lora,and pussy lora,and legs lora.

And Should I must describe the light so those concepts could be rightfully use in different light condition?

So to bother u for lots of questions.

But I really needa know and cant find answers else where.

THANK U!

@BaShanWu yes you can. But you will need a large set of images with different combinations of this characteristics, your training will be a lot longer than usual and it will take time to get it right.

@alcaitiff 48 pics under different light and stances,front,side,back,and both naked and colthed should be enought?

@BaShanWu 20-40 pics for each concept configuration more or less. To teach several positions and anatomy parts I used from 500 to 3k images. Each model is different so it may vary.

Why are nipples added to the buttocks in the WAN 2.2 model? I've tried to fix it, but I can't find a solution.

lower the low noise strength (or remove it all together)

A bit of a odd question but can I make women lactate and squirt breast milk with this flux and Qwen checkpoint? Or do I need a different Lora.

im surprised there isn't a lora of this yet

I get oversaturated images, why?

Is the strength too high? Test it with strength 0.5. I know this from using the LoRA at strength 1.0 with Flux1-DEV-SRPO; for good results with SRPO I use 0.6-0.7 strength. Also check your sampler and scheduler settings.

I tried with the example image workflow, I installed the missing nodes and the result is a total let-down, the image is extremely blurry, you cannot distinguish anything... Anyone got some tips on how to use this? P.S. I am using flux1d

Same. The workflow in the image produces an extremely blurry/hazy output and it doesn't perform any action besides turning left and right a bit. Worst result I've ever seen in a I2V workflow. Something must be wrong in their workflow's settings.

Hi @kudon44, I think there may be a bit of confusion here — one person is referring to Flux1D, while the other is talking about I2V, so the conversation doesn’t quite align.

@danielamocanukty333, you can use any workflow you prefer; this LoRA works just like any other. Just make sure you’ve downloaded the version made for Flux, as we provide LoRAs for several different models. For Flux, please use Flux - v7.

@alcaitiff Hey, would you mind sharing a simple workflow that has this lora working? I tried everything and still don't get any results

@alcaitiff Oh, you are correct. I either missed that or they added it after. Either way oops.

@danielamocanukty333 I’ve shared several different workflows with my images, so it seems there might be an issue on your side. These LoRAs have been used to create thousands of images successfully, so they do work. I’d be happy to help — could you please post one of your images along with its workflow? I’ll download it and take a closer look to figure out what’s going wrong.

This doesn't work on qwen, the image doesn't show up, only noise

Oh, how utterly fascinating—might you be suggesting that every image and video I've glimpsed in the gallery is simply a figment of my overactive imagination? And here I thought that the silent endorsement from over 10,000 delighted downloaders was a rather compelling vote of confidence, rather than some elaborate, globe-spanning plot designed solely to bamboozle you. Pray tell, could it be possible that the issue lies not in the tool itself, but perhaps in a slight mismatch—like pairing it with Qwen Edit or the 2509 variant, when it's ever so graciously tailored for Qwen Image alone? Just a thought, of course.

@alcaitiff Yeah sorry, forgot to write, it doesn't work with qwen edit 2509.

@cvengg Yeah, it's not made for it.

I'm cooking a version for 2509. It will take a week if we are extremely lucky training it.

@alcaitiff niiice to hear :)

@p34313 still working on it, started over too many times already, when it learns it fries. Starting with a new configuration today.

@alcaitiff let us know how we can support you :) help labelling or financially or send girls over to realax haha

@p34313 I created a https://ko-fi.com/alcaitiff

@p34313 It's done https://civitai.com/models/2163063/qwen-edit-2509-unchained-xxx

Is this a lora that works with FLUX, wan and qwen alike?

There are separate versions for each model — FLUX, WAN, and Qwen. They are different files, and you can find the one you need by clicking on the links at the top.

@alcaitiff OK, thank you

What checkpoint do you recommend to use? With your images, do you use just a default checkpoint/model

The LORA works fine, but often the nipples show through the clothes — what could be the reason?

I’ve already tried several settings.

Example IMG

I'm sorry, but this image doesn’t include a workflow, and you also didn’t mention which model or version you’re using. Since I can’t see your prompt, it’s impossible to identify the issue. I recommend downloading an image you like from the gallery that includes the workflow, and using that as a starting point.

Your workflow is not working. Like others have reported, I get an extremely blurry/hazy output with next to zero, and I do mean nearly zero, movement. Person just kind of slightly turns left and right.

I used the workflow attached to the 2nd image, the one with the girl licking her own boob. Kept all settings at default on a RTX 4090 with 64 GB RAM. I've tried more than one image, too.

Any idea what might be up that can be fixed?

The video was created using the workflow, so it should work correctly. Could you please share one of your videos that isn’t working, along with its metadata? I’ll take a look and see if I can identify what’s causing the issue.

I’m curious to know what it will mean to be a result on my femboy’s

This model is excellent for my first attempt on transgender people with chastity cage A big thank you alcaitiff

Is the strength recommendation correct? 1 for both HIGH/LOW?

FOr some reason, I have gotten perfect results even at .50!

Where do u have the workflow for your wan 2.2 i2v model? I can't figure out how to apply it. I have the default comfyui template with the loras between model and sd3 and no nudity is rendered

My videos have the workflow embedded, just drop one on comfyui and you are set to go

interestingly it does not work with qwen edit 2509, BUT it does work with the nunchaku version of qwen edit 2509. Might have something to do with the custom lora loaders for nunchaku

I hope to see this Lora for Z image , I saw great images with it on wan, krea or qwen :)

Yes, I'm close to getting it right. But I have some work to do and the training is on hold for a week. I will continue it when I'm done with this job.

@alcaitiff you're not waiting for the Z image base to be released to avoid any problems?

@thierrys29200882 no. I think I can do it on turbo.

@alcaitiff In any case, thank you in advance for this lora ;) AI Toolkit can do the job on the distilled version, right? I'm not at the stage of producing loras yet, I'm just trying to get decent images ^^

@alcaitiff is it great for undressing workflow?

@carlitajohn89931 ZIT edit is not released yet, you may use with inpaint. For this you can use qwen edit and prompt "Remove the woman's clothes" or "Make she lifting her shirt exposing her breasts". You can do very funny things with this. Just use your creativity.

@alcaitiff the think is i don't have an opinion on the best qwen lora cloth removing u have a clue?

ZIT XXX is here https://civitai.com/models/2206377?modelVersionId=2484202

@carlitajohn89931Use mine, ask it to remove her clothes and keep the facial and body the same

@alcaitiff glad ur active now hope u still, want to k now if u have the workflow corresponding to and another qustion : do u have a qwen wf with a good qwen lora remove like that actually works? have some qwen char loras to test that why.

@carlitajohn89931 https://civitai.com/images/113354616

How I got it to work:

In ComfyUI: download the "high" and the "low" files from this page. Then put them between the main model and the loraupdatemodel in a "load Lora" node. Also connect the clip to it. Voila, it works.

What is the trigger string for Lora?

None, there is no trigger you only prompt what you want.

Z-Image turbo needs this. BTW your recommendation of Ostris AI Toolkit really helped me. I finally train a local LoRA for Z-Image

Already done

@alcaitiff Oh nice! Is it going to be uploaded with this?

@SaulVulcano https://civitai.com/models/2206377/zit-mystic-xxx

Right click save videos, images as ... no workflow found in comfyui?

I think I already know the answer for this but I have to ask anyways: are you considering a Flux2 version of the Mystic lora? I know it's a beast to train anything on in the first place, was just curious if you had looked into it at all.

No, I'm doing some zit right now. Qwen 2511 maybe, but flux 2 is too big. Almost no one will use it.

@alcaitiff I figured that was the case, thanks!

@alcaitiff too big to train or use? It can be run on 24/32GB quantized. VRAM sizes are only going to get bigger or people will move to the professional cards (which now have 96GB in some cases). I'm training my own flux2 loras because of limited availability.

Is mystic based on a public dataset or a private one?

@alcaitiff I think in due time it will be the most used, unless other models keep coming.

But foreseeably we will never get a better open weight model than Flux.2.

@yorgash Agreed. More and more models are going to start using larger language encoders because they offer so much more control. Now the hardware needs to catch up again.

Flux v7 does its nsfw job, but i feel like a lot of generated images, have same body and face structures, doesnt apply other types of variations for some reason.

Can I find a ready-made workflow for i2v somewhere?

Is it working with Qwen2512 T2I? It doesn't work for me, it just gives me a big blob of artefacts

One of the best I’ve seen so far. Can’t wait to use it. To save up some buzz.

You think you could retrain a new T2V version where faces have been masked out of the dataset? If not do you mind sharing the dataset?

As normal lora it doesn't work (i2v). There is also no workflow, I couldn't find it.

KLEIN 9B please sir

https://civitai.com/models/2348977/klein-9b-unchained-xxx

Which workflow do you use to work with this??

Please specify the version of Qwen this is for.

who can explain how to do a good workflow for this

Can we get any information on size of data set and training steps?

I would like to understand the training parameters used in the MysticXXX v3 model, including details such as dataset size, image quality, and other key training configurations. I am working on a similar use case, but I am currently facing issues like anatomical inaccuracies, body distortion, and the generation of extreme NSFW content during image inference after fine-tuning.

Details

Files

Wan2.2-I2V-High-MysticXXX.safetensors
