Improved Hunyuan Video. FP8 CUSTOM MERGE!

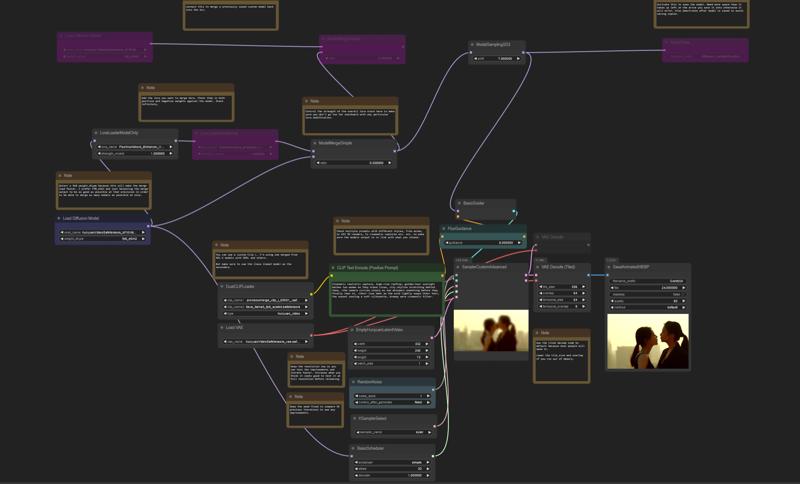

An example workflow for merging is available as the second download file. I merged the model with ~65 LoRAs.



Comments (40)

Any other information?

Heh. I went to sleep after uploading. Very tired, lol.

@Triple_Headed_Monkey Can't this be used with HunyuanVideoWrapper? I get an error message.

@ioritree Hunyuan video wrapper is deprecated and ComfyUI added native support for the models. I won't be making a version for HVW.

The workflow I used is contained in the showcase images. If you use that, it will function.

If it doesn't work, update ComfyUI.

@Triple_Headed_Monkey I see. I love using HVW for now, though.

@ioritree Unfortunately because the wrapper is not supported properly in ComfyUI, I can't work with it in the same way that I can the native implementation.

The only reason I am able to create this custom version of the model is because of the native model support without the wrappers.

@ioritree Unfortunately HVW has been deprecated by Kijai, which sucks because I like it too and built a custom workflow around it, including some code edits. MOST functionality can be replicated natively, but not yet context windowing or manual block swap. From my investigation, Euler + linear_quadratic is equivalent to "FlowMatchDiscrete". Use the torch.compile node from ComfyUI Essentials. EnhanceAVideo is available in ComfyUI-HunyuanLoom. Tea/First Block Cache is available too. Comfy's native memory management is pretty reasonable; make sure to launch with --use-sage-attention, and I get similar performance, if not slightly better.
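For anyone curious what "linear_quadratic" actually means: it is a sigma schedule that stays close to full noise for the first half of the steps and then drops along a quadratic curve. The sketch below is modelled on my reading of ComfyUI's linear_quadratic scheduler, so treat the constants and edge cases as assumptions rather than a copy of the shipped code. The function name and the default threshold_noise=0.025 are illustrative, and ComfyUI additionally scales the result by the model's maximum sigma, which this sketch omits.

```python
def linear_quadratic_sigmas(steps, threshold_noise=0.025, linear_steps=None):
    """Return steps + 1 sigmas falling from 1.0 (pure noise) to 0.0.

    Sketch of a linear-then-quadratic schedule (assumed to mirror the idea
    behind ComfyUI's linear_quadratic; details may differ). The "noise removed"
    value rises linearly to threshold_noise over the first linear_steps, then
    follows a quadratic that reaches 1.0 at the final step. The list is
    flipped (1 - x) so sampling starts at full noise.
    """
    if steps == 1:
        return [1.0, 0.0]
    if linear_steps is None:
        linear_steps = steps // 2
    quadratic_steps = steps - linear_steps

    # Linear segment: 0 .. threshold_noise (exclusive).
    linear_part = [i * threshold_noise / linear_steps for i in range(linear_steps)]

    # Quadratic segment chosen to start at threshold_noise and end at 1.0.
    step_diff = linear_steps - threshold_noise * steps
    quad_coef = step_diff / (linear_steps * quadratic_steps ** 2)
    lin_coef = threshold_noise / linear_steps - 2 * step_diff / quadratic_steps ** 2
    const = quad_coef * linear_steps ** 2
    quadratic_part = [quad_coef * i ** 2 + lin_coef * i + const
                      for i in range(linear_steps, steps)]

    schedule = linear_part + quadratic_part + [1.0]
    return [1.0 - x for x in schedule]


print([round(s, 4) for s in linear_quadratic_sigmas(10)])
```

An Euler sampler then takes one step per consecutive pair of sigmas, which is why the steps count on the scheduler matters so much for quality.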

The crappy thing is that HVW uses a slightly different format of the model. Tencent originally released the model as .pt files, which can contain code and aren't considered safe, so the community made safetensors versions. But the safetensors Kijai produced are slightly different from the Comfy-produced ones, and they aren't interchangeable. That means that as custom models come out, they will only support whichever base model they were merged against... so for sanity's sake it's probably best to go native in the near future. :(
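If you want to see for yourself whether two checkpoints share a layout, diffing their tensor names is usually enough. A .safetensors file starts with an 8-byte little-endian header length followed by a JSON header listing every tensor, so the names can be read with the standard library alone and without loading any weights. This is a minimal sketch; the function names are made up for illustration, and the file paths are whatever checkpoints you have locally.

```python
import json
import struct


def safetensors_keys(path):
    """Return the set of tensor names stored in a .safetensors file.

    Reads only the header: an unsigned 64-bit little-endian length N,
    then N bytes of JSON mapping tensor names to dtype/shape/offsets.
    """
    with open(path, "rb") as f:
        (header_len,) = struct.unpack("<Q", f.read(8))
        header = json.loads(f.read(header_len))
    header.pop("__metadata__", None)  # optional free-form metadata, not a tensor
    return set(header)


def compare_layouts(path_a, path_b):
    """Report tensor names that appear in only one of the two checkpoints."""
    a, b = safetensors_keys(path_a), safetensors_keys(path_b)
    return {"only_in_a": sorted(a - b), "only_in_b": sorted(b - a)}
```

If both lists come back empty the names line up (shapes and dtypes could still differ); long lists of near-identical names usually mean the same weights were saved under a different naming scheme.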

Edit: On the plus side, HVW nodes not playing nicely with other types of Comfy nodes will be a thing of the past!

@blyss Sounds good. Do you have a workflow?

@ioritree I haven't made anything powerful yet as I JUST started migrating (I liked my HVW dammit, though native is proving highly legit!). I've just been using the one from https://comfyanonymous.github.io/ComfyUI_examples/hunyuan_video/hunyuan_video_text_to_video.json, and I added the torch.compile node from ComfyUI-Essentials and the HY Feta Enhance node from https://github.com/logtd/ComfyUI-HunyuanLoom, and I changed the saving node from the default WebP one to the VHS VideoCombine node from ComfyUI-VideoHelperSuite, i.e. the one HVW normally uses! That's all! Also, for LoRA, use the loader from https://github.com/facok/ComfyUI-HunyuanVideoMultiLora so you can select your target blocks like with BlockEdit. I also made a PR to it so that it loads Musubi Tuner format LoRA natively without conversion, so hopefully that gets accepted XD
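Selecting target blocks boils down to dropping the LoRA tensors that belong to blocks you don't want patched. The sketch below shows the idea on a plain dict; it is not the HunyuanVideoMultiLora code, and the key patterns it matches ("double_blocks.N." / "single_blocks_N_" style names) are an assumption about how Hunyuan LoRA tensors are usually named. The function name is hypothetical.

```python
import re

# Assumed naming: tensor names contain "double_blocks" or "single_blocks"
# followed by a block index, separated by "." or "_".
BLOCK_RE = re.compile(r"(double_blocks|single_blocks)[._](\d+)[._]")


def filter_lora_blocks(lora, keep_double, keep_single):
    """Keep only LoRA tensors whose block index is in the selected sets.

    Tensors that don't belong to a numbered block (e.g. input projections)
    are kept unchanged, so only the per-block weights are filtered.
    """
    kept = {}
    for name, tensor in lora.items():
        match = BLOCK_RE.search(name)
        if match is None:
            kept[name] = tensor
            continue
        kind, idx = match.group(1), int(match.group(2))
        allowed = keep_double if kind == "double_blocks" else keep_single
        if idx in allowed:
            kept[name] = tensor
    return kept
```

Whether non-block tensors should be kept or dropped is a design choice; keeping them matches the usual "only filter the blocks" behaviour of block-edit style loaders, but you may prefer the opposite.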

@blyss I have set euler and linear_quadratic and did not enable TeaCache, but the resulting video quality is a bit poor. Did I configure it incorrectly?

@ioritree To be honest, I haven't fully figured it out yet. My quality is okay, but I'm having issues where if I use LoRA, it produces a slow-motion effect, kind of like I sometimes had with TeaCache when using HVW. I'm not sure what's up with that. Oh, do make sure to set the steps on the BasicScheduler to 50 or so; the default of 20 is definitely too low unless you're using the FastVideo LoRA. That's all I can really think of, sowwy!

Edit: At least for my issue, the torch.compile node from Essentials breaks HunyuanVideoMultiLora. I'm looking into what can be done about that! Oh my PR got accepted though yay!

Edit2: Need "Patch Model Patcher Order" from KjNodes to fix the LoRA/torch.compile issue but it's only compatible with KJ's torch.compile nodes. I've got a modified one in the works for Hunyuan since KJ hasn't done that yet and I might PR it.

Edit3: Done ( https://github.com/kijai/ComfyUI-KJNodes/pull/186 )

@blyss One more thing is BlockSwap (offloading blocks to RAM), which I personally find very useful.

@ioritree Yeah, I also personally find it /very/ useful. Even with everything else set up perfectly, I can't go as high in resolution in native as I can in HVW :( It's not trivial to implement such a thing in native, though I've been considering how. It would require at least two custom nodes: a settings node to provide the block swap settings (easy), and a custom sampling node, since the blocks must be swapped during sampling and there's no way to tell the normal sampler to do that. I could possibly combine the normal sampling node with the block swap logic from HVW to produce a block-swap-capable, native-compatible sampler node. That's my best thought at least; I may look into it.
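To make the block swap idea concrete: some transformer blocks stay on the GPU for the whole pass, and the rest live in system RAM and are moved in just before they run and moved back out right after. The sketch below simulates this with a plain `device` string instead of real tensors, so it runs anywhere; in PyTorch the moves would be `module.to("cuda")` / `module.to("cpu")`. The class, the function, the `blocks_to_swap` name, and the choice to offload the *last* N blocks are all illustrative assumptions, not HVW's implementation.

```python
from dataclasses import dataclass


@dataclass
class Block:
    """Stand-in for a transformer block; `device` mimics where its weights live."""
    index: int
    device: str = "cpu"

    def forward(self, x):
        assert self.device == "cuda", "block must be on the GPU to run"
        return x + 1  # placeholder for the real computation


def run_with_block_swap(blocks, x, blocks_to_swap):
    """Run blocks in order, keeping the last `blocks_to_swap` offloaded to CPU.

    Returns the output and the peak number of blocks resident on the GPU,
    which is what bounds VRAM use. Lower peak = more transfers per step.
    """
    resident = len(blocks) - blocks_to_swap
    for b in blocks[:resident]:
        b.device = "cuda"  # these stay on the GPU for the whole pass
    peak = 0
    for i, b in enumerate(blocks):
        swapped = i >= resident
        if swapped:
            b.device = "cuda"  # bring the offloaded block in just before use
        peak = max(peak, sum(blk.device == "cuda" for blk in blocks))
        x = b.forward(x)
        if swapped:
            b.device = "cpu"  # and push it back out right after
    return x, peak


out, peak = run_with_block_swap([Block(i) for i in range(10)], 0, blocks_to_swap=4)
print(out, peak)
```

This also shows why it has to live in the sampler: the swaps happen inside every model call, once per sampling step, which a stock sampler node has no hook for.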

I'm still fond of HVW though, and honestly, depending on how things go, I might fork it and extend it myself. I already have, for instance by adding longClip support to the text encoder (TE) node and support for running the TE on CPU, but those are minor. If I make anything significant, I'll either PR it or release a fork if Kijai doesn't want to maintain HVW anymore. My big issue with HVW, though, is just the lack of compatibility with native nodes, which is why I was working to get native support up to snuff.

@blyss I'm just glad someone other than myself realizes how much Kijai's wrappers need to be made native implementations such that they can actually be used with other workflows.

@Triple_Headed_Monkey I agree yeah. It might be possible to create a custom sampler that's like the one from HVW supporting block swap, maybe context windowing, etc, while allowing it to accept in a normal Comfy model object instead of the HVW specific one as that's the big limiter. That would give us the rest of the featureset in native which would be killer because having this kind of split market is not beneficial overall. I've got a brutal headache today but once that abates, I'll probably look into it.

FWIW I think the reason Kijai did it this way is because he ports his wrapper nodes between various models pretty rapidly(Hunyuan, Cog, Mochi, etc) and it allows much quicker expansion and addition of features when you aren't limited by Comfy specific stuff (for instance, like how we have to patch the Comfy patcher to use torch.compile with LoRA in native comfy). I'm grateful for his work regardless because I wouldn't have known how to implement things into native without it, nor would I have been able to run full res Hunyuan at good speed on consumer HW pretty much as soon as it was released! Kijai (and others too!) took Tencent's "45GB" minimum VRAM requirement and said "lol, I think not"

Hello.

I'm very interested in how you merged the LoRAs into the base model. Is there a tutorial somewhere?

Now that the model is supported natively in ComfyUI you can simply merge and save using the standard process for merging Flux and Stable Diffusion models.
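Under the hood, merging a LoRA just bakes its low-rank update into the base weights: for each patched layer, W' = W + strength · (alpha / rank) · (up @ down). The sketch below shows that arithmetic on tiny list-of-lists matrices; it is the standard LoRA formulation, not ComfyUI's code, and the function names are hypothetical. Real merges do the same per layer on tensors.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, lora_down, lora_up, alpha, strength=1.0):
    """Return weight + strength * (alpha / rank) * (lora_up @ lora_down).

    Shapes: weight is out x in, lora_down (A) is rank x in,
    lora_up (B) is out x rank.
    """
    rank = len(lora_down)
    scale = strength * alpha / rank
    delta = matmul(lora_up, lora_down)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(weight, delta)]


W = [[1.0, 0.0], [0.0, 1.0]]
print(merge_lora(W, [[1.0, 2.0]], [[1.0], [0.0]], alpha=1.0, strength=0.5))
```

Merging many LoRAs (like the ~65 here) just adds one delta after another, so in exact arithmetic the order doesn't matter; what matters is each LoRA's strength, since the deltas stack.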

@Triple_Headed_Monkey Thank you

How did you merge it, and what tool did you use, if I may ask?

Now that the model is supported natively in ComfyUI you can simply merge and save using the standard process for merging Flux and Stable Diffusion models.

@Triple_Headed_Monkey Oh, can you share a workflow that has the elements to do that? (I've never merged anything in ComfyUI.)

@NoArtifact Yes, I have added a workflow. If you check the "download training images" file, that is actually the workflow file.

@Triple_Headed_Monkey Thanks a lot.

Excuse me, I understand you've merged a lot of LoRAs into the model, but will the mosaic effect or artifacts always be present in the videos?

Those effects are not actually in the videos; they come from CivitAI itself.

It's caused by using a bad file format for the showcase image upload.

@Kotoshko Hi! This is Olga from the Telegram group (though I got banned, not sure if you know). These artifacts really are only visible after uploading the video to CIVITAI. They aren't there in the actual output, so download the model and try it; the result is excellent.

@Kotoshko The problem is specifically the format of the uploaded file. If you upload in mp4 format there won't be any artifacts; if you upload in webp the artifacts are obvious, but that's a CIVITAI problem, not a model problem. Here is an example video in mp4 format. I generated it at the minimum resolution, but even so the quality is good, much better than the base model: https://civitai.com/images/57077348

So you compressed the videos on purpose?

Or maybe the CRF in your video node is set too high, e.g. higher than 15?

@LatentDream As stated, it's a file format issue when uploading to CIVITAI. it's got nothing to do with compression or any other factors.

Using a different file format with the same settings will yield better results on CIVITAI. However, the workflow metadata is not saved when using other file formats.
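If you export frames and want to build the MP4 yourself for upload, as suggested above, ffmpeg with libx264 is the usual route. CRF is libx264's quality knob (0 to 51 for 8-bit, default 23): lower means higher quality and bigger files. This is a minimal sketch that only builds the command; the frame pattern `frame_%05d.png`, the 24 fps default, and CRF 15 (the threshold mentioned in this thread) are assumptions to adjust to your own export.

```python
import shlex


def mp4_encode_cmd(frame_pattern, out_path, fps=24, crf=15):
    """Build an ffmpeg command that encodes numbered frames to H.264 MP4.

    yuv420p keeps the output playable in browsers; lower crf = higher quality.
    """
    return [
        "ffmpeg", "-y",
        "-framerate", str(fps),
        "-i", frame_pattern,
        "-c:v", "libx264",
        "-crf", str(crf),
        "-pix_fmt", "yuv420p",
        out_path,
    ]


print(shlex.join(mp4_encode_cmd("frame_%05d.png", "out.mp4")))
```

Pass the list to `subprocess.run(cmd, check=True)` to actually encode. As noted above, the resulting MP4 won't carry the ComfyUI workflow metadata.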

First impression: the model has good knowledge of female anatomy, but the output is a bit blurry (maybe too many low-resolution LoRAs were added to the mix).

In your workflow, I see a pronwowmerge clip. Where is that from, and what is it?

I just added that for you :D

For my setup, it works far better than the recent HV release. I'll have to find a way to sharpen the result, but in my case (16GB VRAM) it's a less frustrating, superior model. Thank you!

I searched for "pronwowmerge_clip_I" on the internet but didn't find it. Is it homebrew? Would you mind sharing it? I can sense its purpose :D

@Adaptalab0r Hi. This is a custom CLIP, but you can use any other one, even the basic one for FLUX.

@Adaptalab0r I just uploaded the clip model separately for you!

I'm glad the model works for you nicely though! Enjoy using!

@Triple_Headed_Monkey

Thank you!!! I'm so in love with the model right now. I'll make sure to post a few clips this week or the following one. Thanks again!

@speach1sdef178

The name "pronwowmerge_clip_I" seemed to be optimized for NSFW, but that might as well be my wild fantasy :-)