Serenity: a photorealistic base model

Welcome to my corner!

I'm creating Dreambooths, LyCORIS, and LoRAs. If you want to know how I make them, here is the guide: https://civarchive.com/articles/7/dreambooth-lycoris-lora-guide

I have a Buy Me A Coffee page if you want to support me ( https://www.buymeacoffee.com/malcolmrey ). You can also leave a request there if you want me to do something specific.

HuggingFace model card: https://huggingface.co/malcolmrey/serenity

Here is how I made it:

I used a script from this repository: https://github.com/Faildes/Chattiori-Model-Merger

These were the commands:

python merge.py "WS" "C:/Development/StableDiffusion/stable-diffusion-webui/models/Stable-diffusion" "photon_v1.safetensors" "realisticVisionV30_v30VAE.ckpt" --alpha 0.45 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-model-v0"

python merge.py "WS" "C:/Development/StableDiffusion/stable-diffusion-webui/models/Stable-diffusion" "juggernaut_final.safetensors" "wyvernmix_v9.safetensors" --alpha 0.45 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-model-v1"

python merge.py "WS" "C:/Development/StableDiffusion/stable-diffusion-webui/models/Stable-diffusion" "epicrealism_pureEvolutionV3.safetensors" "cyberrealistic_v31.safetensors" --alpha 0.45 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-model-v2"

python merge.py "WS" "C:/Development/StableDiffusion/stable-diffusion-webui/models/Stable-diffusion" "analogMadness_v50.safetensors" "absolutereality_v10.safetensors" --alpha 0.45 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-model-v3"

python merge.py "WS" "C:/Development/StableDiffusion/stable-diffusion-webui/models/Stable-diffusion" "icbinpICantBelieveIts_final.safetensors" "madvision_v40.safetensors" --alpha 0.45 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-model-v4"

python merge.py "WS" "C:/Development/StableDiffusion/stable-diffusion-webui/models/Stable-diffusion" "perfection_v30Pruned.safetensors" "DriveE/civitai3/huns_v10.safetensors" --alpha 0.45 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-model-v5"

python merge.py "WS" "C:/Development/StableDiffusion/stable-diffusion-webui/models/Stable-diffusion" "DriveE/civitai3/metagodRealRealism_v10.safetensors" "DriveE/civitai3/unrealityV20_v20.safetensors" --alpha 0.45 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-model-v6"

python merge.py "WS" "C:/Development/StableDiffusion/stable-diffusion-webui/models/Stable-diffusion" "DriveE/civitai3/succubusmix_v21.safetensors" "reliberate_v10.safetensors" --alpha 0.45 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-model-v7"

python merge.py "WS" "C:/Development/StableDiffusion/stable-diffusion-webui/models/Stable-diffusion" "DriveE/civitai/pornvision_final.safetensors" "DriveE/civitai/edgeOfRealism_eorV20Fp16BakedVAE.safetensors" --alpha 0.45 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-model-v8"

python merge.py "ST" "D:/Development/StableDiffusion/SDModels/Models/merging" "merged-model-v0.safetensors" "merged-model-v1.safetensors" --model_2 "merged-model-v2.safetensors" --alpha 0.33 --beta 0.33 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-st-model-v0"

python merge.py "ST" "D:/Development/StableDiffusion/SDModels/Models/merging" "merged-model-v3.safetensors" "merged-model-v4.safetensors" --model_2 "merged-model-v5.safetensors" --alpha 0.33 --beta 0.33 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-st-model-v1"

python merge.py "ST" "D:/Development/StableDiffusion/SDModels/Models/merging" "merged-model-v6.safetensors" "merged-model-v7.safetensors" --model_2 "merged-model-v8.safetensors" --alpha 0.33 --beta 0.33 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-st-model-v2"

python merge.py "ST" "D:/Development/StableDiffusion/SDModels/Models/merging" "merged-st-model-v0.safetensors" "merged-st-model-v1.safetensors" --model_2 "merged-st-model-v2.safetensors" --alpha 0.33 --beta 0.33 --save_safetensor --save_half --output "D:/Development/StableDiffusion/SDModels/Models/merging/merged-st-model-final"

As you can see, first I merged 18 models using Weighted Sum, so there were 9 pairs.

Afterwards, I merged the 9 results in triples using Sum Twice, which produced 3 models that were merged again using Sum Twice to get the final product.
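As a rough illustration of the merge tree described above, here is a minimal sketch. It assumes Weighted Sum is the usual linear interpolation `A * (1 - alpha) + B * alpha` and Sum Twice applies that interpolation twice (first A with B, then the result with C); the script's actual implementation may differ. The names `weighted_sum`, `sum_twice`, and `merge_tree` are hypothetical, and plain floats stand in for real weight tensors.

```python
from typing import Dict, List

# Toy stand-in for a checkpoint's state dict: parameter name -> value.
Weights = Dict[str, float]


def weighted_sum(a: Weights, b: Weights, alpha: float) -> Weights:
    """Assumed Weighted Sum: A * (1 - alpha) + B * alpha, key by key."""
    return {k: a[k] * (1 - alpha) + b[k] * alpha for k in a if k in b}


def sum_twice(a: Weights, b: Weights, c: Weights,
              alpha: float, beta: float) -> Weights:
    """Assumed Sum Twice: interpolate A and B, then the result with C."""
    return weighted_sum(weighted_sum(a, b, alpha), c, beta)


def merge_tree(models: List[Weights], alpha_ws: float = 0.45,
               alpha_st: float = 0.33, beta_st: float = 0.33) -> Weights:
    """18 models -> 9 pairs -> 3 triples -> 1 final model."""
    if len(models) != 18:
        raise ValueError("this sketch expects exactly 18 models")
    # Stage 1: nine Weighted Sum merges over consecutive pairs.
    pairs = [weighted_sum(models[i], models[i + 1], alpha_ws)
             for i in range(0, 18, 2)]
    # Stage 2: three Sum Twice merges over consecutive triples.
    triples = [sum_twice(*pairs[i:i + 3], alpha_st, beta_st)
               for i in range(0, 9, 3)]
    # Stage 3: one last Sum Twice over the three intermediate models.
    return sum_twice(*triples, alpha_st, beta_st)
```

Under these assumptions every stage is a convex combination, so each merged weight stays within the range spanned by the corresponding weights of the source models.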

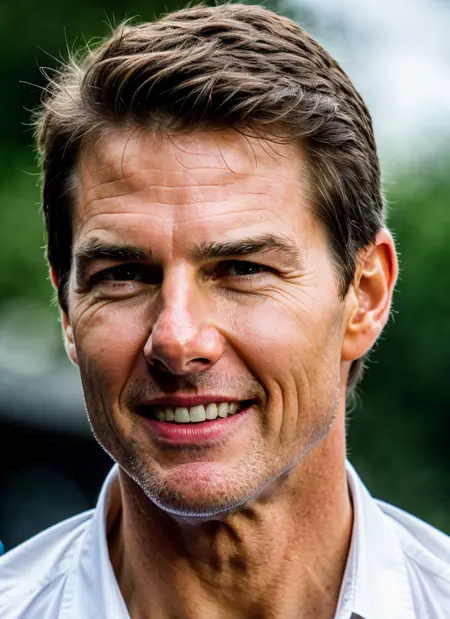

I've selected 18 models that are proven to work well with my LyCORIS and LoRA models. I've applied the same testing that I do to other models and I was happy with the results (not going to rank my model since I'm biased, but I was happy with the good-to-bad ratio).

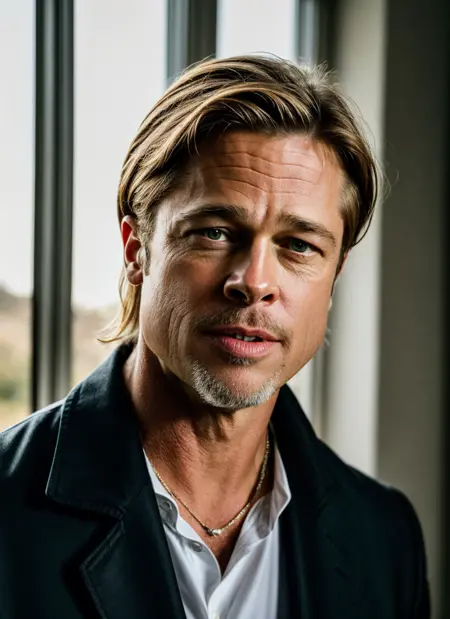

Where this model shines, IMHO, is the eyes and mouth.

My long-term goals were (are?) to make a decent base model and then further fine-tune it on high-quality photographs of people. But with SDXL on the horizon, I'm not sure when/if I will have time to do it (fingers crossed?), so at least I'm doing the first part: the base model as a checkpoint merge.

Description

Detailed info about this version is available in this article: https://civitai.com/articles/3198

Comments (17)

Hey. I'm having trouble getting V2 to work, I just keep getting this error message: "Error: Could not load the stable-diffusion model! Reason: 'Attention' object has no attribute 'to_to_k'" Any idea why? V1 works just fine.

this is weird

first, try downloading it again

second, where do you want to use it?

not sure if your tool supports diffusers format but i want to put it up, i might do it sooner if that may help you or others

@malcolmrey I'm using it with Easy Diffusion, couldn't tell you if it supports diffusers formats or not tbh heh.

@pmo42 Ok, I see that it is something you can install locally. Not sure when I will get time to do that and check it. I see that they have a Discord, do you use it? Would it be possible for you to report there that you are having a problem with this model? :)

@malcolmrey Diffusers model like you did with v1 would be very useful!

@CanYaRelax i will add it on friday/saturday!

I am also having issues when trying to train Dreambooths in Kohya with v2.0. Continuing to use v1.0 for now.

@pmo42 @CanYaRelax @t1mberwolf

well, bad news, good news.

I'm unable to convert it right now to diffusers, I'm getting the following error:

RuntimeError: Error(s) in loading state_dict for AutoencoderKL:

Unexpected key(s) in state_dict: "encoder.mid_block.attentions.0.to_key.bias", "encoder.mid_block.attentions.0.to_key.weight", "encoder.mid_block.attentions.0.to_out.0.bias", "encoder.mid_block.attentions.0.to_out.0.weight", "encoder.mid_block.attentions.0.to_query.bias", "encoder.mid_block.attentions.0.to_query.weight", "encoder.mid_block.attentions.0.to_value.bias", "encoder.mid_block.attentions.0.to_value.weight", "decoder.mid_block.attentions.0.to_key.bias", "decoder.mid_block.attentions.0.to_key.weight", "decoder.mid_block.attentions.0.to_out.0.bias", "decoder.mid_block.attentions.0.to_out.0.weight", "decoder.mid_block.attentions.0.to_query.bias", "decoder.mid_block.attentions.0.to_query.weight", "decoder.mid_block.attentions.0.to_value.bias", "decoder.mid_block.attentions.0.to_value.weight".

This is an error that I have seen in the past. It comes from a difference in architecture between the old and new versions of the diffusers library.

So, this means two things:

1) I may actually consider doing v3 sooner than I originally thought and this time need to safeguard it at every step of the process (I believe I know how this could happen)

2) I found someone discussing this issue here: https://github.com/invoke-ai/InvokeAI/issues/3561 and someone already made a workaround for it here: https://github.com/invoke-ai/InvokeAI/blob/ae17d01e1d2b4f2ef85a5df6c6e4d7ce0f378ca9/invokeai/backend/model_management/convert_ckpt_to_diffusers.py#L737

which means there potentially exists a converter that would handle this issue. I think I will be able to take a look at it next weekend.

There will be an update from me regarding this!
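To make the mismatch easier to inspect, here is a small diagnostic sketch (not the InvokeAI workaround, and not a fix). It takes key names such as those quoted in the error above, tallies the attention parameter names found in the VAE mid block, and flags names that start with a doubled `to_to_` prefix like the `to_to_k` reported earlier in the thread. The function names and the regular expression are hypothetical choices made for this example.

```python
import re
from collections import Counter
from typing import Iterable, List

# Matches e.g. "encoder.mid_block.attentions.0.to_key.bias" -> "to_key"
# and "decoder.mid_block.attentions.0.to_out.0.weight" -> "to_out".
ATTN_PARAM = re.compile(
    r"mid_block\.attentions\.\d+\.([A-Za-z_]+?)(?:\.\d+)?\.(?:weight|bias)$"
)


def attention_param_names(keys: Iterable[str]) -> Counter:
    """Count the attention parameter names that appear in the given keys."""
    names: Counter = Counter()
    for key in keys:
        match = ATTN_PARAM.search(key)
        if match:
            names[match.group(1)] += 1
    return names


def doubled_prefix_names(names: Iterable[str]) -> List[str]:
    """Return names that start with a repeated "to_" prefix."""
    return sorted(n for n in names if n.startswith("to_to_"))


# Sample keys copied from the error messages in this thread.
sample_keys = [
    "encoder.mid_block.attentions.0.to_key.bias",
    "encoder.mid_block.attentions.0.to_out.0.weight",
    "decoder.mid_block.attentions.0.to_to_k.weight",
]
names = attention_param_names(sample_keys)
print(dict(names))
print(doubled_prefix_names(names))
```

Pasting the full key list from an error message into `sample_keys` shows at a glance which naming scheme a given checkpoint ended up with.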

@malcolmrey That is good news. That is the sort of error which Kohya was returning. Thanks for investigating.

I am keen to see v2 in action. Your v1 model is great for faces. This is evident when generating class images and observing the variety of faces. Can't say the same for most models.

@malcolmrey Any idea if a similar workaround exists for A111?

@Stop_Civvy_Time well, in general you should be able to USE the v2 safetensors for generating images - i do it all the time without issues, so if you can't - please let me know which A1111 version you have and what is the error you get (and what do you do - the more details the betters :P) and we will try solving it out :)

@t1mberwolf thanks :-) this was the main goal when making this model (both versions) and i was mainly focused on testing that aspect :-)

from what I can understand if nothing goes horribly wrong - the fix should be doable

if not, i will heavily reconsider finding out at which step this error occurred and i will try to eliminate it in v3 (or probably called 2.5 as for v3 i plan to do way more fine-tuning :P)

Shiny!

I love a fellow Browncoat! :)

Hi, can I use Serenity v2 as a base for LoRa training in Kohya_ss? I'm trying and finding this error:

Can you help me?

loading u-net: <All keys matched successfully>
Traceback (most recent call last):
  File "C:\Users\halco\Desktop\kohya_ss-22.3.0\kohya_ss-22.3.0\train_network.py", line 1012, in <module>
    trainer.train(args)
  File "C:\Users\halco\Desktop\kohya_ss-22.3.0\kohya_ss-22.3.0\train_network.py", line 228, in train
    model_version, text_encoder, vae, unet = self.load_target_model(args, weight_dtype, accelerator)
  File "C:\Users\halco\Desktop\kohya_ss-22.3.0\kohya_ss-22.3.0\train_network.py", line 102, in load_target_model
    text_encoder, vae, unet, _ = train_util.load_target_model(args, weight_dtype, accelerator)
  File "C:\Users\halco\Desktop\kohya_ss-22.3.0\kohya_ss-22.3.0\library\train_util.py", line 3917, in load_target_model
    text_encoder, vae, unet, load_stable_diffusion_format = _load_target_model(
  File "C:\Users\halco\Desktop\kohya_ss-22.3.0\kohya_ss-22.3.0\library\train_util.py", line 3860, in _load_target_model
    text_encoder, vae, unet = model_util.load_models_from_stable_diffusion_checkpoint(
  File "C:\Users\halco\Desktop\kohya_ss-22.3.0\kohya_ss-22.3.0\library\model_util.py", line 1015, in load_models_from_stable_diffusion_checkpoint
    info = vae.load_state_dict(converted_vae_checkpoint)
  File "C:\Users\halco\AppData\Local\Programs\Python\Python310\lib\site-packages\torch\nn\modules\module.py", line 2041, in load_state_dict
    raise RuntimeError('Error(s) in loading state_dict for {}:\n\t{}'.format(
RuntimeError: Error(s) in loading state_dict for AutoencoderKL:
  Unexpected key(s) in state_dict: "encoder.mid_block.attentions.0.to_to_k.bias", "encoder.mid_block.attentions.0.to_to_k.weight", "encoder.mid_block.attentions.0.to_to_q.bias", "encoder.mid_block.attentions.0.to_to_q.weight", "encoder.mid_block.attentions.0.to_to_v.bias", "encoder.mid_block.attentions.0.to_to_v.weight", "decoder.mid_block.attentions.0.to_to_k.bias", "decoder.mid_block.attentions.0.to_to_k.weight", "decoder.mid_block.attentions.0.to_to_q.bias", "decoder.mid_block.attentions.0.to_to_q.weight", "decoder.mid_block.attentions.0.to_to_v.bias", "decoder.mid_block.attentions.0.to_to_v.weight".
Traceback (most recent call last):
  File "C:\Users\halco\AppData\Local\Programs\Python\Python310\lib\runpy.py", line 196, in _run_module_as_main
    return _run_code(code, main_globals, None,
  File "C:\Users\halco\AppData\Local\Programs\Python\Python310\lib\runpy.py", line 86, in _run_code
    exec(code, run_globals)
  File "C:\Users\halco\AppData\Local\Programs\Python\Python310\Scripts\accelerate.exe\__main__.py", line 7, in <module>
  File "C:\Users\halco\AppData\Local\Programs\Python\Python310\lib\site-packages\accelerate\commands\accelerate_cli.py", line 47, in main
    args.func(args)
  File "C:\Users\halco\AppData\Local\Programs\Python\Python310\lib\site-packages\accelerate\commands\launch.py", line 986, in launch_command
    simple_launcher(args)
  File "C:\Users\halco\AppData\Local\Programs\Python\Python310\lib\site-packages\accelerate\commands\launch.py", line 628, in simple_launcher
    raise subprocess.CalledProcessError(returncode=process.returncode, cmd=cmd)
subprocess.CalledProcessError: Command '['C:\\Users\\halco\\AppData\\Local\\Programs\\Python\\Python310\\python.exe', './train_network.py', '--pretrained_model_name_or_path=C:/Users/halco/SD-WEBUI/models/Stable-diffusion/serenity_v20Safetensors.safetensors',

Hey hey! I'm aware of this issue but I haven't solved it yet. I have suspicions on what is causing it and hopefully this weekend I will be able to address it.

took a while but now you can, version 2.1 (diffusers and safetensors) is available and does not have this issue
