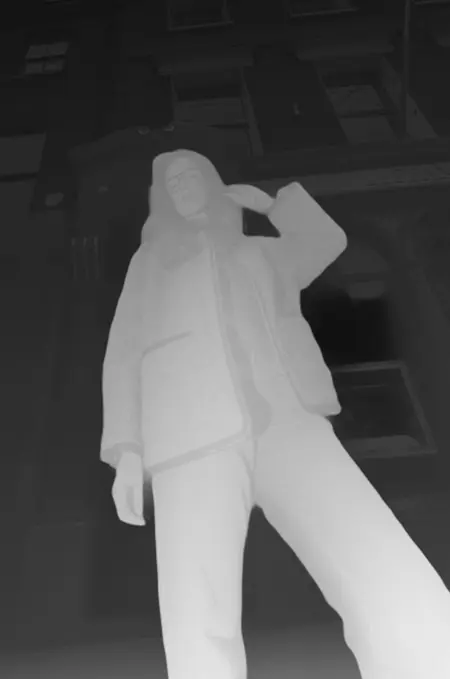

FLUX Depth FP8 (Blockwise)

FP8 Conversion of Flux Dev Depth

Load the cover image to get the workflow; the example depth image does not contain a workflow.

20-40% speed-up over the full model on older cards, or when loading the text encoders (TEs) into the GPU.
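Much of the benefit plausibly comes from weight size. FP8 stores one byte per parameter, while BF16 stores two. The rough sketch below shows that arithmetic under two assumptions. First, the transformer has about 12 billion parameters; this is an assumed figure, not one stated on this page. Second, the few blocks kept at higher precision and the text encoders are ignored.

```python
def weight_size_gb(num_params: float, bytes_per_param: int) -> float:
    """Approximate size of the model weights in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


# Assumption: ~12B parameters in the diffusion transformer (not stated on this page).
PARAMS = 12e9

bf16_gb = weight_size_gb(PARAMS, 2)  # BF16: 2 bytes per weight
fp8_gb = weight_size_gb(PARAMS, 1)   # FP8 E4M3: 1 byte per weight

print(f"BF16: {bf16_gb:.1f} GB, FP8: {fp8_gb:.1f} GB")
```

Halving the weight footprint makes it more likely that the model and the text encoders fit in VRAM together, avoiding offloading. This is one plausible reason for the speed-up, not a measured breakdown.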

Note that this model is in FP8 E4M3. A scaled conversion mixing E5M2 and E4M3 might be beneficial, but based on the information from Black Forest Labs I stuck with the higher-precision format.
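The trade-off between the two FP8 variants follows from their bit layouts:

- E4M3 gives the extra bit to the mantissa, which means finer steps and a smaller range.
- E5M2 gives it to the exponent, which means a wider range and coarser steps.

The sketch below derives the main limits from the bit counts. It assumes the conventions of PyTorch's `float8_e4m3fn`, which has no infinities and uses only the all-ones pattern as NaN. It also assumes the conventions of `float8_e5m2`, which reserves the top exponent for IEEE-style infinities and NaNs. The `FP8Format` class is a hypothetical helper for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FP8Format:
    """Hypothetical description of an 8-bit float layout."""
    name: str
    exp_bits: int
    man_bits: int
    bias: int
    ieee_specials: bool  # True: top exponent code is reserved for inf/NaN

    def max_finite(self) -> float:
        top = (1 << self.exp_bits) - 1
        if self.ieee_specials:
            # Largest normal uses the second-highest exponent and a full mantissa.
            return (2 - 2.0 ** -self.man_bits) * 2.0 ** (top - 1 - self.bias)
        # Top exponent is usable; only the all-ones mantissa there encodes NaN.
        return (2 - 2.0 ** (1 - self.man_bits)) * 2.0 ** (top - self.bias)

    def min_normal(self) -> float:
        # Smallest normal value: exponent code 1, mantissa 0.
        return 2.0 ** (1 - self.bias)

    def step_at_one(self) -> float:
        # Gap between 1.0 and the next representable value.
        return 2.0 ** -self.man_bits


E4M3 = FP8Format("E4M3", exp_bits=4, man_bits=3, bias=7, ieee_specials=False)
E5M2 = FP8Format("E5M2", exp_bits=5, man_bits=2, bias=15, ieee_specials=True)

for fmt in (E4M3, E5M2):
    print(f"{fmt.name}: max={fmt.max_finite()}, "
          f"min_normal={fmt.min_normal()}, step_at_1={fmt.step_at_one()}")
```

E4M3 halves the rounding step near 1.0 (0.125 vs 0.25) at the cost of a much smaller range (448 vs 57344). This is why it is commonly used for weights and activations. E5M2 is more often reserved for values that need the wider range, such as gradients during training.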

BLOCKWISE

The following blocks were not converted:

"time_in.in_layer.bias",

"time_in.in_layer.weight",

"time_in.out_layer.bias",

"time_in.out_layer.weight",

"txt_in.bias",

"txt_in.weight",

"vector_in.in_layer.bias",

"vector_in.in_layer.weight",

"vector_in.out_layer.bias",

"vector_in.out_layer.weight",

"final_layer.adaLN_modulation.1.bias",

"final_layer.adaLN_modulation.1.weight",

"final_layer.linear.bias",

"final_layer.linear.weight",

"guidance_in.in_layer.bias",

"guidance_in.in_layer.weight",

"guidance_in.out_layer.bias",

"guidance_in.out_layer.weight",

"img_in.bias",

"img_in.weight"
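A blockwise conversion like this comes down to a per-key decision over the checkpoint's state dict. The sketch below shows only that selection step, in plain Python. The tensor names come from the list above. The helper `partition_keys` and the sample keys are hypothetical.

In a real conversion, the keys in the convert list would typically be cast with something like PyTorch's `tensor.to(torch.float8_e4m3fn)`, and the rest left untouched. That is an assumption about the workflow, not the author's script.

```python
# Tensors listed on this page as kept out of the FP8 conversion.
KEEP_UNCONVERTED = frozenset({
    "time_in.in_layer.bias", "time_in.in_layer.weight",
    "time_in.out_layer.bias", "time_in.out_layer.weight",
    "txt_in.bias", "txt_in.weight",
    "vector_in.in_layer.bias", "vector_in.in_layer.weight",
    "vector_in.out_layer.bias", "vector_in.out_layer.weight",
    "final_layer.adaLN_modulation.1.bias", "final_layer.adaLN_modulation.1.weight",
    "final_layer.linear.bias", "final_layer.linear.weight",
    "guidance_in.in_layer.bias", "guidance_in.in_layer.weight",
    "guidance_in.out_layer.bias", "guidance_in.out_layer.weight",
    "img_in.bias", "img_in.weight",
})


def partition_keys(keys):
    """Hypothetical helper: split state-dict keys into (convert_to_fp8, keep_as_is)."""
    convert, keep = [], []
    for key in keys:
        (keep if key in KEEP_UNCONVERTED else convert).append(key)
    return convert, keep


# Hypothetical sample keys for illustration.
sample = ["img_in.weight", "double_blocks.0.img_attn.qkv.weight", "final_layer.linear.bias"]
to_fp8, unchanged = partition_keys(sample)
print(to_fp8)     # ['double_blocks.0.img_attn.qkv.weight']
print(unchanged)  # ['img_in.weight', 'final_layer.linear.bias']
```

The excluded tensors are the input embeddings, the timestep, guidance and vector conditioning layers, and the final output layer. They are small, so keeping them out of FP8 costs little memory. Some tools may store keys with an extra prefix; if a checkpoint does, the lookup would need to strip it first.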

Comments (5)

Do you have this as a safetensors file?

Yes, I just converted it and everything looks to be in order (BF16, FP8 E4M3). I will run a test, then upload.

@Felldude Great thanks