Contact me:

QQ group: 571587838

Bilibili: homepage

Civitai: ttplanet

WeChat: tangtuanzhuzhu

This is an experimental project for Stable Diffusion (SD) models. Its goal is to keep the same object or character highly consistent within a single generated image. I had previously tried to tackle this problem with SDXL models.

At that time, I got good results by using a ControlNet model with decaying weights. With reference images, I could generate multiple views of a single character at once while keeping them extremely consistent. Because of a busy schedule, I put further exploration of this work on hold.
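The exact decay schedule from that SDXL experiment isn't given here. As a minimal sketch, assuming ControlNet strength decays linearly or exponentially over the sampling steps, a per-step weight list could be computed like this. The function name, parameters, and decay shapes are hypothetical illustrations, not part of any ComfyUI node or the original workflow:

```python
import math


def decaying_controlnet_weights(steps: int, start: float = 1.0, end: float = 0.0,
                                mode: str = "linear") -> list:
    """Return one ControlNet strength per sampling step, decaying from start to end.

    Hypothetical helper: the decay shape ("linear" or "exponential") is an
    assumption, not the schedule used in the original experiment.
    """
    if steps < 1:
        raise ValueError("steps must be >= 1")
    if steps == 1:
        return [start]
    weights = []
    for i in range(steps):
        t = i / (steps - 1)  # progress through sampling, 0.0 -> 1.0
        if mode == "linear":
            w = start + (end - start) * t
        elif mode == "exponential":
            # Geometric interpolation between the endpoints; both must be positive.
            if start <= 0 or end <= 0:
                raise ValueError("exponential mode needs positive start and end")
            w = start * math.pow(end / start, t)
        else:
            raise ValueError(f"unknown mode: {mode}")
        weights.append(w)
    return weights


print([round(w, 2) for w in decaying_controlnet_weights(5)])  # -> [1.0, 0.75, 0.5, 0.25, 0.0]
```

The idea behind decaying the weight is that strong guidance early in sampling fixes the overall structure, while weaker guidance later leaves the model freedom for detail. How you feed per-step weights into ControlNet depends on the node pack you use.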

Recently, I came across discussions about IC-LoRA (In-Context LoRA) and noticed that it is still a standard LoRA, but one that leverages a DiT (Diffusion Transformer) model to achieve better consistency and excellent control over image layout. This layout control makes consistency achievable in practical applications. Inspired by the latent-guided workflow introduced by community member "lrzjason" (aka xiaozhi), I simplified the entire preprocessing logic and developed a highly effective migration (transfer) method.
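The post doesn't spell out the simplified preprocessing. As a hedged sketch of the general in-context idea, assuming the reference sits on the left of a side-by-side canvas and the model repaints the right side, the canvas and inpainting mask could be built with NumPy. The function name and layout are illustrative assumptions, not the author's actual workflow:

```python
import numpy as np


def build_incontext_canvas(reference: np.ndarray, target: np.ndarray):
    """Place reference (left) and target (right) side by side and mask the right part.

    Assumed layout for illustration: both images are H x W x 3 uint8 arrays with
    the same height. The returned mask is 1.0 where the model should repaint
    (the target part) and 0.0 where the reference must stay untouched.
    """
    if reference.shape[0] != target.shape[0]:
        raise ValueError("reference and target must have the same height")
    canvas = np.concatenate([reference, target], axis=1)  # H x (Wr + Wt) x 3
    mask = np.zeros(canvas.shape[:2], dtype=np.float32)
    mask[:, reference.shape[1]:] = 1.0  # repaint only the target part
    return canvas, mask


ref = np.full((64, 48, 3), 200, dtype=np.uint8)  # stand-in for the clothing reference
tgt = np.zeros((64, 40, 3), dtype=np.uint8)      # stand-in for the person image
canvas, mask = build_incontext_canvas(ref, tgt)
print(canvas.shape, mask.shape)
```

Because the reference and the region to repaint share one image, the DiT attends across both halves, which is where the consistency comes from. In a real clothing-transfer setup you would more likely mask only the garment region on the person rather than the whole right side; the full-side mask is just the simplest case.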

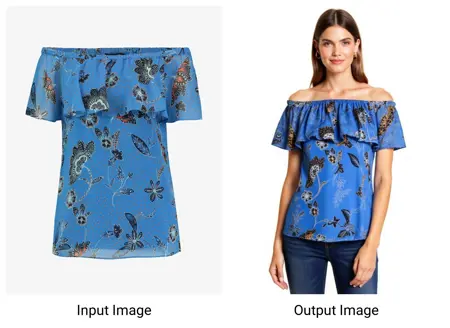

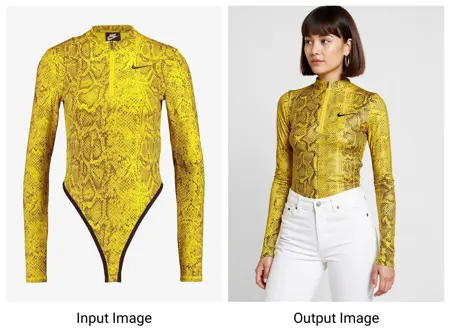

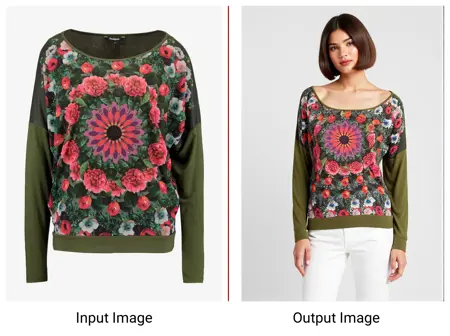

With this process, you can guide the model to focus on the content you need, which gives surprisingly good migration and generalization along with strong consistency. So far, I have developed a matching migration model for clothing, which offers:

- Surprisingly consistent clothing migration from a reference image
- Cartoon clothing to realistic clothing, or real clothing to cartoon
- Control over clothing similarity via weights, to spark design creativity
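Similarity control through weights relies on the usual LoRA strength scale. As a minimal sketch of the standard formulation, a LoRA adds a low-rank update to each targeted layer, W' = W + s * (B @ A), where s is the strength. Here the alpha/rank factor stored in real LoRA files is assumed to be folded into s, and the matrices are random stand-ins:

```python
import numpy as np


def apply_lora(weight: np.ndarray, lora_down: np.ndarray, lora_up: np.ndarray,
               strength: float) -> np.ndarray:
    """Return W + strength * (up @ down), the usual LoRA merge for one linear layer.

    Simplification: the alpha/rank scaling that real LoRA files carry is assumed
    to be already folded into `strength`.
    """
    return weight + strength * (lora_up @ lora_down)


rng = np.random.default_rng(0)
W = rng.standard_normal((8, 8))
down = rng.standard_normal((2, 8))  # rank-2 "A" matrix
up = rng.standard_normal((8, 2))    # rank-2 "B" matrix

for s in (0.0, 0.5, 1.0):
    drift = np.linalg.norm(apply_lora(W, down, up, s) - W)
    print(f"strength={s}: distance from base weights = {drift:.3f}")
```

The distance from the base weights grows linearly with the strength. In ComfyUI this corresponds to the LoRA loader's `strength_model` value: in principle, lower values give looser, more "inspired" clothing and higher values a closer copy of the reference.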

More To Do

- Transfer of items with distinct features
- Transfer of intricate and complex patterns
- Face transfer
- Additional ideas from the community

How to Use

You can download the model from my Hugging Face project in the Migration LoRA folder. Currently, only the Cloth LoRA is available, but I will add more once they are ready.

Requirements

ComfyUI, to run the model with the automated workflow.

Custom nodes:

- TTP Toolset: ComfyUI_TTP_Toolset (please update to the latest version)
- Tagging node: I recommend my version, ComfyUI_JC2, which provides a custom_modification option for extra direction

I also used the Alimama FLUX inpainting model; get it here: Alimama_flux_inpainint

Steps

The workflow is here: workflow

Or download it here: https://civarchive.com/models/950776

1. Install ComfyUI and the required custom nodes listed above.
2. Download the Cloth LoRA from the Migration LoRA folder on Hugging Face.
3. Load the model into ComfyUI.
4. Use the provided workflow example to achieve the desired results.

Note: choose your FLUX version wisely. Because the Alimama inpainting model has to be loaded at the same time, more VRAM is needed. If generation feels slow, try nf8, or use a memory optimization node such as FluxExt-MZ.
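To see why loading a second model alongside FLUX raises VRAM needs, a rough weights-only estimate is parameter count times bytes per parameter. As a back-of-the-envelope sketch: FLUX.1 [dev]'s transformer has about 12 billion parameters, while the ControlNet size below is a placeholder you should replace. Activations, text encoders, and the VAE are ignored, and the nf4 figure ignores quantization overhead:

```python
# Approximate storage cost per parameter; nf4 ignores per-block scale overhead.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "fp16": 2.0, "fp8": 1.0, "nf4": 0.5}


def weight_memory_gib(num_params: float, precision: str) -> float:
    """Weights-only memory estimate in GiB; ignores activations and runtime overhead."""
    return num_params * BYTES_PER_PARAM[precision] / 2**30


flux_params = 12e9       # FLUX.1 [dev] transformer, roughly 12B parameters
controlnet_params = 1e9  # placeholder: substitute the inpainting ControlNet's real size

for precision in ("bf16", "fp8"):
    total = weight_memory_gib(flux_params, precision) + weight_memory_gib(controlnet_params, precision)
    print(f"{precision}: ~{total:.1f} GiB for weights alone")
```

Halving the bytes per parameter roughly halves the weight memory, which is why a lower-precision FLUX build helps when both models must sit in VRAM together.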

Feel free to experiment and modify the workflow according to your needs!
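If you want to batch runs instead of clicking through the UI, ComfyUI also accepts workflows over HTTP. A minimal sketch, assuming the workflow has been exported in ComfyUI's API format (the regular UI workflow JSON is a different structure) and the server runs on the default `127.0.0.1:8188`; the helper names and file name are hypothetical:

```python
import json
import urllib.request
import uuid
from typing import Optional


def build_prompt_payload(workflow_api: dict, client_id: Optional[str] = None) -> bytes:
    """Wrap an API-format workflow in the JSON body that ComfyUI's /prompt endpoint expects."""
    body = {"prompt": workflow_api, "client_id": client_id or str(uuid.uuid4())}
    return json.dumps(body).encode("utf-8")


def queue_workflow(workflow_path: str, server: str = "http://127.0.0.1:8188") -> dict:
    """POST an exported API-format workflow to a running ComfyUI server; return its JSON reply."""
    with open(workflow_path, encoding="utf-8") as f:
        workflow_api = json.load(f)
    request = urllib.request.Request(
        f"{server}/prompt",
        data=build_prompt_payload(workflow_api),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())
```

Usage would be something like `queue_workflow("cloth_migration_api.json")`; the reply should include a `prompt_id` that identifies the queued job. To vary the LoRA strength or reference image per run, edit the corresponding node inputs in the loaded dict before queuing.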

Comments (10)

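Does this LoRA work in a similar way to in-context LoRA?

Yes, that's exactly the idea.

I noticed Xiaozhi's version does local inpainting directly, without the Alimama ControlNet. Is there any difference in results when using the Alimama inpainting?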

Amazing! Thanks for sharing!

This kinda works. Are you able to share your dataset on huggingface?

how to fix OSError: Incorrect path_or_model_id: 'E:\AI\ComfyUI_windows_portable\ComfyUI\models\vitmatte'. Please provide either the path to a local folder or the repo_id of a model on the Hub.

please help load_pretrained_model

raise RuntimeError(f"Error(s) in loading state_dict for {model.__class__.__name__}:\n\t{error_msg}")

RuntimeError: Error(s) in loading state_dict for VitMatteForImageMatting:

size mismatch for backbone.embeddings.projection.weight: copying a param with shape torch.Size([768, 4, 16, 16]) from checkpoint, the shape in current model is torch.Size([768, 3, 16, 16]).

size mismatch for decoder.convstream.convs.0.conv.weight: copying a param with shape torch.Size([48, 4, 3, 3]) from checkpoint, the shape in current model is torch.Size([48, 3, 3, 3]).

size mismatch for decoder.fusion_blocks.0.conv.conv.weight: copying a param with shape torch.Size([256, 960, 3, 3]) from checkpoint, the shape in current model is torch.Size([256, 576, 3, 3]).

size mismatch for decoder.fusion_blocks.3.conv.conv.weight: copying a param with shape torch.Size([32, 68, 3, 3]) from checkpoint, the shape in current model is torch.Size([32, 67, 3, 3]).

You may consider adding ignore_mismatched_sizes=True in the model from_pretrained method.

Awesome, my Brother Zhu!
