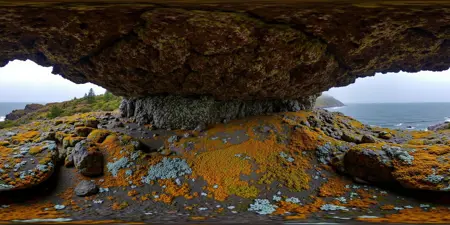

This is a LoRA for FLUX.1 Dev which aims to improve the quality of equirectangular 360 degree panoramas, which can be viewed as immersive environments in VR or used as skyboxes.

Updated example workflow with some more notes here: https://civarchive.com/models/745010?modelVersionId=833115

Information

The trigger phrase is "equirectangular 360 degree panorama". I would avoid saying "spherical projection" since that tends to result in non-equirectangular spherical images.

Image resolution should always be a 2:1 aspect ratio. 1024 x 512 or 1408 x 704 work quite well and were used in the training data. 2048 x 1024 also works.

I suggest using a weight of 0.5 to 1.5. If the image comes out too flat instead of having the necessary spherical distortion, try increasing the weight above 1, though this could negatively impact small details of the image. For Flux guidance, I recommend a value of about 2.5 for realistic scenes.
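As a quick sanity check on the settings above, here is a minimal Python sketch that collects them in one place and rejects sizes that are not 2:1. The function name, the dictionary keys, and the multiple-of-16 check are my own assumptions for illustration; they are not part of any workflow or library API.

```python
TRIGGER = "equirectangular 360 degree panorama"


def panorama_settings(scene, width=1408, height=704, lora_weight=1.0, guidance=2.5):
    """Collect generation settings (hypothetical helper), rejecting non-2:1 sizes."""
    if width != 2 * height:
        raise ValueError(f"{width} x {height} is not a 2:1 aspect ratio")
    # Assumption: Flux sizes are kept to multiples of 16 (true for all sizes listed above).
    if width % 16 or height % 16:
        raise ValueError("width and height should be multiples of 16")
    if not 0.5 <= lora_weight <= 1.5:
        raise ValueError("LoRA weight is outside the suggested 0.5 to 1.5 range")
    return {
        "prompt": f"{TRIGGER}, {scene}",  # trigger phrase first, then the scene
        "width": width,
        "height": height,
        "lora_weight": lora_weight,
        "guidance": guidance,
    }


settings = panorama_settings("a mountain lake at sunrise")
print(settings["prompt"])
```

The values in the returned dictionary would then be copied into whatever front end you use; the defaults simply mirror the recommendations in this section.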

This is a tool which can be used to view spherical images in a browser: https://renderstuff.com/tools/360-panorama-web-viewer/

To make an image open in an interactive viewer like this on websites that support it, you can add the equirectangular projection metadata with exiftool. On Windows, the command looks like this:

path\to\exiftool.exe -XMP:ProjectionType="equirectangular" image.png
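If you want to tag many images, the same command can be run from Python. This is only a sketch: the function names are hypothetical, and it assumes exiftool is installed and reachable under the name passed as `exiftool` (pass the full path to exiftool.exe otherwise).

```python
import subprocess


def projection_command(image, exiftool="exiftool"):
    """Build the argument list for the exiftool call shown above."""
    # No shell quoting is needed because the arguments are passed as a list.
    return [exiftool, "-XMP:ProjectionType=equirectangular", str(image)]


def tag_as_equirectangular(image, exiftool="exiftool"):
    """Write the projection metadata into the image; raises if exiftool fails."""
    subprocess.run(projection_command(image, exiftool), check=True)


print(projection_command("image.png"))
```

Note that by default exiftool keeps a backup of the untouched file next to the edited one (for example image.png_original).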

Civitai VR native support when? :)

If you have a VR system, you can view equirectangular images as immersive environments in many different VR media players like SteamVR Media Player, DeoVR, etc.

You can also use a Stereo Image Node with a depth map to create a stereo panorama image (with a 4:1 aspect ratio), which can be viewed in VR so that objects actually appear at the distance given by the depth map. I've gotten good results using a standard MiDaS depth map and the polylines_sharp setting for the fill technique on the Stereo Image Node. Other depth map methods might provide better results; I'm not sure whether there is a depth model designed for equirectangular panoramas.
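To see where the 4:1 aspect ratio comes from, here is a small Python sketch that joins two 2:1 views side by side. It assumes a side-by-side layout with the left eye first, which may differ from what a given node or player expects, and it does not do the depth-based pixel shifting that actually produces the two views. Images are represented as plain lists of pixel rows to keep the example self-contained.

```python
def side_by_side(left, right):
    """Join two equally sized images (lists of pixel rows) into one left|right frame."""
    if len(left) != len(right):
        raise ValueError("both views need the same height")
    if any(len(a) != len(b) for a, b in zip(left, right)):
        raise ValueError("both views need the same width")
    return [list(a) + list(b) for a, b in zip(left, right)]


# Two tiny 4 x 2 "panoramas" (2:1) standing in for the left and right eye views.
left_view = [["L"] * 4 for _ in range(2)]
right_view = [["R"] * 4 for _ in range(2)]

stereo = side_by_side(left_view, right_view)
print(len(stereo[0]), "x", len(stereo))  # width x height of the stereo frame
```

At real sizes, two 2048 x 1024 views become one 4096 x 1024 frame.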

Compatibility with other LoRAs seems pretty good, though I haven't tested very many. It works quite well with the dev-to-schnell LoRA if you want faster generation times (about 8-10 steps instead of 20-30), at the cost of making the textures a bit less realistic and a bit more cartoony.

This model has rank 32 and was trained for 24 epochs on 128 training images (3072 steps). This was the point at which it seemed to converge on understanding the equirectangular format, and further training did not seem to help. For captions, I used detailed captions generated by JoyCaption with a bit of manual editing along with some basic information about the structure of the equirectangular projection. Trained using AI Toolkit.
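The step count follows from the epoch and image counts. The short Python sketch below reproduces it; the batch size of 1 is my assumption (it is the value that makes the numbers match), not something stated in the training config.

```python
import math


def total_steps(epochs, num_images, batch_size=1):
    """Optimizer steps for a run, assuming one step per batch."""
    steps_per_epoch = math.ceil(num_images / batch_size)
    return epochs * steps_per_epoch


# 24 epochs over 128 images; batch_size=1 is an assumption.
print(total_steps(epochs=24, num_images=128))
```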

Limitations

This model doesn't fully fix the seam issue. Since Flux is a transformer model, we can't just use asymmetric tiled sampling with clever padding tricks like we could with convolutional models. On the other hand, since Flux is a transformer model, it's able to naturally have long-range attention that causes it to correlate the opposite sides of the image, and this attention can be trained.

However, this model does greatly improve the seams in most cases, enough that inpainting the seam after applying a circular shift to the image is usually enough to fix it, in the cases where the objects on either side of the seam aren't completely incompatible. I have provided an example workflow for fixing this seam issue.
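The circular shift itself is simple: every row is rotated horizontally by half the image width, so the left and right edges meet in the middle, where a normal inpainting mask can cover them. The Python sketch below shows the idea on a tiny list-of-rows image; it is an illustration only, not the example workflow, and the function name is hypothetical. On a NumPy array the same shift can be done with `numpy.roll(image, width // 2, axis=1)`.

```python
def circular_shift(rows, shift):
    """Rotate every pixel row to the right by `shift` columns, wrapping around."""
    width = len(rows[0])
    shift %= width
    return [list(row[width - shift:]) + list(row[:width - shift]) for row in rows]


# One row of 8 "pixels"; the seam sits between the last column (7) and the first (0).
image = [[0, 1, 2, 3, 4, 5, 6, 7]]

shifted = circular_shift(image, len(image[0]) // 2)
print(shifted)  # columns 7 and 0 are now neighbours in the middle of the row

# After inpainting a vertical strip around the centre, the same half-width
# shift moves the seam back to the image edges.
restored = circular_shift(shifted, len(image[0]) // 2)
```

Shifting back is optional, since any horizontal rotation of an equirectangular image is still a valid panorama facing a different direction.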

Since the majority of the training data consisted of panoramas of landscapes, the model is very good at these (and base Flux isn't as bad at them either). However, indoor scenes had very little representation in the training data. As a result, indoor scenes often have problems: proportions can be far off, making objects appear much larger than they should; rooms might not have the correct number of walls; faces may be distorted; and so on. I'd like to retrain with a better balance of scenes at some point.

Attribution

This LoRA was trained on equirectangular images freely available online, primarily from the Flickr group for equirectangular panoramas, since it has high quality photos without watermarks. I used the following users' images: j.nagel, Kevin Jennings, Uwe Dörnbrack, Cristian Marchi, Patricia Müller, Tiger Lin Panowork, and Faillace. I'd like to thank them for their excellent photography that makes this work possible. It's highly unlikely that any images substantially similar to the ones used in the training set will show up in outputs of this model, though, and really they were just examples demonstrating how the spherical lens distortion affects images.

Since this model was built on FLUX.1 Dev, images generated with this model still must follow the FLUX.1 Dev Non-Commercial License.

Description

Rank 32, should preserve fine details better

Comments (12)

Amazing work! Thank you for the detailed description and link to a viewer. Was really interesting to check out the showcase images that way

"Wow, it is truly amazing to see so much great output!"

DALLE 3 supports Equirectilinear images, but if you ask it to do 3D, it will produce 2.5D images. I'd assume this LoRA can't be used to produce 3D VR180 style images. FYI, the purpose of EquiRectiLinear images is to make projection into a VR headset easy with little or no distortion. I have a Quest 3, and anything lower than 4K is quite useless; it's preferable to have stereo VR180 at above 5K, 8K preferably. 3K is laughable.

This can be used to make 3D 360 images, and I've been viewing them in VR for immersive scenes which usually don't have much distortion, though it does depend on the subject because of biases from so much base model training on images without the projection. I haven't tried making 180 images with this, but I'm guessing it wouldn't work, since there weren't any training images for them. I'm sure another LoRA/version could be trained for that, though. I can see the benefit of only generating 180 degrees.

For the resolution, you can always generate at a higher resolution or use more upscaling steps. For me it's mostly an issue of how long you want to wait to make one image (and also eventually how much storage space it takes up, the 3D 360 PNG images at a resolution of 8192 x 2048 are already like 30 MB each). I have an Index, so I've been pretty happy with this resolution, but I'm sure you could upscale it more if you want. Maybe use a smaller model for the upscale denoise step to avoid it taking forever.

@SeanScripts well my issue is are they stereoscopic or are they monoscopic..

Why do the images I generate have seams?

Usually creates banana-rooms with only two walls though 😕

So strange... I can run it with GGUF checkpoints, but I can't run it with, for example, RealFlux. Then there is no panorama effect at all.

Amazing work! Is it any way to get 32 bit from it?

When I open the downloaded workflow in ComfyUI, there are 3 LoRAs that are bypassed. I was able to find flux_realism_lora.safetensors, so I used that one. But the only thing generated is 4 images containing noise.

What am I doing wrong here?

The workflow was easy to setup. This will make cube maps easier for game engines. Good Job!

Please. Could you share the link to download the lora flux sch singleblocks f32.sft?
