Because this website heavily downscales the previews from 8k i have cropped a few at the full resolution. The images are huge.

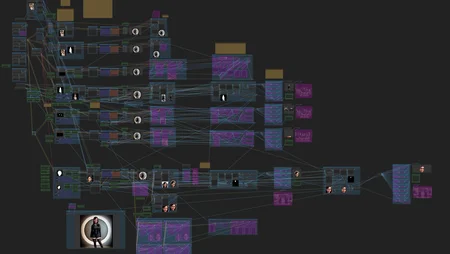

This workflow is capable of creating HD quality 8k images, 44mb+ i don't know how much more it can do, my 12gb card is limited there.

You are not limtied to 8k, anything lower works just as well.

Features

- XL or 1.5 base generation - LCM used can be removed.

- 2 base models - 2 extras for use with enhancers

- Full upscale with 1.5

- Full subject cutout and enhance

- Decent hand fixing with meshgraphormer

- Full face with hair cutout enhance

- inner face enhance

- face features enhance (eyes, nose, mouth)

- Aux detector to enhance something else (breasts, clothing, etc)

- All enhancement steps use cutouts to avoid full scale renders

- Any relevant areas have optional inpainting

- Any relevant areas have optional IP adaptor Refs

- All areas have separate prompts/Lora

Updates

V1.0

- fixed faces not working for multiple people

- fixed padding issues that came up rarely but caused improper blending

- fixed face detection drop size being too large, made drop size a main option due to its usefulness

- face parts enhance each option on its own now. Can do just 1 eye, or just mouth.

- added custom subject paste back to avoid bad paste orders on some photos.

- added 1 extra aux detailer because we all know why you need 2 :P

- added reactor face swap, it's not great on 8k but decent on others

- added model upscale option

- added Downscale for all previews, so my browser would stop freaking out. Turns out having 10 8k previews makes it freak out.

Beta 1 - release

Default detectors needed

Full head

https://civarchive.com/models/326568/anzhcs-head-segmentation-prototype-or-yolov8-or-adetailer-model

hand detector

https://civarchive.com/models/329458/hand-detailersegmentation-adetailer

Control nets used

hand controlnet - makes bare hands

https://civarchive.com/models/262788/controlnet-handrefiner

This can be used to give gloves.

https://civarchive.com/models/9868/controlnet-pre-trained-difference-models

Description

V1.0

- fixed faces not working for multiple people

- fixed padding issues that came up rarely but caused improper blending

- fixed face detection drop size being too large, made drop size a main option due to its usefulness

- face parts enhance each option on its own now. Can do just 1 eye, or just mouth.

- added custom subject paste back to avoid bad paste orders on some photos.

- added 1 extra aux detailer because we all know why you need 2 :P

- added reactor face swap, it's not great on 8k but decent on others

- added model upscale option

- added Downscale for all previews, so my browser would stop freaking out. Turns out having 10 8k previews makes it freak out.

ton of other changes. i think its working really well.

FAQ

Comments (1)

is there any solution for this error?

Error occurred when executing KSamplerAdvanced: index 0 is out of bounds for dimension 0 with size 0 File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\execution.py", line 152, in recursive_execute output_data, output_ui = get_output_data(obj, input_data_all) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\execution.py", line 82, in get_output_data return_values = map_node_over_list(obj, input_data_all, obj.FUNCTION, allow_interrupt=True) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\execution.py", line 75, in map_node_over_list results.append(getattr(obj, func)(**slice_dict(input_data_all, i))) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\nodes.py", line 1416, in sample return common_ksampler(model, noise_seed, steps, cfg, sampler_name, scheduler, positive, negative, latent_image, denoise=denoise, disable_noise=disable_noise, start_step=start_at_step, last_step=end_at_step, force_full_denoise=force_full_denoise) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\nodes.py", line 1352, in common_ksampler samples = comfy.sample.sample(model, noise, steps, cfg, sampler_name, scheduler, positive, negative, latent_image, ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\custom_nodes\ComfyUI-Impact-Pack\modules\impact\sample_error_enhancer.py", line 9, in informative_sample return original_sample(*args, **kwargs) # This code helps interpret error messages that occur within exceptions but does not have any impact on other operations. ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\custom_nodes\ComfyUI-AnimateDiff-Evolved\animatediff\sampling.py", line 434, in motion_sample return orig_comfy_sample(model, noise, args, *kwargs) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\custom_nodes\ComfyUI-Advanced-ControlNet\adv_control\sampling.py", line 116, in acn_sample return orig_comfy_sample(model, args, *kwargs) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\custom_nodes\ComfyUI-Advanced-ControlNet\adv_control\utils.py", line 116, in uncond_multiplier_check_cn_sample return orig_comfy_sample(model, args, *kwargs) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\comfy\sample.py", line 43, in sample samples = sampler.sample(noise, positive, negative, cfg=cfg, latent_image=latent_image, start_step=start_step, last_step=last_step, force_full_denoise=force_full_denoise, denoise_mask=noise_mask, sigmas=sigmas, callback=callback, disable_pbar=disable_pbar, seed=seed) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\custom_nodes\ComfyUI_smZNodes\smZNodes.py", line 1447, in KSampler_sample return KSamplersample(*args, **kwargs) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 829, in sample return sample(self.model, noise, positive, negative, cfg, self.device, sampler, sigmas, self.model_options, latent_image=latent_image, denoise_mask=denoise_mask, callback=callback, disable_pbar=disable_pbar, seed=seed) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\custom_nodes\ComfyUI_smZNodes\smZNodes.py", line 1470, in sample return sample(*args, kwargs) ^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUIwindows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 729, in sample return cfg_guider.sample(noise, latent_image, sampler, sigmas, denoise_mask, callback, disable_pbar, seed) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 716, in sample output = self.inner_sample(noise, latent_image, device, sampler, sigmas, denoise_mask, callback, disable_pbar, seed) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 695, in inner_sample samples = sampler.sample(self, sigmas, extra_args, callback, noise, latent_image, denoise_mask, disable_pbar) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 600, in sample samples = self.sampler_function(model_k, noise, sigmas, extra_args=extra_args, callback=k_callback, disable=disable_pbar, self.extra_options) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\python_embeded\Lib\site-packages\torch\utils\_contextlib.py", line 115, in decorate_context return func(*args, **kwargs) ^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\comfy\k_diffusion\sampling.py", line 779, in sample_lcm denoised = model(x, sigmas[i] s_in, *extra_args) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 296, in call denoise_mask = model_options["denoise_mask_function"](sigma, denoise_mask, extra_options={"model": self.inner_model, "sigmas": self.sigmas}) ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ File "C:\AI\ComfyUI_windows_portable_nvidia_cu121_or_cpu\ComfyUI_windows_portable\ComfyUI\comfy_extras\nodes_differential_diffusion.py", line 30, in forward current_ts = model.inner_model.model_sampling.timestep(sigma[0]) ~~~~~^^^

Looks like we don't have an active mirror for this file right now.

CivArchive is a community-maintained index — we catalog mirrors that volunteers upload to HuggingFace, torrents, and other public hosts. Looks like no one has uploaded a copy of this file yet.

Some files do get recovered over time through contributions. If you're looking for this one, feel free to ask in Discord, or help preserve it if you have a copy.