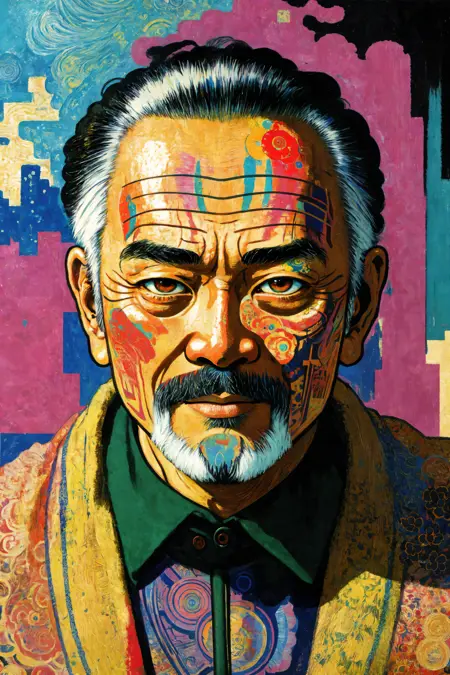

This model is the result of many merges aimed at producing extremely detailed images. It can do realistic content, but I prefer to make stylized stuff, so that aspect has not been extensively tested.

All example images were generated without any hypernetworks, LoRAs, or inpainting. Everything was genned without high-res fix, then upscaled using SD upscale in img2img.

The examples use the SD 1.5 VAE.

Unpruned float32 version of AyoniMix V2 on Hugging Face:

https://huggingface.co/Ayoni/AyoniMix_V2/blob/main/AyoniMix%20V2%20Float32.safetensors

Description

Slightly changed one of the merges and clipfixed the final result. Thanks to the clipfix, the outputs should follow the prompt more accurately.

Comments (3)

Nice update! What is clipfix? What does it do, and where can I find more information?

When models are merged, the CLIP values are converted to floating point so that they can be added together according to the merge percentage. Because floating-point arithmetic is approximate rather than exact, some tensor values end up slightly off and get truncated, which can cause broken CLIP positions. The text in a prompt is tokenized and interpreted by the model. With broken CLIP values, the tokens are not interpreted properly, which can cause parts of the prompt to be ignored or changed in strange ways.
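As a rough illustration of the explanation above, here is a minimal Python sketch of how a drifted position value breaks under truncation and how a fix can reset it. The function names and the 77-position length are assumptions made for this example (77 is the context length of the SD 1.x CLIP text encoder); this is not the tool the author used.

```python
# Illustrative sketch only: the names and constants below are assumptions,
# not taken from the model author's tooling.

CONTEXT_LENGTH = 77  # assumed: token positions in the SD 1.x CLIP text encoder


def find_broken_positions(position_ids):
    """Return the indices whose value no longer truncates to the index itself."""
    return [i for i, value in enumerate(position_ids) if int(value) != i]


def repair_position_ids(position_ids):
    """Replace the drifted values with the exact integers 0..N-1."""
    return list(range(len(position_ids)))


# Simulate a merged tensor in which one position drifted just below its integer.
merged_ids = [float(i) for i in range(CONTEXT_LENGTH)]
merged_ids[41] = 40.99999  # truncates to 40, so position 41 is lost

broken = find_broken_positions(merged_ids)
fixed = repair_position_ids(merged_ids)
print("broken positions:", broken)
print("repaired:", find_broken_positions(fixed) == [])
```

The repair simply rewrites the positions as exact integers, because the correct values are known in advance and do not depend on either parent model.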

You can check the link below for a much more in-depth explanation:

Thanks a lot for the clarification.
