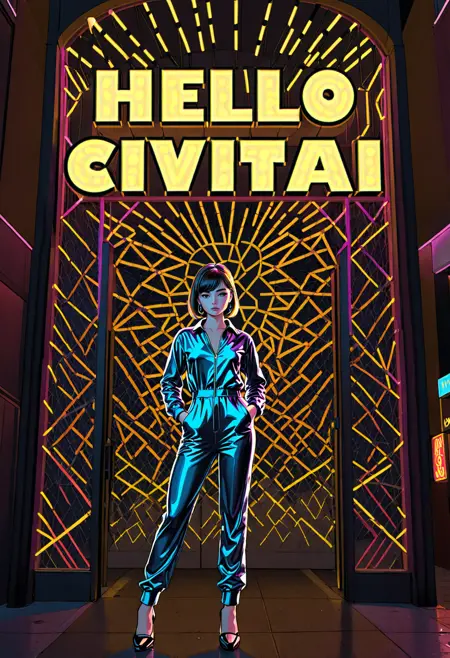

This workflow allows you to generate custom text as part of an image. You can vary text size, color, angle and font.

SDXL only. It also doesn't work well with PonyDiffusion, as the last image of v1.0 shows.

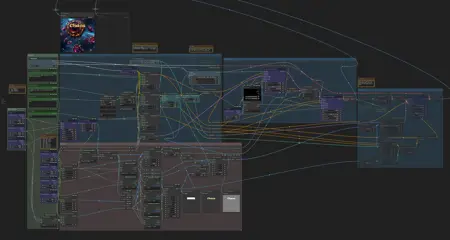

Installation and dependencies

Install WAS Node Suite custom nodes;

Install Custom Scripts custom nodes;

Install Allor custom nodes;

Install ControlNet-LLLite custom nodes;

Download the SDXL OpticalPattern ControlNet model (both the .safetensors and .yaml files) and put it into the "\comfy\ComfyUI\models\controlnet" folder;

Download the QRPattern ControlNet LLLite model and put it into the "\comfy\ComfyUI\custom_nodes\ControlNet-LLLite-ComfyUI\models" folder;

Download and open the workflow.
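Before opening the workflow, it can help to confirm that the model files landed in the right folders. The following is a minimal sketch that assumes you pass in your ComfyUI root folder. The folder names come from the steps above. The helper names (`check_install`, `missing_yaml_companions`) are hypothetical and are not part of ComfyUI or any of the node packs.

```python
from __future__ import annotations

from pathlib import Path


def _safetensors_names(folder: Path) -> list[str]:
    """Sorted .safetensors file names in `folder`; empty if the folder does not exist."""
    if not folder.is_dir():
        return []
    return sorted(p.name for p in folder.glob("*.safetensors"))


def missing_yaml_companions(folder: Path) -> list[str]:
    """.safetensors files in `folder` with no .yaml file of the same stem next to them."""
    return [name for name in _safetensors_names(folder)
            if not (folder / name).with_suffix(".yaml").exists()]


def check_install(comfy_root: Path) -> dict[str, list[str]]:
    """Summarize the two model folders this workflow uses, relative to the ComfyUI root."""
    controlnet_dir = comfy_root / "models" / "controlnet"
    lllite_dir = comfy_root / "custom_nodes" / "ControlNet-LLLite-ComfyUI" / "models"
    return {
        "controlnet_models": _safetensors_names(controlnet_dir),
        "controlnet_missing_yaml": missing_yaml_companions(controlnet_dir),
        "lllite_models": _safetensors_names(lllite_dir),
    }
```

For example, call `check_install(Path(r"\comfy\ComfyUI"))` and adjust the path to your install. Other ControlNet models in that folder may legitimately have no .yaml file, so only the OpticalPattern entry matters in `controlnet_missing_yaml`.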

How to use

Write a prompt for the whole picture;

Write a prompt for text;

Write actual text;

(optional) Set text color;

(optional) Set text font, size and angle;

(optional) Set text offset (0.5/0.5 is center).

Run it.
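The offset step can be read as placing the text by fractions of the canvas. The sketch below assumes that an offset of 0.5/0.5 puts the center of the text box at the center of the image; the workflow does not confirm this interpretation. `text_top_left` is a hypothetical helper, not a node from any of the packs listed above.

```python
from __future__ import annotations


def text_top_left(canvas_w: int, canvas_h: int, text_w: int, text_h: int,
                  offset_x: float = 0.5, offset_y: float = 0.5) -> tuple[int, int]:
    """Top-left pixel of a text box whose center sits at a fractional offset of the canvas.

    Assumption: offsets are fractions of canvas width/height, so (0.5, 0.5) centers the box.
    """
    center_x = offset_x * canvas_w
    center_y = offset_y * canvas_h
    # Shift from the desired center back to the box's top-left corner.
    return round(center_x - text_w / 2), round(center_y - text_h / 2)
```

Under this assumption, a 400x100 text box on a 1024x1024 canvas at 0.5/0.5 starts at pixel (312, 462).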

Notes

Big and bold letters are easier to generate.

Upscaling also helps.

According to a user comment, ControlNet LLLite may cause errors. If it does, just bypass it (Ctrl + B).
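If you would rather bypass the node in a saved workflow file than in the UI, a sketch like the one below can do it. It makes three assumptions:

- the UI-format workflow JSON that ComfyUI saves has a `nodes` list whose entries carry `type` and `mode`;
- mode 4 is what the UI writes for a bypassed node, and 0 is normal;
- the LLLite loader's `type` string is `LLLiteLoader`.

Check these values against your own saved file before relying on them.

```python
import json
from pathlib import Path

NODE_MODE_ALWAYS = 0  # normal execution
NODE_MODE_BYPASS = 4  # assumption: value the ComfyUI UI writes for Ctrl + B


def bypass_nodes(workflow: dict, node_type: str) -> int:
    """Set every node of `node_type` to bypass mode; return how many nodes were changed."""
    changed = 0
    for node in workflow.get("nodes", []):
        if node.get("type") == node_type and node.get("mode", NODE_MODE_ALWAYS) != NODE_MODE_BYPASS:
            node["mode"] = NODE_MODE_BYPASS
            changed += 1
    return changed


def bypass_in_file(src: Path, dst: Path, node_type: str = "LLLiteLoader") -> int:
    """Load a saved workflow, bypass matching nodes, and write the result to `dst`.

    The default `node_type` is an assumption; copy the exact string from your workflow JSON.
    """
    workflow = json.loads(src.read_text(encoding="utf-8"))
    changed = bypass_nodes(workflow, node_type)
    dst.write_text(json.dumps(workflow, indent=2), encoding="utf-8")
    return changed
```

The script writes to a separate output file so the original workflow stays untouched. The return value tells you whether any node matched.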

Description

Initial version, 27.04.2024.

FAQ

Comments (21)

Hi colleague, I am getting errors when running your workflow. I think I have followed all the steps correctly, but when I try to generate, the first KSampler throws an error.

Can you share an error text?

got prompt

model_type EPS

Using pytorch attention in VAE

Using pytorch attention in VAE

clip missing: ['clip_l.logit_scale', 'clip_l.transformer.text_projection.weight']

loaded C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\custom_nodes\ControlNet-LLLite-ComfyUI\models\ioclab_thresholdXL_anime_256.safetensors successfully, 136 modules

Requested to load SDXLClipModel

Loading 1 new model

Requested to load SDXL

Requested to load ControlNet

Loading 2 new models

loading in lowvram mode 3168.4615383148193

loading in lowvram mode 550.178973197937

0%| | 0/10 [00:00<?, ?it/s]

!!! Exception during processing !!!

Traceback (most recent call last):

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\execution.py", line 151, in recursive_execute

output_data, output_ui = get_output_data(obj, input_data_all)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\execution.py", line 81, in get_output_data

return_values = map_node_over_list(obj, input_data_all, obj.FUNCTION, allow_interrupt=True)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\execution.py", line 74, in map_node_over_list

results.append(getattr(obj, func)(**slice_dict(input_data_all, i)))

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\nodes.py", line 1403, in sample

return common_ksampler(model, noise_seed, steps, cfg, sampler_name, scheduler, positive, negative, latent_image, denoise=denoise, disable_noise=disable_noise, start_step=start_at_step, last_step=end_at_step, force_full_denoise=force_full_denoise)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\nodes.py", line 1339, in common_ksampler

samples = comfy.sample.sample(model, noise, steps, cfg, sampler_name, scheduler, positive, negative, latent_image,

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\sample.py", line 100, in sample

samples = sampler.sample(noise, positive_copy, negative_copy, cfg=cfg, latent_image=latent_image, start_step=start_step, last_step=last_step, force_full_denoise=force_full_denoise, denoise_mask=noise_mask, sigmas=sigmas, callback=callback, disable_pbar=disable_pbar, seed=seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\samplers.py", line 702, in sample

return sample(self.model, noise, positive, negative, cfg, self.device, sampler, sigmas, self.model_options, latent_image=latent_image, denoise_mask=denoise_mask, callback=callback, disable_pbar=disable_pbar, seed=seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\samplers.py", line 607, in sample

samples = sampler.sample(model_wrap, sigmas, extra_args, callback, noise, latent_image, denoise_mask, disable_pbar)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\samplers.py", line 545, in sample

samples = self.sampler_function(model_k, noise, sigmas, extra_args=extra_args, callback=k_callback, disable=disable_pbar, **self.extra_options)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\utils\_contextlib.py", line 115, in decorate_context

return func(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\k_diffusion\sampling.py", line 695, in sample_dpmpp_3m_sde_gpu

return sample_dpmpp_3m_sde(model, x, sigmas, extra_args=extra_args, callback=callback, disable=disable, eta=eta, s_noise=s_noise, noise_sampler=noise_sampler)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\utils\_contextlib.py", line 115, in decorate_context

return func(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\k_diffusion\sampling.py", line 655, in sample_dpmpp_3m_sde

denoised = model(x, sigmas[i] * s_in, **extra_args)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1511, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1520, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\samplers.py", line 283, in forward

out = self.inner_model(x, sigma, cond=cond, uncond=uncond, cond_scale=cond_scale, model_options=model_options, seed=seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1511, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1520, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\samplers.py", line 270, in forward

return self.apply_model(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\samplers.py", line 267, in apply_model

out = sampling_function(self.inner_model, x, timestep, uncond, cond, cond_scale, model_options=model_options, seed=seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\samplers.py", line 244, in sampling_function

out = calc_cond_batch(model, conds, x, timestep, model_options)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\samplers.py", line 217, in calc_cond_batch

output = model.apply_model(input_x, timestep_, **c).chunk(batch_chunks)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\model_base.py", line 97, in apply_model

model_output = self.diffusion_model(xc, t, context=context, control=control, transformer_options=transformer_options, **extra_conds).float()

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1511, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1520, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\custom_nodes\SeargeSDXL\modules\custom_sdxl_ksampler.py", line 70, in new_unet_forward

x0 = old_unet_forward(self, x, timesteps, context, y, control, transformer_options, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\ldm\modules\diffusionmodules\openaimodel.py", line 850, in forward

h = forward_timestep_embed(module, h, emb, context, transformer_options, time_context=time_context, num_video_frames=num_video_frames, image_only_indicator=image_only_indicator)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\ldm\modules\diffusionmodules\openaimodel.py", line 44, in forward_timestep_embed

x = layer(x, context, transformer_options)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1511, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1520, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\ldm\modules\attention.py", line 633, in forward

x = block(x, context=context[i], transformer_options=transformer_options)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1511, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1520, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\ldm\modules\attention.py", line 460, in forward

return checkpoint(self._forward, (x, context, transformer_options), self.parameters(), self.checkpoint)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\ldm\modules\diffusionmodules\util.py", line 191, in checkpoint

return func(*inputs)

^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\comfy\ldm\modules\attention.py", line 499, in _forward

n, context_attn1, value_attn1 = p(n, context_attn1, value_attn1, extra_options)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\custom_nodes\ControlNet-LLLite-ComfyUI\node_control_net_lllite.py", line 111, in __call__

q = q + self.modules[module_pfx_to_q](q)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1511, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1520, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\ComfyUI\custom_nodes\ControlNet-LLLite-ComfyUI\node_control_net_lllite.py", line 225, in forward

cx = self.conditioning1(self.cond_image.to(x.device, dtype=x.dtype))

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1511, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1520, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\container.py", line 217, in forward

input = module(input)

^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1511, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1520, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\conv.py", line 460, in forward

return self._conv_forward(input, self.weight, self.bias)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Users\******\*****\ComfyUI\ComfyUI_portable\python_embeded\Lib\site-packages\torch\nn\modules\conv.py", line 456, in _conv_forward

return F.conv2d(input, weight, bias, self.stride,

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

RuntimeError: Input type (float) and bias type (struct c10::Half) should be the same

Prompt executed in 12.28 seconds

Also, the first KSampler node is shown in purple.

@Iridium_storm Wow, that's a long message! But I noticed a line that says:

clip missing:

Maybe you accidentally disconnected a yellow noodle? Or maybe something is wrong with the model?

@Postpos I did not disconnect anything, and the model was correct.

However, a moment ago I bypassed the ControlNet-LLLite node, and then the KSampler ran. It generated an image; the quality is not very good, but the text is legible.

I would like to know how to solve the problem so that I can post a good result in your gallery.

@Iridium_storm I posted a new version a minute ago, with a better ControlNet LLLite model (https://civitai.com/models/178422?modelVersionId=200289).

By the way, I've found that these LLLite models tend to break with large images. Try setting the size to 1024x1024.
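If your canvas is larger than that, one way to pick a smaller size is to scale it down to roughly the 1024x1024 pixel budget while keeping the aspect ratio. The sketch below is an illustration only. Rounding each side down to a multiple of 64 is a common SDXL convention, not something this workflow requires. `fit_to_budget` is a hypothetical helper.

```python
from __future__ import annotations

import math


def fit_to_budget(width: int, height: int, budget: int = 1024 * 1024,
                  multiple: int = 64) -> tuple[int, int]:
    """Scale (width, height) down so width * height is at most about `budget`, keeping aspect ratio.

    Images already within the budget are not enlarged. Each side is then rounded down
    to a multiple of `multiple` (never below `multiple` itself).
    """
    scale = min(1.0, math.sqrt(budget / (width * height)))
    new_w = max(multiple, int(width * scale) // multiple * multiple)
    new_h = max(multiple, int(height * scale) // multiple * multiple)
    return new_w, new_h
```

For example, a 2048x2048 canvas becomes 1024x1024, and a 1536x1024 canvas becomes 1216x832.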

@Postpos I'm going to try it

Anyway, bypassing ControlNet LLLite is OK. It's just one of three tools in this workflow that guide the text generation. Increase the strength in the "Apply ControlNet (Advanced)" node a little and you'll be fine.

@Postpos I can't get it to work the way you described, but I can give you some information:

- In the Load ControlNet Model node, the file Diffusion_pytorch_model.safetensors causes an error in the Apply ControlNet (Advanced) node. This can be solved by using the ControlNet model control_v10e_sdxl_opticalpattern.safetensors instead.

- Once that is solved, an error appears in the Load LLLite node, even when using qrpatternSDXL_v01256512eSdxl.safetensors.

The only way I have found to continue is to bypass that node (LLLite).

@Iridium_storm bypassing ControlNet LLLite is OK.

Error occurred when executing ImageTextMultiline: ImageTextMultiline.node() missing 1 required positional argument: 'font'

Well, it looks like you need to choose another font. It's the first widget in the "Image Text" node.

By the way, on Windows you can preview fonts in the Windows settings.

You can even use a custom font: put it into "\comfy\ComfyUI\comfy_extras\fonts" and refresh the workflow page.
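To see which font files are sitting in that folder, a small listing script is enough. This is a sketch that assumes the node reads .ttf and .otf files from the folder named above. The accepted extensions are an assumption, and `list_fonts` is a hypothetical helper.

```python
from __future__ import annotations

from pathlib import Path

FONT_EXTENSIONS = {".ttf", ".otf"}  # assumption: formats the Image Text node accepts


def list_fonts(fonts_dir: Path) -> list[str]:
    """Font file names in `fonts_dir`, sorted case-insensitively; empty if the folder is missing."""
    if not fonts_dir.is_dir():
        return []
    names = [p.name for p in fonts_dir.iterdir()
             if p.is_file() and p.suffix.lower() in FONT_EXTENSIONS]
    return sorted(names, key=str.lower)
```

If a newly copied font does not appear in this list, the node is unlikely to see it either, so check the file extension and folder first.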

@Postpos this one solved the problem, thanks!

Is that example pic a reference to the Underpants Gnomes from South Park?

no

@Postpos https://miro.medium.com/v2/resize:fit:720/format:webp/1*oaJlY6rLTVKnCCwrjCItMA.jpeg Check the similarities. Really old episode.

@Dustebunz now that you mention it, I see it's a reference. I've never seen that episode and never knew its name, but I know the joke.