Trigger: ais-brickz

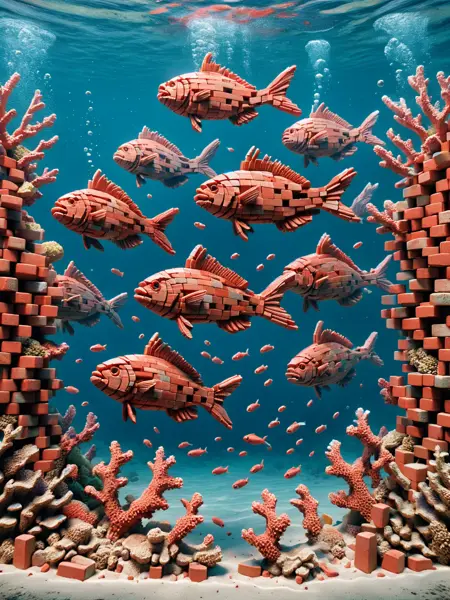

This LoRA just wants to turn things to brick and its preference is a type of brick called Fareham Red, which is what London's Royal Albert Hall is made of.

Use at 1.0 for a full brick effect on subjects/objects.

Reduce where you only want bricks on part of a subject/object. For example, a woman wearing a bikini - at 1.0 she'll be bricks too. At 0.6 you get a cool brick effect on the bikini.

More likely to be effective on subjects/objects, but will give a go to whole scenes - just results will vary a bit more.

Have a play and see what you can create. Please share your creations!

Description

FAQ

Comments (6)

I hope you don't mind a question... Do you start training with just pictures of one brick? The reason I ask is that I would like to try training a similar style, but with a product we sell at my job. I'd love to get some pointers!

Would that be define the style as "laid brick by brick?" Hm... I am also interested lol

@cicerothedog @Kaladae Hey, sorry for the slow response. For this lora I started out with images from DALL:E - it's pretty good at creating unique style images, particularly if you have the time and patience to sift through the results that aren't exactly what you want. I also used Co-Pilot from Microsoft (which uses DALL:E - but I find their images have more jpg artifacts). I think I got about 200 images in the end with a wide range of 'things' made out of bricks. I usually get DALL:E to give it a simple/plain background for most of the images and then a few more natural backgrounds for variation.

The captions were fairly simple: 'photo of ais-brickz sheep, standing in a field or 'photo of car made of ais-bricks, gradient background'.

For other loras I will use Midjourney, or even Stable Diffusion. It depends which one is able to create images as I imagine them in my head!

What I also do if I am struggling to get good images from DALL:E / Midjourny, is train a version of the lora on a small set of images, then create images using an early version of the lora. This can be quite effective and when those images are added to the original dataset seems to improve the overall output.

You could try something similar with a small batch of high-quality photos of products you sell, train a lora, try and get it to generate something you like and then use those to add to your photos.

I'm no expert and only been playing with Stable Diffusion since October, but happy to try and help when I can!

@artificialstupidity Thank you very much, that is super helpful!

@cicerothedog I've tried reducing my training images massively to less than 50 - making sure there's a range of objects etc. It's worked quite well for the last couple of LoRAs!

cute!

Details

Files

Available On (1 platform)

Same model published on other platforms. May have additional downloads or version variants.