Wan2.2-I2V-SVI (GGUF) for Low VRAM (12GB)

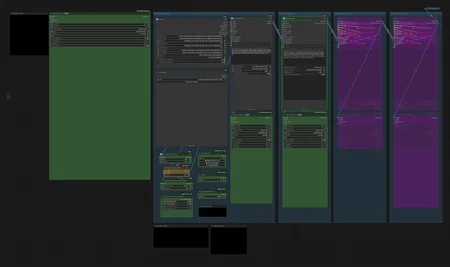

Stable Video Infinity (SVI2.0Pro) Simple Workflow

Generate smooth extended video from a single image

Tested on: ComfyUI 0.16.0, Python 3.12.12, PyTorch 2.10.0+cu130

- GeForce RTX 5060 Ti 16GB, 64GB system memory

- GeForce RTX 2060 12GB, 32GB system memory

For older GPUs such as the GeForce RTX 20xx series, it is faster and more stable to generate the video at a lower resolution, such as 720 pixels, and then upscale or frame-interpolate it afterwards.

With 81 frames per segment, extended videos of up to 20 seconds can be generated.

The default setting produces 10 seconds of footage (5s + 5s). If you need more, use Video Extend to lengthen the footage.

Do not turn off BaseSampling 0

If you need a separate LoRA, use the Power Lora Loader (rgthree) in each subgraph.

Options for low VRAM

VRAM profiles: normal / chunked / per-frame loop / CPU offload for memory-constrained systems.

For more information about WAN SVI Pro Motion Control, please see below.

https://github.com/IAMCCS/IAMCCS-nodes#-version-131--wan-svi-pro-motion-control

If you need Video Extend 4 or later, please copy the module and extend it with the following connection:

Copy and paste

Be sure to connect Set_image_out to the Extended_image of the final module.

Alternatively, increase the length value from 81 to 121 or 161 to extend the duration.

Each Video Combine 🎥🅥🅗🅢 node has its own save_output toggle, so the outputs can be saved individually.
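The length values above translate to clip duration roughly as follows. This is a minimal sketch that assumes 16 fps output, which is inferred from 81 frames being about 5 seconds. The helper name `clip_seconds` is hypothetical, and any frames shared between consecutive segments are ignored.

```python
# Assumption: 16 fps, inferred from "81 frames is about 5 s".
# Check the frame rate actually set in your Video Combine node.
FPS = 16


def clip_seconds(length: int, segments: int = 1, fps: int = FPS) -> float:
    """Approximate duration in seconds for `segments` clips of `length` frames.

    Frames that overlap between segments are not subtracted, so treat the
    result as an upper-bound estimate.
    """
    return length * segments / fps


if __name__ == "__main__":
    # Single-segment duration for the three length values mentioned in the text.
    for length in (81, 121, 161):
        print(f"length={length}: {clip_seconds(length):.2f} s per segment")

    # Default workflow: two segments of 81 frames (5s + 5s).
    print(f"default: {clip_seconds(81, segments=2):.2f} s")

    # Base segment plus three Video Extend modules.
    print(f"base + 3 extends: {clip_seconds(81, segments=4):.2f} s")
```

Under this assumption, raising length to 161 roughly doubles the duration of each segment, at the cost of more VRAM and time per sampling pass.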

Image Latency Switch

ImageScaleToMaxDimension (false) : Defaults

Note: If you use an image smaller than the default size, it will take longer to generate due to the upscaling.
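To illustrate why a small input image costs extra time, here is a rough sketch of what scaling to a maximum dimension amounts to. It is not the node's actual implementation. The function name and the 720-pixel target are assumptions chosen for illustration.

```python
def scale_to_max_dimension(width: int, height: int, max_dim: int = 720) -> tuple[int, int]:
    """Resize so the longer side equals max_dim, keeping the aspect ratio.

    max_dim=720 is an illustrative value, not a confirmed workflow default.
    """
    factor = max_dim / max(width, height)
    return round(width * factor), round(height * factor)


if __name__ == "__main__":
    # (width, height) pairs: one larger and one smaller than the target.
    for w, h in [(1920, 1080), (480, 270)]:
        new_w, new_h = scale_to_max_dimension(w, h)
        factor = 720 / max(w, h)
        direction = "upscale" if factor > 1 else "downscale"
        print(f"{w}x{h} -> {new_w}x{new_h} ({direction}, factor {factor:.3f})")
```

A factor above 1 means the image is being upscaled, which is the slower case the note describes.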

Main Models: Select your SVI GGUF model

The GGUF models for this workflow are:

- wan22EnhancedNSFWSVICamera_nolightninSVICfQ4KMH.gguf

- wan22EnhancedNSFWSVICamera_nolightninSVICfQ4KML.gguf

VAE

Text Encoders

Stable Video Infinity LoRA

- SVI_v2_PRO_Wan2.2-I2V-A14B_HIGH

- SVI_v2_PRO_Wan2.2-I2V-A14B_LOW

Lightning LoRA

lightx2v_I2V_14B_480p_cfg_step_distill_rank128_bf16.safetensors (Weight:3.0)

lightx2v_I2V_14B_480p_cfg_step_distill_rank128_bf16.safetensors (Weight:1.5)

Custom nodes used

https://github.com/kijai/ComfyUI-KJNodes

https://github.com/city96/ComfyUI-GGUF

https://github.com/rgthree/rgthree-comfy

https://github.com/Kosinkadink/ComfyUI-VideoHelperSuite

https://github.com/IAMCCS/IAMCCS-nodes

Separate Frame Interpolation + FlashVSR Ultra-Fast workflow included

Custom nodes used

https://github.com/Kosinkadink/ComfyUI-VideoHelperSuite

https://github.com/GACLove/ComfyUI-VFI

scale: Processing scale factor (default: 1.0)

Lower values (0.25-0.5) for faster processing

Higher values (1.0-4.0) for better quality

https://github.com/lihaoyun6/ComfyUI-FlashVSR_Ultra_Fast#comfyui-flashvsr_ultra_fast

📢: For GeForce RTX 20xx (Turing) or older GPUs, please install triton<3.3.0. The version specifier is quoted so that the shell does not treat `<` as a redirection:

    # Windows
    python -m pip install -U "triton-windows<3.3.0"

    # Linux
    python -m pip install -U "triton<3.3.0"

Model file order: Your folder hierarchy may be different, but the high-low order will be like this: