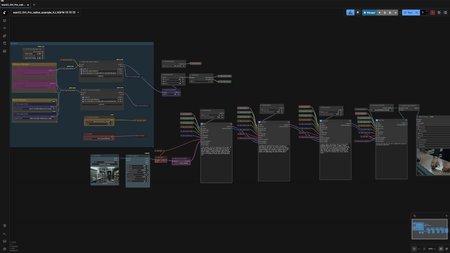

Break the 5-second limit. This custom workflow unlocks the full potential of WAN 2.2 by integrating Stable Video Infinity (SVI) LoRAs with LightX2V lightning models.

Instead of short, disconnected clips, this setup allows you to daisy-chain segments together to create long-form, seamless videos that maintain character identity and lighting consistency. I've also optimized it for speed and lower VRAM usage by incorporating GGUF support and a 4-8 step generation process.

✨ Key Features

♾️ Infinite Length: Uses SVI LoRAs to condition new clips on the previous frames, creating seamless transitions for as long as you want to generate.

⚡ 4-8 Step Lightning Speed: Integrated LightX2V LoRAs reduce sampling from 20+ steps down to just 4-8, making iteration much faster.

📉 Low VRAM Friendly: Native support for WAN 2.2 GGUF quantized models (works on 6GB+ VRAM).

✨ Built-in Upscaling: Includes a dedicated upscaling chain to polish your final output.

🔀 Easy Extension: Modular "sub-section" groups allow you to simply clone and connect nodes to extend the video length instantly.
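To get a feel for how many sub-section groups a given video length needs, here is a rough planning sketch in Python. It is not part of the workflow. It assumes each segment is 81 frames at 16 fps (roughly the 5-second clip length mentioned above) and that each new segment reuses the last few frames of the previous one as conditioning, which is only a simplification of what the SVI LoRAs do. The function name and the `overlap_frames` value are hypothetical.

```python
import math


def plan_segments(target_seconds, fps=16, frames_per_segment=81, overlap_frames=5):
    """Return (start, end) frame ranges for each chained segment.

    All defaults are illustrative assumptions, not values read from the workflow.
    `end` is exclusive; each later segment starts `overlap_frames` before the
    previous segment's end, mimicking conditioning on the previous frames.
    """
    if overlap_frames >= frames_per_segment:
        raise ValueError("overlap_frames must be smaller than frames_per_segment")

    target_frames = math.ceil(target_seconds * fps)

    # The first segment contributes all of its frames.
    segments = [(0, frames_per_segment)]
    end = frames_per_segment

    # Keep chaining until the requested length is covered.
    while end < target_frames:
        start = end - overlap_frames
        end = start + frames_per_segment
        segments.append((start, end))

    return segments
```

Under these assumptions, `plan_segments(15)` returns four ranges, so a 15-second video would need the base group plus three cloned sub-sections.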

📺 Video Tutorial

I walk through the entire installation, model setup, and prompting strategy in my YouTube tutorial. Watch it to get the best results.

📦 Required Models

To avoid red nodes, make sure you have the following in your ComfyUI models folders:

Checkpoint: WAN 2.2 I2V (GGUF recommended for this workflow).

LoRAs: SVI v2.0 Pro (Image-to-Video) and LightX2V (High/Low).

Text Encoder: UMT5_fp8 Clip.

VAE: WAN 2.2 VAE.

(Detailed links to all models can be found in the YouTube video description!)
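If you want a quick sanity check before loading the workflow, a small Python script along these lines can report which model types appear to be missing. The subfolder names and filename keywords below are assumptions about a typical ComfyUI install, not something this workflow defines, and `find_missing` is a hypothetical helper. Adjust both to match your own folders and the files you actually downloaded.

```python
from pathlib import Path

# Assumed layout: subfolder of ComfyUI/models -> keywords expected in a filename.
# Folder names and keywords are illustrative; change them to match your setup.
REQUIRED_MODELS = {
    "unet": ["wan2.2"],              # WAN 2.2 I2V GGUF checkpoint
    "loras": ["svi", "lightx2v"],    # SVI v2.0 Pro and LightX2V LoRAs
    "text_encoders": ["umt5"],       # UMT5 fp8 text encoder
    "vae": ["wan"],                  # WAN 2.2 VAE
}


def find_missing(models_dir, required=REQUIRED_MODELS):
    """Return (subfolder, keyword) pairs with no matching file under models_dir."""
    root = Path(models_dir)
    missing = []
    for subfolder, keywords in required.items():
        folder = root / subfolder
        # Collect lowercase filenames, including files in nested folders.
        if folder.is_dir():
            names = [p.name.lower() for p in folder.rglob("*") if p.is_file()]
        else:
            names = []
        for keyword in keywords:
            if not any(keyword in name for name in names):
                missing.append((subfolder, keyword))
    return missing
```

Calling `find_missing("ComfyUI/models")` returns an empty list when every keyword matches at least one file. Any pairs it does return point to the folder worth checking first. The match is a loose substring test, so it only tells you a file is present, not that it is the right variant.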

🤝 Support & One-Click Installer

If you want to skip the manual installation of ComfyUI, Python environments, and model management, I offer a fully automated One-Click Windows Installer for this exact workflow (and many others).

Get the One-Click Installer here:

https://www.patreon.com/posts/147707880

Enjoy the workflow and share your generations in the gallery!