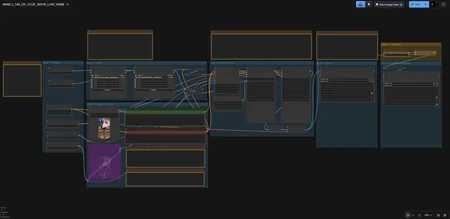

GOONING WORKFLOW FOR THE VRAM POOR!

If you are VRAM poor just like me, this workflow is for you! You can generate NSFW videos with just 8GB VRAM and 32GB RAM, and maybe even with lower specs if you use smaller GGUF quantizations. Everything is written as notes in the ComfyUI workflow, but I will repeat it here.

IMPORTANT:

The TastySin Q8 GGUF version requires Sage Attention and a nightly PyTorch build to be installed. Unfortunately, I cannot provide tech support for those. Please take the time to install them. They are worth it!

Always check "About this version" on the right side to see the differences between workflow versions.

STEP 1 - MODELS

WAN2.2 I2V A14B GGUF:

Put the WAN GGUF, SMOOTH MIX GGUF, or TASTYSIN GGUF models under the unet folder.

I recommend Q6 for 8GB VRAM. You can download smaller versions if you have less VRAM, or bigger versions if you have more.

Text encoder GGUF:

I recommend Q5_K_M for 8GB VRAM. You can download smaller versions if you have less VRAM, or bigger versions if you have more.

VAE:

STEP 2 - LORAs

LoRAs:

You need this LORA if you want to produce videos with 4 steps only.

If you want to add more LORAs, just add them~!

STEP 3 - IMAGE AND PROMPT

START IMAGE:

The start image should have the same aspect ratio as the generated video. For example, if you use a 16:9 image, generate at a 16:9 resolution. Otherwise, weird things might happen. I always recommend using a higher-resolution image; it will be automatically downsized to the generation resolution.
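If you want to check the ratio before loading an image, here is a minimal Python sketch. The function names and the 2% tolerance are my own assumptions for illustration, not something the workflow or ComfyUI enforces.

```python
from fractions import Fraction


def aspect_ratio(width: int, height: int) -> Fraction:
    """Return width:height as a reduced fraction, e.g. 1920x1080 -> 16/9."""
    return Fraction(width, height)


def ratios_match(image_size, video_size, tolerance=0.02):
    """True when the two aspect ratios differ by at most `tolerance` (relative).

    The 2% default is an assumed threshold; generation sizes snapped to
    multiples of 16 rarely match the source ratio exactly.
    """
    img = aspect_ratio(*image_size)
    vid = aspect_ratio(*video_size)
    return abs(img - vid) / vid <= tolerance


if __name__ == "__main__":
    # A 1920x1080 start image against a 960x544 generation size.
    print(ratios_match((1920, 1080), (960, 544)))
    # The same image against a square generation size.
    print(ratios_match((1920, 1080), (512, 512)))
```

A small tolerance is used instead of strict equality because a size like 960 x 544 is only approximately 16:9.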

PROMPTS:

Check CIVITAI generations for more prompts and keywords for the LORAs you are using. As for the negative prompt, I have no idea what's best. Chinese? English? Fewer keywords? More keywords? No idea.

STEP 4 - WAN PROCESS

STEPS ( ! IMPORTANT ! ):

I have not seen much improvement when increasing the steps from 4 to 6. Leave them at 4 or experiment, up to you.

VIDEO SIZE & LENGTH:

The output is much cleaner and crisper when the INPUT image matches the output proportions. Use a higher-resolution image and it will be scaled down automatically. Just make sure the ratio (e.g. 16:9) is the same or similar.

Dimensions must be divisible by 16! For quick generations (testing), use these dimensions:

- 512 x 512 (SQUARE)

- 432 x 768 (9:16)

- 768 x 432 (16:9)

- 640 x 480 (4:3)

- 480 x 640 (3:4)

For final render, this is what my machine was capable of (8GB VRAM / 32GB RAM):

- 864 x 864 (SQUARE)

- 544 x 960 (9:16)

- 960 x 544 (16:9)

- 896 x 672 (4:3)

- 672 x 896 (3:4)
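If you want a size that is not in the lists above, here is a rough Python sketch for picking one that keeps both sides divisible by 16. The helper names are hypothetical, and this is just one way to do the rounding, not what the workflow itself does internally.

```python
def snap_to_multiple(value: float, multiple: int = 16) -> int:
    """Round value to a nearby multiple of `multiple`, never below one multiple.

    Uses Python's round(), so exact halfway cases round to the even multiple.
    """
    return max(multiple, int(round(value / multiple)) * multiple)


def video_size(long_side: int, ratio_w: int, ratio_h: int, multiple: int = 16):
    """Return (width, height) for the given ratio, both divisible by `multiple`."""
    if ratio_w >= ratio_h:
        width = snap_to_multiple(long_side, multiple)
        height = snap_to_multiple(long_side * ratio_h / ratio_w, multiple)
    else:
        height = snap_to_multiple(long_side, multiple)
        width = snap_to_multiple(long_side * ratio_w / ratio_h, multiple)
    return width, height


if __name__ == "__main__":
    print(video_size(960, 16, 9))  # (960, 544), same as the list above
    print(video_size(896, 4, 3))   # (896, 672), same as the list above
```

Note that 16:9 with a 960 long side gives an exact height of 540, which is not divisible by 16, so it is snapped to 544.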

LENGTH:

81 frames for 5 seconds. I do not recommend longer or shorter durations with this workflow; in my tests, doing so increased the generation time by a lot and degraded the quality. But if you must, the frame count must be a multiple of 16 plus 1 (e.g. 49, 65, 81, 97).
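The frame rule can be sketched in Python like this. The function names are made up for illustration, and the 16 fps default is an assumption based on 81 frames lasting about 5 seconds.

```python
def is_valid_frame_count(frames: int) -> bool:
    """True when frames is a multiple of 16 plus 1 (17, 33, 49, 65, 81, ...)."""
    return frames > 1 and (frames - 1) % 16 == 0


def nearest_frame_count(seconds: float, fps: int = 16) -> int:
    """Return a valid frame count close to the requested duration.

    fps=16 is an assumed generation frame rate, not a value read
    from the workflow.
    """
    blocks = max(1, round(seconds * fps / 16))
    return blocks * 16 + 1


if __name__ == "__main__":
    print(nearest_frame_count(5))    # 81
    print(nearest_frame_count(3))    # 49
    print(is_valid_frame_count(80))  # False
```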

KSampler:

Change noise_seed generation from "randomize" to "fixed" if you are happy with the test result but want a higher resolution. If you do not know what you are doing, leave everything else as it is.

Motion Amplitude:

Fixes WAN's no-motion problem (e.g. missing camera rotation). This PainterI2V node is magic!

1.0 (original) > No difference from the original WAN node

1.15 (default) > General use

1.3 > Sports action

1.5 > Extreme motion

STEP 5.1 & 6 - Upscale and Frame Interpolation

DISABLE THESE WHILE TESTING OUTPUT (CTRL+B)

UPSCALE:

This model works great with anime images/videos. Feel free to try other models.

FRAME INTERPOLATION

Free FPS increase! If you do not like the results, you can disable the frame interpolation and just save the upscaled video.

Description

Updates (v1.1):

Added support for SMOOTH MIX WAN GGUF

Improved LORA loading

Added Upscaling

Added Frame Interpolation


Comments (19)

Awesome, My first try with my RTX 2070 8gb vram, without upscale and interpolation, it took me about 10 minutes for a 5 sec video 480x864 16fps 81frames with the smoothmix 6 steps.

Edit: wow, with upscale and interpolation it got smooth. Ty for the workflow.

With the 10ton girl, I added a bouncy lora but only the smoothmix lora got mentioned during the generation/diffusion of the video in the cmd. Is it not compatible and just skips it? https://civitai.com/models/1343431/bouncing-boobs-wan-14b?modelVersionId=2191217

@robotfromspace First of all, thanks for the feedback! Secondly, make sure the weight of the LORA you are using is 1.0 or the recommended ratio. Finally, not all LORAs work well together. Some LORAs might outright override other LORAs. Try to reduce the strength of overriding LORAs. Not much to do about that I believe :(

@ModFrenzy Ty for the feedback, I think I got it working. Ty again for the workflow!

@robotfromspace One more thing to note, I recommend upscaling and frame interpolation all the time. It does not take much time or does not require much from your PC.

@ModFrenzy Yeah, the upscaling and interpolation just takes about 200 seconds for me. I'm disabling it until I got a nice first preview and then I just activate the upscale+interpol.

I am using the same graphics card, I average 25 min start to finish with upscale, interpol etc plus running 2 and 3 stacked loras in high and low.

@southtownkgw320 To lower the time for the rendering to finish, I've been experimenting with 49 frames and using 4 steps instead of the recommended 6 steps for the smoothmix gguf, works (mostly) ok anyway.

i never even tried the smoothmix

Can it handle 16gb ram and rtx4060, just need enough to run 420p video generation on a loop, 3-5 seconds

I got a 2070 with 8gb and by just following the recommendations in the workflow I can do 864 x 864 with 16 fps upscaled with interpolations to 1728 x 1728 with 32 fps.

Cannot say for sure, there are so many variables to consider. I recommend just trying it out and let us know the results. If you run out of memory, try using a Q4 or even Q3 variant of the models.

@robotfromspace what's your ram? Sh*t hit the fan with ram prices getting insane.

@RexAe14 32gb DDR4 3000mhz. I thought about upgrading my computer but the ram prices just skyrocketed so I'm just thinking of upgrading my GPU and hope my i9 9900k doesn't bottleneck too much.

@robotfromspace well I’m at 16gigs ddr5, can I handle this? If not, what models should I swap around with?

Even worse in my case considering my laptop runs the 8+8 gb slots. I have to buy 2 16 gb instead

@RexAe14 Oh, sorry I thought you meant you had 16gb vram. I don't know, you can just try the different versions Q4 or Q3 and see if it works, the Q5 works fine for me. Your 4060 should be faster than my 2070, yours got better raw computational power (approx. 15 TFLOPS vs 7.5 TFLOPS).

Did you work this on 16 gb ram?

power lora loader is blank and i can't select any loras in it?

Make sure ComfyUI is up to date, all custom nodes are up to date, and your LORAs are under the "ComfyUI/models/loras" folder. If that does not work, please contact the original author of the custom nodes.