PanelPainter-Project is an open-source initiative to automate black-and-white manga coloring using fine-tuned LoRAs. This project is dedicated to training models that maintain clean line art while achieving smooth, anime-style color fills.

Update – V3.0 Major Release

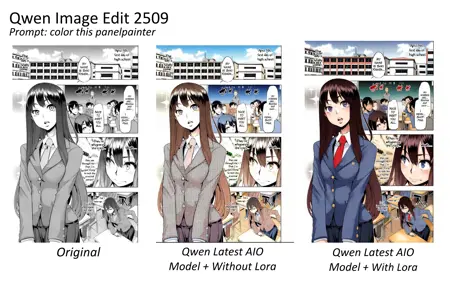

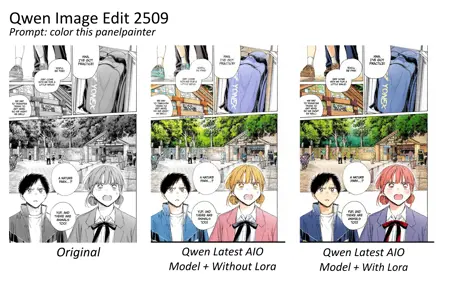

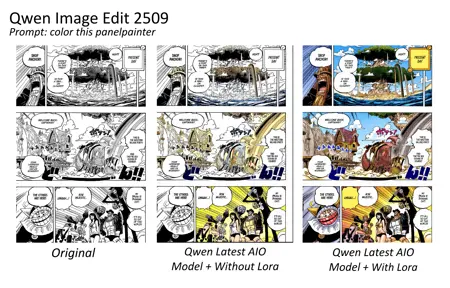

PanelPainter V3 is a major milestone. Unlike previous "helper" versions, V3 is a standalone coloring lora trained on Qwen Image Edit 2511.

Training Data: Trained on 903 hand-picked panels using Natural Language Captions.

Methodology: Combines the "real line art" training method discovered in V2 with a significantly larger dataset to generalize across different manga styles.

Trigger Word:

Color this panelpainter

Workflows & Resources

For the best results, use the dedicated workflows on RunningHub.

PanelPainter V3 (Qwen 2511)

RunningHub: V3 BF16 Workflow (Fast, Balanced)

RunningHub: V3 AIO Workflow (All-In-One)

PanelPainter V2.5 (Qwen 2511)

RunningHub: V2.5 AIO App (With VL Prompting) – Includes Vision Language prompting for better adherence.

Usage Guide

V3 has been tested on various styles including Chainsaw Man, Frieren, Komi Can't Communicate, and Oshi no Ko.

Recommended Generation Settings

LoRA: PanelPainter V3 (Weight: 1.0)

Helper LoRA: 4-Step Lighting (Weight: 1.0)

Steps: 4

Sampler: Euler

Scheduler: Simple

CFG: 1.0

Prompting Use the trigger word in your prompt:

Color this panelpainter

You can also add specific lighting or atmospheric tags:

Color this panelpainter, sunset lighting, warm tones

Project History & Development

Version 3.0 (Current)

Base: Qwen 2511

Summary: Scaled up to 903 images using the "real line art" training method. Solves the generalization issues of V2 while maintaining color quality.

Version 2.0 (Stable)

Base: Qwen 2509 (Compatible with 2511)

Summary: The breakthrough version. Switched from synthetic data to real line art (150 images). Proved that small, high-quality datasets outperform massive synthetic ones.

Version 1.0 (Legacy)

Summary: Trained on 7,000 synthetic grayscale images. Failed to handle real ink imperfections. Kept for archival purposes.

Credits & Acknowledgements

Training: Trained on Musubi Tuner (Thanks to kohya-ss).

Dataset Contributors: Special thanks to @Rox_Jr & @lucifer_brine04 for their help with dataset curation.

Description

Overhaul of V1 Training

Improved color prediction.

Overall works on all type of manga panels unlike V1

FAQ

Comments (23)

Hey. so i saw that comic in the thumbnail (Akarui) so if possible can u do that for the whole pages of it. thanks

🙂↔️ I wish

Good job.

I am using the AIO workflow and selected the recommended model version, but the generated result is a completely black image. What went wrong?I posted my worktable downbelow.

You hidden the major part I want to see anyway use this https://gofile.io/d/PlA9hJ workflow I have updated few things.

@Kokoboy Still not working properly,besides,the generated result became a gray square?I mean i follow the logtable to decrypt the gray spuare,and it shows nothing.

@Kokoboy I find out this workflow can run properly on RH online service,but can not run in local, can u check why?Much appreciated.

@didiasol --precision-full

I know this particular thread is about 4 months old, but I hope it is of help. I once had the same issue when using the batch colorizer workflow version to this. In my experience, this appeared to be the version of sageattention being used. To diagnose if this is the same issue for you, I would recommend disabling sageattention within your workflow and see what happens (or if the Patch Sage Attention Node from KJNodes is not there, I would add that in between the lora loader, and the Qwen Image Integrated KSampler, and disable sageattention with it to start). Once you have verified whether this is the issue when trying to manually specify versions of sageattention to use, copy the contents of the error log (stack trace) into google search and it will point you in the right direction.

From 282mb to 2,2gb lora my man? Why so big? Can someone quantize it please

Qwen-Edit doesn’t understand properly when trained at a medium learning rate, even with a 7K dataset. Now it only trains well at a low learning rate, which obviously results in a big LoRA size. I can’t really do anything about it in this case

Could you please share the process of building the dataset? I would greatly appreciate it.

I selected 150 colored manga panels in total roughly 70% doujin panels and 30% mainstream SFW manga panels. For the doujin set, it was hard to find clean non-color (B&W) versions, so I used Qwen Image Edit 2509: I fed the colored panel as input and generated a matching black-and-white version. That method was used for about 70% of the dataset.

For the remaining SFW panels, I manually sourced both B&W and colored versions.

During training, the colored panel was the target, and the black-and-white panel was the control/input. The LoRA was trained on RunPod A10 using musubi-tuner, and the whole process took around 15–20 hours.

That’s pretty much the full dataset pipeline hope it helps, and sorry for the late reply!

missing tt_img_enc from PanelPainter_Qwen_Image_Edit_2509_AIO – Workflow

https://gofile.io/d/yIOuBr - You can use this workflow its without TT node its only used on RunningHub

@Kokoboy file doesn't seem to exist

@ConnoisseurOfHentai That Workflow is outdated, will provide a new workflows

https://gofile.io/d/FsNZ53 will update other versions by today

@ConnoisseurOfHentai As far i tested this lora was supported 2511 & All AIO Versions

@Kokoboy Also noticed newest AIOs recommend euler_ancestral/beta should I edit the Integrated Ksampler node in any way? Does it now support adding a reference image?

@ConnoisseurOfHentai For coloring purposes, Euler/Simple seems to work better than the recommended sampler. Reference images still have issues, which I expected since the lora wasn’t trained for that way. You can still give it a try and see how it performs.

Consistency remains a large issue. For example, same character hair / clothes color switching between panels. Similar but bigger issue is page-to-page consistency. Sure it's possible to color a character's hair yellow and skin white, but it's noticeably a different shade of yellow and white from one page to another. I don't blame you, it's a decent lora. This just shows the inherent lack of comic page comprehension for the image edit model. It's not a vlm that can understand that it's the same character across many panels hence it can't really color them all the same. The page-to-page consistency problem is part of the larger character / generation consistency problem that ironically image edit models were made to solve. One possible solution is to use a "reference" colored page possibly as 2nd image input to hold all colorings consistent in all pages.

post a workflow that does the same