Introduction

This is an experimental version of ComicCraft, incorporating Latent Consistency Models (LCM).

The goal was to keep the model working with most of the regular samplers while allowing fewer sampling steps (for example 6, instead of the typical 20 or more), so that high-quality results are obtained much faster.

The results are not identical to those of the original ComicCraft model, but this version may still be interesting to test, especially for users of applications that have limitations with LoRAs.

The trigger words should be the same as for the original ComicCraft model, but some styles are weaker.

As mentioned, most of the standard samplers will work. A1111 doesn't yet have a default LCMScheduler, but installing the AnimateDiff extension adds it as LCM. It can produce medium results from 4 sampling steps and much better ones from 6. The DPM++ 2M and 3M samplers might have some issues at lower sampling steps (blurry or "overburned" images), but they are still usable from 8 steps onwards (for example 10 or 12, depending on the case).

From my tests, using a configuration like this should be ok:

Sampling steps: at least 6

Samplers: LCM works fine, and Euler, Euler a and UniPC work well without much adjustment. Others may need more sampling steps

CFG scale: between 1 and 2

I tested some style and character LoRAs and they still work ok, but some may need adjustments. Try lowering the LoRA weight slightly.
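If a prompt has several LoRAs, lowering the weights by hand gets tedious. The sketch below rescales every `<lora:name:weight>` tag (the A1111 prompt syntax) by a common factor. The function name `scale_lora_weights` and the default factor of 0.8 are assumptions made for illustration, and the LoRA name in the example is made up.

```python
import re

# Matches A1111-style tags such as <lora:myStyle:0.9>.
# Tags written without an explicit weight are left untouched.
LORA_TAG = re.compile(r"<lora:([^:>]+):([0-9]*\.?[0-9]+)>")


def scale_lora_weights(prompt: str, factor: float = 0.8) -> str:
    """Multiply the weight of every LoRA tag in the prompt by `factor`."""
    def _rescale(match: re.Match) -> str:
        name = match.group(1)
        weight = float(match.group(2)) * factor
        return f"<lora:{name}:{weight:.2f}>"

    return LORA_TAG.sub(_rescale, prompt)


if __name__ == "__main__":
    print(scale_lora_weights("hero portrait <lora:inkStyle:1.0>"))
```

Start with a small reduction and compare the output against the same seed at full weight before lowering further.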

ADetailer and other extensions work well, and with the speedup from the reduced number of sampling steps they remain useful for faster image generation.

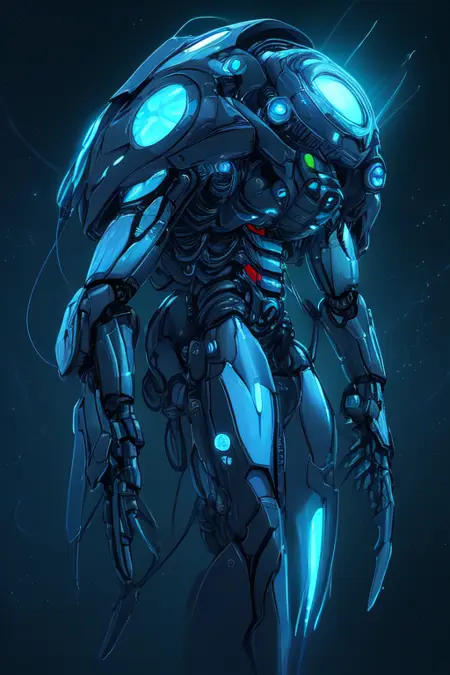

The images in the showcase use a variety of samplers and sampling steps, so they can serve as a reference. Hires. fix was not used for any of them; ADetailer was used in a few.

I cannot test everything on my own, so if you find out anything interesting, let me know.

Version history

beta2: initial version. First attempt at making the model work with a low number of sampling steps

beta4: additional fine-tuning. Focus on more detailed (clearer and sharper) images at low sampling steps (6). Comic style now works better with the LCM sampler. Some images come out with a strong tint of one color (for example, green or blue); if that happens, try adding 2 additional sampling steps.

Description

Initial testing version

Comments (5)

Doesn't look like it works with the DML version of Automatic1111. Rather, the LCM sampler doesn't.

Hello! Is this using the LCM sampler bundled with the AnimateDiff extension? I tested it with both that one and the LCM sampler in ComfyUI and it worked about the same in both. With just 4-6 sampling steps and CFG 2, results are pretty good.

I just added an image from the tests with ComfyUI, same settings as A1111, but using IPAdapter nodes

@victorc25744 From what I can tell, LCM uses CUDA. DirectML uses DirectX. Other LCM models don't work at all when set to the CFG of 2 and low steps, which 'activates' the LCM mode.

@mageofthesands interesting, I didn't know of this DML version! I test on Mac and it works ok there too. There is probably some change that has to be made to the sampler so it works correctly with DML. Since it's now integrated in main A1111, it's probably just a matter of time until it is fixed :)