Using an RTX 4090:

8 steps - 14 sec - 12 images

Reduce the batch size if you have a lower-end GPU.
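To compare runs on different GPUs, it helps to turn those figures into a per-image time. A quick sketch of the arithmetic (the function name is made up for illustration, and it only averages one batched run; it says nothing about how time scales with batch size):

```python
def seconds_per_image(total_seconds: float, batch_size: int) -> float:
    """Average wall-clock time per image for one batched run."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return total_seconds / batch_size


# Figures quoted above: 12 images in 14 seconds.
per_image = seconds_per_image(14, 12)
print(f"{per_image:.2f} s per image")  # 1.17 s per image
```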

I know the workflow is messy.

Updated from Capybara's SDXL Workflow v1.4.

You will need:

LCM LoRA (by Latent Consistency team)

Advanced Enhancer XL LoRA (by Z_phyr)

Add More Details - Detail Enhancer (by Lykon)

Models used:

Base: Cardos XL v1 (by Charsheetanon)

Refiner: NightVision XL - Photorealistic 0.7.7.0 (by Socalguitarist)


LCM Hyperloop v21.0


Comments (9)

It takes 50 sec on an RTX 4070.

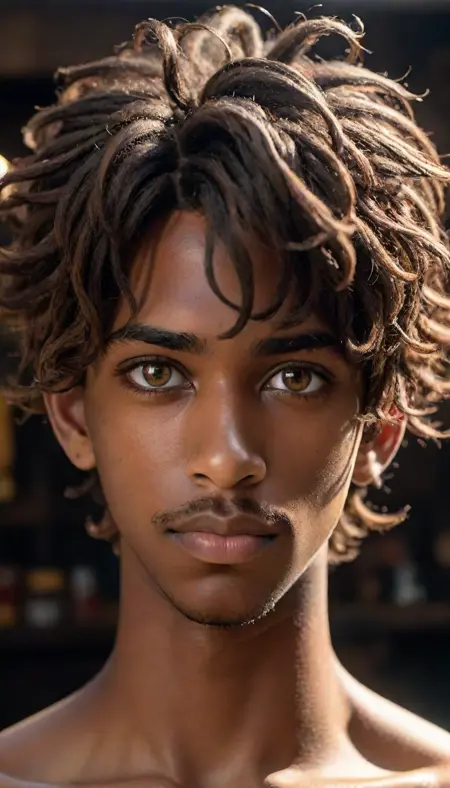

Is it doing 12 images in 1 batch?! I didn't expect that. What do you think about the quality?

@7sanal7san I just ran it with the default settings of this workflow, and it really works. But I tested generation without an IPAdapter, in txt2img mode only, so the quality was not as good as with one.

@modzhahead158 You're right; that's why I added 3 IP adapters. I usually keep them below 0.20. Try adding a LoRA; I have had some interesting results. Usually, I keep the base LoRA low and the refiner LoRA high; some images were comparable to 55 steps of Euler-A. I am testing out the Ultimate upscaler. I had some good results, but I am still not satisfied with it if I am able to

Upload some of the images you made, if possible.

@7sanal7san made some curvy girls as a test: https://civitai.com/posts/815908

@7sanal7san when I try to use IPAdapter, an error occurs:

Error occurred when executing IPAdapterApply:

    Error(s) in loading state_dict for Resampler:
        size mismatch for proj_in.weight: copying a param with shape torch.Size([1280, 1280]) from checkpoint, the shape in current model is torch.Size([1280, 1664]).

    File "C:\ComfyUI_windows_portable\ComfyUI\execution.py", line 153, in recursive_execute
        output_data, output_ui = get_output_data(obj, input_data_all)
    File "C:\ComfyUI_windows_portable\ComfyUI\execution.py", line 83, in get_output_data
        return_values = map_node_over_list(obj, input_data_all, obj.FUNCTION, allow_interrupt=True)
    File "C:\ComfyUI_windows_portable\ComfyUI\execution.py", line 76, in map_node_over_list
        results.append(getattr(obj, func)(**slice_dict(input_data_all, i)))
    File "C:\ComfyUI_windows_portable\ComfyUI\custom_nodes\ComfyUI_IPAdapter_plus\IPAdapterPlus.py", line 367, in apply_ipadapter
        clip_embed_zeroed = embeds[1].cpu()
    File "C:\ComfyUI_windows_portable\ComfyUI\custom_nodes\ComfyUI_IPAdapter_plus\IPAdapterPlus.py", line 161, in __init__
        output = output.clamp(0, 1)
    File "C:\ComfyUI_windows_portable\python_embeded\lib\site-packages\torch\nn\modules\module.py", line 2152, in load_state_dict
        raise RuntimeError('Error(s) in loading state_dict for {}:\n\t{}'.format(

Make sure you have "ip-adapter-plus_sdxl_vit-h.bin" and add the model to the IPAdapter extension folder: https://huggingface.co/h94/IP-Adapter/tree/main/sdxl_models I also tried it with ip-adapter-plus-face_sdxl_vit-h.bin and it works perfectly.

@7sanal7san good idea, but no: https://i.imgur.com/lSl0d6u.png

I have all the IP adapters and tried them all: https://i.imgur.com/W3y6A7N.png

But a miracle happened! The workflow works fine with ip-adapter_sdxl.bin: https://i.imgur.com/Ywm5bzj.png

I'll have to try combinations of different IP adapters with different SDXL checkpoints; maybe the root of the problem is a conflict somewhere between them.
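One possible reading of the size mismatch in the traceback above, offered as a guess rather than a confirmed diagnosis: the two shapes differ only in the second number, and a linear layer's weight is stored as [out_features, in_features], so that number is the width of the image embedding being fed in. 1280 is the hidden width of OpenCLIP ViT-H and 1664 is that of ViT-bigG. According to the h94/IP-Adapter model card, the `*_vit-h.bin` files are trained on ViT-H embeddings, while ip-adapter_sdxl.bin uses ViT-bigG. That would fit ip-adapter_sdxl.bin being the only file that worked if a ViT-bigG CLIP vision model is loaded in the graph. A small sketch that pulls the widths out of such a message (the helper name and the lookup table are illustrative, not part of ComfyUI or the IPAdapter extension):

```python
import re

# Hidden widths of the two OpenCLIP image encoders used by the SDXL IP-Adapter files.
ENCODER_WIDTH = {1280: "ViT-H", 1664: "ViT-bigG"}


def diagnose(message: str) -> str:
    """Read the two proj_in.weight shapes out of a size-mismatch message."""
    shapes = re.findall(r"torch\.Size\(\[(\d+), (\d+)\]\)", message)
    if len(shapes) != 2:
        return "no shape pair found"
    # Linear weights are [out_features, in_features], so the second
    # number in each shape is the image-embedding width.
    expected = int(shapes[0][1])  # shape stored in the IP-Adapter checkpoint
    loaded = int(shapes[1][1])    # shape of the model built at run time
    if expected == loaded:
        return "widths match"
    want = ENCODER_WIDTH.get(expected, f"width {expected}")
    have = ENCODER_WIDTH.get(loaded, f"width {loaded}")
    return f"adapter expects {want}, but {have} is loaded"


msg = ("size mismatch for proj_in.weight: copying a param with shape "
       "torch.Size([1280, 1280]) from checkpoint, the shape in current "
       "model is torch.Size([1280, 1664]).")
print(diagnose(msg))
```

If that reading is right, the thing to try would be pairing the `*_vit-h.bin` adapters with a ViT-H CLIP vision model rather than swapping SDXL checkpoints.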