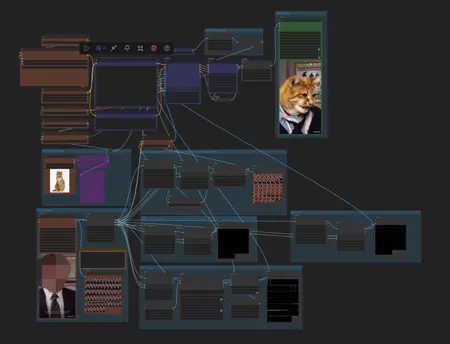

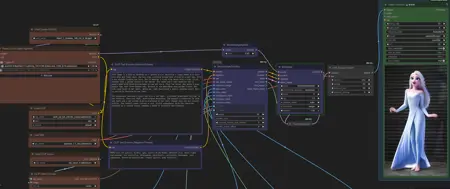

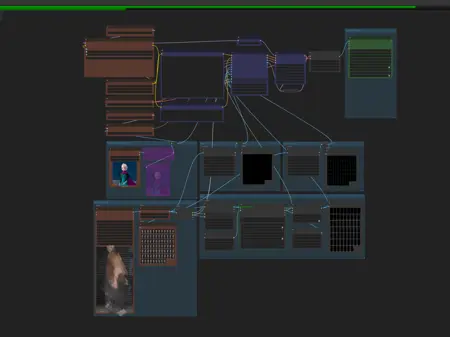

I put this whole workflow together knowing basically nothing. I only found out a few days ago that Kijai's official workflow actually supports GGUF, so we can just use Kijai's official workflow directly. ComfyUI has also added workflow templates recently! I want more people to know about this.

Updated on September 21st.

Minor updates to face and resolution handling.

How to use:

1. Install the missing nodes and update ComfyUI.

2. Update the Florence2 node (Florence2Run).

3. Check and download the models in the workflow. The LLM model will download automatically. If you download it manually, put it in "ComfyUI\models\LLM\CogFlorence-2.2-Large".
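If you placed the LLM model by hand, a quick check like the one below can confirm the folder is where step 3 expects it. This is only a minimal sketch: the function name `find_florence_model` and the `comfy_root` argument are made up for illustration, and it only tests that the folder exists, not that the files inside are complete.

```python
from pathlib import Path


def find_florence_model(comfy_root, model_name="CogFlorence-2.2-Large"):
    """Return the model folder under <comfy_root>/models/LLM, or None if it is missing.

    comfy_root is the path to your ComfyUI folder (hypothetical argument name).
    """
    model_dir = Path(comfy_root) / "models" / "LLM" / model_name
    return model_dir if model_dir.is_dir() else None


if __name__ == "__main__":
    found = find_florence_model("ComfyUI")
    if found is None:
        print("Model folder not found; the workflow should download it on first run.")
    else:
        print(f"Model folder found: {found}")
```

If it prints the "not found" message, either let the workflow download the model automatically or double-check the folder name.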

This workflow is specifically designed for beginners.

It's probably one of the easiest wan_animate workflows to use, while also being resource-light and generating quickly.

Anyway, let's get to playing around first! On my potato PC, it only takes about 3 minutes to complete a 640p video that's about 49 frames long. If you use 720p, the quality will be much better; the lower resolution is just a compromise I had to make.

This workflow is pretty intuitive, though of course there's room for improvement. Maybe I'll make more modifications and variations later.

Be sure to use the 'Upscale Image By' setting in 'Load Videos' to control the output resolution. Keeping it low enough avoids crashes and running out of memory (OOM).
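To get a rough idea of what value to type into 'Upscale Image By', you can work it out from your source video size. The sketch below assumes the setting is a plain multiplier on width and height, and that "640p" means the shorter side is 640 pixels; both are assumptions, and the function names are just for illustration.

```python
def scale_for_short_side(width, height, target_short_side=640):
    """Return the multiplier that makes the shorter side equal to target_short_side."""
    return target_short_side / min(width, height)


def scaled_size(width, height, scale):
    """Return the (width, height) after applying the multiplier, rounded to whole pixels."""
    return round(width * scale), round(height * scale)


if __name__ == "__main__":
    # Example: a 1920x1080 source video, aiming for a 640-pixel short side.
    scale = scale_for_short_side(1920, 1080, 640)
    print(f"Upscale Image By = {scale:.3f}")
    print("Resulting size:", scaled_size(1920, 1080, scale))
```

A smaller multiplier means fewer pixels per frame, which is what keeps memory use down.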

If it's your first time using it, it might seem complicated, but it's actually super easy.

FAQ

Comments (8)

1--Low resolution leads to poor image quality. You can raise the 'Upscale Image By' value to increase the resolution for better results.

2--If you encounter dimension errors, it's a resolution issue. You need to adjust the value in 'Upscale Image By' so that the resulting width and height are divisible by 32.
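For the divisible-by-32 issue, here is a small sketch that finds the nearest 'Upscale Image By' value for which both sides come out as multiples of 32. It assumes the node multiplies width and height by the value without any rounding or cropping of its own, which I haven't verified, and the function names are hypothetical.

```python
from math import gcd


def is_divisible(width, height, multiple=32):
    """True if both sides are exact multiples of `multiple`."""
    return width % multiple == 0 and height % multiple == 0


def nearest_valid_scale(width, height, scale, multiple=32):
    """Return (new_scale, (new_width, new_height)) closest to `scale`
    such that both sides are multiples of `multiple` and the aspect ratio is kept."""
    g = gcd(width, height)
    p, q = width // g, height // g           # reduced aspect ratio p:q
    k = max(1, round(scale * g / multiple))  # valid sizes are (multiple*p*k, multiple*q*k)
    return multiple * k / g, (multiple * p * k, multiple * q * k)


if __name__ == "__main__":
    # 1920x1080 at 0.5 gives 960x540, and 540 is not divisible by 32.
    print(is_divisible(960, 540))
    new_scale, size = nearest_valid_scale(1920, 1080, 0.5)
    print(f"Try Upscale Image By = {new_scale:.4f} -> {size[0]}x{size[1]}")
```

Note that for videos with unusual aspect ratios the valid steps can be very far apart, so in that case cropping or resizing the source first may be the more practical fix.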

Can you define "potato PC"? I have a 3060 12GB + 64GB RAM. Is this potato enough?

@Fil_Is_Here his "potato" PC probably has a 5070..

@bielzimdag346 Maybe not. Just need to know. Distorch can help with loading models, and interpolation/RIFE can help with quality, but I need to know OP's specs to build for myself

@Fil_Is_Here I got a GTX 1080 Ti and 32GB of RAM. I haven't tried this workflow yet, however I am able to load the Wan2.2-I2V-14B model by using a block-swapping node. It takes me ~1h25m to generate a 5-second 720p video. :)

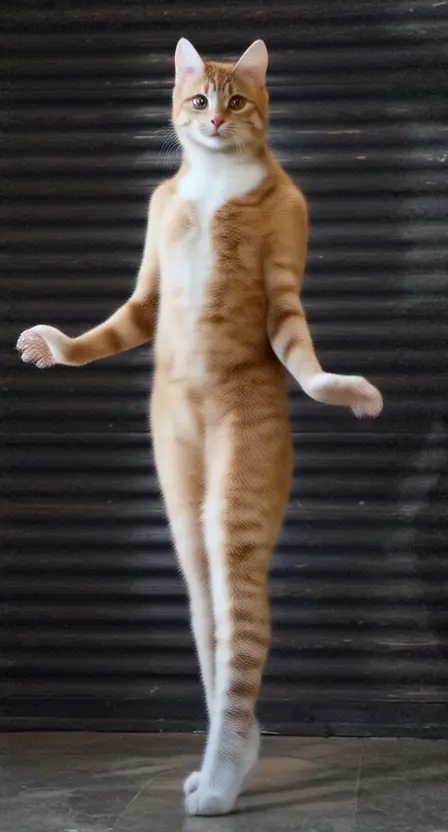

This is not the workflow I'm looking for.. I want a workflow where a static reference image of a person is animated based on the animation from the reference video I used.. this workflow replaces the character from the video with the character from the reference image...

➡Try this, even simpler: https://civitai.com/models/1977337?modelVersionId=2238131

With video gen, low VRAM means buy more system RAM. Unlike LLMs, you do not need the video model to use large amounts of VRAM; indeed, you want the exact opposite no matter how much VRAM you have. That means keeping the model in system RAM, and telling Comfy to stream the model from RAM.