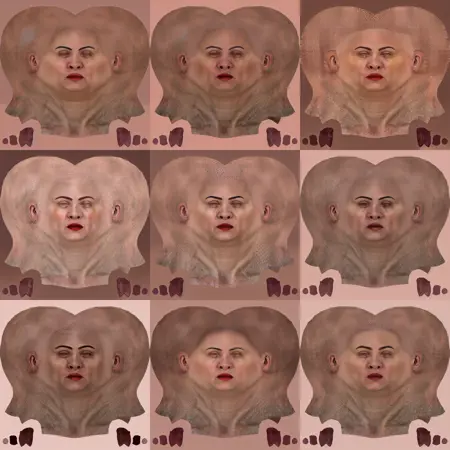

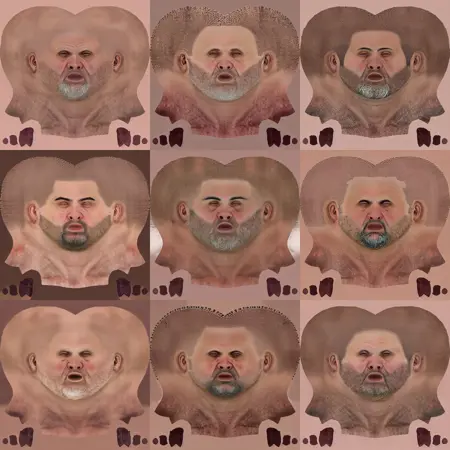

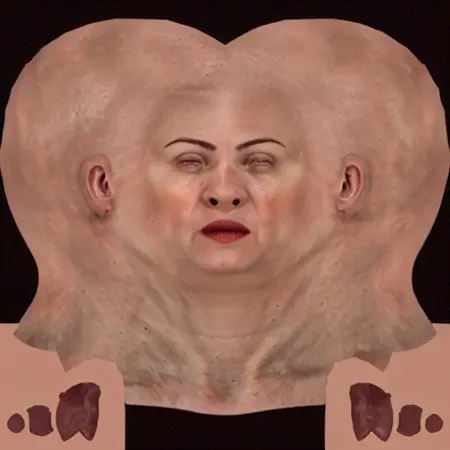

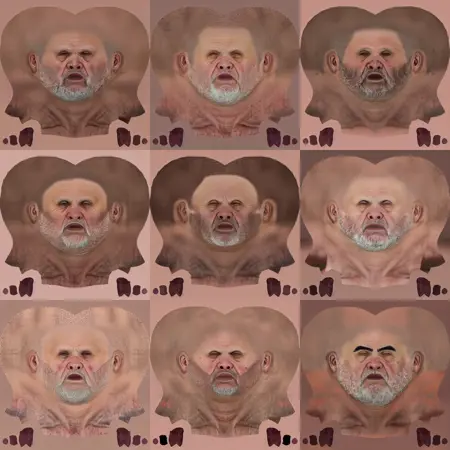

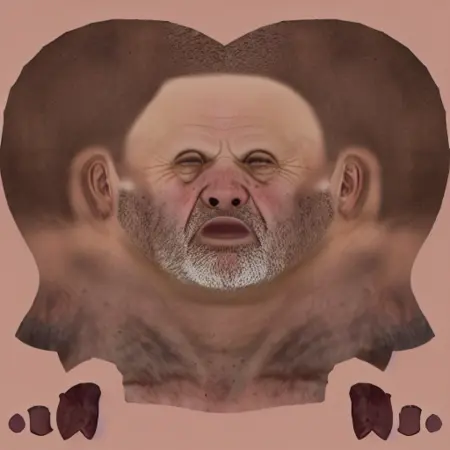

Description

This checkpoint is trained on texture page images mapped to the UVW layout of the CC3+ character 3D meshes. It can generate male and female head textures for Character Creator. It’s just a proof of concept, but so far it works surprisingly well. I may build a full SD character editor checkpoint on top of it, with lots of keywords to play with, but for now this checkpoint is all there is to download.

Comments (31)

FANTASTIC, I started something like this and couldn't make it work as an embed, but this is super awesome. Great job!

Great work!

I have a high quality dataset I've been planning on using to fine-tune a model for just this purpose.

Would you be willing to share your training images so I may increase the dataset?

Any lessons you've learned during the training of this model? Did you use captions for each image, or what approach did you take to train?

There’s no need to get my training data. Simply start up Character Creator with the Headshot plug-in, run a bunch of AI-generated front-facing portrait images through it, and you have a good training dataset.

@Polygon Start with a small dataset of rather similar images with subtle changes, say about 10 images for each main keyword: woman, man, old, young, make-up, European, East Asian, beard, and so on. Tag each image in a description text file that has the same name as the image but a .txt extension. Put the trigger keyword first in that file, followed by the main attributes you want associated with the image, separated by commas (the previously mentioned woman, man, old, young, make-up, and so on). Make sure all your initial training data has the same background colour in the area outside the UV boundaries, so that the model learns not to draw details there and doesn’t get confused. Definitely remove any noise, watermarks, or anything else outside the used UV space on your texture page. If you don’t, your training will not produce a clean model that you can properly work with and expand upon later.

Train on this dataset with a rather low number of iterations, and generate sample images and intermediate checkpoints often, so that you can see the point where the iterations overtrain the network and the results start to degenerate. It’s really easy to unintentionally overtrain a network that deals with changes as delicate as those in diffuse textures for a 3D model.

After you’ve trained the first checkpoint and found the sweet spot where it’s neither overtrained nor undertrained, you have a foundation model that you can use for later reinforcement. Save all drastic changes to the facial features in your training dataset for the reinforcement phase, when you train on top of the already trained model. If your source images in the first stage already vary strongly, you reduce your later ability to edit and to make use of retraining.

Don’t be afraid to cancel a training run if you see it going off the rails. It’s better to start over 20 times until you find the right configuration than to waste hours computing an already failing training run to the bitter end, where it turns into artefacts and noise. If you see signs of overtraining or degeneration, they mean exactly what I described, and there’s no point in continuing.
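The captioning convention described in this reply (one .txt file per image, same base name, trigger keyword first, then comma-separated attributes) could be sketched roughly as follows. This is only an illustration, not the author's actual tooling: the trigger word `cc3head`, the file name `head_001.png`, and the helper names are hypothetical.

```python
import tempfile
from pathlib import Path

TRIGGER = "cc3head"  # hypothetical trigger keyword, not taken from the original post


def build_caption(trigger, attributes):
    """Return the caption text: trigger keyword first, then the attributes."""
    return ", ".join([trigger, *attributes])


def write_caption(image_path, trigger, attributes):
    """Write a caption file next to the image, same name but with a .txt suffix."""
    caption_path = Path(image_path).with_suffix(".txt")
    caption_path.write_text(build_caption(trigger, attributes), encoding="utf-8")
    return caption_path


if __name__ == "__main__":
    # Demo in a throwaway folder so nothing real is overwritten.
    with tempfile.TemporaryDirectory() as folder:
        image = Path(folder) / "head_001.png"  # hypothetical texture page
        image.touch()
        caption_file = write_caption(image, TRIGGER, ["woman", "young", "make-up"])
        print(caption_file.name, "->", caption_file.read_text(encoding="utf-8"))
```

Keeping the caption text in one small function makes it easy to check that every file in the dataset starts with the same trigger keyword before a training run is started.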

This is cool. I have been thinking about making one for Daz Studio, so if you have any information which can help me, I would greatly appreciate it!

Doing something like that for DAZ is interesting. However, they’ve changed the UV layouts between generations, so the V4 characters, the Genesis characters, and so on all differ. Since they don’t share the same UVW layout, you would have to pick one of them to train on, because mixing different layouts in the same training will screw up the alignment.

@ClassicRPGArt I'm also looking into training a model on DAZ's Genesis 9. Would you mind giving a little breakdown of how you trained this model? Excellent work btw!

@bee @Clare3Dx Start with a small dataset of rather similar images with subtle changes, say about 10 images for each main keyword: woman, man, old, young, make-up, European, East Asian, beard, and so on. Tag each image in a description text file that has the same name as the image but a .txt extension. Put the trigger keyword first in that file, followed by the main attributes you want associated with the image, separated by commas (the previously mentioned woman, man, old, young, make-up, and so on). Make sure all your initial training data has the same background colour in the area outside the UV boundaries, so that the model learns not to draw details there and doesn’t get confused. Definitely remove any noise, watermarks, or anything else outside the used UV space on your texture page. If you don’t, your training will not produce a clean model that you can properly work with and expand upon later.

Train on this dataset with a rather low number of iterations, and generate sample images and intermediate checkpoints often, so that you can see the point where the iterations overtrain the network and the results start to degenerate. It’s really easy to unintentionally overtrain a network that deals with changes as delicate as those in diffuse textures for a 3D model.

After you’ve trained the first checkpoint and found the sweet spot where it’s neither overtrained nor undertrained, you have a foundation model that you can use for later reinforcement. Save all drastic changes to the facial features in your training dataset for the reinforcement phase, when you train on top of the already trained model. If your source images in the first stage already vary strongly, you reduce your later ability to edit and to make use of retraining.

Don’t be afraid to cancel a training run if you see it going off the rails. It’s better to start over 20 times until you find the right configuration than to waste hours computing an already failing training run to the bitter end, where it turns into artefacts and noise. If you see signs of overtraining or degeneration, they mean exactly what I described, and there’s no point in continuing.

@ClassicRPGArt Wow thank you so much!! Incredible breakdown, really appreciate it. Loving all your experimenting, as someone hoping to join the 3D field I find it very exciting.

@ClassicRPGArt Huge Thanks! I will keep those details in mind when I find the time to start training checkpoints :o Currently working on TI/embedding of my characters.

@ClassicRPGArt Textures for daz are easily convertible between gens, if you can make a model for a gen 2 face udim tile for example you can convert that to a gen 8, 8.1, 9. I would love to see a sd2daz texture creator.

support!

@ClassicRPGArt Huge thx for the details! Btw, what kind of toolbox or framework are you using for training? Diffusers (https://github.com/huggingface/diffusers) or something else? Keep up the awesome work!

This is a great idea and is actually why I got into SD in the first place - I'll be keeping an eye on this project for sure!

Could you offer some 3D render images with these head textures applied?

Also, they are head textures only. In my experience, body textures need to match the head texture's color, which is a lot of work for so many head textures.

So, are all these trained head textures based on the default CC model's skin color?

OK, I did a quick test, and the color matching issue is there. Good idea though. It could be better if they were all based on the CC4 default character's skin color.

Character studio has a feature that can match the body texture to the face texture if you have the skin generation things available.

I was looking for a way to create textures. For now the only way is with depth models. I'll leave you the link so you can experiment: https://github.com/francislabountyjr/Dreambooth-Anything

Thank you for the link, I’ll have a look at that Git repository.

Image-to-image with depth, and ControlNet with depth or normal extraction, work with any checkpoint. However, the fundamental problem is that as soon as you diverge too far or use a complex CLIP text instruction, the texture pages degenerate. That’s why training a checkpoint that already knows where to place each main feature of the texture page gives better results, and it can of course simply be combined with the aforementioned depth map as well as the features of ControlNet.

Oh, I was just thinking of using SD for this kind of stuff. It would be cool if we could generate a cross-polarized photo-scan style of portrait, which could then be projected using 3D painter apps.

Hello friend

Just two days ago, I was thinking that an AI section should be added to CC4 so that it can generate different faces from within the program and place them on the 3D characters, like the Headshot plugin.

---

But last night I came across this model of yours by chance and I was very happy!

Currently, I am working on a project where I need to create 100 different characters in CC4.

I downloaded your model and used it in Automatic11...

Which upscale method are you using to get more detail in the final output, for example 2K?

I appreciate your effort.

This is important: your images absolutely have to be rendered at 512×512 px, or bad things happen. This is fine, because you can always upscale afterwards.

Do NOT use negative prompts. The usual negative prompt keywords are likely to break the model and confuse it, as keywords like "deformed" will kick the whole thing back into a depth-aware 3D mode.

The eyebrows. I wish it didn't generate the eyebrows like this. Since CC4, eyebrows have been a makeup feature that gets added on after the fact, rather than a part of the base skin layer.

It's prone to generating blemishes and skin artifacts.

UVs don't always match up, but nearly all of them work. It's just a one-off thing here and there that I noticed happening.

In addition to the regular UV maps, it also looks like it's been trained on toon figures.

Now that we've covered that, let's talk about some of the other stuff you need here to make it work. The big thing this model can't do without additional code is give you normal maps. But fear not my friends. For that, we have Normal Maps Online. Just look it up on Google. It'll help you generate all the PBR maps you need for these.
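As a rough illustration of what a height-to-normal conversion does, here is a minimal, dependency-free sketch. It is a generic technique and not the code behind the tool mentioned above. It assumes that texel brightness can stand in for surface height, which is only an approximation for skin, and the `strength` parameter and the green-channel direction are illustrative choices that may need flipping for a given renderer.

```python
import math


def luminance_height(pixels):
    """Turn a grid of (r, g, b) pixels (0..255) into heights in 0..1.

    Assumption: brighter texels are treated as higher, which is only a
    rough stand-in for real surface height.
    """
    return [
        [(0.299 * r + 0.587 * g + 0.114 * b) / 255.0 for (r, g, b) in row]
        for row in pixels
    ]


def height_to_normal_map(height, strength=2.0):
    """Convert a grid of heights into tangent-space normals as (r, g, b) tuples.

    `strength` is a hypothetical tuning knob that exaggerates the slopes.
    """
    rows, cols = len(height), len(height[0])
    normal_map = []
    for y in range(rows):
        out_row = []
        for x in range(cols):
            # Central differences, clamped at the image border.
            dx = height[y][min(x + 1, cols - 1)] - height[y][max(x - 1, 0)]
            dy = height[min(y + 1, rows - 1)][x] - height[max(y - 1, 0)][x]
            nx, ny, nz = -dx * strength, -dy * strength, 1.0
            length = math.sqrt(nx * nx + ny * ny + nz * nz)
            # Map each component from [-1, 1] to [0, 255].
            out_row.append(tuple(
                round((c / length * 0.5 + 0.5) * 255) for c in (nx, ny, nz)
            ))
        normal_map.append(out_row)
    return normal_map


if __name__ == "__main__":
    flat_grey = [[(128, 128, 128)] * 4 for _ in range(4)]
    print(height_to_normal_map(luminance_height(flat_grey))[0][0])
```

A completely flat patch comes out as the neutral normal colour, and slopes tilt the red and green channels away from the rising side. For full-size texture pages an array library would be much faster than nested Python lists.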

I think my big use for this model is going to be in making face decals. This one's a really useful time saver that relates directly to one of my main use cases, and I feel like I'll be using it a lot.

Are you gonna add CC3 bodies? This is awesome. Is there a way you could tell me how you made this LoRA? I'd like to make a version myself.

Can you share a model that is adapted to these generated textures? I put the texture on my own character model and it shows up in the wrong position. This may be caused by an inconsistent UV unwrap.

Use your layout reference base face in image-to-image?

Hi, it looks great, but I'm not sure if this works for Unreal Engine MetaHuman skin textures for faces. Could you please help me with this? Thanks.

This is the greatest idea! If you need help, you can count on me! I am interested.

I cannot generate man faces without a beard. Still, this is awesome.

need inpaint

can you make a pony version of this, will literally pay you money haha
