UPDATE!

Download my ComfyUI build: https://huggingface.co/datasets/StefanFalkok/ComfyUI_portable_torch_2.10.0_cu130_cp313_sageattention_triton/tree/main . You also need to download and install CUDA 13.0 (https://developer.nvidia.com/cuda-13-0-0-download-archive) and VS Code (https://visualstudio.microsoft.com/downloads/)

My TG Channel - https://t.me/StefanFalkokAI

My TG Chat - https://t.me/+y4R5JybDZcFjMjFi

Also big thanks to

for helping with workflows in the past!

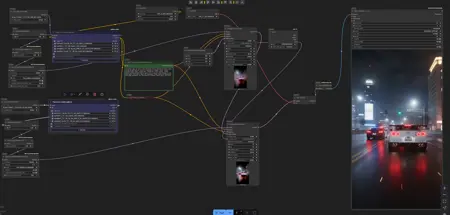

Hi! Here are my working Wan 2.2 video generation workflows for ComfyUI

I have included 4 video workflows: t2v, i2v, v2v and flf2v (firstlastframe), plus foley audio

You need the Wan 2.2 10-step models (https://huggingface.co/StefanFalkok/Wan_2.2_10steps/tree/main), the CLIP text encoder (https://huggingface.co/Comfy-Org/Wan_2.2_ComfyUI_Repackaged/blob/main/split_files/text_encoders/umt5_xxl_fp16.safetensors) and the VAE (Wan 2.1 VAE)
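If you script your setup, the text encoder link above can be turned into a direct download URL. This is only a minimal sketch: it assumes the usual Hugging Face convention that swapping `/blob/` for `/resolve/` in a file URL gives the raw file, and the helper names `to_direct_url` and `target_path`, as well as the `ComfyUI/models/text_encoders` folder, are illustrative assumptions rather than part of the workflows.

```python
from pathlib import PurePosixPath
from urllib.parse import urlsplit, urlunsplit

# The text encoder link from the post above.
CLIP_URL = (
    "https://huggingface.co/Comfy-Org/Wan_2.2_ComfyUI_Repackaged/blob/main/"
    "split_files/text_encoders/umt5_xxl_fp16.safetensors"
)


def to_direct_url(blob_url: str) -> str:
    """Turn a Hugging Face 'blob' page URL into a 'resolve' file URL."""
    parts = urlsplit(blob_url)
    # Replace only the first '/blob/' segment; the rest is the file location.
    path = parts.path.replace("/blob/", "/resolve/", 1)
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


def target_path(blob_url: str, models_dir: str = "ComfyUI/models/text_encoders") -> str:
    """Suggested local path; the folder name is an assumption about your install."""
    filename = PurePosixPath(urlsplit(blob_url).path).name
    return str(PurePosixPath(models_dir) / filename)


if __name__ == "__main__":
    print(to_direct_url(CLIP_URL))
    print(target_path(CLIP_URL))
```

The script only builds the URL and the destination path; pass them to whatever downloader you prefer.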

GGUF Wan 2.2 10 steps models

https://huggingface.co/StefanFalkok/Wan_2.2_10steps_GGUF

Leave a comment if you run into trouble or find a problem with the workflows

Comments (6)

This is really cool, I followed the youtube vid you linked, along with your profile. Even without the loras the time went from like hours on what I tried before to like 3min. How do you make your videos so long? Is it extending them over and over via the first last frame workflow? Thanks :3

I set a longer length in the Latent settings. In my case I set the length to 193 frames at 24 fps (8 sec). Honestly, I don't like merging videos by last frame
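To pick a length value for a target duration, a small helper like the one below may be handy. It is a rough sketch that assumes valid lengths have the form 4k + 1, which matches the 81, 121 and 193 values mentioned in this thread; the function names are made up for illustration.

```python
def wan_length(seconds: float, fps: int = 24, stride: int = 4) -> int:
    """Nearest frame count of the form stride*k + 1 for the requested duration.

    The stride*k + 1 pattern is an assumption based on the lengths quoted
    in this thread (81, 121, 193).
    """
    raw_frames = seconds * fps
    k = max(1, round((raw_frames - 1) / stride))
    return stride * k + 1


def duration(length: int, fps: int = 24) -> float:
    """Clip duration in seconds for a given frame count."""
    return length / fps


if __name__ == "__main__":
    for secs in (5, 8):
        n = wan_length(secs)
        print(f"{secs} sec at 24 fps -> length {n} ({duration(n):.2f} sec)")
```

For 8 seconds at 24 fps this gives a length of 193, and for 5 seconds it gives 121, the same values used above.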

Long single shot consistent videos with complex detail are the holy grail, and there isn't any single simple method on our low VRAM (32GB or less) consumer GPUs. Nor will there be. As the author suggests, you can simply attempt to render a much longer sequence than 81 frames, at a rez that will fit into your VRAM (likely to be much lower than you want), and hope you get lucky (the models are only trained on 81 frames).

For an animated figure, you can, of course, use an external controlnet video for the motion, like a character doing a long dance. But you will have the issue of consistency of figure and background. Proper VACE, and blending of clips, will be needed here.

If one does simply generate a very long (say 10sec) low rez video, then v2v upscaling becomes useful as a second process. Wan2.2 is fantastic at this job. BUT you'll obviously need to do this in batches, and then consistency is a massive issue. The trick would be to only upscale every second frame, say, and then use VACE to batch upscale the untouched frames from the data in the previously upscaled frames. Of course, WAN2.2 doesn't have a good VACE solution yet.

Honestly, for most people, using the last frame of a video for the first frame of the next video is the only easy method, and is very simple to do. Use a colormatch node to prevent colour drift. And you can bring the clips into a VACE 2.1 workflow to blend the last 16 frames with the first 16 frames of two consecutive clips to try to give them better motion flow.
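To give an idea of what colour matching does, here is a simplified sketch of mean/std transfer per channel. This is not how the colormatch node is implemented (it may use a different algorithm); the function names and the dict-of-lists frame layout are assumptions chosen to keep the example dependency-free.

```python
from statistics import fmean, pstdev


def match_channel(source, reference):
    """Shift and scale `source` so its mean and std match `reference`."""
    src_mean, ref_mean = fmean(source), fmean(reference)
    src_std, ref_std = pstdev(source), pstdev(reference)
    # A flat source channel has no spread to rescale, so only shift it.
    scale = ref_std / src_std if src_std > 0 else 1.0
    # Clamp to the 0..255 range used for 8-bit colour values.
    return [min(255.0, max(0.0, (v - src_mean) * scale + ref_mean)) for v in source]


def match_frame(frame, reference):
    """frame / reference: dict of channel name -> flat list of 0..255 values."""
    return {ch: match_channel(frame[ch], reference[ch]) for ch in frame}


if __name__ == "__main__":
    drifted = {"r": [10, 20, 30], "g": [50, 50, 50], "b": [0, 100, 200]}
    anchor = {"r": [110, 120, 130], "g": [60, 70, 80], "b": [50, 100, 150]}
    print(match_frame(drifted, anchor))
```

In a clip chain, the reference would typically be a frame from the first clip, so every later clip is pulled back toward the same colour statistics.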

blobby99, I have a 5080 GPU with 16 GB of VRAM and I can comfortably generate 480p videos (I don't see any reason to generate in 720p, though sometimes I generate in 540p) with a length of 121 frames (5 sec). I can generate videos at 193-210 length, but you're right: the local models were trained on 5 sec. videos. I'm also waiting for Wan 2.2 VACE and maybe Wan 2.2 Fusion. Wan 2.2 keeps being developed, so I hope we'll see VACE, FusionX and more LoRAs

Last to first frame is the easiest technique. Then you just concatenate the resulting videos. I like to try different seeds until I find a 5-6 second clip that ends on a good frame. Then upscale + interpolate framerate 2x. And repeat until you have a long video. I have a couple examples of this on my profile. A "good end frame" is one where all the important bits for consistency are still visible. For example, I had a clip where a woman falls backward onto a bed, but the end frame didn't show her face, so in the next clip when she sat up again she was a totally different person haha.
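The last-to-first-frame loop described above can be sketched roughly like this. `generate_clip` is a hypothetical stand-in for whatever i2v workflow you run, and the sketch assumes each generated clip starts with the image it was given, so that duplicate frame is dropped when concatenating.

```python
from typing import Any, Callable, List, Sequence


def chain_clips(
    first_frame: Any,
    generate_clip: Callable[[Any, int], Sequence[Any]],
    seeds: Sequence[int],
) -> List[Any]:
    """Generate one clip per seed, each starting from the previous clip's last frame."""
    video: List[Any] = []
    start = first_frame
    for seed in seeds:
        clip = list(generate_clip(start, seed))
        if not clip:
            raise ValueError("generate_clip returned an empty clip")
        # Clips after the first begin with a frame we already have, so skip it.
        video.extend(clip if not video else clip[1:])
        start = clip[-1]
    return video


def fake_i2v(start: int, seed: int) -> List[int]:
    """Stand-in generator for the demo: frames are just increasing integers."""
    return [start + i for i in range(4)]


if __name__ == "__main__":
    print(chain_clips(0, fake_i2v, seeds=[1, 2, 3]))
```

In practice you would retry a seed until the clip ends on a good frame before moving on, as described above; that manual check is left out of the sketch.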

GasparGames, you're right, the firstlastframe and lastfirstframe techniques are great for creating cool videos