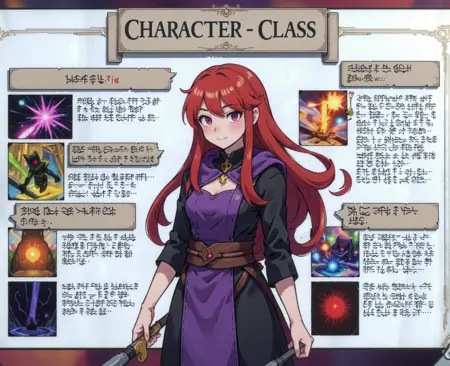

Flat Colour Anime!

A vibrant anime style trained using a high quality dataset captioned in natural language. This is my labour of love and I'm constantly working on improving it.

Please consider following to be notified on updates to this style and many more interesting styles I create ❤️

Important notes below! Please read

Keywords:

Check the version details on the right for the correct trigger words for your version. Currently these are:

"Flat colour anime style image showing" for Flux Dev, Flux Schnell and Z-Image Turbo

"fca_style" for Pony

"fca style" for SDXL

If you don't use the keyword, you won't get the most out of the style.
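If you script your generations, you can avoid the wrong trigger slipping in by looking it up per base model. The sketch below only copies the trigger phrases listed above. The dictionary keys, the `build_prompt` helper and the separators are hypothetical choices for illustration. The comma after the Pony and SDXL tags assumes a tag-style prompt and is not a requirement of the LoRA.

```python
# Trigger phrases copied from the list above. The base-model keys and the
# separators are assumptions for illustration, not part of any official API.
TRIGGERS: dict[str, tuple[str, str]] = {
    # Natural-language trigger: the description continues the sentence.
    "flux-dev": ("Flat colour anime style image showing", " "),
    "flux-schnell": ("Flat colour anime style image showing", " "),
    "z-image-turbo": ("Flat colour anime style image showing", " "),
    # Tag-style triggers: assumed to be followed by comma-separated tags.
    "pony": ("fca_style", ", "),
    "sdxl": ("fca style", ", "),
}


def build_prompt(base_model: str, description: str) -> str:
    """Prefix a prompt with the trigger phrase for the given base model."""
    try:
        trigger, sep = TRIGGERS[base_model.lower()]
    except KeyError:
        raise ValueError(f"unknown base model: {base_model!r}") from None
    return f"{trigger}{sep}{description.strip()}"


print(build_prompt("flux-dev", "a girl reading under a cherry tree"))
print(build_prompt("pony", "1girl, reading, cherry blossoms"))
```

With the Flux and Z-Image triggers, the description simply finishes the sentence "Flat colour anime style image showing ...". That fits the natural-language captions the style was trained on.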

Version Key:

✅ Current

⛔️ Deprecated

Select the version that matches your base model from the top of the page.

Update notes for version 3.4:

Now available for both Flux Dev and Schnell

Longer training time with a lower learning rate

Trained at 1024px instead of 512px

Improved dataset quality

Added regularisation images to the dataset

Description

Tweaked some of the training data and settings. This should hopefully give better style adherence and text rendering.


Comments (14)

I'm gonna preface this by saying I'm still learning how LoRAs work on Flux... But I remember reading captionless training (just using the trigger word) oftentimes generates better quality LoRAs. Have you given it a try? If so, is your captioning system still providing better results?

Yep. All my other LoRAs are captionless with just a trigger word and turn out better than with captions. For some reason this is the only one that works better with detailed captions.

Damn, can't load this and Flux at the same time... not enough system RAM

I was having a similar problem today. I know it's a bit of an extreme solution, but if you have an integrated graphics card on your motherboard as well as your 3090 (I'm guessing?), then plug your monitor cable into the onboard graphics instead and don't have any output on the 3090. This will save just enough VRAM to fit it all in.

@CrasH I've got 24GB of VRAM (3090 Ti, I hope that's enough lol) but I only have 32GB of system RAM (normal RAM)... sometimes I can squeeze a single image out of ComfyUI before it (or in some cases, my browser) gets killed to free up RAM

@Beeb2 Ah yeah it's pretty RAM hungry too. I have 64GB and even then it likes to go right up to the limit sometimes.

@CrasH I hope this huge RAM usage can be fixed eventually... maybe those GGUF versions of Flux could help... I don't really know what I'm talking about 😅. Thanks for the buzz btw

@Beeb2 I think it's the text encoder (the T5 bit) that takes up the RAM. You can try using the fp8 version of that if you're not already. It might affect image quality a little bit though.

@CrasH Unfortunately it still OOMs with the fp8 too

@Beeb2 I use FastFlux GGUF Q8_0 with t5xxl_fp8_e4m3fn.safetensors and, including this LoRA, it only uses 15GB of VRAM

@dagbs How are the results when using this LoRA on Q8? I haven't tested it against any quantised versions of the model yet.

@dagbs I accidentally found out you can change the weight_dtype option to fp8, which presumably casts/truncates the weights to fp8 and uses much less RAM for me. The tutorial I originally watched implied it had to stay on default. I don't know why there is both this option and a separate fp8 version of Flux. While I can load the 16-bit t5xxl, results seem better when it uses the same floating-point format as the model. The results are still a bit different from the ones here.
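Most of the savings discussed in this thread come from how many bits each weight is stored in. The sketch below estimates the size of the diffusion model's weights alone for a few formats. It assumes the 12-billion-parameter figure Black Forest Labs gives for Flux.1 [dev] and ignores activations, the VAE, the LoRA and the text encoder. `BITS_PER_WEIGHT` and `weight_gib` are hypothetical names. The Q8_0 figure follows GGML's block layout: 32 int8 weights plus one fp16 scale per block.

```python
# Approximate storage cost per weight for a few formats.
# Q8_0 (GGML/GGUF): 32 int8 weights + one fp16 scale per block
# -> (32 * 8 + 16) / 32 = 8.5 bits per weight.
BITS_PER_WEIGHT = {
    "bf16": 16.0,
    "fp16": 16.0,
    "fp8_e4m3fn": 8.0,
    "q8_0": 8.5,
}


def weight_gib(num_params: float, fmt: str) -> float:
    """Rough size of the weights alone in GiB (no activations, VAE, LoRA or text encoder)."""
    bits = BITS_PER_WEIGHT[fmt]
    return num_params * bits / 8 / 2**30


FLUX_PARAMS = 12e9  # assumption: Flux.1 [dev] as a 12B-parameter model

for fmt in ("bf16", "fp8_e4m3fn", "q8_0"):
    print(f"{fmt:>11}: {weight_gib(FLUX_PARAMS, fmt):5.1f} GiB")
```

By this estimate, casting to fp8 roughly halves the weight footprint, from about 22.4 GiB in bf16 to about 11.2 GiB. Q8_0 lands slightly above fp8, at about 11.9 GiB, because of its per-block scales. The same function applies to the T5 text encoder if you plug in its parameter count. Actual usage in a UI will be higher once everything else is loaded.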

@Beeb2 You should have another 90GB of swap space (virtual memory) to back that up, but I definitely recommend getting 64GB of RAM because that swap will eat your disk.

very good!!~~~~
