WAS Dieselpunk

A Dieselpunk Faux Textual Inversion Style

If you would like to use this on your Stable Diffusion generation service or merges, please shoot me a message on Discord: WAS#0263

Usage:

Ending your prompt with one of the following segments is probably the best method. The embedding name may differ between versions.

art by WAS-Dieselpunk

art by [(WAS-Dieselpunk):0.5] (Less effect)

art by (WAS-Dieselpunk) (More effect)

art by [((WAS-Dieselpunk)):1.5] (Extreme Ultra Mode)

... etc
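The segments above can also be appended to a prompt programmatically. The following is only a minimal sketch: the helper name `with_style` and the strength labels are made up for illustration and are not part of the embedding or of any WebUI API. It performs plain string assembly and does not interpret the bracket syntax.

```python
# Hypothetical helper for illustration only; not part of any WebUI API.
TOKEN = "WAS-Dieselpunk"  # embedding name; may differ between versions

# Suffix templates copied from the usage segments listed above.
SUFFIXES = {
    "normal": "art by {t}",
    "less": "art by [({t}):0.5]",
    "more": "art by ({t})",
    "extreme": "art by [(({t})):1.5]",
}

def with_style(prompt: str, strength: str = "normal", token: str = TOKEN) -> str:
    """Append the chosen style segment to the end of a prompt."""
    suffix = SUFFIXES[strength].format(t=token)
    # Drop any trailing comma or space so the separator is not doubled.
    return f"{prompt.rstrip(', ')}, {suffix}"

print(with_style("((full body shot)) a giant mech robot", "less"))
```

Keeping the segment at the end of the prompt follows the recommendation above; adjust the token if your copy of the embedding is named differently.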

Example Positive Prompt(s):

The following prompt example uses sd-dynamic-prompts and my Noodle Soup pantry converted for it (it has a tool to automatically download from my NSP repo)

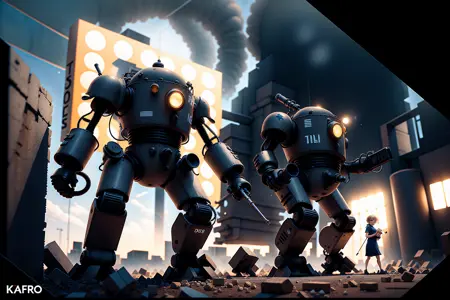

((full body shot)) a giant mech robot, art by WAS-Dieselpunk, good hand, best hand, 4k, high-res, masterpiece, best quality

Example Negative Prompt(s):

NG_DeepNagetive_V1_75T, ((animal face)), paintings, sketches, (worst quality:2), (low quality:2), (normal quality:2), lowres, normal quality

Compatible Models:

TAM_LOFI - TheAlly's Mix III: Revolutions x LOFI (multiplied 0.5 weighted sum)

LOFI

TheAlly's Mix III: Revolutions

Protogen 2.2+

Basically any fine-tune based on vae-ft-mse-840000-ema-pruned or better

Notes:

Model used for previews is "TAM_LOFI" (my name for it) which is a mix of TheAlly's Mix III: Revolutions (for dark outputs) and LOFI model. Multiplied at 0.5 weighted sum.
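For readers who want to build a similar mix themselves, a weighted-sum merge can be sketched as follows. This assumes the common convention `A * (1 - M) + B * M` with multiplier `M = 0.5`; the page does not state which merge tool was used. Real checkpoints hold tensors, so the lists of floats and the parameter names here are stand-ins chosen for illustration.

```python
def weighted_sum(a, b, multiplier=0.5):
    """Blend two checkpoints key by key: a * (1 - m) + b * m."""
    if a.keys() != b.keys():
        raise ValueError("checkpoints must share the same parameter names")
    return {
        name: [x * (1.0 - multiplier) + y * multiplier
               for x, y in zip(a[name], b[name])]
        for name in a
    }

# Toy stand-ins for the two source models (hypothetical values).
tam = {"layer.weight": [0.5, -1.0], "layer.bias": [1.0]}
lofi = {"layer.weight": [1.5, 0.0], "layer.bias": [0.0]}

tam_lofi = weighted_sum(tam, lofi, multiplier=0.5)
print(tam_lofi)
```

At a multiplier of 0.5 each parameter is the plain average of the two models, so neither source dominates the result.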

Has a bias towards orange, so you will need to weight in other colors, e.g. ((red colored)).

❤ Hearts and 🖼️ Reviews let me know you want moarr! :3

Comments (15)

Would it be possible for you to add the prompts to the example images? I often like to try to replicate the results of the example pictures with models/LoRAs/textual inversions I download; it's also an excellent way to learn to make prompts.

He has mentioned that he uses PNG Info in the WebUI, but says to comment on images you are curious about and he may help. He also often uses custom models, so prompts won't help reproduce results. He often uses an Idun model, which isn't on here.

@3DE So in short, the example images are not reliable?

@MiniMage Nope, and considering they're TI's, to be used in any model, it doesn't really matter. You use it in the model you like. These images are made in the model specified under the description no one reads. Which is TheAlly's Mix III Revolutions and LOFI merged at 0.5 weighted sum. It can't be shared because of TheAlly's wishes on no merge shares. So you gotta do it yourself.

@WAS Since I can't replicate the results, I cannot verify whether the example images were done with a single generation. Nor can I determine the strength of the embedding itself, because you supposedly used many other embeddings to create those example images. I'm sure the style will get across, but it does not actually tell me what it'll look like for the person downloading the embedding. Nor does it say whether the example images were tinkered with through extensive inpainting, or touched up in Photoshop at the end. I just don't know.

In short, the pictures look pretty, but they don't really say how I would be able to achieve something like that. I'm not asking for a checkpoint model, but a prompt for each image would be nice. Demonstrating the embedding with a commonly accessible checkpoint model would also do a lot to showcase the strength of the embedding itself.

After all, we're not trying to critique your artistry, which is excellent based on the pictures, but we just want a quick idea of how to at least attempt to replicate the results. It does not mean I won't use the embedding though, but I assume that I will get nothing like the example images, and thus will instead rate the quality based on what I first generate.

@MiniMage I didn't use "many other" anything. You're just saying falsifiable libel now. There are directions. And this is a TI, so you will have to weight it, and your prompt, accordingly in different models to taste. Different models are skewed in entirely different training directions. For example, all the vectors in my TI could point to Waifu nonsense in an incompatible model, where that sector of the network was overtrained with other content.

TI's are designed to help you prompt things base 1.5 doesn't do well; from there on, its use in any other model will be subjective, and have different effects. This has nothing to do with me, my prompts or anything like that. It's the architecture. That's why I list models that worked for me.

@MiniMage y do u want to replicate?

@WAS Well, that's what I believed 3DE said, and I just took that as the truth because I had no way to verify if it was wrong or not. Upon closer inspection, I don't see that part of the comment today though, strange. Anyhow, I had no ability to verify anything. The directions do not refute this possibility either.

@3DE By verifying the prompts used in any of the examples, I will know that it's indeed as advertised and thus gets a natural 5/5 from me. If the results are not reproducible, you generally feel a little cheated and would thus shift the rating to a 4/5 or a 3/5.

@MiniMage TI's are inherently subjective though. Different look in different models, etc, and nothing looks good in base 1.5. I'd share the model I use, and like, and find represents most of my TIs lately the best, but I can't due to the stipulations of the authors. But that's just it though, these TI's look different in different models. @3DE's review has better images than mine imo, and they more closely represent what I imagined. TAM_LOFI kinda adds a polish.

This isn't some sort of research website. It should not be about replicating results at all, but artistic aesthetic and what you like as an artist.

@WAS It's not really about research, let me give you an analogy. Imagine you click on a YouTube cooking video because you see the title say "how to cook authentic noodles", and the preview image is also of authentic noodles. There are vegetables and meat, it looks really pretty. But when the video begins, you get no information about the ingredients. You get informed of the heat and the type of stove used, but no information about the ingredients to cook the dish besides the noodles themselves. They then cut to a few examples of the finished result. The cooking video skips the cooking. The creator of the video can defend himself and state that this is just an example of the cuisine, that this is a matter of taste. That you can add your own ingredients and make your own noodles. What people want is the instructions on what to add and how to prepare them to look like the preview image. That's what made them click it, that's what they wanted to try to make. Without that there is a logical disconnect between what made you click the video and what you get out of it. Annoyance will result, you'll feel a bit cheated.

Generally, it's only when you feel comfortable with it, that experimentation happens.

In your case, you've provided a resource. A good resource, but a resource. The images provided are there to represent your resource, and serve both as examples and advertisement for that resource. The more beautiful they are, the higher rated the resource will be. But if the images can't be replicated, it'll feel like false advertisement. Which will make people rate it with lower values instead. If I judged based on 3DE's results, I would probably rate this resource at 3/5.

@MiniMage But people use the best possible representations of their food, with high quality cameras, vibrancy boosts, DoF focusing, and often, for commercial uses like Food Network, waxed perfect foods, as restaurants or fast food commercials do as well. Additionally, it's a tutorial that you are attempting. You are likely not going to obtain the end result exactly anyway... as you are doing it. No seed to create the exact same thing. You yourself are doing it alone based off simple usage directions like I have already given.

Additionally, again, this is art. Nowhere do you find art supplies being sold with the directions to recreate the branding art they use to sell it. Nowhere is that a thing. It really seems people don't get art, and are more interested in abusing AI art and doing what everyone else is doing. I won't support that, it's likely these models will be illegal to use soon anyway because of their datasets. Don't need to further give it a bad name. That's not art.

@WAS It's not about achieving the exact result, it's about being given the opportunity to try. It's when the user fails spectacularly that the user will ask for help. There might not be much for you to do, maybe a rough pointer in the general direction is what you can do after that. After a certain point, you might simply not be able to help. For the user then, they may still be unable to get the exact result, but they'll get slightly closer. Getting the 1:1 isn't that important; if you've presented the instructions and ingredients and they are still unable, that's a skill issue on their end. The lesson isn't "if at first, you don't succeed, don't try", but "if what you're doing right now isn't working, what's wrong? Check the manual and try again."

The thing is that you're marketing a resource to produce a style. You're not selling pencils, you're selling the potential to produce works at a specific quality and style. The quality of which your resource gets rated for. If you were selling art I would expect your works to be presented at an art gallery. I wouldn't ask an artist how they made the painting in that context. After all, that's not the product you're selling then.

I am grateful for your resource, don't get me wrong, but your resource, your product, is a "how to generate the dieselpunk art style, for dummies". Whether the dummy in the equation is the AI or the user, that's up to interpretation. I'm still capable of guessing, but this how-to for dummies feels inadequate if the user has to. Thus the rating by users tends to fall if the promise you marketed to them is not met. That promise is presented with the example image and the thought in the user's mind: "That is amazing, I want to do that, I want to make something very much like that thing, that would bring me so much joy". That's just basic marketing and you did it beautifully.

Do I understand art? Not sure. I can tell if something is pretty, and I can tell if something looks off. But I do enjoy creating stuff with people that want me to create stuff, people who want to help others with the joy of creation and given the chance, I would like to help others if I am able.

Regarding the suggestions given thus far, they are not so much ingredients as the base trigger words for the embedding to do anything at all. By the analogy of noodles, it's "Apply it to water, add heat, wait". It gets me about a third of the prompt of maybe one of the example images. The level of detail of your works implies far more elaborate prompts.

Also, don't worry. I don't expect AI art to ever be invalidated. Legally it's all greenlit. All those licenses artists tend to sign to be able to submit their works digitally, legally, make the case that anything sufficiently edited will thus be sufficiently transformed and be considered new works. Thus AI, by those licenses alone, is already in the clear. Granted, there are cases that may be scrubbed regardless, such as for example Getty Images and the like. Worst case scenario is that some of those simply get scrubbed. Removal of those from the system at this point wouldn't put a dent in its development, as sufficient material is being submitted by users by the hour. This would easily plug that gap in less than a day. So you don't need to worry about that one.

@MiniMage you seem to know little about laws and copyright. The legality of AI art is very real. That's why 2.x only uses publicly licensed images, and also why they are under a lawsuit for 1.x.

Also it's weird you say it's not about reproducing, and then immediately say it is by "trying".

Like, almost ALL derivatives are based on 1.x, which is the models in question. And then there is the use of other software like CodeFormer and GFPGAN, which are research only and can't be used for commercial uses, because they contain celebrities and real people without consent.

Just distributing a 1.x model is technically unlawful because of the licensed images used to make it, and therefore a derivative of those copyrighted things.

@3DE We should be able to block users. Some people don't deserve the opportunity to use your stuff.

@3DE Well, if I can get like 80% there, I'm already happy. With the current instructions, I get just about 30% there. As WAS stated, 100% is impossible. But the closer people get, the more pleased they get. I asked for ways to get closer, WAS said no, that's about the summary of our little chat.

@WAS If it's easier to dismiss my criticism as being ungrateful, then go with that if that makes you feel better. Anyhow, I'll drop a score with my review later. We don't really have anything else to add to this little discussion, since you assume that my criticism comes out of ungratefulness and/or hostility. If I didn't feel grateful for your contribution, I wouldn't have commented at all in the first place, I would've just grabbed what I wanted in silence.
