Version 3.2

A new version has been released. It's not a significant update, except that the color correction section has been completely disabled for the time being. I haven't been able to improve the speed of that section, so I just turned it off. Anyone doing a fresh install of this workflow should not have any trouble installing all the custom nodes. I replaced the nodes that wouldn't install correctly, so as of today (25 November 2025) everything works.

Tip for users: Wan 2.2 low-noise LoRAs work very well in Wan 2.1 workflows. Since this workflow uses Wan 2.1 Vace, you can use your favorite Wan 2.2 Low Noise LoRA here.

Important credit for this workflow goes to pftq for creating the seamless video extend workflow. That workflow was the first to show me this feature existed and gave the building blocks I used to make this version. The workflow by pftq uses Kijai's Wan Wrapper nodes, so if you prefer to work with the wrapper nodes in order to make use of all the options and add-ons, then by all means give pftq's workflow a try. This workflow uses all native ComfyUI nodes for the Wan processing.

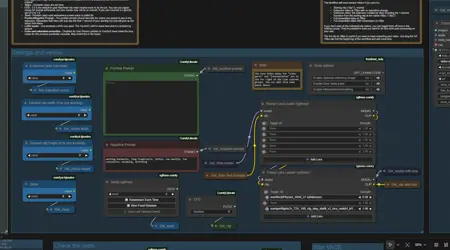

One of my goals with this workflow was to automate the process as much as possible. In order to get Vace to fill in gaps, you must first create two custom videos with masking in the correct places. This is painstaking to do without nice editing software, but the simple extension masks are something ComfyUI can accomplish with the help of a few custom nodes. This workflow is the result of that effort.
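As a rough illustration of what those two videos contain for a simple extension, the sketch below lays out a per-frame plan: the overlap frames taken from the starting clip are kept, and every remaining frame is a placeholder for Vace to fill in. The function name `extension_plan`, the 0 = keep / 1 = generate mask convention, and the 81-frame output length are assumptions made for illustration. They are not values read from the workflow itself.

```python
def extension_plan(overlap_frames, total_frames):
    """Return (control, mask) lists with one entry per output frame.

    control[i] is the index of the overlap frame to copy from the tail of
    the starting clip, or None for a blank placeholder frame.
    mask[i] is 0 where the frame is kept and 1 where new content is generated.
    (Hypothetical helper; the 0/1 convention is an assumption.)
    """
    if not 0 < overlap_frames < total_frames:
        raise ValueError("overlap must be positive and shorter than the output")

    control = []
    mask = []
    for i in range(total_frames):
        if i < overlap_frames:
            control.append(i)     # reuse the i-th overlap frame as-is
            mask.append(0)        # keep: nothing to generate here
        else:
            control.append(None)  # placeholder frame, to be filled in
            mask.append(1)        # generate: this frame is new material
    return control, mask


# Assumed example values: 16 overlap frames, 81 frames in total.
control, mask = extension_plan(overlap_frames=16, total_frames=81)
print(mask.count(0), "kept frames,", mask.count(1), "generated frames")
```

In the workflow this layout is built automatically from the beginning clip, so the sketch is only meant to show which frames are kept and which are generated.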

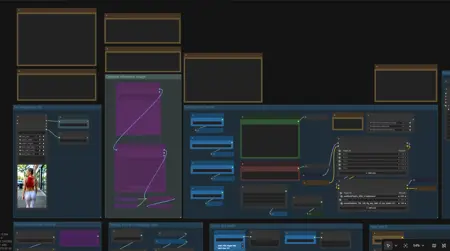

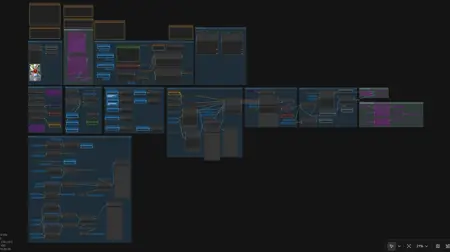

The workflow looks large, but once you've made sure all your models are loading correctly and your video save paths are set the way you like, you should only need to use the three groups across the top-left. You set your beginning clip, you set other values like steps and so on, then you run the workflow and check your output. All of the masking, resizing, and frame interpolation/smoothing is automated.

This extend video workflow is based on my earlier Wan Join Clips workflow. This simplified version is designed to extend a video using Wan with Vace, starting from a beginning clip.

All of the machinery is exposed in all the other groups below, so feel free to mess with anything you like. You will find a few settings buried inside that you may want to tweak, like the Model Sampling Shift value, for example.

On my machine, using the default values given here, my 16 GB VRAM card can load and run this workflow without using any shared GPU memory and without block swapping. It usually runs at a steady 14.2 GB, though your mileage may vary.

I hope you enjoy.

Description

Removed/Disabled color/saturation correction. See notes inside workflow.

Replaced nodes that are missing or broken in new installations of ComfyUI.

Comments (4)

I am getting a transition that shows grey and the extended content appears to have zero knowledge of the reference video? All I did was adjust inputs (reference video, and ensure all model files in place) and check the final output changing nothing else...

I tested the workflow using the downloadable .json and putting a new video in place of my original sample and I didn't have any errors. I can think of a couple of places where you might run into trouble...

1 - For some reason (I forget why at this point) I am forcing the frame rate to 16 for all the math. If you plan to use a different frame rate, then the frame calculation may be off from what you expect. If you want to change this value, in the group called "Getting info and prepping clips" you'll see an input widget called "Force FPS to 16". Just change that number if you need a new fps.

2 - This workflow expects to use a full 1 second of overlap frames to generate smooth continuous motion from the first clip to the new material. If your starting clip is too short (less than the default 16 frames), then you will probably get a weird result because the workflow will make a 1-second overlap no matter what, even if you don't have that many frames to fill it with. You could change this part, but it would take some editing and would be a little complicated. It's probably easier to use a longer starting clip at that point.

I think number 2 is probably what's going on, but you didn't say anything about your source clip so I don't know.
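The frame math behind points 1 and 2 can be sketched roughly as follows. This is only an illustration of the arithmetic described above (a forced frame rate times a 1-second overlap), not the workflow's actual node logic. The function names and parameters are hypothetical.

```python
def overlap_frame_count(fps=16, overlap_seconds=1.0):
    """Number of frames needed to cover the overlap at the forced frame rate."""
    return round(fps * overlap_seconds)


def check_start_clip(clip_frames, fps=16, overlap_seconds=1.0):
    """Return (is_long_enough, frames_needed) for a starting clip.

    A clip shorter than the overlap cannot fill it, which is the
    situation described in point 2.
    """
    needed = overlap_frame_count(fps, overlap_seconds)
    return clip_frames >= needed, needed


# A 10-frame clip at the default 16 fps is too short for a 1-second overlap.
ok, needed = check_start_clip(clip_frames=10)
print(ok, needed)
```

If you change the forced fps, the number of overlap frames changes with it, so a clip that was long enough at 16 fps may be too short at a higher rate.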

@darkroast175696 Is it because I am trying to extend a video without providing a motion control layer? I'm trying to just extend via the prompt descriptions. It feels like this way the LoRAs are just "dreaming" where the white frames are, and it isn't related to the overlap frames.

Could you maybe include example input files for the workflow?

@snake88 You shouldn't need a motion control layer. You're basically just doing "first frame last frame" kind of stuff with Vace, except you only have the "first frame", and you use multiple frames instead of one in order to get smooth motion. I posted an article about using these tools for transformation videos and I added a zip file with the clips and the workflows embedded. I don't remember if the original image/video clip is there, but I will check. Also, those workflows are now out of date because of changes in ComfyUI, so be prepared for some broken nodes if you open them. You'll be better off using those workflows as reference points and copying the prompt and settings into your own more recent workflow.

https://civitai.com/articles/16981/using-wan-with-vace-to-make-a-transformation-video