Credit for this workflow goes to pftq, who created the seamless video extend workflow. That workflow was the first to show me this feature existed, and it gave me the building blocks I used to make this version. pftq's workflow uses Kijai's Wan Wrapper nodes, so if you prefer working with the wrapper nodes to get all their options and add-ons, then by all means give pftq's workflow a try. This workflow uses only native ComfyUI nodes for the Wan processing.

This I2V workflow is based on my earlier Wan Join Clips workflow. This simplified version is designed to extend a video using Wan with Vace based on a single beginning frame. Yes, I know Wan Image-to-Video already exists, but the results you get from using the Vace system can be quite different, so I think this is a useful tool.

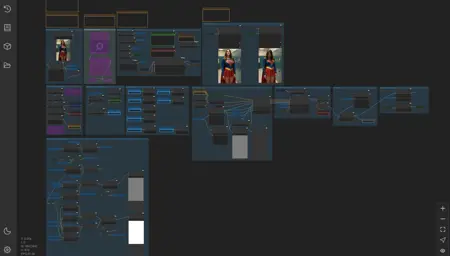

The workflow looks large, but once you've made sure all your models are loading correctly and your video save paths are set the way you like, you should only need to use the three groups across the top-left. You set your initial image (and reference image, if you like), you set other values like steps and so on, then you run the workflow and check your output. All of the masking, resizing, and frame interpolation/smoothing is automated.
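For readers curious about what the automated masking does, here is a minimal Python sketch of the idea under stated assumptions: Vace receives a control video in which known frames hold real pixels and frames to be generated are filled with neutral gray, plus a mask where 1 means "generate". The `Frame` type, the `GRAY` value of 0.5, and the per-frame (rather than per-pixel) mask are illustrative simplifications, not the workflow's actual nodes.

```python
from typing import List, Tuple

# Hypothetical frame representation: a flattened H*W*3 list of values in [0, 1].
Frame = List[float]

# Assumption: neutral gray marks the frames Vace should fill in.
GRAY = 0.5


def build_i2v_control(start: Frame, num_frames: int) -> Tuple[List[Frame], List[float]]:
    """Turn one start image into a Vace-style control clip and per-frame mask.

    Frame 0 keeps the start image (mask 0.0 = keep); every later frame is a
    gray placeholder the model is asked to generate (mask 1.0 = generate).
    """
    if num_frames < 2:
        raise ValueError("need the start frame plus at least one generated frame")
    placeholder = [GRAY] * len(start)
    control = [list(start)] + [list(placeholder) for _ in range(num_frames - 1)]
    masks = [0.0] + [1.0] * (num_frames - 1)
    return control, masks


if __name__ == "__main__":
    control, masks = build_i2v_control([0.2, 0.4, 0.6], num_frames=81)
    print(len(control), masks[:3])  # 81 [0.0, 1.0, 1.0]
```

In other words, the single image becomes a one-frame "video" followed by blank frames, and the mask tells the model which frames are fixed.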

The rest of the machinery is exposed in the groups below, so feel free to change anything you like. A few settings buried in there are worth tweaking, such as the Model Sampling Shift value or the interpolation settings.

On my machine, using the default values given here, my 16 GB VRAM card can load and run this workflow without using any shared GPU memory and without block swapping. It usually runs at a steady 14.2 GB, though your mileage may vary.

I hope you enjoy it.


Comments (35)

This took 2h 35min to create a 5-second video on my 16 GB VRAM card. That must be some kind of record, though not a good one. I have to admit that it looked pretty good.

Wow. That's... a lot. I have a 5070 Ti with 16 GB, and this workflow usually takes about 5 minutes to make a 5-second 480x720 clip with causvid at 10 steps.

That said, I've noticed that with Wan in general, not just this workflow, if I change resolutions or switch to a different tab to run a generation (anything small like that), it doesn't clear the card's cache from the last job, and it spills into shared VRAM, which bogs everything down to the level you're talking about.

Now I keep a performance monitor open on my computer to show GPU stats like dedicated and shared VRAM usage. If my system starts using shared VRAM at all, I stop the task and use the icon in ComfyUI that unloads the models from the video card. That almost always fixes it.

I hope that's the problem in your case because it's an easy fix.

@darkroast175696 I have to admit that I read your description and did as you said: set my models, added an image, and off we went. Now I'm going to take my time, add the causvid LoRA, reduce the resolution and steps and so on, and see if it gets better. I'll update my comment with the results.

@skyrimer3d Please do let me know how it goes. The workflow has been reliable for me, but I don't know about your hardware. Don't forget to set CFG to 1.0 when you use causvid. You can even try 8 steps and a low res like 320x480 just to test. I hope it works for you.

So I checked this with the causvid / lightx LoRAs, CFG 1 / 2, 8-10 steps. It's incredibly fast, but the videos look pretty bad: the colors and the starting image show a lot of distortion in the environment and faces. There may be more to tweak to improve it, but for now it's not too good, although the speed is great now. Should I change the LoRA strength to something other than 1?

@skyrimer3d Some settings and options for you to confirm and/or try...

Source image: crop to dimensions giving you an easy square or 2:3 ratio

Extension time: 5 or 6

Clip height: 720, but may depend on your source image

Steps: 8

CFG: 1.0

LoRA: causvid at 1.0, no other lora for now

Are you using version 2 of causvid? It's much better than version 1.

Also, I've been using the GGUF for Wan/Vace: Wan2.1-VACE-14B-Q4_K_M.gguf. I do NOT use sage_attention. I use the gguf clip loader for umt5_xxl_fp16.safetensors.

Then I use uni_pc/normal in my KSampler with a ModelSamplingSD3 setting of 6.0.
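The extension time above is given in seconds, while Wan works in frames. As I understand Wan 2.1 (this is not stated in the thread), its VAE compresses time by a factor of 4, so clip lengths are normally 4k + 1 frames, with 81 frames at 16fps being the usual ~5-second default. Below is a small sketch of that conversion, with the settings suggested in this reply collected in one place; `frames_for_seconds` and `SUGGESTED` are illustrative names, not workflow nodes.

```python
# Settings suggested in the reply above (causvid v2 LoRA at strength 1.0).
SUGGESTED = {
    "steps": 8,
    "cfg": 1.0,
    "sampler": "uni_pc",
    "scheduler": "normal",
    "model_sampling_shift": 6.0,
    "clip_height": 720,
}

FPS = 16  # Wan 2.1 output frame rate used throughout this thread


def frames_for_seconds(seconds: float, fps: int = FPS) -> int:
    """Convert seconds to a 4k + 1 frame count (assumed Wan constraint).

    Rounds seconds * fps to the nearest multiple of 4, then adds the first frame.
    """
    raw = round(seconds * fps)
    k = max(1, round(raw / 4))
    return 4 * k + 1


if __name__ == "__main__":
    for s in (5, 6):
        print(s, "s ->", frames_for_seconds(s), "frames")  # 81 and 97 frames
```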

darkroast175696 I was already getting good results with the FusionX LoRA, but it took much longer than causvid, which makes the same 5-second video in 2-3 minutes or so.

Your suggested settings worked great. The key part for me, other than the config, was version 2 of causvid: my first video with the original causvid looked OK-ish, but v2 looks great, with no weird colors or face deformation, so this is looking promising. I've also added a block swap node, which always helps me avoid OOM errors, so I'm quite happy right now with this amazing workflow.

One question: I see 30+ second videos as examples here. Any tips for getting videos that long, or do I just set the extension time to 30 and try my luck?

skyrimer3d I'm really glad it's working for you. Be sure to drop me a link if you post something so I can check it out.

My longer videos were extended multiple times with my other workflow, Wan Extend. You can take the 16fps output from this workflow and feed it into the extend workflow to add another several seconds. I recommend 4 to 6 seconds per extension because Wan works best in that range. Then rinse and repeat: when you're happy with the next step, take the "complete" 16fps clip and use it as the source to keep extending.
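The rinse-and-repeat process above can be planned ahead. This hypothetical helper lists the running clip length after each extension pass, using 5-second passes (the middle of the 4 to 6 second range recommended here). It assumes each pass adds its full duration; if the extend workflow reuses trailing frames as context, the real total will come out a little shorter.

```python
from typing import List


def plan_extensions(start_s: float, target_s: float, step_s: float = 5.0) -> List[float]:
    """Return the clip length (seconds) after each extension pass.

    Each pass adds step_s seconds, except the last, which adds only what is
    left to reach target_s. Overlap between passes is not modeled.
    """
    if step_s <= 0:
        raise ValueError("step_s must be positive")
    lengths: List[float] = []
    current = float(start_s)
    while current < target_s:
        current += min(step_s, target_s - current)
        lengths.append(current)
    return lengths


if __name__ == "__main__":
    # A 5 s image-to-video clip extended toward ~30 s in 5 s passes.
    print(plan_extensions(5, 30))  # [10.0, 15.0, 20.0, 25.0, 30.0]
```

Note that an awkward target can leave a very short final pass (5 s toward 32 s ends with a 2-second pass), so rounding the target to a multiple of the pass length keeps every pass in Wan's comfortable range.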

I published an article about making transformation videos that explains this process.

Good luck!

darkroast175696 Thanks, I'll look into this, and thanks for providing these amazing workflows :)

So if I'm understanding this, it's basically an img2vid that lets you bookend another image as the last frame? But it does it in a way that, for reasons beyond my inexperience, works better? Sounds amazing.

DISREGARD: I think I understand. You use this workflow to make the img2vid, then the Video Extender to add footage. So many applications. I have a bunch of ideas I was forced to shelve that could be realized with this, once I get this personal life stuff out of the way!

Yes, to your extra comment at the end. That's exactly what I've been doing. The video extend can work repeatedly to keep adding more clips, but it helps to start things off with a really good starting image or video clip. If you can't or didn't want to make a whole clip to start with then this workflow lets you get going with just one image. The workflow just turns that image into a one frame video and away you go.

I published an article today about making transformation style videos and it walks through this process.

@darkroast175696 That's amazing! You could make whole movies, not just 3 second clips! You can splice like 7 or 8 doing one thing, then 3 or 4 with a progression in the action, then keep doing that! Consider my mind boggled!

@JamesBondage69 It's worked pretty well for me so far. I published a few videos today that were around 30 seconds long, each made from one image then extended again and again. Different LoRAs and prompts at each stage to move the video along in the direction I wanted. Sometimes it took several tries to get the next stage the way I wanted it, but at least I have some control over the steering wheel unlike with Kling or whatever where I'm just hoping and spending credits. I hope you get a chance to give it a try and have fun with it!

darkroast175696 You ain't kiddin'. Those are some great videos, and just what I'm talking about! In fact, BE+Bimbofication were on my list of ideas I can take off the shelf for this, a theme that is well served by scripted videos spliced together for a more cohesive narrative. Plus, this is on top of your She-Hulk model (quite a She-Hulk fetish over here), I can make Jenn turn into She-Hulk, then get Bimbofied, huge tits and all, pop a few buttons on her jacket, maybe blonde hair but I prefer dark, by some villain's bimbo ray, then sexy shenanigans ensue.

Is it possible to modify this to make a perfect loop?

For that job, I would probably just use the native Wan first/last image process. I can't remember the acronym right now; it's the one where you can set the beginning and end images. Just set the same image for both and describe an action for the middle. If you've already tried that and it didn't work, then I would guess you could make something similar using Vace. I already have the video join workflow, and this image-to-video workflow is based on it, so a similar process could add the start image onto the end, and that might encourage Vace to give you a loop.

If I were making that with this workflow, I would find the part where it assembles the masked video and add another "add two image batches together" node after the mask is made. Then you take the already built batch from the workflow and add your starting image batch onto the end of it, then send THAT output to the rest of the workflow. I don't know if it would work, but that's how I would start.
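Here is a rough sketch of that idea, assuming the same layout as an image-to-video run (real start frame followed by gray frames to generate) with the start image appended again as the last frame, so Vace is asked to travel back to where it began. Frames are modeled as flat lists and the function name is hypothetical; in the actual workflow this would be done with image batch nodes rather than Python.

```python
from typing import List, Tuple

Frame = List[float]  # hypothetical flattened H*W*3 frame, values in [0, 1]
GRAY = 0.5  # assumed neutral "fill this in" value


def build_loop_control(start: Frame, num_frames: int) -> Tuple[List[Frame], List[float]]:
    """Start image, gray placeholders, then the start image again.

    Masks: 0.0 keeps a frame, 1.0 asks the model to generate it.
    """
    if num_frames < 3:
        raise ValueError("need a start frame, an end frame, and something in between")
    middle = num_frames - 2
    control = [list(start)] + [[GRAY] * len(start) for _ in range(middle)] + [list(start)]
    masks = [0.0] + [1.0] * middle + [0.0]
    return control, masks


if __name__ == "__main__":
    control, masks = build_loop_control([0.1, 0.9], num_frames=81)
    print(control[0] == control[-1], masks[0], masks[-1])  # True 0.0 0.0
```

When playing the result as a loop, dropping the final frame avoids showing the start image twice in a row at the seam.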

@darkroast175696 Thank you for the in-depth response. I think I see the pattern.

@makiaevelio543 I actually had another idea, but I want to try it before I try typing it out. If it works, I can just post the result and you can get the workflow from there.

@makiaevelio543 Ok, I have a simple example working.

Step 1: make a 1-second video at 16fps.

Step 2: Using the workflow embedded in my sample clip, load your 1-second video as the starting image.

Step 3: Write a prompt and choose a duration for the video. Run the workflow.

The loop clips are saved in separate video save nodes next to the main rendered videos box. It saves an .mp4 and a .gif, both at 16fps. If you want smoothing, you will have to edit the workflow or do the smoothing yourself.
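If you do the smoothing yourself, the frame arithmetic is simple: doubling 16fps to 32fps inserts one new frame between each neighboring pair, so n frames become 2n - 1. The sketch below uses plain linear blending as a stand-in; a real frame interpolation model estimates motion rather than averaging pixels, so treat this only as an illustration of the frame bookkeeping. The function name and frame type are hypothetical.

```python
from typing import List

Frame = List[float]  # hypothetical flattened frame, values in [0, 1]


def blend_interpolate(frames: List[Frame]) -> List[Frame]:
    """Double the frame rate by inserting the average of each neighboring pair."""
    if len(frames) < 2:
        return [list(f) for f in frames]
    out: List[Frame] = []
    for a, b in zip(frames, frames[1:]):
        out.append(list(a))
        out.append([(x + y) / 2 for x, y in zip(a, b)])
    out.append(list(frames[-1]))
    return out


if __name__ == "__main__":
    clip = [[float(i)] for i in range(81)]  # 81 frames, about 5 s at 16fps
    print(len(blend_interpolate(clip)))  # 161 frames, about 5 s at 32fps
```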

If you want to make a much longer video using multiple extended clips, that's a more complex workflow that I haven't tried yet, but it should be possible. This example will be a simple one to get you started, though.

See video clip posted here: https://civitai.com/images/88667625

@makiaevelio543 OK, so it turns out it wasn't as complex as I thought. I've posted a new video with an embedded workflow: https://civitai.com/images/88675275

This workflow takes a video of any length and adds a short extra clip to make it loop smoothly. It uses the first second of your original video as the target. It's up to you to write a prompt and choose a duration for the extension clip that gives Wan a chance to make your video wrap around nicely.

In your original video, you will need to keep track of the background and other details. If the end of the clip is too different from the beginning, you will get some warping and weird effects. My demo uses a plain background and motions that are easy to loop. Just keep that in mind.
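A sketch of how such a loop bridge could be laid out. The first second (16 frames at 16fps) of the original is the target, as described above; the context frames taken from the end of the original, the `context_frames` default of 8, and the 4k + 1 length check are assumptions about a typical Vace bridge setup, not details confirmed for this workflow. `build_loop_bridge` is a hypothetical name, and frames can be any objects.

```python
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")  # any frame representation


def build_loop_bridge(
    video: Sequence[T], gray: T, gap_frames: int, context_frames: int = 8, fps: int = 16
) -> Tuple[List[T], List[float]]:
    """Lay out [end of video | gray gap | first second of video] with keep/generate masks."""
    if len(video) < context_frames + fps:
        raise ValueError("video too short for the requested context and target")
    context = list(video[-context_frames:])  # assumed: motion context from the end
    target = list(video[:fps])  # the first second is the loop target
    control = context + [gray] * gap_frames + target
    if (len(control) - 1) % 4 != 0:
        raise ValueError("total length should be 4k + 1 frames; adjust gap_frames")
    masks = [0.0] * len(context) + [1.0] * gap_frames + [0.0] * len(target)
    return control, masks


if __name__ == "__main__":
    video = list(range(48))  # stand-in frames numbered 0..47
    control, masks = build_loop_bridge(video, gray=-1, gap_frames=9)
    print(len(control), masks.count(1.0))  # 33 9
```

After generation, only the gap frames would need to be appended to the end of the original video, since the context and target frames already exist in it.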

So now, you can make a video as long as you want (like using my Wan Vace Extend Video workflow for example) and then you throw it into this special workflow to make it loop.

Have fun! And let me know if you make anything with it so I can see it.

darkroast175696 I will absolutely try this out and see if I can get it to work

OK, so I installed all the missing nodes through the Manager and tried (and failed) to understand the concept. I mean, I kind of understand it, I just don't see it in the nodes.

So I put a random Civitai video in Load Video, put a random reference image of a lady in the reference image, hit run, and now it's going for 25 steps with an expected time of 1h30+. What did I do wrong? The step count seems too high, right?

OK, I spotted that you already have the Lightx and causvid LoRAs in the power loader; they're just greyed out by default, so this answers my question :)

fouchardmilcoupes311 Yes, and also you will want to adjust steps and cfg (8 and 1.0 works well) when you use causvid. I've had better luck with causvid 2 than lightx2v but that might be a matter of taste. You can play with both.

Also, this is an image-to-video workflow, so you would start with an image, not a video at first. Did you mean to put this comment in the other workflow? (Wan Vace Video Extend, the one that adds more video to an existing clip)

One other performance note... I use a 16gb video card and can't go any higher than 640x960 pixels with Vace or I run out of memory. I haven't tried the block swapping tricks from other Kijai wrapper workflows because I prefer the simpler native nodes and they generally work for me. So depending on your video hardware, you may need to pay close attention to the dimensions of the video clips you use.
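One way to respect a memory ceiling like that is to treat it as a pixel budget and scale each source down to fit while keeping its aspect ratio. Below is a hypothetical helper, assuming a budget of 640x960 pixels (the limit reported here for a 16 GB card) and assuming Wan wants both sides to be multiples of 16; other cards and settings will have different limits.

```python
import math
from typing import Tuple


def fit_resolution(
    src_w: int, src_h: int, max_pixels: int = 640 * 960, multiple: int = 16
) -> Tuple[int, int]:
    """Scale (src_w, src_h) down to about max_pixels, keeping aspect ratio.

    Both sides are floored to a multiple of `multiple`, which normally keeps
    the area within the budget. Sources already under budget are only floored.
    """
    scale = min(1.0, math.sqrt(max_pixels / (src_w * src_h)))
    w = max(multiple, int(src_w * scale) // multiple * multiple)
    h = max(multiple, int(src_h * scale) // multiple * multiple)
    return w, h


if __name__ == "__main__":
    print(fit_resolution(1024, 1536))  # (640, 960)
    print(fit_resolution(1920, 1080))
```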

darkroast175696 I believe there is native block swapping now, no? Not sure how it works with Wan Vace, but it does let you push the model through the usual OOM scenarios, so it's worth a try. I have 24 GB of VRAM and I'm already at 21.8 out of 24 GB used in my test run, so I would need it too to push to 1280x720, for example.

OK, the native block swap node is called WanVideoBlockSwap. You apply it just after the GGUF or model loader. 20 blocks is recommended, and you can push to 40 for a bigger gain (though I believe it makes things slower). Set all the other parameters to true and it should work. I'll give it a try after my first test completes...
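For a rough feel of what the block count does: block swapping keeps some transformer blocks' weights in system RAM and moves them to the GPU only when needed, so the weight memory left on the card falls roughly in proportion to the number of blocks swapped. The sketch below is a back-of-the-envelope estimate under assumptions: a hypothetical `block_share` for how much of the model file is transformer blocks, evenly sized blocks, and 40 blocks assumed for Wan 2.1 14B. It ignores activations, latents, and the text encoder, which is one reason measured VRAM may not drop as much as this suggests.

```python
def resident_weight_gb(
    model_gb: float, num_blocks: int, blocks_swapped: int, block_share: float = 0.95
) -> float:
    """Estimate model weight memory (GB) still on the GPU after block swapping.

    Assumes block_share of the model file is split evenly across num_blocks
    transformer blocks; everything else stays on the GPU.
    """
    if not 0 <= blocks_swapped <= num_blocks:
        raise ValueError("blocks_swapped must be between 0 and num_blocks")
    per_block_gb = model_gb * block_share / num_blocks
    return model_gb - per_block_gb * blocks_swapped


if __name__ == "__main__":
    # Illustrative inputs only, not measurements: a 12 GB model file, 40 blocks.
    for swapped in (0, 20, 40):
        print(swapped, round(resident_weight_gb(12.0, 40, swapped), 2))
```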

fouchardmilcoupes311 thanks for the info, I'll have to give that a try sometime soon. Meanwhile drop a comment and let me know if the workflow is working better with the adjusted settings.

darkroast175696 My first test (the one lasting 1h30) was a disaster, mostly because I didn't give it any prompt and used a reference image that didn't go well with the base video, but otherwise it worked. Now, with the adjusted settings, it works OK so far. I tried block swap, but it refused and gave me an error at the KSampler node saying not (something). I suspect block swapping just before sageattention may be the issue; I'll test it a different way later.

Or maybe Vace needs a specific block swap that the native Wan one doesn't cover.

Edit: Block swap seems to work with 20 blocks when I disable sageattention and torch fp16 accumulation and untick the img_emb option in the block swap node. Honestly, though, it seems to use as much VRAM as without it, so maybe it's not really working...

darkroast175696 My current problem is that the video generated to fill the gap is way oversaturated and almost cartoony compared to the base video, which really makes it useless in this form. I suspect there's a setting to find with lightx2v and/or causvid, but so far, after 3-4 tests, no luck. Going to 8 steps gave slightly better results than 6 steps, but overall it's still quite bad because of the stark difference in contrast, saturation, etc.

fouchardmilcoupes311 I'm having trouble figuring out from your comments which workflow you're using. image-to-video, video extend, or join clips?

darkroast175696 I didn't even know there was more than one workflow here. Apparently this one takes a video, adds 5 seconds of gray extension to it, then makes a video to fill that gap and joins them back into a final video.

fouchardmilcoupes311 I meant...

https://civitai.com/models/1778962/wan-vace-image-to-video

https://civitai.com/models/1778987/wan-vace-extend-video

https://civitai.com/models/1695320/wan-join-clips

They each do different things. If you have a picture, then image-to-video. If you have one video and want it longer, then extend video. If you have two videos and want a smooth transition clip to join them, then join clips. Which are you using?

darkroast175696 I'm on the Wan Vace Extend Video JSON, but thanks for the tip. Wan Join Clips seems like the most fun one; I'll test it next.

fouchardmilcoupes311 I'm about to get up and leave the computer for a bit, so this is my last reply for a while. The join clips workflow includes color correction because I found the two source clips often didn't have the same color balance, so some correction helped make the whole result consistent. Auto color correction isn't perfect, though, so some clips may end up worse because of it. In the video extend workflow, I have seen saturation sometimes increase a bit over time, but I only really notice it after two or three extensions. If you see a lot of extra saturation right away, then that's different from what I've experienced. Maybe more steps would correct it? I don't know.
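The thread doesn't say which color-correction method the join clips workflow uses. As a stand-in, here is a sketch of one common simple technique, per-channel mean and standard deviation matching, which pulls a frame's color balance toward a reference frame. Pixels are modeled as (r, g, b) tuples in [0, 1], and `match_color` is a hypothetical name. Like any automatic correction, it can make things worse when the reference frame's content differs a lot from the frame being corrected.

```python
from statistics import fmean, pstdev
from typing import List, Sequence, Tuple

Pixel = Tuple[float, float, float]  # (r, g, b) in [0, 1]


def match_color(pixels: Sequence[Pixel], reference: Sequence[Pixel]) -> List[Pixel]:
    """Shift and scale each channel of `pixels` to match the mean and spread of `reference`."""
    channels = []
    for c in range(3):
        src = [p[c] for p in pixels]
        ref = [p[c] for p in reference]
        src_mean, src_sd = fmean(src), pstdev(src)
        ref_mean, ref_sd = fmean(ref), pstdev(ref)
        gain = ref_sd / src_sd if src_sd > 0 else 1.0  # flat channel: shift only
        # Re-center, rescale, then clamp back into the valid range.
        channels.append([min(1.0, max(0.0, (v - src_mean) * gain + ref_mean)) for v in src])
    return [(r, g, b) for r, g, b in zip(*channels)]
```

Applied frame by frame against a fixed reference frame, this kind of matching would tend to counter gradual saturation creep, at the cost of also flattening intentional lighting changes.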

darkroast175696 Yeah, I think it's causvid, which is known to cause oversaturated/overexposed results. Maybe using only lightx2v would help? No idea, I'll check later. Anyway, thanks for the reply. The workflow seems like loads of fun; I'm just not getting amazing results so far, probably because I'm using some bad settings, but the potential is there.