Attention!!! PLEASE READ TEXT BELOW!!!

The Chinese version is further down.

The Japanese version is further down.

The LoRA model uploaded here was not created by me, but by jappww (https://civarchive.com/user/jappww).

I uploaded it here only as an example, because I noticed this effect while trying to use his Zero Two (02) model.

Many of you may already know the following, but I would like to briefly explain how prompt order affects results, for the many people who are not yet aware of it.

The conclusion is that the order in which prompts are arranged has a significant impact on the generated image (especially in Hires.Fix mode).

Here are some examples to show what I mean.

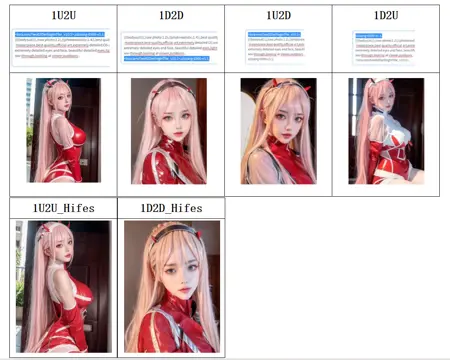

All the pictures shown here were generated with the same parameters and the same seed; only the prompt order differs.

When the appearance-related prompts are placed at the bottom (1D2D), the image generated by Hires.Fix is very different from the original image, while placing the appearance-related prompts at the top (1U2U) keeps the generated image fairly close to the original.

Clearly, in image generation (especially in Hires.Fix mode), prompt order has a marked impact on the output. When appearance-related prompts are placed at the beginning, the generated image closely matches the model. When they are placed at the end, however, Hires.Fix refines the image based on the initial 8K and 4K keywords, so the result looks significantly different from the original image before processing.

This leads to the conclusion that when using appearance-related LoRA models, especially in Hires.Fix mode, it is best to place all of them at the beginning of the prompt. Personally, I suggest the following order:

1. The LoRA model of a specific character, such as the Zero Two LoRA model in this example.

2. Overall optimization models, such as the famous ulzzang-6500 (https://civarchive.com/models/8109/ulzzang-6500-korean-doll-aesthetic), xxdoll, etc.

3. The rest of the models, sorted by importance.
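The ordering above can be sketched as a small helper that sorts prompt fragments by priority before joining them. This is only an illustration: the `PromptPart` type, the priority numbers, and the example fragment texts are all hypothetical and are not taken from the example images.

```python
from dataclasses import dataclass


@dataclass
class PromptPart:
    text: str      # a prompt fragment, e.g. the keywords that go with one model
    priority: int  # lower number = placed earlier in the prompt


def build_prompt(parts):
    """Join the fragments so that the most important ones come first."""
    ordered = sorted(parts, key=lambda part: part.priority)
    return ", ".join(part.text for part in ordered)


# Hypothetical fragments, listed in an arbitrary order on purpose.
parts = [
    PromptPart("8k, 4k, best quality", priority=3),        # everything else
    PromptPart("zero two, pink hair, horns", priority=1),  # specific character
    PromptPart("ulzzang-6500", priority=2),                # overall look
]

print(build_prompt(parts))
```

Keeping the priority next to each fragment makes it easy to rerun the same seed with a different ordering and compare the results.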

I hope this tutorial is helpful to you~ Go create your own art~

===========

2023/3/11

After a reminder from kmlau (https://civarchive.com/user/kmlau), I found that the placement of the <Lora> model does not affect the generated image. That is, when Stable Diffusion reads the prompt, it skips over the six characters of "<lora>" ("<", "l", "o", ..., ">") as if they were blank. Note, however, that any other symbol attached to it, such as the comma in "<lora>,", does affect the result.

For example,

<lora>prompt_A, prompt_B, prompt_C,

and

prompt_A, prompt_B, prompt_C, <lora>

and

prompt_A<lora>, prompt_B, prompt_C,

and even

prompt_A, prom<lora>pt_B, prompt_C,

will all produce exactly the same results.

However,

<lora>prompt_A, prompt_B, prompt_C,

and

<lora>, prompt_A, prompt_B, prompt_C,

are different. The problem in the earlier example (from a few days ago) was caused by the comma in the prompt, which affects the final result; this is also why the earlier example images changed.

Apart from the <Lora> point, I have found no significant problems with the rest of the summary. If I find other mistakes, I will keep editing this page.
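A minimal sketch of the behavior described above, assuming the web UI deletes each LoRA tag from the prompt text before the rest reaches the model. The regular expression, the `strip_lora_tags` helper, and the tag name and weight are illustrative assumptions, not the web UI's actual code.

```python
import re

# Hypothetical pattern for tags such as <lora:zeroTwo:0.8>.
# The real parser may differ; this only illustrates the idea.
LORA_TAG = re.compile(r"<lora:[^>]*>")


def strip_lora_tags(prompt):
    """Return the text that is left once every <lora:...> tag is removed."""
    return LORA_TAG.sub("", prompt)


tag = "<lora:zeroTwo:0.8>"  # illustrative tag name and weight

# The tag is moved around, but no extra characters are added next to it.
same = [
    f"{tag}prompt_A, prompt_B, prompt_C,",
    f"prompt_A{tag}, prompt_B, prompt_C,",
    f"prompt_A, prom{tag}pt_B, prompt_C,",
]

# Here a comma and a space are added after the tag, and they stay behind.
different = f"{tag}, prompt_A, prompt_B, prompt_C,"

for prompt in same + [different]:
    print(repr(strip_lora_tags(prompt)))
```

Under that assumption, the first three prompts reduce to the same text, while the last one keeps a leading comma, so the model receives a different prompt.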

===========

==============================================

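Attention!!! Please read the following carefully!!!

The LoRA model uploaded here was not created by me, but by jappww (https://civarchive.com/user/jappww). I noticed this effect while trying to use his Zero Two 02 model, so I am uploading it here only as an example.

TL;DR:

When generating images, put the most important prompts first (in this example, the 02 LoRA model). See the images for the actual difference.

Detailed version:

You may already know the following, but I would like to briefly explain how prompt order affects results, for the many people who are not yet aware of it.

The conclusion is that the order in which prompts are arranged has a huge impact on the image output (especially in Hires.Fix mode).

Next, I will give some examples to illustrate this.

All the pictures shown below were generated with the same parameters and the same random seed; the only difference is the prompt order.

As you can see, when the character appearance prompts are placed at the very bottom (1D2D), the image generated by Hires.Fix differs greatly from the original image, whereas when the appearance prompts are placed at the very top (1U2U), the generated image stays fairly close to it.

It is clear that in image generation (especially in Hires.Fix mode), prompt order has a significant impact on the output. When appearance-related prompts are placed first, the generated image is very closely related to the model. When they are placed last, however, Hires.Fix refines the image based on the initial 8K and 4K keywords, so the appearance of the final image differs significantly from the original image before processing.

This leads to the conclusion that when using appearance-related LoRA models, especially in Hires.Fix mode, it is best to place all appearance-related LoRA models first. Personally, I suggest the following order:

The LoRA model of a specific character, such as the Zero Two LoRA model in this example.

Overall optimization models, such as the famous ulzzang-6500 (https://civarchive.com/models/8109/ulzzang-6500-korean-doll-aesthetic), xxxdoll, etc.

The rest of the models, sorted by importance.

I hope this tutorial helps you~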

===========

2023/3/11

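After a reminder from kmlau (https://civarchive.com/user/kmlau), I found that where the <Lora> model is placed does not affect the generated image. That is, when Stable Diffusion reads the prompt, it treats the six characters of "<lora>" ("<", "l", "o", ..., ">") as blank. Note, however, that as soon as any other symbol is attached, as in "<lora>,", the result is affected. For example,

<lora>prompt_A,prompt_B,prompt_C,

and

prompt_A,prompt_B,prompt_C,<lora>

and

prompt_A<lora>,prompt_B,prompt_C,

and even

prompt_A,prom<lora>pt_B,prompt_C,

all four produce exactly the same results.

However,

<lora>prompt_A,prompt_B,prompt_C,

and

<lora>,prompt_A,prompt_B,prompt_C,

are different. The problem in the earlier example, and the changes seen in the earlier example images, came from this: the comma affected the final result. Apart from <Lora>, I have found no obvious problems with the rest of the summary. If I find other problems later, I will revise it again.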

===========

==============================================

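Japanese version

Please read the following carefully!!!

The LoRA model uploaded here was not created by me, but by jappww (https://civarchive.com/user/jappww). I noticed this effect when I tried to use his Zero Two 02 model, so I am uploading it here simply as an example.

One-line version:

When generating an image, put the most important keywords at the very front (in this example, the 02 LoRA model).

The images show the actual difference.

Details:

Some of you may already know the following, but I would like to briefly introduce the effect that the order of keywords (the prompt) has on the result, so that those who are not yet aware of it can understand.

The conclusion is that the order of keywords has a huge impact on the image output (especially in Hires.Fix mode).

Some examples follow.

All the images attached to this article were generated with the same parameters and the same random seed; the only difference is the order of the keywords.

When the keywords about the character's appearance are placed at the very end/bottom (1D2D), the image generated by Hires.Fix differs greatly from the original image. On the other hand, when the appearance keywords are placed at the very top/beginning (1U2U), the generated image stays fairly close to it.

In image generation (especially in Hires.Fix mode), the order of keywords clearly affects the output. When appearance-related keywords are placed first, the generated image is closely related to the model. When they are placed last, however, Hires.Fix refines the image based on the initial 8K and 4K keywords, and the appearance of the final image clearly differs from the original image.

In conclusion, when using appearance-related LoRA models, especially in Hires.Fix mode, it is best to place all appearance-related LoRA models at the beginning. My personal recommendation is the following order:

The LoRA model of a specific character, such as the Zero Two LoRA model in this example.

Overall optimization models, such as the famous ulzzang-6500 (https://civarchive.com/models/8109/ulzzang-6500-korean-doll-aesthetic), xxxdoll, etc.

The rest of the models, in order of importance.

I hope this tutorial is useful~

This article was mostly translated with ChatGPT, and I corrected the odd parts myself, so if anything still reads strangely, please don't hesitate to let me know! (I have written too many papers in Japanese, so there may be a lot of overly polite phrasing. Please bear with me...)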

===========

2023/3/11

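Following a suggestion from kmlau (https://civarchive.com/user/kmlau), I realized that the placement of the <Lora> model does not affect the generated image. That is, when Stable Diffusion reads the prompt, it treats the six characters of "<lora>" ("<", "l", "o", ..., ">") as blank. Note, however, that including any other symbol, as in "<lora>," (with one comma added), does affect the result.

For example,

<lora>prompt_A,prompt_B,prompt_C,

and

prompt_A,prompt_B,prompt_C,<lora>

and

prompt_A<lora>,prompt_B,prompt_C,

and even

prompt_A,prom<lora>pt_B,prompt_C,

all four produce exactly the same result.

However,

<lora>prompt_A,prompt_B,prompt_C,

and

<lora>,prompt_A,prompt_B,prompt_C,

are different. The problem in the earlier example occurred because the comma affected the final result, which is also why the earlier images changed.

Apart from <Lora>, I found no obvious problems. If other problems turn up, I will revise this again.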

===========

Comments (41)

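Thank you so much.

So in summary, which order takes priority? I didn't get it.

To be honest, this problem has bothered me for a long time.

It was still bothering me right up until today, hahaha.

The image generated before Hires.Fix clearly looked fine, but as soon as Hires.Fix kicked in it turned weird right away, like a completely different person.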

thanks for your kindness

If you find any parts difficult to understand, please leave a comment here and I will try my best to modify the document to make it as clear and understandable as possible.

Hi, what is the difference between using koreandoll, uzang, and other models? I mean from the photos I see the same "asian" looking style? Is there any differences that I missing? Thank you.

@OneSkyStudio Hey OneSky Thanks for asking xD.

From my understanding, There are sorts of TINY difference between each of them, so that only those people who really care about the specific appearance will make an effort to it lol.

And, another difference is, each model has its own strengths in specific areas. For example, Model A may not be good at handling seated poses while Model B excels in this area, and Model B may not be good at handling a particular angle while Model C is good at it. By using multiple models, it is possible to handle different scenarios to a certain extent while retaining the overall appearance characteristics (since they are all looks pretty, right? xD).

Hope I didn't misunderstand what you mean.

Anyway, all of these are my own understanding, I'm also a beginner of AIGC, so feel free to correct me xD.

Hope my explanation is helpful to you~

@setsunal Thanks! It's like i'm starting of studying photography because of stable diffusion LOL

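If you find anything hard to understand, please comment here and I will revise the document to make it as easy to understand as possible.

Have you tested both modes, native LoRA and the LoRA extension? Do they behave the same?

Sorry, I haven't tested that yet, but I think it should have an effect.

If it's convenient, you could test it; I will put your results and your name in the text.

Bookmarked.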

My understanding of the inner mechanics of prompt-parsing is that all LoRA and Hypernetwork embeddings are loaded into the layers of the diffusion model in VRAM first, then that string of characters is removed from the prompt, and only THEN the prompt is executed, so to speak.

When the <lora> and <hypernetwork> are removed, this leaves behind things like empty parentheses and commas, which slightly affect the outcome, especially when they are in front, which is why it's better to place them at the end, so that the prompt doesn't have something like "(:0.8), (:0.6)," at the front of it, which is just nonsense to the model.

TI embeddings are another matter - there the order matters a whole lot more.

Thanks for comment.

I'm not expert of stable diffusion lol, just a normal user. All of these text in this page are just based on what I experienced. Of course it could be better if we can understand what inner mechanics is, but for now I think a specific method (such as push important prompt at first) is more easy to all of people like me to use. what do you think?

anyway really thanks for comment and talking about inner mechanics, it's truly meaningful.

@setsunal My advice would be this: Put <LoRA> and <Hypernetwork> last if you use parentheses around them, so that no empty parentheses are left behind at the start of the prompt once they are removed.

As for the rest, your findings regarding prompt order are good advice.

Just to clarify, from my limited testing, the order of the LoRA/Hypernetwork tags does not seem to matter, and as @kwik states, the tag (everything inside and including the <> brackets) is removed entirely from the prompt, i.e. it's not part of the prompt at all. Its presence just instructs the webui to load the LoRA. However, any surrounding characters like commas and spaces are part of the prompt, e.g. "<lora:chunli:1>, chunli kicking" is seen by the model as ", chunli kicking", whereas "chunli kicking, <lora:chunli:1>" is seen as "chunli kicking, ", two different prompts. Also, emphasis parentheses have no effect on <> tags, because again they're removed from the prompt. They only work on the trigger words.

As long as you don't put anything around the LoRA/Hypernetwork tags, you can move them anywhere without changing the output. I usually put them at the front, with no spaces or commas around them (e.g. "<lora:chunli:1><lora:someStyle:1>chunli kicking"). Aside from that, none of your advice is specific to extra networks. Tokens (including trigger words) near the front of the prompt have more "weight" than ones near the end so it's always best to put the most important elements near the start.

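Super useful, bro. Lots of Bilibili uploaders never mention the effect of prompt order. Useful knowledge gained.

If any Bilibili repost video picks up this little trick, please give me a poke so I can go watch the fun.

I also ran into this when experimenting: putting the specialized LoRA first works very well and noticeably improves the stability of the Hires.Fix result. I hadn't tried reordering the prompts, though. I figured the order must have some effect, but I didn't know it would be this large.

I'd like to know how this differs when using the sd-webui-additional-networks extension: is the effect better with it placed at the front or at the back?

I have never used the sd-webui-additional-networks extension myself, so I may not be able to help you, sorry.

But if you mean extra networks, i.e. LoRA and the like, then that is what my images show.

I learned a lot. This will serve me well for a long time.

If anything is hard to understand, please don't hesitate to comment here. I will revise the article to make it as clear as possible.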

thanks!

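Thank you!

How do I use this?

Bro, try again after removing the commas before and after the LoRA.

A very helpful experiment, thank you very much.

The scientific spirit here deserves a thumbs up.

A question for everyone: I only started using Chilloutmix on Colab in March, and my results with LoRA have never been satisfactory. Even when I copy other people's prompts, settings, and seeds, I can't reproduce the same girl's look (it worked for 河北彩花, but with koreanDollLikeness set to 0.3-0.6, for example, I never get it). Is there anything I should watch out for? I've searched a lot of tutorials but none explain it in depth. Do I have to stick to the same seed?

The seed does not affect how the LoRA is expressed. However, because the vast majority of character LoRAs are trained on half-body shots or face close-ups, half-body and face close-up generations are much more likely to succeed; conversely, full-body shots or prompts with exaggerated expressions almost never succeed. Also, if you use a person's LoRA and then add something like xxdoll, keep the latter's weight low, otherwise it will contaminate your character LoRA. Most character LoRAs should have a weight of around 0.8, though some LoRAs from China generally work best at 1.0.

Thanks for sharing, I'll start paying more attention too. I often find that the image quality drops quite a bit as soon as I use a LoRA. So frustrating.

@henry992 There are many things to watch out for when using LoRAs. For example, some LoRAs need the right trigger words while others don't, and you need to note which base model the author used: if a LoRA requires uber, you can't use chilloutmix. It's best not to use a character LoRA and a doll LoRA together; if you must, pull the doll weight down to 0.1-0.2 and no higher. In general, expression or body-shape LoRAs will contaminate face LoRAs.

Great. I always knew it had an effect but not what kind. I like digging into things too, thanks bro!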

1. This probably belongs in an article post instead of a LoRA repo

2. Surprising that the comma in LoRA does lead to a change, could you also test this in ComfyUI and see if this is a parsing issue over a Stable Diffusion issue?

Files

zeroTwo02DarlingInThe_v10.safetensors
