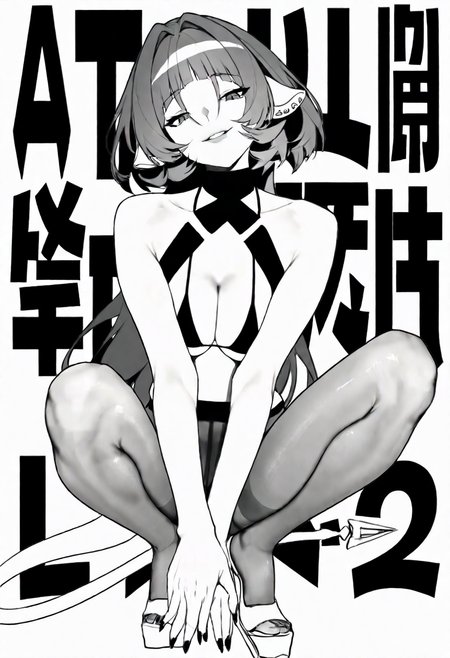

This LoRA is trained specifically on the 27 greyscale line-art pictures from the Uma Musume Daiwa Scarlet & Curren Chan picture set. It does not cover the full range of that set.

These 27 pictures were augmented roughly 10x with the Albumentations library. The transforms applied random gamma-curve adjustments of about 5%, random cropping with x/y shift, rotation, and zoom-in, which produced about 300 extra 1024px images as a training supplement. These are paired with the 27 original masters at 10 repeats, so for every master the model trains on, it is also fed one Albumentations-processed crop, keeping a roughly 1:1 ratio. Since the 27-picture dataset is considered diverse enough, this ratio was used.
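
A quick check of the 1:1 claim, as a sketch: it assumes the ~300 augmented crops are used exactly once per epoch (1 repeat) and treats "~300" as exactly 300. The names are hypothetical.

```python
# Numbers taken from the description; names are hypothetical.
MASTERS = 27      # original line-art images
REPEATS = 10      # repeats applied to the masters
AUGMENTED = 300   # approximate ("~300") augmented crops, assumed to have 1 repeat


def samples_per_epoch(masters: int, repeats: int, augmented: int) -> tuple[int, int]:
    """Return (master samples, augmented samples) seen in one epoch."""
    return masters * repeats, augmented


master_seen, aug_seen = samples_per_epoch(MASTERS, REPEATS, AUGMENTED)
ratio = master_seen / aug_seen
print(f"{master_seen} master samples vs {aug_seen} augmented -> ratio {ratio:.2f}")
```

Under those assumptions the split is 270 to 300, close enough to 1:1.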

Optimizer: RMSProp with momentum 0.99999999999. The base factor ranges from 0 to 1, so this is effectively 1; the code does not accept exactly 1, so I had to use this odd-looking number.
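
To illustrate what a momentum this close to 1 does, here is a scalar sketch of one RMSProp step. It assumes the setting maps to the `momentum` term of the update rule documented for PyTorch's `torch.optim.RMSprop` (non-centered); `alpha=0.99` and `eps=1e-8` are PyTorch's defaults, assumed here rather than taken from this training run.

```python
import math


def rmsprop_step(param, grad, state, lr=1e-5, alpha=0.99, eps=1e-8,
                 momentum=0.99999999999):
    """One scalar RMSProp update in the style of torch.optim.RMSprop (centered=False)."""
    # Exponential moving average of squared gradients.
    state["square_avg"] = alpha * state.get("square_avg", 0.0) + (1 - alpha) * grad * grad
    avg = math.sqrt(state["square_avg"]) + eps
    # Momentum buffer: with momentum ~1, earlier updates are almost never forgotten.
    state["buf"] = momentum * state.get("buf", 0.0) + grad / avg
    return param - lr * state["buf"]


state = {}
p = 0.0
p = rmsprop_step(p, 1.0, state)
first_buf = state["buf"]
p = rmsprop_step(p, 1.0, state)
print(first_buf, state["buf"], p)
```

With a constant gradient, the buffer keeps accumulating step after step instead of settling, which is the practical effect of a near-1 momentum.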

Learning rate of 1e-5 for both the UNet and the text encoder.

5000 steps, batch size of 2, BF16-precision training.
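
A rough estimate of how many passes over the data this amounts to. This is a sketch that assumes an epoch is 27 masters x 10 repeats plus exactly 300 augmented crops at 1 repeat; the real augmented count is only "~300".

```python
STEPS = 5000
BATCH_SIZE = 2
# Assumed epoch size: 27 masters x 10 repeats + ~300 augmented crops (1 repeat).
EPOCH_SIZE = 27 * 10 + 300

samples_seen = STEPS * BATCH_SIZE
epochs = samples_seen / EPOCH_SIZE
print(f"{samples_seen} samples ~= {epochs:.1f} epochs")
```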

Cyclic learning rate scheduler, linear, with 500 steps per cycle.
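
A minimal sketch of such a schedule. It assumes "linear" means each 500-step cycle decays linearly from the peak learning rate toward 0 and then restarts (a sawtooth); a triangular up-and-down cycle is another common reading. `lr_at` is a hypothetical helper, not the trainer's API.

```python
BASE_LR = 1e-5
CYCLE_STEPS = 500
TOTAL_STEPS = 5000


def lr_at(step: int, base_lr: float = BASE_LR, cycle: int = CYCLE_STEPS) -> float:
    """Sawtooth schedule: linear decay from base_lr toward 0 within each cycle, then restart."""
    pos = step % cycle
    return base_lr * (1 - pos / cycle)


num_cycles = TOTAL_STEPS // CYCLE_STEPS
for s in (0, 250, 499, 500, 4999):
    print(s, lr_at(s))
```

Over 5000 steps this gives 10 full cycles, each restarting at the peak of 1e-5.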

Network and convolution dimension: 512.
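
For a sense of scale: a LoRA of rank r on a linear layer adds a down matrix (r x in) and an up matrix (out x r). The sketch below counts those parameters for a hypothetical 1280 x 1280 layer at rank 512; the convolution dimension plays the same role for conv layers. The layer size is an illustrative assumption, not read from the base model.

```python
def lora_linear_params(in_features: int, out_features: int, rank: int) -> int:
    """Parameters added by a LoRA pair A (rank x in) and B (out x rank) on one linear layer."""
    return rank * in_features + out_features * rank


# Hypothetical example layer: 1280 -> 1280.
full = 1280 * 1280
lora = lora_linear_params(1280, 1280, 512)
print(full, lora, lora / full)
```

At rank 512 the adapter for such a layer is already 80% the size of the layer's own weight matrix, so this is a heavy LoRA.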

The base model used for training is rouwei 0.8 base fp32, a spectacular model to train on.

I suggest keeping these tags in the negative prompt: close-up, cropped, out of frame.

Description

Trigger word: t1r1i1g1g1e1r (the word "trigger" with a 1 inserted between each letter)
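
The trigger pattern can be reproduced with a one-line join; `make_trigger` is a hypothetical helper for illustration.

```python
def make_trigger(word: str, sep: str = "1") -> str:
    """Insert `sep` between every letter of `word`."""
    return sep.join(word)


print(make_trigger("trigger"))  # t1r1i1g1g1e1r
```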

Add lineart, greyscale, monochrome to the positive prompt if you want the original line-art look.
