logs:

v1 is unreliable use v2;

target name:

v1:

"^.*output.blocks.1.*attn.*$", for unet;

v2:

"^.*output.blocks.1.*attn.*$", for unet;

"^.*(q|v).proj.*$", for text enc;

size:

v1 (~5mb);

v2 (~8mb);

trigger:

v1 no trigger

v2 3d-filter

lora str:

v2:

0.5-1 (between 0.5 - 0.8, is giving semi 3d/real);

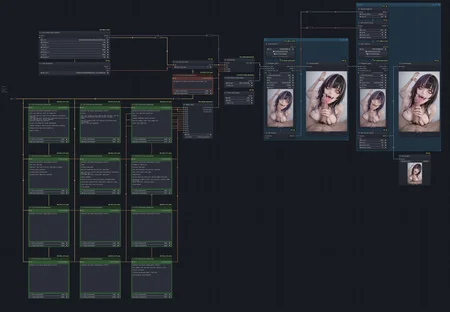

use hiresfix with 0.4-0.6 denoise will clean up some artifacts (especially fingers (see workflow img)) and keep cfg on the low end (~3 - 5);

sampler: euler a;

additional:

v2 is trained with 24 img from slash-soft and 1 other 3d artist, I forgot the name. Compared to v1 (which is trained on 500ish img) is giving much better result. I think because with v2 I manually tag the image while v1 is using wd tagger and not using trigger? or maybe because v2 has better hyperparams and text enc is trained to?

Maybe I will upload/train a third version with more img and using real life cosplay photo;

v2 face has a bias towards tifa and aerith (because that's ~90% the datasets lol).