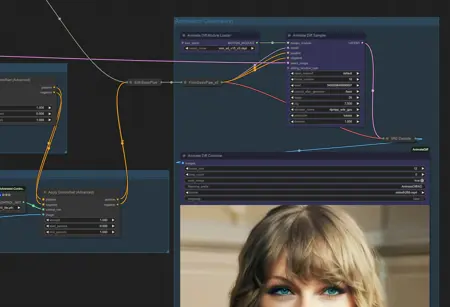

A basic demo showing how to animate from a starting image to an ending image. Chain several of these together for keyframe animation.

Node Explanation:

Latent Keyframe Interpolation:

There is one of these nodes for the starting image and one for the ending image.

The starting image begins at frame 0 and ends shortly after the midpoint of the frame count. This is set by the batch_index_from and batch_index_to_excl fields.

The starting image's strength goes from 1.0 to 0.2. This tells the script to rely very strongly on the starting image at first and to use it less and less as the frames go on. This is set by the strength_from and strength_to fields.

The interpolation field controls how quickly the strength approaches strength_to.
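To make the strength fields concrete, here is a minimal sketch of a per-frame strength schedule for one keyframe range. It assumes 16 frames with the starting image on frames 0 to 9 (illustrative values), and the easing formulas are common textbook curves chosen for illustration, not necessarily the exact ones ComfyUI-Advanced-ControlNet uses.

```python
# Sketch of an interpolated strength schedule for one keyframe range.
# ASSUMPTION: these easing curves are generic examples, not the node's source.

def ease(t: float, method: str) -> float:
    """Map linear progress t in [0, 1] onto an easing curve."""
    if method == "linear":
        return t
    if method == "ease-in":      # slow start, fast finish
        return t * t
    if method == "ease-out":     # fast start, slow finish
        return 1 - (1 - t) * (1 - t)
    if method == "ease-in-out":  # slow at both ends
        return 2 * t * t if t < 0.5 else 1 - 2 * (1 - t) * (1 - t)
    raise ValueError(f"unknown interpolation: {method}")


def strength_schedule(batch_index_from, batch_index_to_excl,
                      strength_from, strength_to, interpolation="linear"):
    """Return {frame_index: strength} for frames in [from, to_excl)."""
    count = batch_index_to_excl - batch_index_from
    schedule = {}
    for k in range(count):
        t = k / (count - 1) if count > 1 else 1.0
        s = strength_from + (strength_to - strength_from) * ease(t, interpolation)
        schedule[batch_index_from + k] = round(s, 4)
    return schedule


# Hypothetical starting-image range: frames 0-9, strength 1.0 -> 0.2.
for method in ("linear", "ease-in", "ease-out"):
    print(method, strength_schedule(0, 10, 1.0, 0.2, method))
```

With ease-in the strength stays high for longer before dropping toward strength_to; with ease-out it drops quickly and then levels off.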

The ending image uses the same fields in reverse: we start with a weak reference to the image and strengthen it all the way to the end.
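Putting the two ranges side by side shows the crossfade. This is a sketch with assumed numbers (16 frames, starting image on frames 0 to 9 fading 1.0 to 0.2, ending image on frames 6 to 15 rising 0.2 to 1.0, linear interpolation). It also treats frames outside a range as having no influence from that image, which is a simplification of this sketch rather than documented node behavior.

```python
# Sketch: per-frame strengths for the start and end keyframe ranges together.
# ASSUMPTIONS: 16 frames, illustrative ranges, linear interpolation only.

FRAMES = 16

def linear_ramp(index_from, index_to_excl, strength_from, strength_to):
    """Linearly interpolated strength for each frame in [from, to_excl)."""
    n = index_to_excl - index_from
    steps = max(n - 1, 1)  # avoid dividing by zero for a one-frame range
    return {
        index_from + k: strength_from + (strength_to - strength_from) * k / steps
        for k in range(n)
    }

start = linear_ramp(0, 10, 1.0, 0.2)  # starting image fades out
end = linear_ramp(6, 16, 0.2, 1.0)    # ending image fades in

for frame in range(FRAMES):
    # Simplification: a frame outside a range gets 0.0 from that image here.
    s = start.get(frame, 0.0)
    e = end.get(frame, 0.0)
    print(f"frame {frame:2d}: start={s:.2f} end={e:.2f}")
```

The overlap (frames 6 to 9 here) is where both references are active, which is what lets the animation blend from one image into the other.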

Load ControlNet Model (Advanced)

We're using the tile model here because we want the images themselves to be used as the reference.

Feeding the Latent Keyframe Interpolation output through the Timestep Keyframe node lets us control how strongly the ControlNet applies as the frames go on.

Apply ControlNet (Advanced)

We leave the strength at 1.0 because we are actually controlling it through the Load ControlNet Model node. Likewise, we leave the start and end percent at their defaults of 0.0 and 1.0, respectively.
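One way to see why those defaults are safe is a simplified model of how the settings combine. This is an assumption for illustration, not the extension's actual code: the Apply ControlNet strength scales everything, the keyframe strength scales each frame, and start/end percent gate which portion of sampling the ControlNet is active in.

```python
# Simplified model (ASSUMPTION, not ComfyUI's implementation) of how the
# Apply ControlNet settings combine with a per-frame keyframe strength.

def effective_strength(apply_strength, frame_strength, progress,
                       start_percent=0.0, end_percent=1.0):
    """Strength applied to one frame at one point in sampling.

    progress: fraction of sampling completed, 0.0 (first step) to 1.0 (last).
    """
    if not (start_percent <= progress <= end_percent):
        return 0.0  # outside the active window, the ControlNet does nothing
    return apply_strength * frame_strength


# With strength 1.0 and the default 0.0-1.0 window, the keyframe strength
# passes through unchanged at every point in sampling.
for progress in (0.0, 0.5, 1.0):
    print(progress, effective_strength(1.0, 0.6, progress))
```

Under this model, changing the Apply ControlNet strength or narrowing the percent window would distort the keyframe ramp, which is why they stay at their neutral values.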

Animate Diff Module Loader

Be sure the motion model here matches your checkpoint. I'm using an SD v1.5 checkpoint, so I'm using a motion model for SD v1.5. If you are using SDXL, you will need a different motion model.
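The matching rule can be written down as a small lookup. This is a hypothetical helper, not part of any node pack; the filenames are examples of the official AnimateDiff motion module releases, and your local files may be named differently.

```python
# Hypothetical compatibility check: the motion module must belong to the same
# base-model family as the checkpoint. Filenames are examples only.
MOTION_MODULES = {
    "sd15": {"mm_sd_v14.ckpt", "mm_sd_v15.ckpt", "mm_sd_v15_v2.ckpt"},
    "sdxl": {"mm_sdxl_v10_beta.ckpt"},
}

def check_motion_module(checkpoint_family: str, motion_module: str) -> None:
    """Raise ValueError if the motion module doesn't fit the checkpoint family."""
    allowed = MOTION_MODULES.get(checkpoint_family)
    if allowed is None:
        raise ValueError(f"unknown checkpoint family: {checkpoint_family}")
    if motion_module not in allowed:
        raise ValueError(
            f"{motion_module} is not a {checkpoint_family} motion module; "
            f"expected one of {sorted(allowed)}"
        )

check_motion_module("sd15", "mm_sd_v15_v2.ckpt")  # SD v1.5 checkpoint + SD v1.5 module
```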

Animate Diff Sampler

frame_number - this tells the script how many frames to generate. For your first test, leave this at 16. Going over 16 switches it to continuous animation mode. Depending on your machine, you might need to adjust the sliding_window_opts. See ArtVentureX/comfyui-animatediff (AnimateDiff for ComfyUI) on GitHub.
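For intuition about continuous mode, here is a sketch of uniform sliding-window scheduling: a long animation is split into overlapping windows of a fixed length so the motion model never sees more frames at once than it was built for. The names context_length and context_overlap and their defaults are assumptions for illustration; check the sliding_window_opts node in ArtVentureX/comfyui-animatediff for the real fields.

```python
# Sketch of uniform sliding windows over frame indices.
# ASSUMPTION: parameter names and defaults are illustrative only.

def sliding_windows(frame_number, context_length=16, context_overlap=4):
    """Split frame indices into overlapping windows of context_length frames."""
    if frame_number <= context_length:
        return [list(range(frame_number))]  # fits in a single window
    stride = context_length - context_overlap
    if stride <= 0:
        raise ValueError("context_overlap must be smaller than context_length")
    windows = []
    start = 0
    while True:
        end = min(start + context_length, frame_number)
        # Keep every window full length; the last one is shifted back
        # so that it ends exactly on the final frame.
        windows.append(list(range(end - context_length, end)))
        if end == frame_number:
            break
        start += stride
    return windows


for w in sliding_windows(32):
    print(w[0], "to", w[-1])
```

Larger overlaps give smoother transitions between windows at the cost of more windows to sample, which is one reason these options may need tuning per machine.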

denoise - leave this at 1.0. We're passing in an empty latent image; the reference images come in through the ControlNet tile model instead.
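An empty latent is just a zero tensor, so with denoise at 1.0 the sampler starts from pure noise and the only image guidance comes from the tile ControlNet; a lower denoise would keep part of that blank starting point. The sketch below only computes the latent shape, following ComfyUI's Empty Latent Image convention (4 latent channels at 1/8 of the pixel resolution) and assuming one latent per frame.

```python
# Shape of an empty latent batch in ComfyUI's convention:
# (batch, 4 channels, height // 8, width // 8). One latent per frame is assumed.

def empty_latent_shape(frames, width, height):
    return (frames, 4, height // 8, width // 8)

print(empty_latent_shape(16, 512, 512))  # (16, 4, 64, 64)
```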

Set the remaining fields however you like for your generation.

Animate Diff Combine

frame rate - I find 12 works best, but adjust it depending on whether you want something smoother or choppier.
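Keep in mind that the frame rate also sets the clip length: for a fixed number of frames, a higher frame rate plays smoother but finishes sooner. A quick calculation:

```python
# Clip duration is simply frames divided by frames per second.

def clip_duration_seconds(frame_number, frame_rate):
    return frame_number / frame_rate

print(f"{clip_duration_seconds(16, 12):.2f} s")  # 1.33 s
print(f"{clip_duration_seconds(16, 8):.2f} s")   # 2.00 s
```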

format - video/h264-mp4 is the format accepted for Civitai uploads.
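If you ever need to turn a folder of saved frames into an H.264 MP4 yourself, an ffmpeg command along these lines is one common approach. This is not a description of what the Combine node does internally, and the frame filename pattern is hypothetical; the sketch only builds the argument list.

```python
import shlex

# Build an ffmpeg command that encodes an image sequence as H.264 MP4.
# The input pattern is a hypothetical example; adjust it to your files.

def h264_mp4_command(frame_pattern, frame_rate, output):
    return [
        "ffmpeg",
        "-framerate", str(frame_rate),  # playback rate of the image sequence
        "-i", frame_pattern,            # numbered frames, e.g. frame_00001.png
        "-c:v", "libx264",              # H.264 video encoder
        "-pix_fmt", "yuv420p",          # widely compatible pixel format
        output,
    ]

cmd = h264_mp4_command("frames/frame_%05d.png", 12, "out.mp4")
print(shlex.join(cmd))
```

To actually run it, pass the list to subprocess.run(cmd, check=True) with ffmpeg on your PATH.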

Comments

@a1lazydog

Thank you for the workflow. Sorry if this is a silly question, but how do you load an image into the preview node? I tried replacing it with an image loader node, but then I can't connect it to the VAE Decode as you've done in the JSON with the preview image node.

Also, if I run it as is, I get the following error:

Error occurred when executing LatentKeyframeTiming: strength_to must be greater than or equal to strength_from.

Any ideas?

I also encountered the same problem.

If you are using an image loader, you don't need to connect it to the VAE Decode; that was only there to generate the sample start and end images from the KSampler.

For the LatentKeyframeTiming error, are you on the latest version of ComfyUI-Advanced-ControlNet? A bug in the LatentKeyframeInterpolation node was fixed two days ago.
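Reading the error message literally, the failing check only accepts strengths that stay the same or rise, which the starting image's 1.0 to 0.2 fade would trip while the ending image's 0.2 to 1.0 ramp would pass. The sketch below reproduces that reading on this workflow's values; check_strength_order is a hypothetical name and this is a guess from the message text, not the extension's source.

```python
# Hypothetical reconstruction of the validation implied by the error message.

def check_strength_order(strength_from, strength_to):
    if strength_to < strength_from:
        raise ValueError(
            "strength_to must be greater than or equal to strength_from.")

KEYFRAMES = {"start image": (1.0, 0.2), "end image": (0.2, 1.0)}

results = {}
for name, (s_from, s_to) in KEYFRAMES.items():
    try:
        check_strength_order(s_from, s_to)
        results[name] = "OK"
    except ValueError as err:
        results[name] = str(err)
    print(f"{name}: {results[name]}")
```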

@shenb526960 To expand on what a1lazydog said: delete the KSampler pipe, VAE Decode, and Preview Image nodes. Add two Load Image nodes and connect each one to the correct reroute node (the one the VAE Decode for the preview image was connected to). If you don't delete the KSampler pipe and VAE Decode nodes that the preview node was connected to, you may get an out-of-VRAM error.

Error occurred when executing AnimateDiffSampler:

list index out of range

File "D:\sd\ComfyUI_windows_portable\ComfyUI\execution.py", line 152, in recursive_execute
  output_data, output_ui = get_output_data(obj, input_data_all)
File "D:\sd\ComfyUI_windows_portable\ComfyUI\execution.py", line 82, in get_output_data
  return_values = map_node_over_list(obj, input_data_all, obj.FUNCTION, allow_interrupt=True)
File "D:\sd\ComfyUI_windows_portable\ComfyUI\execution.py", line 75, in map_node_over_list
  results.append(getattr(obj, func)(**slice_dict(input_data_all, i)))
File "D:\sd\ComfyUI_windows_portable\ComfyUI\custom_nodes\comfyui-animatediff\animatediff\sampler.py", line 295, in animatediff_sample
  return super().sample(
File "D:\sd\ComfyUI_windows_portable\ComfyUI\nodes.py", line 1236, in sample
  return common_ksampler(model, seed, steps, cfg, sampler_name, scheduler, positive, negative, latent_image, denoise=denoise)
File "D:\sd\ComfyUI_windows_portable\ComfyUI\nodes.py", line 1206, in common_ksampler
  samples = comfy.sample.sample(model, noise, steps, cfg, sampler_name, scheduler, positive, negative, latent_image,
File "D:\sd\ComfyUI_windows_portable\ComfyUI\custom_nodes\ComfyUI-Impact-Pack\modules\impact\hacky.py", line 9, in informative_sample
  return original_sample(*args, **kwargs)
File "D:\sd\ComfyUI_windows_portable\ComfyUI\comfy\sample.py", line 90, in sample
  real_model, positive_copy, negative_copy, noise_mask, models = prepare_sampling(model, noise.shape, positive, negative, noise_mask)
File "D:\sd\ComfyUI_windows_portable\ComfyUI\comfy\sample.py", line 81, in prepare_sampling
  comfy.model_management.load_models_gpu([model] + models, comfy.model_management.batch_area_memory(noise_shape[0] * noise_shape[2] * noise_shape[3]) + inference_memory)
File "D:\sd\ComfyUI_windows_portable\ComfyUI\comfy\model_management.py", line 397, in load_models_gpu
  cur_loaded_model = loaded_model.model_load(lowvram_model_memory)
File "D:\sd\ComfyUI_windows_portable\ComfyUI\comfy\model_management.py", line 290, in model_load
  device_map = accelerate.infer_auto_device_map(self.real_model, max_memory={0: "{}MiB".format(lowvram_model_memory // (1024 * 1024)), "cpu": "16GiB"})
File "D:\sd\ComfyUI_windows_portable\python_embeded\lib\site-packages\accelerate\utils\modeling.py", line 1033, in infer_auto_device_map
  tied_module_index = [i for i, (n, _) in enumerate(modules_to_treat) if n in tied_param][0]

Something new happened here.

@shenb526960 There are two AnimateDiff custom node packs in the ComfyUI Manager. You might have both installed, and I believe one of them breaks the other. This workflow currently uses the non-advanced one. You might have luck disabling the advanced one, then uninstalling and reinstalling the basic one, and then restarting ComfyUI.

@a1lazydog Thanks