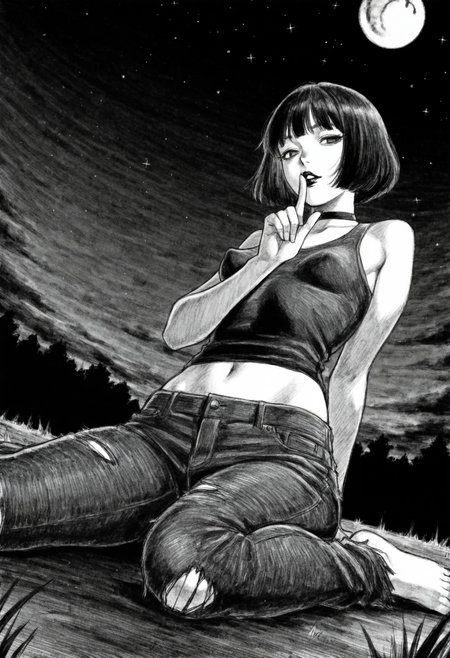

An experiment in training the W5 layer block (from the B-LoRA paper), but with LoHa instead of LoRA.
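For anyone unfamiliar with the difference, here is a minimal sketch of how a LoHa weight update differs from a plain LoRA one. LoRA builds the update as a single low-rank product, while LoHa takes the element-wise (Hadamard) product of two low-rank products, which lets the update reach a rank of up to r² with factors of rank r. The function and variable names below are made up for illustration and are not taken from any trainer; real implementations work on tensors and also apply an alpha/rank scale.

```python
# Toy illustration only: matrices are plain lists of rows.
# All names here (matmul, lora_delta, loha_delta, ...) are hypothetical.

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

def hadamard(a, b):
    """Element-wise product of two same-shaped matrices."""
    return [[x * y for x, y in zip(row_a, row_b)]
            for row_a, row_b in zip(a, b)]

def lora_delta(up, down):
    """LoRA: delta_W = up @ down (one low-rank product)."""
    return matmul(up, down)

def loha_delta(up1, down1, up2, down2):
    """LoHa: delta_W = (up1 @ down1) * (up2 @ down2), element-wise."""
    return hadamard(matmul(up1, down1), matmul(up2, down2))

# Tiny 2x2 example with rank-1 factors.
up1, down1 = [[1], [2]], [[3, 4]]   # up1 @ down1 = [[3, 4], [6, 8]]
up2, down2 = [[1], [0]], [[1, 1]]   # up2 @ down2 = [[1, 1], [0, 0]]

print(lora_delta(up1, down1))              # [[3, 4], [6, 8]]
print(loha_delta(up1, down1, up2, down2))  # [[3, 4], [0, 0]]
```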

!!!

This LoHa was trained with an fp8 UNet and without training the text encoder, so the results will probably be bad.

You can download an image and drag and drop it into ComfyUI to see more details on the prompt, sampler, etc.

I'm using either 2x-AnimeSharpV2_ESRGAN_Soft or lanczos for upscaling (hires fix).

!!!

notes:

v1

LoRA strength = -1 to 1;

Trigger word = tpppt;

Style : hand-drawn black-and-white pencil;

Recommended checkpoint : hassakuXLIllustrious_v21fix;

Recommended sampler : dpmpp 2m karras with hires fix.

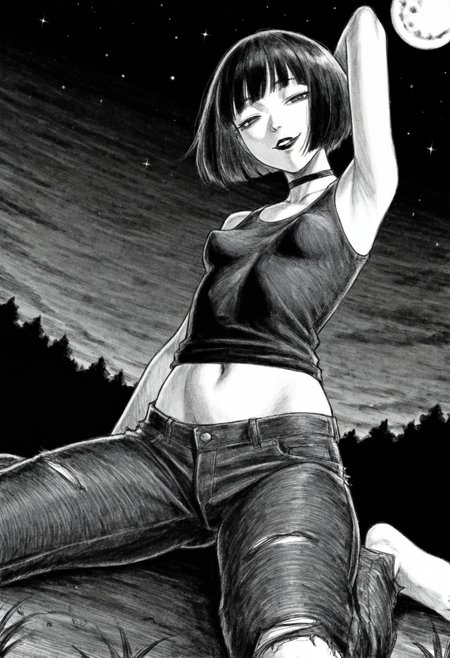

v2

LoRA strength = 0 to 1.25 (play with it; it depends on the model and prompt. Go above 1 if necessary);

Trigger word = caststatsimple;

Style : flat-ish color and chibi/simple art style?;

Recommended checkpoint : hassakuXLIllustrious_v21fix;

Recommended sampler : euler a (normal) with hires fix.
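To show what the strength values in the notes mean, here is a rough sketch of how a strength setting is usually applied: the learned update is multiplied by the strength and added to the base weight. A negative strength subtracts the update (pushing away from the style), 0 leaves the base model unchanged, and values above 1 over-apply it. The names are hypothetical, and real loaders also fold in an alpha/rank scale, so treat this as an illustration and not as how any specific UI does it.

```python
# Toy illustration only: weights are plain lists of rows.
# apply_strength is a hypothetical helper, not a real loader function.

def apply_strength(base, delta, strength):
    """Return base + strength * delta, element by element."""
    return [[w + strength * d for w, d in zip(row_w, row_d)]
            for row_w, row_d in zip(base, delta)]

base = [[1.0, 2.0]]    # pretend base-model weight
delta = [[0.5, -1.0]]  # pretend learned update

print(apply_strength(base, delta, 1.0))   # full effect
print(apply_strength(base, delta, 0.0))   # no effect, same as base
print(apply_strength(base, delta, -1.0))  # inverted effect
print(apply_strength(base, delta, 1.25))  # slightly over-applied
```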

logs:

v1

Trained on illustriousXL_v01;

Data : 5 images by the artist tp p pt;

// Initial concept, testing and training.

v2

Trained on illustriousXL_v01;

Data : 40 images by the artist caststation;

// Testing with a bigger dataset.

Sorry if my English is bad; I'm not a native speaker.