Workflow description:

The aim of this workflow is to generate a video that uses the face from an existing photo, all within one simple window.

Resources you need:

📂 Files:

WAN2.1

Recommendation (which quant to pick based on your VRAM):

24 GB VRAM: Q8_0

16 GB VRAM: Q5_K_S

<12 GB VRAM: Q4_K_S
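The VRAM recommendation above can be written as a small lookup. This is a hypothetical helper, not part of the workflow; it assumes that values between the listed tiers (for example 12 to 15 GB, which the list does not cover) fall back to the next lower tier.

```python
def recommend_wan_quant(vram_gb: float) -> str:
    """Return the Wan2.1 I2V GGUF quant suggested for a given VRAM size in GB.

    Hypothetical helper: thresholds follow the recommendation list; values
    between tiers (e.g. 12-15 GB) are assumed to use the lower tier.
    """
    if vram_gb <= 0:
        raise ValueError("vram_gb must be positive")
    if vram_gb >= 24:
        return "Q8_0"
    if vram_gb >= 16:
        return "Q5_K_S"
    # Assumption: anything below 16 GB uses the smallest listed quant.
    return "Q4_K_S"


if __name__ == "__main__":
    for vram in (24, 16, 12, 8):
        print(f"{vram} GB VRAM -> {recommend_wan_quant(vram)}")
```

Pass your card's nominal VRAM; the chosen quant then tells you which Wan2.1-I2V-14B GGUF file to download.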

I2V Quant Model: Wan2.1-I2V-14B-480P-gguf or Wan2.1-I2V-14B-720P-gguf

in models/diffusion_models

CLIP: umt5_xxl_fp8_e4m3fn_scaled.safetensors

in models/clip

CLIP-VISION: clip_vision_h.safetensors

in models/clip_vision

VAE: wan_2.1_vae.safetensors

in models/vae

FLUX

GGUF_Model: FLUX.1-dev-gguf

"flux1-dev-Q8_0.gguf" in ComfyUI\models\unet

GGUF_clip: t5-v1_1-xxl-encoder-gguf

"t5-v1_1-xxl-encoder-Q8_0.gguf" in \ComfyUI\models\clip

Text encoder: ViT-L-14-TEXT-detail-improved-hiT-GmP-TE-only-HF.safetensors

"ViT-L-14-GmP-ft-TE-only-HF-format.safetensors" in \ComfyUI\models\clip

VAE: ae.safetensors

"ae" in \ComfyUI\models\vae

FLUX PuLID: pulid_flux_v0.9.0.safetensors

"pulid_flux_v0.9.0" in \ComfyUI\models\pulid

Any upscale model (deprecated):

Realistic: RealESRGAN_x4plus.pth

Anime: RealESRGAN_x4plus_anime_6B.pth

in models/upscale_models
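Before loading the workflow, it can help to confirm that every file sits in the right folder. The script below is a minimal sketch, not part of the workflow, that checks a ComfyUI install for the files listed above. It assumes the standard ComfyUI/models layout; the wildcard patterns are assumptions standing in for filenames that depend on what you downloaded (for example which Wan2.1 quant).

```python
import sys
from pathlib import Path

# (subfolder under ComfyUI/models, glob pattern) for each file listed above.
# Wildcards cover files whose exact name depends on your download choice;
# adjust them to match your own files.
EXPECTED = [
    ("diffusion_models", "*.gguf"),                # Wan2.1 I2V quant
    ("clip", "umt5_xxl_fp8_e4m3fn_scaled.safetensors"),
    ("clip_vision", "clip_vision_h.safetensors"),
    ("vae", "wan_2.1_vae.safetensors"),
    ("unet", "flux1-dev-Q8_0.gguf"),
    ("clip", "t5-v1_1-xxl-encoder-Q8_0.gguf"),
    ("clip", "ViT-L-14-*.safetensors"),            # ViT-L-14 text encoder
    ("vae", "ae.safetensors"),
    ("pulid", "pulid_flux_v0.9.0.safetensors"),
    ("upscale_models", "RealESRGAN_x4plus*.pth"),  # optional upscaler
]


def find_missing(comfy_root):
    """Return 'subfolder/pattern' entries with no matching file under comfy_root/models."""
    models = Path(comfy_root) / "models"
    missing = []
    for subdir, pattern in EXPECTED:
        folder = models / subdir
        # is_dir() covers folders that were never created;
        # any(glob) is False when no file matches the pattern.
        if not (folder.is_dir() and any(folder.glob(pattern))):
            missing.append(f"{subdir}/{pattern}")
    return missing


if __name__ == "__main__":
    root = sys.argv[1] if len(sys.argv) > 1 else "ComfyUI"
    for entry in find_missing(root):
        print("missing:", entry)
```

Run it with the path to your ComfyUI folder as the only argument; an empty output means every pattern matched at least one file.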

📦 Custom Nodes:

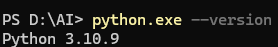

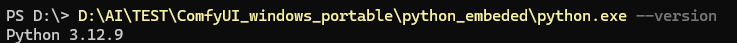

PuLID requires Insightface to be installed in your Python environment:

Check your Python version:

For the Windows portable version (the path depends on where you unzipped ComfyUI):

Download the Insightface wheel (.whl) that matches your Python version from: Assets/Insightface

(Here, my local Python is 3.10 and the portable version is 3.12.)
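The wheel filename encodes the Python version: the cp310 in insightface-0.7.3-cp310-cp310-win_amd64.whl means CPython 3.10. As a hedged sketch, running the script below with the same python.exe that ComfyUI uses prints that interpreter's version and the matching filename to look for. The 0.7.3 version and win_amd64 platform are taken from the install command in this post; the helper name is hypothetical.

```python
import sys


def insightface_wheel_name(version="0.7.3", platform_tag="win_amd64"):
    """Build the Insightface wheel filename matching the running interpreter.

    Assumes CPython on 64-bit Windows (the platform in the example command);
    pass a different platform_tag for other systems.
    """
    # e.g. Python 3.10 -> "cp310", Python 3.12 -> "cp312"
    py_tag = f"cp{sys.version_info.major}{sys.version_info.minor}"
    return f"insightface-{version}-{py_tag}-{py_tag}-{platform_tag}.whl"


if __name__ == "__main__":
    print(sys.version)               # full version of this interpreter
    print(insightface_wheel_name())  # wheel to look for in Assets/Insightface
```

Run it once with your local Python and once with the portable ComfyUI interpreter if you use both, since they can differ (3.10 vs 3.12 in the example above).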

Then install all prerequisites and Insightface (the wheel filename below is for Python 3.10; use the one matching your version):

python.exe -m pip install --use-pep517 facexlib

python.exe -m pip install git+https://github.com/rodjjo/filterpy.git

python.exe -m pip install onnxruntime==1.19.2 onnxruntime-gpu==1.15.1 insightface-0.7.3-cp310-cp310-win_amd64.whl
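After installing, a quick check run with the same python.exe confirms the packages are visible to that interpreter. This is a hypothetical helper, not something the workflow ships; it only uses importlib.util.find_spec (which locates a module without importing it) and onnxruntime.get_available_providers(), and the module list mirrors the commands above.

```python
import importlib.util

# Import names of the packages installed by the commands above.
REQUIRED = ("facexlib", "filterpy", "onnxruntime", "insightface")


def check_pulid_deps():
    """Return {module_name: installed?} without actually importing the modules."""
    return {name: importlib.util.find_spec(name) is not None for name in REQUIRED}


def onnx_providers():
    """List onnxruntime execution providers, or [] if onnxruntime cannot be imported."""
    try:
        import onnxruntime
    except ImportError:
        return []
    return onnxruntime.get_available_providers()


if __name__ == "__main__":
    for name, ok in check_pulid_deps().items():
        print(f"{name}: {'OK' if ok else 'MISSING'}")
    providers = onnx_providers()
    print("onnxruntime providers:", providers)
    # onnxruntime-gpu exposes CUDAExecutionProvider when CUDA is usable.
    if "CUDAExecutionProvider" not in providers:
        print("CUDAExecutionProvider is not available in this environment.")
```

If a module is reported as MISSING, it was most likely installed into a different Python than the one ComfyUI runs.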

Description

Bugfix: upscaling node

Added:

LoRA support

Changed the execution order between interpolation and upscaling: the two steps are now truly independent and better optimized.

Comments

1.15.1 insightface-0.7.3-cp310-cp310-win_amd64.whl??? How can I get it?

comfyui-easy-use does not work.

Also, this workflow does not work.

If you read the whole post it says where to download this file and how to install it.

Hey, amazing work.

Just a heads-up: at least in v1.2, the upscaling happens at 16 fps and not at 32 fps after the interpolation (or it ignores the interpolation). Maybe the order is reversed?

ERROR: Could not find a version that satisfies the requirement onnxruntime-gpu==1.15.1