If you run into any problems feel free to pm me on civitai/discord

Hunyuan 720p I2V

1316.72s 73F 688x800 22steps dpmpp_2m simple

Hunyuan720pI2V Q6_K gguf (adjust as needed)

https://huggingface.co/city96/HunyuanVideo-I2V-gguf/tree/main

llava_llama3_vision

https://huggingface.co/Comfy-Org/HunyuanVideo_repackaged/blob/main/split_files/clip_vision/llava_llama3_vision.safetensors

clip_l (renamed to clip_hunyuan)

https://huggingface.co/Comfy-Org/HunyuanVideo_repackaged/tree/main/split_files/text_encoders

hunyuan_video_vae_bf16

https://huggingface.co/Comfy-Org/HunyuanVideo_repackaged/tree/main/split_files/vae

Python version 3.12.7 Cuda 12.6 Torch 2.6.0+cu126

Triton windows: https://github.com/woct0rdho/triton-windows/releases

Once you've downloaded the wheel that matches your Python version, open a command prompt, navigate to the folder containing the downloaded file, and run the following commands with the python.exe from python_embeded:

python.exe -m pip install triton-3.2.0-(filename)

python.exe -m pip install sageattention==1.0.6
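To confirm what actually got installed, a small check like the one below can be run with the same python.exe. It is only a sketch using the standard library; the helper name installed_version is made up for illustration, and the package names are the ones used in the pip commands above.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(package):
    """Return the installed version string of a pip package, or None if it is absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    # Package names as they appear in the pip commands above.
    for name in ("torch", "triton", "sageattention"):
        print(f"{name}: {installed_version(name) or 'not installed'}")
```

If sageattention shows as not installed here, the Patch Sage Attention KJ node will not be able to import it either.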

------------------------------------------------------------------------------------------------------

Wan2.1

562.51s 512x512 uni_pc simple 33F

12step & 8step split works as intended

81F 1018.89s!

81F 573.99s!

8step Split 161F/10s (16fps) 512x512 uni_pc simple 6760.70 seconds, but it works! (metadata-baked PNG posted)
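For comparing runs, frame count and render time can be turned into clip length and cost per frame. A quick sketch using the 161F run above (16fps is from the note; the function names are just for illustration):

```python
def clip_seconds(frames, fps=16):
    """Length of the finished clip in seconds."""
    return frames / fps


def seconds_per_frame(render_seconds, frames):
    """Average render time spent on each output frame."""
    return render_seconds / frames


print(clip_seconds(161))                           # 10.0625
print(round(seconds_per_frame(6760.70, 161), 2))   # 41.99
```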

I've got Buzz to tip: post your creations to the workflow gallery or add the resource to your posts. Have fun!

Wan2.1 I2V update published!

49F

512x512

12step(2stage 6+6)

Uni_pc

Simple

Each LoRA I add seems to add 200-400s of inference time

33F 700-900s

49F 1000-1500s

Wan2.1 480p I2V /unet (Adjust as needed)

https://huggingface.co/city96/Wan2.1-I2V-14B-480P-gguf/blob/main/wan2.1-i2v-14b-480p-Q6_K.gguf

Clip vision /clip_vision

https://huggingface.co/Comfy-Org/Wan_2.1_ComfyUI_repackaged/blob/main/split_files/clip_vision/clip_vision_h.safetensors

Vae /vae

https://huggingface.co/Comfy-Org/Wan_2.1_ComfyUI_repackaged/blob/main/split_files/vae/wan_2.1_vae.safetensors

Text encoder /clip or /text_encoders

https://huggingface.co/Comfy-Org/Wan_2.1_ComfyUI_repackaged/blob/main/split_files/text_encoders/umt5_xxl_fp8_e4m3fn_scaled.safetensors

(Optional) Upscale /upscale_models

https://huggingface.co/lokCX/4x-Ultrasharp/blob/main/4x-UltraSharp.pth
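A quick way to see which of the Wan2.1 files above are still missing is to check the model folders from a script. This is a sketch only: the folder and file names come from the links above, the text encoder is assumed to sit in text_encoders rather than clip, and the "ComfyUI/models" path is a placeholder for your own install.

```python
from pathlib import Path

# Subfolder -> filename, taken from the download links above.
WAN_FILES = {
    "unet": "wan2.1-i2v-14b-480p-Q6_K.gguf",
    "clip_vision": "clip_vision_h.safetensors",
    "vae": "wan_2.1_vae.safetensors",
    "text_encoders": "umt5_xxl_fp8_e4m3fn_scaled.safetensors",
    "upscale_models": "4x-UltraSharp.pth",  # optional
}


def missing_models(models_dir, expected=WAN_FILES):
    """Return 'subfolder/filename' for every expected file that is not on disk."""
    root = Path(models_dir)
    return [
        f"{sub}/{name}"
        for sub, name in expected.items()
        if not (root / sub / name).is_file()
    ]


if __name__ == "__main__":
    # Placeholder path: point this at your own ComfyUI models folder.
    for item in missing_models("ComfyUI/models"):
        print("missing:", item)
```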

-----------------------------------------------------------------------------------------------------

Skyreels

Final barebones+ text weighted Hunyuan Lora compatibility update published

831.61 seconds (no upscale)

932.07 seconds (no upscale)

published vids in showcase

Could potentially work on 8GB VRAM or lower if you tinker with virtual_vram_gb on the UnetLoaderGGUFDisTorchMultiGPU custom node (provided you have enough system RAM)

Stage 1 415.369s, Stage 2 315.937s, VAE 70.838s, total 837.93 seconds. Q6 + 6stepLORA + SmoothLORA + DollyLORA
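The stage timings do not add up to the reported total. The difference is time spent outside the two sampling stages and the VAE decode (my guess is loading and encoding, but it was not measured separately). The arithmetic:

```python
stage_1 = 415.369
stage_2 = 315.937
vae_decode = 70.838
reported_total = 837.93

measured = stage_1 + stage_2 + vae_decode
overhead = reported_total - measured

print(f"stages + VAE: {measured:.3f}s")   # stages + VAE: 802.144s
print(f"unaccounted:  {overhead:.3f}s")   # unaccounted:  35.786s
```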

(I now default to DPM++2M/Beta + Smooth LORA, except for human-centric clips where I leave the Smooth LORA out. Average runtime: 700-900s for 73F, no upscale)

Comfyui_MultiGPU = UnetLoaderGGUFDisTorchMultiGPU (image latent batch 4 flux-finetune Q8, replace gguf loader in txt2img workflow)

Comfyui_KJNodes = TorchCompileModelHyVideo, Patch Sage Attention KJ, Patch Model Patcher Order (Add nodes>KJNodes>Experimental)

∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨∨

https://huggingface.co/spacepxl/skyreels-i2v-smooth-lora

∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧∧

Fine-tune virtual_vram_gb to fit your requirements (I suggest checking the ComfyUI cmd window for the DisTorch allocation values that show up after the model loads into SamplerCustom), or use the normal Unet Loader (GGUF) with skyreels-hunyuan-I2V-Q?_
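If you need a number to start from before reading the allocation values, a rough guess can be computed as below. This is my own heuristic, not how the node decides anything: it assumes virtual_vram_gb is roughly the amount of model (in GB) pushed out to system RAM, and the 4 GB reserve for latents and activations is an arbitrary placeholder. The 10.0 GB model size in the example is hypothetical too.

```python
def starting_virtual_vram_gb(model_gb, gpu_vram_gb, reserve_gb=4.0):
    """Rough first guess: offload whatever part of the model does not fit
    in VRAM once reserve_gb is set aside for everything else."""
    budget = gpu_vram_gb - reserve_gb
    return max(0.0, round(model_gb - budget, 1))


# Hypothetical 10.0 GB GGUF on a 12 GB card with 4 GB held back.
print(starting_virtual_vram_gb(10.0, 12.0))  # 2.0
```

Treat the result as a first value to try, then adjust up or down based on what the cmd window reports.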

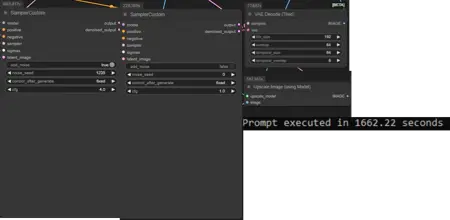

1st load

Prompt executed in 1662.22 seconds -587.365 seconds for upscale = 1075 seconds

640x864

73 frames (stable/generation time)

Steps: 6-12 (Stage 1 6 steps + Stage 2 6 steps)

cfg: 4.0

Sampler: Euler

Scheduler: Simple
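The two-stage split in the settings above (12 steps as 6 + 6) is just the step range cut into consecutive chunks. A generic sketch of that split, not taken from the workflow's node wiring:

```python
def split_steps(total_steps, stages):
    """Split total_steps into consecutive (start, end) ranges, one per stage."""
    base, extra = divmod(total_steps, stages)
    ranges, start = [], 0
    for i in range(stages):
        # Earlier stages absorb the remainder when the split is uneven.
        end = start + base + (1 if i < extra else 0)
        ranges.append((start, end))
        start = end
    return ranges


print(split_steps(12, 2))  # [(0, 6), (6, 12)]
```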

(Original Kijai WF https://huggingface.co/Kijai/SkyReels-V1-Hunyuan_comfy/blob/main/skyreels_hunyuan_I2V_native_example_01.json)

Barebones I2V workflow with Upscaler, optimised on a 3060 12GB VRAM + 32GB RAM

Make sure you update comfyui, torch & cuda

Run the update_comfyui.bat from the update folder

Go back to your python_embeded folder

Click on the file directory bar at the top, type cmd then hit enter

In cmd type "python.exe -m pip install --upgrade torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/cu126"
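After the upgrade it is worth checking that the torch build tag matches the CUDA version you expect (for example 2.6.0+cu126 for CUDA 12.6). The sketch below only parses the version string; the helper name is made up, and it assumes the usual "+cuXYZ" tag where the last digit is the minor version.

```python
import re


def parse_torch_version(version_string):
    """Split e.g. '2.6.0+cu126' into ('2.6.0', '12.6').
    The CUDA part is None when there is no cu tag (e.g. CPU builds)."""
    release, _, local = version_string.partition("+")
    match = re.fullmatch(r"cu(\d+)(\d)", local)
    cuda = f"{match.group(1)}.{match.group(2)}" if match else None
    return release, cuda


# To get the real string from your install, run in python_embeded:
#   python.exe -c "import torch; print(torch.__version__)"
print(parse_torch_version("2.6.0+cu126"))  # ('2.6.0', '12.6')
```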

∨∨ May ruin older workflows ∨∨

If it still isn't working, run the other update script: update_comfyui_and_python_dependencies.bat

∧∧ May ruin older workflows ∧∧

Workflow Resources:

Fast_Hunyuan Lora (models/lora): https://huggingface.co/Kijai/HunyuanVideo_comfy/blob/main/hyvideo_FastVideo_LoRA-fp8.safetensors

GGUF Model (Switch the models to fit your requirements) (models/unet):

https://huggingface.co/Kijai/SkyReels-V1-Hunyuan_comfy/blob/main/skyreels-hunyuan-I2V-Q6_K.gguf

VAE model (models/vae): https://huggingface.co/Kijai/HunyuanVideo_comfy/blob/main/hunyuan_video_vae_bf16.safetensors

Clip_l model (I renamed it to clip_hunyuan) (models/clip):

llava_llama3 model (models/clip):

https://huggingface.co/calcuis/hunyuan-gguf/blob/main/llava_llama3_fp8_scaled.safetensors

Upscale Model (models/upscale_models):

https://huggingface.co/uwg/upscaler/blob/main/ESRGAN/4x-UltraSharp.pth

Personal Generation Times

Base generation runtimes after 1st load (2 stages + VAE decode):

758.173 seconds

704.589 seconds

with suggested lora after 1st:

779.494

169F tests after 1st (No Load Test):

OOM

121F test after 1st+6stepLORA+smoothLORA (No Load Test):

1st stage

525.14s 1st iteration

729.66s 2nd

736.19s 3rd

645.15s 4th

665.55s 5th

764.12s 6th/Average

2nd stage

81.90s 1st+2nd iteration

OOM

Instant requeue after oom runs from 2nd stage

6.17s 1st Iteration

113.74s 2nd+3rd

222.92s 4th

327.62s 5th

282.29s 6th/Average

VAE 128.309s

97F tests I2V+6stepLora (posted in gallery) (no oom yet)

1123s

1013s

Description


Barebones I2V workflow with Upscaler, optimised for a 3060 12GB VRAM + 32GB RAM (potentially works on lower VRAM)



Comments (44)

The archive is a RAR but downloads as a ZIP, which is why some people can't open it under Windows. To open it, just rename the extension.

Updated, thank you
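For anyone unsure what a downloaded archive really is, the first few bytes of the file give it away regardless of the extension. A small sketch (function names are made up; the signatures are the standard RAR and ZIP magic bytes):

```python
def sniff_bytes(head):
    """Guess the archive type from the first bytes of a file."""
    if head.startswith(b"Rar!\x1a\x07"):
        return "rar"
    if head.startswith(b"PK\x03\x04"):
        return "zip"
    return "unknown"


def sniff_archive(path):
    """Read the start of the file at path and guess its archive type."""
    with open(path, "rb") as fh:
        return sniff_bytes(fh.read(8))
```

A file named something.zip that comes back as "rar" just needs its extension changed to .rar.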

Really appreciate your putting together a workflow for us 12GBers. I've updated everything but I still can't find nodes:

- UnetLoaderGGUFDisTorchMultiGPU

- ImageNoiseAugmentation

- TorchCompileModelHyVideo

I see ImageNoiseAugmentation comes from KJNodes which I have installed and updated. Any help welcome!

For the ImageNoiseAugmentation side quest, you get the reward from here https://github.com/kijai/ComfyUI-KJNodes

same here missing torch!

@ToxicBot Except I have that installed and updated (as far as I can tell) and that node is not available. Uninstall and reinstall?

@sarashinai Comfyui_MultiGPU = UnetLoaderGGUFDisTorchMultiGPU

Comfyui_KJNodes = TorchCompileModelHyVideo (Add nodes>KJNodes>Experimental), ImageNoiseAugmentation

@tsolful Can confirm, the Comfyui_MultiGPU package does contain the needed Unet loader node. For whatever reason, KJNodes wasn't updating through the ComfyUI Manager, so I uninstalled it and did a manual git clone of it into the custom nodes folder, which brought in the missing nodes. THANK YOU!

"TorchCompileModelHyVideo" error, Uninstall kjnodes in Manager and reinstall kjnodes nightly after restart.

1662.22 seconds !!!!

-587.365 seconds for upscale = 1075 seconds for 73 frames

@tsolful What GPU

I have raised this topic for a long time. There is a memory overflow and the render increases significantly. It is not being cleared. Only restarting comfyui helps.

InstructPixToPixConditioning: Input type (CUDABFloat16Type) and weight type (CPUBFloat16Type) should be the same

Any ideas? Can't get past this error.

I didn't run into this error myself, but searching for it turned up these:

https://github.com/smthemex/ComfyUI_FramePainter/issues/3

https://stackoverflow.com/questions/59013109/runtimeerror-input-type-torch-floattensor-and-weight-type-torch-cuda-floatte

LLM reasoning on why:

Model Loading: Your GGUF file (e.g., for HunyuanVideo) might not be properly moved to the GPU during loading.

ComfyUI Config: A misconfiguration in ComfyUI or its nodes might default weights to CPU instead of CUDA.

Mixed Precision: Using FP16 (common with GGUF quants like Q5_K_M or Q6_K) can expose device bugs if not handled consistently.

@tsolful That's a handful. I'll have to dig in and see if I can figure those out. Thank you!

@sarashinai Or it's as simple as your PyTorch being outdated or mismatched with your CUDA toolkit

Bro, I read somewhere that Triton must match the CUDA version: PyTorch 2.6 + CUDA 12.6, and so on. You also need to add 2 CUDA path variables to the Windows system environment. I just erased my old ComfyUI and reinstalled everything step by step, and it's working amazingly on my 3060 12GB. So keep trying!!!!

I'm getting this error during the Sampler step:

SamplerCustom

shape '[1, 32, 12, 80, 80]' is invalid for input of size 1228800

I haven't changed the default image size in the workflow. Using the Q6 GGUF and SageAttention
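One thing the numbers in that error do show: the shape being requested needs exactly twice as many elements as the tensor has. That would fit something like half the expected channels arriving at the sampler, though that is only one possibility, and it narrows down where to look rather than naming the cause. The arithmetic:

```python
from math import prod

requested_shape = (1, 32, 12, 80, 80)   # shape from the error message
actual_elements = 1228800                # "input of size" from the error message

expected_elements = prod(requested_shape)
print(expected_elements)                    # 2457600
print(expected_elements / actual_elements)  # 2.0
```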

Could be something to do with sageattention, but I'm not too knowledgeable. I downloaded and use Triton with my WF

@tsolful Same I use Triton as well, I'm not sure what's going on. Workflow doesn't work for me.

@Melty1989

LLM Possible causes:

Mismatched Input Dimensions

Incorrect Model or Checkpoint Configuration

Sampler-Specific Issue

Batch Size Mismatch

VRAM Issues or Corrupt Installation

Try 640x640 batch 1 Sampler Euler or Dpm++ simple/beta, clear cache models/restart comfyui

could possibly be pytorch and cuda mismatch

@tsolful Already tried 640x640. Cuda toolkit is also at 12.4 as is the pytorch. Not really sure what's going on.

@Melty1989 Could be Cuda and pytorch, im on 12.6

@tsolful Hmm, so I'd have to upgrade my current CUDA toolkit to 12.6 then. Goddamnit.

@tsolful I tried it with 12.6 as well, upgraded pytorch - no dice at 640x640

Fixed it (thanks tsolful!). The issue was I had an outdated TeaCache version

sageattention-2.1.1 must be compiled manually -> https://www.reddit.com/r/StableDiffusion/comments/1h7hunp/how_to_run_hunyuanvideo_on_a_single_24gb_vram_card/

it will take a long time to complete, so dont close cmd window

Seeds in the 000's seem to have good character movement

Encountered error: Exception: An error occurred in the ffmpeg subprocess:

which made my GPU crash temporarily making my screen black for 1 second.

Trying to queue a new batch i ran into this error: RuntimeError: CUDA error: unknown error

I rebooted Comfyui, works as expected

Where do we get UnetLoaderGGUFDisTorchMultiGPU

Its the only one im missing, and im not finding it.

ComfyUI-MultiGPU through comfyui manager

@tsolful Thank you! :) Found it using Comfyui MultiGPU

Im getting another error:

PathchSageAttentionKJ - No module named 'sageattention'

No problem, Install triton or sageattention

@tsolful For some reason it's still saying I don't have the nodes: unetloaderggufadvancedtorchmultgpu, wanimagetovideo, saveWEBM ???? I've restarted the container and installed multigpu through comfyui, but it still says I don't have them.

@staythepath I am currently on Python 3.12.2, Cuda 12.6, Torch 2.6.0+cu126. if that helps let me know

AMAZING AMAZING AMAZING !!!!! THX SO MUCH !!!!!! 546 s. For people with bad results or errors -> YOU NEED A LOT OF INSTALLATION WORK, BROS. I SPENT ABOUT 8 HOURS INSTALLING EVERYTHING (WHEELS, PYTORCH, CUDA, ETC.) AND TESTING

GPU?

Works fine on my 3060 12GB, just disable "Patch Model Patcher Order"

Why?

"SamplerCustom

expected str, bytes or os.PathLike object, not NoneType"

I'm not using the portable version and installed triton and sage attention

Bro, you need to install sageattention-2.1.1 manually. I spent days fixing problems for this, and in the end everything worked for me, very fast and good quality. 2 options -> install Linux as a subsystem or follow this -> https://www.reddit.com/r/StableDiffusion/comments/1h7hunp/how_to_run_hunyuanvideo_on_a_single_24gb_vram_card/

This is the craziest install I have ever done. I deleted everything and reinstalled again, but finally it's completely working.

sageattention-2.1.1 -> compiled manually, I mean; you need to build your own

@blablabla666234 GREAT lmao. I might skip this for awhile and come back later. I just reinstalled the portable version of comfy and it took hours to figure out how to use my backup of ReActor.