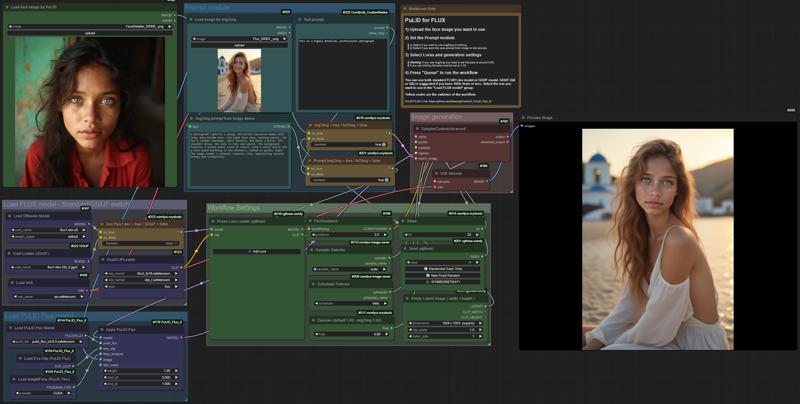

This workflow is based on lldacing's custom nodes: ComfyUI_PuLID_Flux_ll

It lets you "faceswap" with PuLID 2 using either img2img or txt2img prompting.

You can upload an image and "faceswap" the face you want onto it, or you can generate a new image with that specific face from a simple txt2img prompt.

It is possible to use one or more LoRAs too.

You can use PuLID 0.9.0 or 0.9.1.

Links to the models:

https://huggingface.co/guozinan/PuLID/blob/main/pulid_flux_v0.9.0.safetensors

https://huggingface.co/guozinan/PuLID/blob/main/pulid_flux_v0.9.1.safetensors

Differences between the two models (0.9.1 is a little better):

(Img 18 is with 0.9.0, img 19 is with 0.9.1)

Have fun!

Tenofas

Comments (24)

How do I get JoyCaption working? :(

You should follow the install instructions on its GitHub Page.

Here are the instructions: https://github.com/EvilBT/ComfyUI_SLK_joy_caption_two/blob/main/readme_us.md

VERY IMPORTANT: once you install the custom node (even with ComfyUI Manager), you still need to download some other files:

1. google/siglip-so400m-patch14-384
2. unsloth/Meta-Llama-3.1-8B-Instruct-bnb-4bit
3. Joy-Caption-alpha-two Model Download (must be downloaded manually)

Instructions in the link above are very clear and easy to follow.

Let me know if you still have trouble running JoyCaption.
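If you prefer to script the two Hugging Face downloads, something like the sketch below may help. It assumes `huggingface_hub` is installed in the Python environment you run it from. The target sub-folders are assumptions and must be checked against the readme before running. The Joy-Caption-alpha-two files are not covered here and still have to be downloaded manually.

```python
from pathlib import Path

# Repo IDs come from the comment above. The target sub-folders are ASSUMPTIONS:
# confirm the exact folder names in the readme before downloading anything.
REPOS = {
    "google/siglip-so400m-patch14-384": "models/clip/siglip-so400m-patch14-384",
    "unsloth/Meta-Llama-3.1-8B-Instruct-bnb-4bit": "models/LLM/Meta-Llama-3.1-8B-Instruct-bnb-4bit",
}


def download_plan(comfyui_root):
    """Return (repo_id, target_dir) pairs without downloading anything."""
    root = Path(comfyui_root)
    return [(repo_id, root / subdir) for repo_id, subdir in REPOS.items()]


def download_all(comfyui_root):
    """Download every repo in the plan (needs `pip install huggingface_hub`)."""
    from huggingface_hub import snapshot_download  # imported lazily on purpose

    for repo_id, target in download_plan(comfyui_root):
        target.mkdir(parents=True, exist_ok=True)
        snapshot_download(repo_id=repo_id, local_dir=str(target))


if __name__ == "__main__":
    # Print the plan first so the paths can be compared with the readme.
    for repo_id, target in download_plan("ComfyUI"):
        print(repo_id, "->", target)
    # download_all("ComfyUI")  # uncomment once the paths are confirmed
```

Printing the plan before downloading is deliberate: these models are several gigabytes, so it is worth confirming the folders first.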

I've installed JoyCaption and its models following the instructions, but now I'm getting: "slow_conv2d_cpu" not implemented for 'Half'

@n1xton It could be a problem with PyTorch... did you update your Python dependencies?
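That error usually appears when a half-precision (float16) model ends up running on the CPU, for example because PyTorch cannot see the GPU. A quick check, as a sketch: run it with the same Python that ComfyUI uses. `pick_dtype_name` and `report` are just illustrative helper names, not part of any custom node.

```python
def pick_dtype_name(cuda_available: bool) -> str:
    """float16 is the usual choice on a GPU; on CPU fall back to float32."""
    return "float16" if cuda_available else "float32"


def report() -> str:
    """Describe the installed torch build and whether it can see a CUDA GPU."""
    try:
        import torch
    except ImportError:
        return "torch is not installed in this Python environment"
    has_cuda = torch.cuda.is_available()
    return (
        f"torch {torch.__version__}, CUDA available: {has_cuda}, "
        f"suggested dtype: {pick_dtype_name(has_cuda)}"
    )


if __name__ == "__main__":
    print(report())
```

If it reports that CUDA is not available on a machine with an NVIDIA GPU, the installed PyTorch build is probably a CPU-only one, and reinstalling the dependencies would be the first thing to try.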

Excellent!

This is the best working face swap workflow for me. Take into account that you need to download stuff for JoyCaption. OP described this in the first comment. Thanks!

I got it working, but after the face swap the swapped face becomes blurry. How do you keep the image sharp?

It may depend on many things: the size of the "face to be swapped" image, the other settings... If you are doing img2img, the denoise should be set to around 0.60; if you are doing txt2img, it has to be set to 1.00.

These are just a few of the possible reasons you get a blurry face.
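For reference, the two denoise starting points can be written down as a small lookup. This is only a sketch of the values mentioned in this reply, not something exported from the workflow, and `suggested_denoise` is a made-up helper name.

```python
# Starting points taken from the reply above; tune them per image.
DENOISE_BY_MODE = {
    "img2img": 0.60,  # keep part of the uploaded image
    "txt2img": 1.00,  # generate from scratch
}


def suggested_denoise(mode: str) -> float:
    """Return the suggested denoise value for 'img2img' or 'txt2img'."""
    try:
        return DENOISE_BY_MODE[mode]
    except KeyError:
        raise ValueError(f"unknown mode {mode!r}, expected one of {sorted(DENOISE_BY_MODE)}")


if __name__ == "__main__":
    for mode in DENOISE_BY_MODE:
        print(mode, suggested_denoise(mode))
```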

I have an error with Joy_caption_two: "Converting from Tiktoken failed, if a converter for SentencePiece is available, provide a model path with a SentencePiece tokenizer.model file." Screenshot: https://i.imgur.com/LB1LI8x.png Please help!

This problem may happen if a downloaded model file is corrupted. Try downloading the files manually: https://github.com/EvilBT/ComfyUI_SLK_joy_caption_two/blob/main/readme_us.md#downloading-related-models

Most important is to make sure this model file is downloaded correctly: https://github.com/EvilBT/ComfyUI_SLK_joy_caption_two/blob/main/readme_us.md#3-joy-caption-alpha-two-model-download-must-be-downloaded-manually
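One way to check for a corrupted download is to compare the file's SHA-256 hash with the one published for it (Hugging Face shows a SHA256 value on the page of each large file). A minimal sketch using only the standard library; the file path and expected hash you pass in are yours to fill in, none are assumed here.

```python
import hashlib


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in 1 MiB chunks so large model files do not fill the RAM."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches(path, expected_hex):
    """True if the file's SHA-256 equals the published hash (case-insensitive)."""
    return sha256_of(path) == expected_hex.strip().lower()


if __name__ == "__main__":
    import sys

    # Usage: python check_hash.py <model file> <expected sha256>
    if len(sys.argv) == 3:
        print("OK" if matches(sys.argv[1], sys.argv[2]) else "MISMATCH, download again")
```

If the hashes do not match, delete the file and download it again.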

@Tenofas tnx for advice, now it works, sent you buzz

I'm getting this error with Joy_caption_two:

function 'cdequantize_blockwise_fp32' not found

I would first update all the Python dependencies, ComfyUI and all custom nodes. If the error persists, check here if there is a fix: https://github.com/EvilBT/ComfyUI_SLK_joy_caption_two/issues?page=1

If you cloned the repositories, check the folder names; they probably need to be renamed to match those shown in the author's pictures.

How would you make it only use the face and not the hairstyle?

It should use just the face... The hairstyle should stay the same as in the original picture.

Can we have another version of the workflow where we just give it two images and it combines them, without JoyCaption? Whenever I try to install it I fail miserably.

Did you follow the step by step instructions to install Joycaption 2? https://github.com/EvilBT/ComfyUI_SLK_joy_caption_two/blob/main/readme_us.md#installation

There are some files that need to be downloaded manually and saved in specific folders.

I did, but in the end I just replaced that part with Florence to make it work. I might need a fresh installation of ComfyUI.

@Sven111 I am sorry you could not install JoyCaption... maybe you have some conflicts with other custom nodes. Florence should work fine too anyway.

After updating ComfyUI, this workflow no longer works:

Prompt execution failed

Prompt outputs failed validation: KSamplerSelect: - Return type mismatch between linked nodes: sampler_name, received_type(['euler', 'euler_cfg_pp', 'euler_ancestral', 'euler_ancestral_cfg_pp', 'heun', 'heunpp2', 'exp_heun_2_x0', 'exp_heun_2_x0_sde', 'dpm_2', 'dpm_2_ancestral', 'lms', 'dpm_fast', 'dpm_adaptive', 'dpmpp_2s_ancestral', 'dpmpp_2s_ancestral_cfg_pp', 'dpmpp_sde', 'dpmpp_sde_gpu', 'dpmpp_2m', 'dpmpp_2m_cfg_pp', 'dpmpp_2m_sde', 'dpmpp_2m_sde_gpu', 'dpmpp_2m_sde_heun', 'dpmpp_2m_sde_heun_gpu', 'dpmpp_3m_sde', 'dpmpp_3m_sde_gpu', 'ddpm', 'lcm', 'ipndm', 'ipndm_v', 'deis', 'res_multistep', 'res_multistep_cfg_pp', 'res_multistep_ancestral', 'res_multistep_ancestral_cfg_pp', 'gradient_estimation', 'gradient_estimation_cfg_pp', 'er_sde', 'seeds_2', 'seeds_3', 'sa_solver', 'sa_solver_pece', 'ddim', 'uni_pc', 'uni_pc_bh2', 'legacy_rk', 'rk', 'rk_beta', 'deis_3m_ode', 'deis_2m_ode', 'deis_3m', 'deis_2m', 'res_6s_ode', 'res_5s_ode', 'res_3s_ode', 'res_2s_ode', 'res_3m_ode', 'res_2m_ode', 'res_6s', 'res_5s', 'res_3s', 'res_2s', 'res_3m', 'res_2m']) mismatch input_type(COMBO)

Yes, it's an old workflow, I should probably retire it.
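If you still want to see what is wired into `sampler_name`, a small script can list the links stored in the workflow file. This is only a sketch: it assumes the usual layout of a saved workflow JSON (a `nodes` list whose entries have `id`, `type` and `inputs`, and a `links` list of `[link_id, source_node, source_slot, target_node, target_slot, type]`), and `find_input_links` is a made-up helper, not part of ComfyUI.

```python
import json


def find_input_links(workflow, node_type, input_name):
    """List (node_id, link_id, source_node_id, link_type) for every linked
    input called `input_name` on nodes of type `node_type`."""
    links = {link[0]: link for link in workflow.get("links", [])}
    found = []
    for node in workflow.get("nodes", []):
        if node.get("type") != node_type:
            continue
        for inp in node.get("inputs", []):
            link_id = inp.get("link")
            if inp.get("name") != input_name or link_id is None:
                continue
            link = links.get(link_id)
            source = link[1] if link else None
            link_type = link[5] if link and len(link) > 5 else None
            found.append((node.get("id"), link_id, source, link_type))
    return found


if __name__ == "__main__":
    import sys

    # Usage: python inspect_workflow.py <workflow.json>
    if len(sys.argv) > 1:
        with open(sys.argv[1], encoding="utf-8") as f:
            wf = json.load(f)
        for hit in find_input_links(wf, "KSamplerSelect", "sampler_name"):
            print(hit)
```

Knowing which node feeds that input at least tells you which connection to remove or rebuild in the editor; whether that is enough to make the workflow run again is not something I have verified.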