Preparing datasets for LoRAs can be a pain in the ass. Being lazy, I attempted to automate as much of the process as possible.

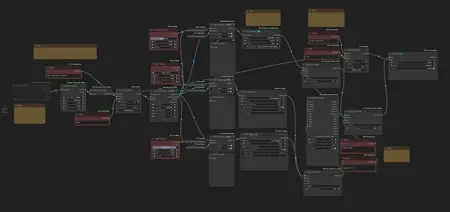

First, I have a workflow that extracts videos from a folder and converts them to a target framerate of your choice (default 24 fps for Hunyuan). To do that, it takes the least common multiple (LCM) of the original and target fps (for 30 and 24, that's 120), uses FILM to interpolate up to that LCM, and then keeps only the frames it needs to come back down to the target fps. It's a bit overkill, but if your computer can handle it, it can save some hassle.
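The arithmetic behind that step can be sketched in a few lines of Python. This is only an illustration of the LCM idea, not the code inside the custom node; the function names `resample_plan` and `kept_frame_indices` are made up for this example, and it assumes integer frame rates.

```python
from math import lcm  # available in Python 3.9+


def resample_plan(source_fps: int, target_fps: int) -> tuple[int, int, int]:
    """Return (common_fps, interpolation_multiplier, keep_stride).

    common_fps is the LCM of both rates, the multiplier is how many times
    denser the interpolated video must be than the source, and the stride
    is how many interpolated frames to step over per kept frame.
    """
    common_fps = lcm(source_fps, target_fps)
    multiplier = common_fps // source_fps
    stride = common_fps // target_fps
    return common_fps, multiplier, stride


def kept_frame_indices(interpolated_frame_count: int, stride: int) -> list[int]:
    """Indices of the interpolated frames that survive the downsampling."""
    return list(range(0, interpolated_frame_count, stride))


if __name__ == "__main__":
    print(resample_plan(30, 24))
    print(resample_plan(25, 24))
```

For 30 to 24 fps the plan is an LCM of 120, a 4x interpolation, and keeping every 5th frame. For 25 to 24 fps the LCM jumps to 600, which means a 24x interpolation before keeping every 25th frame, so the intermediate frame count grows quickly for rates that share few factors with the target.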

I had to create a custom node for the math. Here it is if the manager can't find it: https://github.com/EmilioPlumed/ComfyUI-Math

Second, I have another workflow that captions the videos in a folder. I have it set up to use Joy Captioner alpha 2 to get a verbose description, and two wd14 taggers to get tags from different points in the video. Each of the three captions has a slider to select which portion of the video the description or tags are extracted from. Then it removes duplicate tags and puts the text together in a .txt file.
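As a rough picture of the text handling in that workflow, here is a minimal Python sketch. It is not taken from the workflow itself: the function names are hypothetical, and it assumes the taggers return comma-separated tag strings, that a slider maps to a 0 to 1 fraction of the video, and that the caption file shares the video's file name with a .txt extension.

```python
from pathlib import Path


def frame_at_fraction(total_frames: int, fraction: float) -> int:
    """Map a slider value in [0, 1] to a frame index (assumed behaviour)."""
    fraction = min(max(fraction, 0.0), 1.0)
    return int(fraction * (total_frames - 1))


def merge_tags(*tag_strings: str) -> list[str]:
    """Merge comma-separated tag strings, dropping duplicates, keeping order."""
    seen: set[str] = set()
    merged: list[str] = []
    for tag_string in tag_strings:
        for tag in tag_string.split(","):
            tag = tag.strip()
            key = tag.lower()  # treat "Outdoors" and "outdoors" as the same tag
            if tag and key not in seen:
                seen.add(key)
                merged.append(tag)
    return merged


def build_caption(description: str, *tag_strings: str) -> str:
    """Join the verbose description and the deduplicated tags into one line."""
    tags = ", ".join(merge_tags(*tag_strings))
    return " ".join(part for part in (description.strip(), tags) if part)


def write_caption(video_path: str, caption: str) -> Path:
    """Write the caption next to the video, e.g. 1.mp4 -> 1.txt (assumption)."""
    caption_path = Path(video_path).with_suffix(".txt")
    caption_path.write_text(caption, encoding="utf-8")
    return caption_path
```

Keeping the first occurrence of each tag preserves the order the first tagger produced, which matters if you care about which tags appear earliest in the caption.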

Both workflows require you to rename your files so they are numbered consecutively.
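If you don't want to rename the files by hand, something like the following Python sketch could do it. The exact naming pattern the workflows expect isn't spelled out here, so this assumes plain integers starting at 1 (1.mp4, 2.mp4, ...); the function name, the extension list, and the choice to copy into a separate folder instead of renaming in place are all assumptions made for the example.

```python
import shutil
from pathlib import Path

# Assumed set of video extensions; adjust to whatever your dataset contains.
VIDEO_EXTENSIONS = {".mp4", ".mov", ".mkv", ".webm"}


def number_videos(source_dir, output_dir, start: int = 1) -> list[Path]:
    """Copy videos into output_dir with consecutive numeric names.

    Copying into a fresh folder leaves the originals untouched and avoids
    name collisions that renaming in place could cause.
    """
    source = Path(source_dir)
    output = Path(output_dir)
    output.mkdir(parents=True, exist_ok=True)

    videos = sorted(
        path for path in source.iterdir()
        if path.is_file() and path.suffix.lower() in VIDEO_EXTENSIONS
    )

    copied = []
    for number, video in enumerate(videos, start=start):
        destination = output / f"{number}{video.suffix.lower()}"
        shutil.copy2(video, destination)
        copied.append(destination)
    return copied
```

Calling `number_videos("raw_clips", "dataset")` would fill a `dataset` folder with the numbered copies; both folder names are placeholders.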


Comments (9)

Great workflow. I will try it in musubi tuner.

What's the lowest VRAM you've been able to run it with? I'm currently using a 3090 with 24 GB and wouldn't mind giving this a try if my system can run it.

I have a 4090 with 24 GB. The Joy caption model pretty much fills all the VRAM, but you should be fine. The interpolation workflow is light on VRAM, but longer videos with an unusual fps (like 25) will end up taking a lot of system RAM. I upgraded recently, and 32 GB wasn't enough in those cases.

@bonetrousers thanks for the info. I currently have 64 GB of system RAM, so I will give this a shot.

You need to install the "python-interpreter-node" from ComfyUI Manager for this to work.

Thanks, Comfy Manager didn't catch this.

From one lazy person to another, let me say THANK YOU!

Does this workflow work with images only, or with a mixed dataset too?

As it is currently set up, you'd have to separate your videos from your images and run them in different workflows.

I'll add an example workflow for captioning single images.